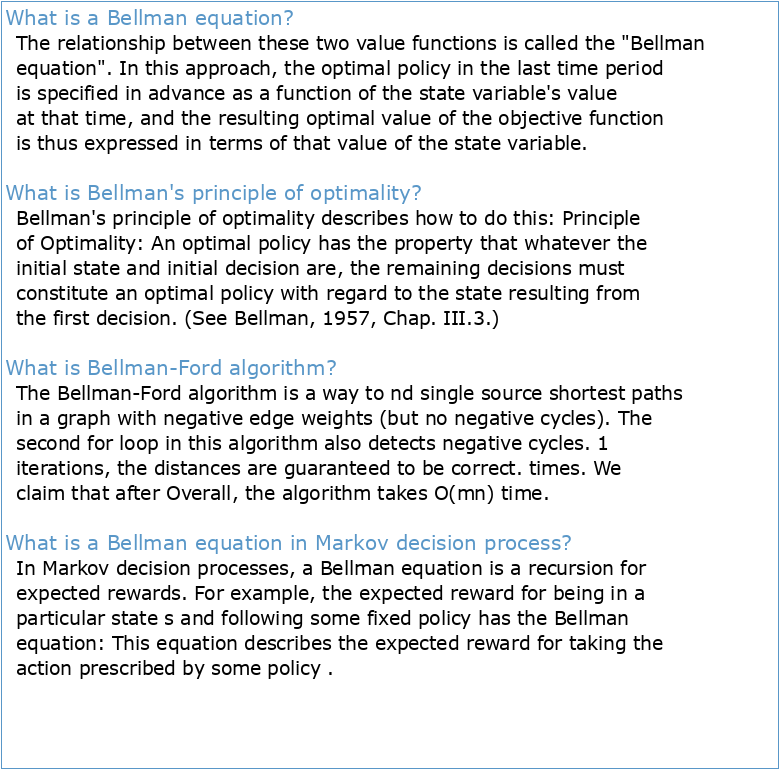

What is a Bellman equation?

A Bellman equation relates the value function of a dynamic optimization problem in one period to the value function in the next period; the relationship between these two value functions is called the "Bellman equation". In this backward-induction approach, the optimal policy in the last time period is specified in advance as a function of the state variable's value at that time, so the resulting optimal value of the objective function is expressed in terms of that value of the state variable.
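As a sketch of what such a relationship can look like, the finite-horizon recursion below uses notation that is assumed here for illustration and does not come from the text above: $x_t$ is the state, $a_t$ the decision, $F$ the per-period payoff, $T$ the state transition, $\beta$ a discount factor, and $N$ the last period.

```latex
\begin{align}
V_N(x_N) &= \max_{a_N} F(x_N, a_N), \\
V_t(x_t) &= \max_{a_t} \Bigl\{ F(x_t, a_t) + \beta \, V_{t+1}\bigl(T(x_t, a_t)\bigr) \Bigr\},
\qquad t = N-1, \dots, 0.
\end{align}
```

The first line fixes the value in the last period as a function of the state; the second line then links each earlier period's value function to the next one, which is the step-by-step structure described above.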

What is Bellman's principle of optimality?

Bellman's principle of optimality describes how to break a dynamic decision problem into smaller subproblems. Principle of Optimality: "An optimal policy has the property that whatever the initial state and initial decision are, the remaining decisions must constitute an optimal policy with regard to the state resulting from the first decision." (See Bellman, 1957, Chap. III.3.)

What is the Bellman-Ford algorithm?

The Bellman-Ford algorithm is a way to find single-source shortest paths in a graph with negative edge weights (but no negative cycles). The first loop relaxes every edge n − 1 times, where n is the number of vertices. We claim that after n − 1 iterations, the distances are guaranteed to be correct. The second for loop in this algorithm also detects negative cycles. Overall, the algorithm takes O(mn) time, where m is the number of edges.
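The two loops can be sketched in Python as follows. This is a minimal illustration, not the pseudocode the answer refers to: the function name `bellman_ford`, the edge-list representation, and the early exit when a pass changes nothing are all assumptions made here.

```python
from typing import Dict, Hashable, Iterable, List, Tuple

Edge = Tuple[Hashable, Hashable, float]  # (from_vertex, to_vertex, weight)


def bellman_ford(vertices: Iterable[Hashable], edges: List[Edge],
                 source: Hashable) -> Dict[Hashable, float]:
    """Return shortest-path distances from source (hypothetical helper)."""
    vertex_list = list(vertices)
    dist = {v: float("inf") for v in vertex_list}
    dist[source] = 0.0

    # First loop: relax every edge up to n - 1 times.
    for _ in range(len(vertex_list) - 1):
        changed = False
        for u, v, w in edges:
            if dist[u] + w < dist[v]:
                dist[v] = dist[u] + w
                changed = True
        if not changed:  # optional shortcut: distances already settled
            break

    # Second loop: any edge that can still be relaxed implies a
    # negative cycle reachable from the source.
    for u, v, w in edges:
        if dist[u] + w < dist[v]:
            raise ValueError("negative cycle reachable from the source")

    return dist
```

Each pass over the edge list costs O(m) and there are at most n − 1 passes plus one check, which matches the O(mn) bound. Calling `bellman_ford(["s", "a", "b"], [("s", "a", 4), ("s", "b", 5), ("b", "a", -3)], "s")` uses the negative edge to reach `"a"` at distance 2 via `"b"`.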

What is a Bellman equation in a Markov decision process?

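In Markov decision processes, a Bellman equation is a recursion for expected rewards. For example, the expected reward for being in a particular state $s$ and following some fixed policy $\pi$ has the Bellman equation:

$$V^{\pi}(s) = R(s, \pi(s)) + \gamma \sum_{s'} P(s' \mid s, \pi(s)) \, V^{\pi}(s')$$

Here $R$ is the immediate reward, $\gamma$ is the discount factor, and $P(s' \mid s, \pi(s))$ is the probability of moving to state $s'$. This equation describes the expected reward for taking the action prescribed by the policy $\pi$.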
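One way to see the recursion at work is to apply it repeatedly until the values stop changing (iterative policy evaluation). The sketch below assumes a small finite problem stored in plain dictionaries; the function name `evaluate_policy`, the data layout, and the discount factor `gamma` are illustrative choices, not part of the text above.

```python
from typing import Dict, Hashable, Tuple

State = Hashable
Action = Hashable


def evaluate_policy(
    states: list,
    policy: Dict[State, Action],
    transitions: Dict[Tuple[State, Action], Dict[State, float]],
    rewards: Dict[Tuple[State, Action], float],
    gamma: float = 0.9,
    tol: float = 1e-10,
) -> Dict[State, float]:
    """Approximate the value of a fixed policy (hypothetical helper).

    transitions[(s, a)] maps each next state to its probability;
    rewards[(s, a)] is the immediate reward. Requires 0 <= gamma < 1.
    """
    values = {s: 0.0 for s in states}
    while True:
        largest_change = 0.0
        for s in states:
            a = policy[s]
            # Bellman recursion: immediate reward plus discounted
            # expected value of the next state under the policy.
            new_value = rewards[(s, a)] + gamma * sum(
                p * values[s2] for s2, p in transitions[(s, a)].items()
            )
            largest_change = max(largest_change, abs(new_value - values[s]))
            values[s] = new_value  # in-place update
        if largest_change < tol:
            return values
```

For a two-state example where state "B" loops on itself with reward 2 and state "A" moves to "B" with reward 1, a discount factor of 0.5 gives values satisfying V(B) = 2 + 0.5 V(B) and V(A) = 1 + 0.5 V(B), that is, 4 and 3.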
