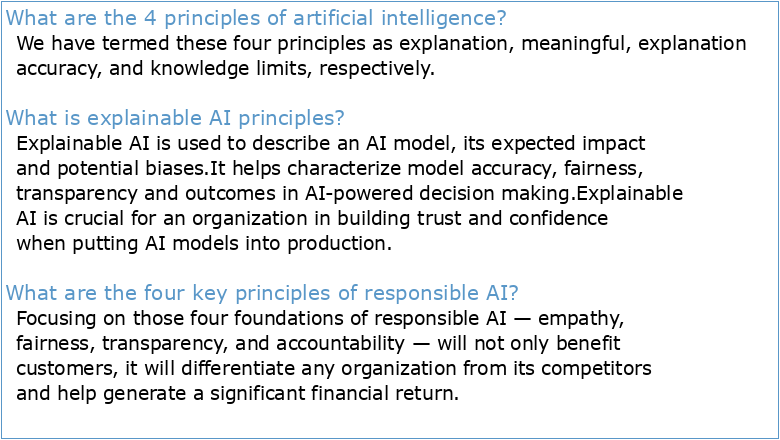

What are the 4 principles of artificial intelligence?

We have termed these four principles explanation, meaningful, explanation accuracy, and knowledge limits, respectively.

What are explainable AI principles?

Explainable AI is used to describe an AI model, its expected impact and potential biases.

It helps characterize model accuracy, fairness, transparency and outcomes in AI-powered decision making.

Explainable AI is crucial for an organization in building trust and confidence when putting AI models into production.

What are the four key principles of responsible AI?

Focusing on those four foundations of responsible AI — empathy, fairness, transparency, and accountability — will not only benefit customers, it will differentiate any organization from its competitors and help generate a significant financial return.

- For those of us who like to look under the hood, there are four foundational elements to understand: categorization, classification, machine learning, and collaborative filtering.
