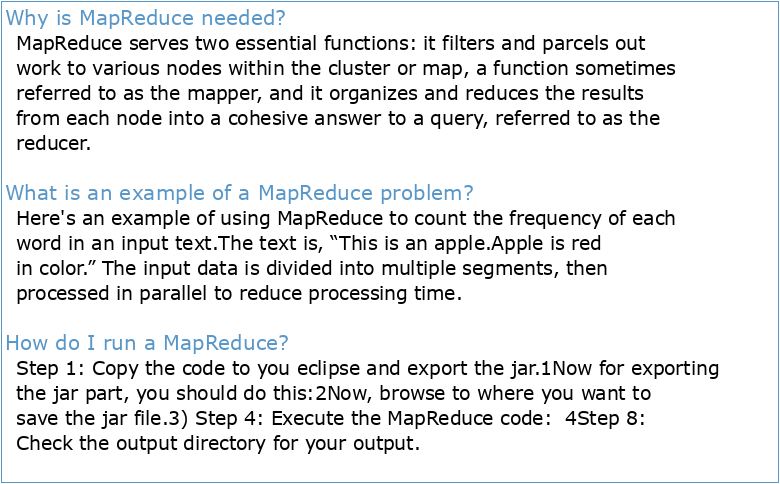

Why is MapReduce needed?

MapReduce serves two essential functions. The first, sometimes referred to as the mapper, filters the input and parcels out work to the various nodes within the cluster. The second, referred to as the reducer, organizes and reduces the results from each node into a cohesive answer to a query.

What is an example of a MapReduce problem?

Here's an example of using MapReduce to count the frequency of each word in an input text.

The text is, “This is an apple. Apple is red in color.” The input data is divided into multiple segments, then processed in parallel to reduce processing time.

How do I run a MapReduce?

Step 1: Copy the code into Eclipse and export the JAR.

To export the JAR, you should do this:

Now, browse to where you want to save the JAR file.

Step 4: Execute the MapReduce code:

Step 8: Check the output directory for your output.

MapReduce Workflow

The processing can be done on a single file or a directory that has multiple files.

The input format defines the input specification and how the input files would be split and read.

The input split logically represents the data to be processed by an individual mapper.
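To make the idea of a logical split concrete, here is a minimal sketch in plain Java. It does not use the Hadoop API: the `InputSplitSketch` class, the `Split` type, and the `computeSplits` method are hypothetical names chosen for illustration, and the fixed split size of 128 bytes is an assumption. Real input formats apply additional rules (for example, respecting record boundaries) that are omitted here.

```java
import java.util.ArrayList;
import java.util.List;

class InputSplitSketch {

    // Hypothetical stand-in for a logical split: a byte range within a file,
    // not a copy of the data itself.
    static final class Split {
        final long start;
        final long length;

        Split(long start, long length) {
            this.start = start;
            this.length = length;
        }

        @Override
        public String toString() {
            return "start=" + start + ", length=" + length;
        }
    }

    // Divide a file of fileLength bytes into consecutive ranges of at most
    // splitSize bytes. Each range would be handed to one mapper.
    static List<Split> computeSplits(long fileLength, long splitSize) {
        if (splitSize <= 0) {
            throw new IllegalArgumentException("splitSize must be positive");
        }
        List<Split> splits = new ArrayList<>();
        for (long start = 0; start < fileLength; start += splitSize) {
            long length = Math.min(splitSize, fileLength - start);
            splits.add(new Split(start, length));
        }
        return splits;
    }

    public static void main(String[] args) {
        // A 300-byte file with an assumed split size of 128 bytes.
        for (Split split : computeSplits(300, 128)) {
            System.out.println(split);
        }
    }
}
```

In this sketch a 300-byte input yields three splits, the last one shorter than the others, so three mappers could work on the file in parallel.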

MapReduce is a Java-based, distributed execution framework within the Apache Hadoop Ecosystem. It takes away the complexity of distributed programming by exposing two processing steps that developers implement: 1) Map and 2) Reduce. In the Mapping step, data is split between parallel processing tasks.
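The two steps can be illustrated with the word-count example from earlier. The following is a minimal single-process simulation in plain Java, not a Hadoop job: the class `WordCountSketch` and its `map`, `shuffle`, `reduce`, and `wordCount` methods are hypothetical names, and treating each sentence as one input segment is an assumption made for illustration. In a real cluster the segments would be processed by separate map tasks and the grouping would be handled by the framework.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Locale;
import java.util.Map;
import java.util.TreeMap;

class WordCountSketch {

    // Map step: emit a (word, 1) pair for every word in one input segment.
    static List<Map.Entry<String, Integer>> map(String segment) {
        List<Map.Entry<String, Integer>> pairs = new ArrayList<>();
        for (String token : segment.toLowerCase(Locale.ROOT).split("[^a-z]+")) {
            if (!token.isEmpty()) {
                pairs.add(Map.entry(token, 1));
            }
        }
        return pairs;
    }

    // Shuffle step: group all emitted values by their key (the word).
    static Map<String, List<Integer>> shuffle(List<Map.Entry<String, Integer>> pairs) {
        Map<String, List<Integer>> grouped = new TreeMap<>();
        for (Map.Entry<String, Integer> pair : pairs) {
            grouped.computeIfAbsent(pair.getKey(), k -> new ArrayList<>()).add(pair.getValue());
        }
        return grouped;
    }

    // Reduce step: collapse the list of counts for one word into a total.
    static int reduce(List<Integer> counts) {
        int sum = 0;
        for (int count : counts) {
            sum += count;
        }
        return sum;
    }

    // Run map over every segment, then shuffle and reduce the combined output.
    static Map<String, Integer> wordCount(List<String> segments) {
        List<Map.Entry<String, Integer>> emitted = new ArrayList<>();
        for (String segment : segments) {
            emitted.addAll(map(segment));
        }
        Map<String, Integer> result = new TreeMap<>();
        for (Map.Entry<String, List<Integer>> entry : shuffle(emitted).entrySet()) {
            result.put(entry.getKey(), reduce(entry.getValue()));
        }
        return result;
    }

    public static void main(String[] args) {
        List<String> segments = List.of("This is an apple.", "Apple is red in color.");
        System.out.println(wordCount(segments));
        // prints {an=1, apple=2, color=1, in=1, is=2, red=1, this=1}
    }
}
```

Words are lowercased before counting, so "Apple" and "apple" are treated as the same key. That is a design choice of this sketch rather than something the framework does on its own.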
