Active Learning Based 3D Semantic Labeling From Images and

semantic segmentation, 2D-3D semantic fusion

SimpleRecon: 3D Reconstruction Without 3D Convolutions

2D image features from keyframes and backprojecting these features into 3D space to produce a 4D feature volume [63]. In ATLAS [46] 3D convolutions on such

Solving Stochastic Inverse Problems - Diffusion with Forward Models

the distribution of 3D scenes trained only on 2D images. In contrast to ... RenderDiffusion: Image Diffusion for 3D Reconstruction, Inpainting and Generation.

3D Shape Reconstruction From 2D Images With Disentangled

Code is available at https://github.com/junshengzhou/3DAttriFlow. *Equal contribution. †The corresponding author is Yu-Shen Liu. This work was supported

ARTIC3D: Learning Robust Articulated 3D Shapes from Noisy Web

7 Jun 2023 page: https://chhankyao.github.io/artic3d/ ... how well a 3D reconstruction looks in its 2D renders and calculate pixel gradients from the image.

Panoptic 3D Scene Reconstruction From a Single RGB Image

In contrast to prior approaches for 3D reconstruction from 2D images, we ... Code can be found at https://github.com/xheon/panoptic-reconstruction.

Implicit Neural Representation for 3D Shape Reconstruction Using

to acquire a 2D tactile image. Next, a convolutional neural network maps the 2D image into a set of 3D points corresponding to the local surface of the

3D-C2FT: Coarse-to-fine Transformer for Multi-view 3D Reconstruction

Predicaments in estimating the 3D structure of an object include solving an ill-posed inverse problem of 2D images, violation of overlapping views

MagicPony: Learning Articulated 3D Animals in the Wild

The code can be found on the project page at https://3dmagicpony.github.io/. 1. Introduction. Reconstructing the 3D shape of an object from a single image of

3D Reconstruction of Clothes using a Human Body Model and its

Image-based virtual try-on (VTON) approaches are getting attention since they do not require 3D modeling. However, 2D cloth warping algorithms cannot

Automated 3D reconstruction from satellite images - SIAM IS18

Jun 8 2018. Why epipolar rectification? Speed: reduces the exploration from 2D to 1D. Robustness: reduces the risks ...

AUV-Net: Learning Aligned UV Maps for Texture Transfer and

since synthesizing 2D images is a well-studied problem. We propose AUV-Net, which learns to embed ... The field of 3D shape reconstruction and synthesis has

SICGAN - Single Image 3D Reconstruction based on Conditional GAN

herent 3D structure in the world is an important area of research in Computer Vision. Inferring 3D shape from 2D images has always been an important

GAL: Geometric Adversarial Loss for Single-View 3D-Object

2D views and following the 3D semantics of point cloud. Recently data-driven 3D reconstruction from single images [4

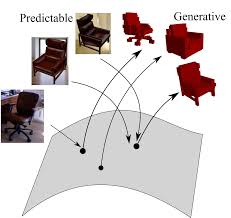

Domain-Adaptive Single-View 3D Reconstruction

The second challenge is that there are multiple shapes that can explain a given 2D image. In this paper we propose a framework to improve over these challenges

DISN: Deep Implicit Surface Network for High-quality Single-view 3D

While many single-view 3D reconstruction methods [2 10

PC-HMR: Pose Calibration for 3D Human Mesh Recovery from 2D

reconstruct human body by directly learning mesh parameters from images or videos while lacking explicit guidance of 3D human pose in visual data.

Self-Supervised 3D Face Reconstruction via Conditional Estimation

bined to reconstruct the 2D face image. In order to learn semantically meaningful 3D facial parameters without explicit access to their labels

Learning a Predictable and Generative Vector Representation for
