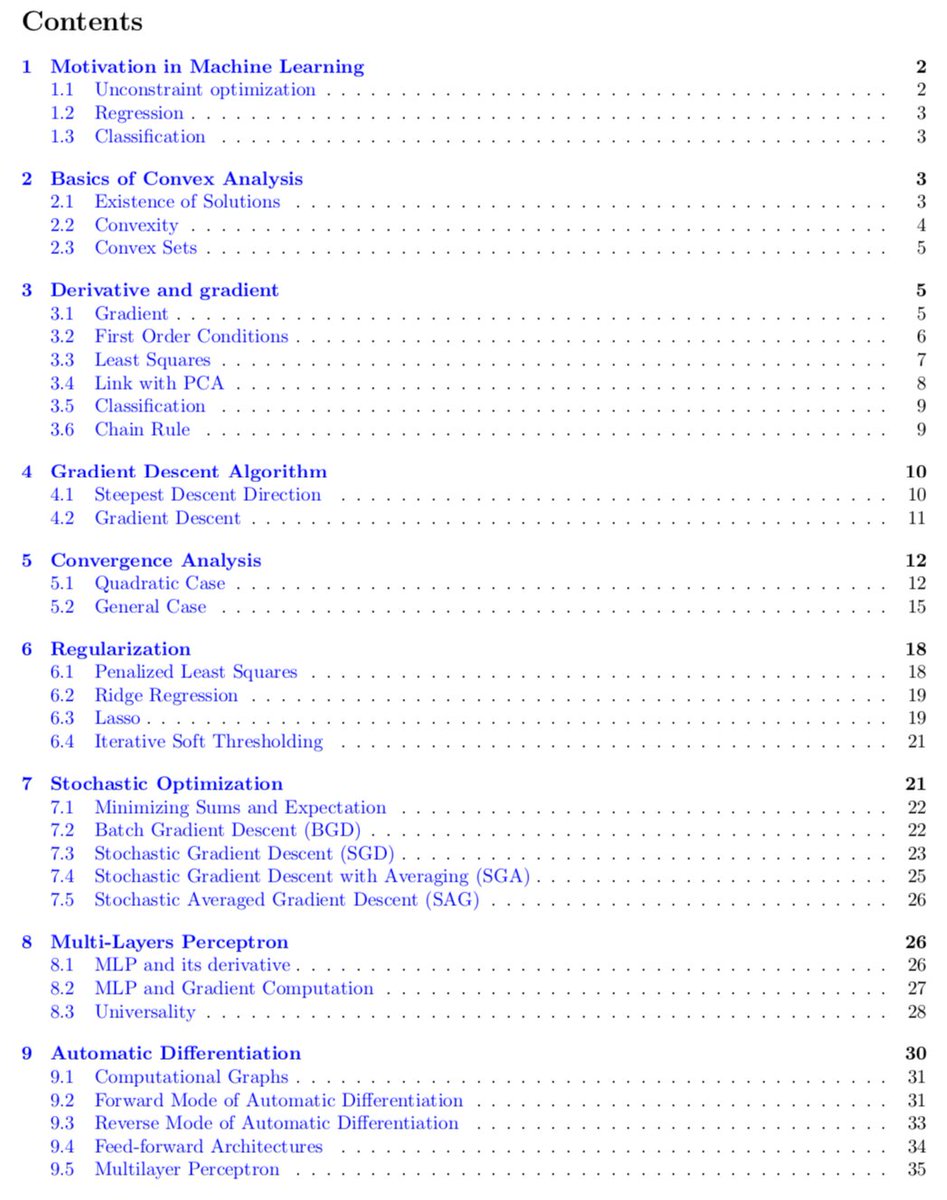

regularization and optimization

Connecting Optimization and Regularization Paths

We study the implicit regularization properties of optimization techniques by explicitly connecting their optimization paths to the regularization paths of ...
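To make the idea concrete, here is a minimal Python sketch, assuming a hypothetical two-feature least-squares objective with a diagonal Hessian so that both paths have closed forms. Gradient descent started at zero and the ridge (L2-penalized) solution with a shrinking penalty λ both travel from 0 toward the unregularized minimizer, and both lag most in the low-curvature direction. It illustrates the general phenomenon only; it is not the construction from the paper, and `A`, `W_STAR`, and the step size are illustrative choices.

```python
# Hypothetical problem: minimize 0.5 * sum_i a_i * (w_i - w_star_i)^2,
# i.e. least squares whose Hessian is diag(A). Values are illustrative only.
A = [4.0, 0.25]       # curvatures (Hessian eigenvalues)
W_STAR = [1.0, 1.0]   # unregularized minimizer


def gd_iterate(t, lr=0.2):
    """Gradient descent from w = 0 after t steps (closed form for a diagonal quadratic)."""
    return [ws * (1.0 - (1.0 - lr * a) ** t) for a, ws in zip(A, W_STAR)]


def ridge_solution(lam):
    """Minimizer of the same objective plus (lam / 2) * ||w||^2."""
    return [ws * a / (a + lam) for a, ws in zip(A, W_STAR)]


def norm(w):
    return sum(x * x for x in w) ** 0.5


# More steps on the GD path are paired with a smaller penalty on the ridge path.
gd_path = [gd_iterate(t) for t in (1, 5, 20, 200)]
ridge_path = [ridge_solution(lam) for lam in (4.0, 1.0, 0.1, 0.001)]

for w_gd, w_rr in zip(gd_path, ridge_path):
    print(f"GD {w_gd[0]:.3f} {w_gd[1]:.3f}   ridge {w_rr[0]:.3f} {w_rr[1]:.3f}")
```

Reading the printed rows top to bottom, more gradient-descent steps behave roughly like a smaller λ, which is why early stopping is often described as an implicit regularizer.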

Deep Learning Basics Lecture 3: regularization

What is regularization? • In general: any method to prevent overfitting or help the optimization. • Specifically: additional terms in the training ...
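As a minimal sketch of the "additional terms in the training objective" definition, assuming a linear model with a squared-error data term and an L2 penalty (all function names here are hypothetical):

```python
def mse(w, xs, ys):
    """Data term: mean squared error of a linear model y ~ w . x (no bias, for brevity)."""
    total = 0.0
    for x, y in zip(xs, ys):
        pred = sum(wi * xi for wi, xi in zip(w, x))
        total += (pred - y) ** 2
    return total / len(xs)


def l2_penalty(w):
    """Additional term: squared L2 norm of the weights."""
    return sum(wi * wi for wi in w)


def regularized_objective(w, xs, ys, lam):
    """Training objective = data term + lam * regularization term."""
    return mse(w, xs, ys) + lam * l2_penalty(w)


xs = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
ys = [1.0, 2.0, 3.0]
w = [1.0, 2.0]
print(regularized_objective(w, xs, ys, lam=0.0))  # 0.0: w fits the data exactly
print(regularized_objective(w, xs, ys, lam=0.1))  # 0.5: only the penalty remains
```

Increasing `lam` makes large weights more expensive, so the minimizer trades some training error for smaller weights.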

Fast Optimization Methods for L1 Regularization: A Comparative

L1 regularization is effective for feature selection, but the resulting optimization is challenging due to the non-differentiability of the 1-norm. In this ...
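One standard way around the non-differentiable 1-norm is proximal gradient descent (ISTA): take a gradient step on the smooth least-squares term, then apply soft-thresholding, the proximal operator of the L1 penalty. The sketch below assumes the objective 0.5 * ||Xw - y||^2 + lam * ||w||_1 and plain Python lists; it is not claimed to be one of the methods compared in that paper.

```python
def soft_threshold(v, tau):
    """Proximal operator of tau * |v|: shrink v toward 0 and clip small values to exactly 0."""
    if v > tau:
        return v - tau
    if v < -tau:
        return v + tau
    return 0.0


def ista(X, y, lam, step, iters=500):
    """Proximal gradient (ISTA) for 0.5 * ||X w - y||^2 + lam * ||w||_1.

    `step` should be at most 1 / L, where L is the largest eigenvalue of X^T X.
    """
    n, d = len(X), len(X[0])
    w = [0.0] * d
    for _ in range(iters):
        residual = [sum(X[i][j] * w[j] for j in range(d)) - y[i] for i in range(n)]
        grad = [sum(X[i][j] * residual[i] for i in range(n)) for j in range(d)]
        # Gradient step on the smooth term, then the prox (soft-threshold) step on the L1 term.
        w = [soft_threshold(w[j] - step * grad[j], step * lam) for j in range(d)]
    return w


X = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
y = [3.0, 0.5, -2.0]
print(ista(X, y, lam=1.0, step=0.5))
```

With an identity design matrix the lasso solution is just the soft-thresholded target, so this run should approach `[2.0, 0.0, -1.0]`; the middle coefficient is set exactly to zero, which is the sparsity that makes L1 useful for feature selection.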

Regularization Penalty Optimization for Addressing Data Quality

Regularization Penalty Optimization for Addressing Data Quality Variance in OoD Algorithms. Runpeng Yu ...

CS273B Lecture 6: regularization and optimization for deep learning

L2 regularization (aka weight decay). • Multi-task learning; data augmentation. • Dropout. Optimization: overcome underfitting. • SGD, SGD with momentum ...
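Weight decay and SGD with momentum combine into a single update rule. A minimal sketch, assuming the common "heavy-ball" form of momentum (velocity `v = momentum * v + gradient`) with L2 weight decay added to the gradient; the hyperparameter values and the quadratic loss are illustrative only:

```python
def sgd_momentum_step(w, v, grad, lr=0.1, momentum=0.9, weight_decay=0.01):
    """One SGD-with-momentum step with L2 weight decay added to the gradient.

    weight_decay * w is the gradient of (weight_decay / 2) * ||w||^2, so this
    is plain L2 regularization folded into the update.
    """
    new_v = [momentum * vi + gi + weight_decay * wi for wi, vi, gi in zip(w, v, grad)]
    new_w = [wi - lr * vi for wi, vi in zip(w, new_v)]
    return new_w, new_v


# Hypothetical loss 0.5 * sum((w_i - 3)^2); its gradient is (w_i - 3).
# A real SGD loop would use a noisy mini-batch gradient instead.
w, v = [0.0, 0.0], [0.0, 0.0]
for _ in range(500):
    grad = [wi - 3.0 for wi in w]
    w, v = sgd_momentum_step(w, v, grad)

# Weight decay pulls the minimizer from 3 toward 0: w* = 3 / (1 + 0.01).
print(w)
```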

On the regularization and optimization in quantum detector

22 Jul 2022 · On the regularization and optimization in quantum detector tomography. Shuixin Xiao, Yuanlong Wang ...

Least Squares Optimization with L1-Norm Regularization

... case approximate optimization ...

Learning with Kernels

Support Vector Machines, Regularization ...

A Machine Learning Approach for Air Quality Prediction: Model

24 Feb 2018 · Prediction: Model Regularization and Optimization ... on big data by using large-scale optimization algorithms. Although there exist some ...

Regularization and Optimization of Backpropagation

Sum of absolute values of all weights across the whole neural network. Keith L. Downing, Regularization and Optimization of Backpropagation ...

(PDF) Kernels: Regularization and Optimization - ResearchGate

This thesis extends the paradigm of machine learning with kernels. This paradigm is based on the idea of generalizing an inner product between ...
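A small illustration of "generalizing an inner product", assuming the homogeneous degree-2 polynomial kernel on 2-D inputs (chosen here only because its feature map is easy to write out): the kernel value equals an ordinary inner product of explicit features, computed without ever building those features.

```python
import math


def poly2_kernel(x, z):
    """Homogeneous degree-2 polynomial kernel: k(x, z) = (x . z)^2."""
    return sum(xi * zi for xi, zi in zip(x, z)) ** 2


def poly2_features(x):
    """Explicit feature map for 2-D inputs such that phi(x) . phi(z) == (x . z)^2."""
    x1, x2 = x
    return [x1 * x1, math.sqrt(2.0) * x1 * x2, x2 * x2]


def dot(a, b):
    return sum(ai * bi for ai, bi in zip(a, b))


x, z = [1.0, 2.0], [3.0, -1.0]
# Both numbers agree (up to rounding): the kernel is an inner product in feature space.
print(poly2_kernel(x, z), dot(poly2_features(x), poly2_features(z)))
```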

Deep learning - Optimization and Regularization in deep networks

6 Mar 2021 · Optimization and Regularization in deep networks. Easy data augmentation techniques (EDA) consist of four simple but powerful ...
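EDA is commonly described as four operations: synonym replacement, random insertion, random swap, and random deletion. The sketch below shows only the two that need no synonym dictionary; the function names and parameters are hypothetical, and a seeded `random.Random` keeps runs reproducible.

```python
import random


def random_swap(words, n_swaps, rng):
    """Swap the positions of two randomly chosen words, n_swaps times."""
    words = list(words)
    for _ in range(n_swaps):
        if len(words) < 2:
            break
        i, j = rng.sample(range(len(words)), 2)
        words[i], words[j] = words[j], words[i]
    return words


def random_deletion(words, p, rng):
    """Drop each word independently with probability p, keeping at least one word."""
    if not words:
        return []
    kept = [w for w in words if rng.random() > p]
    return kept if kept else [rng.choice(words)]


rng = random.Random(0)
sentence = "regularization helps the model generalize to unseen data".split()
print(" ".join(random_swap(sentence, n_swaps=2, rng=rng)))
print(" ".join(random_deletion(sentence, p=0.2, rng=rng)))
```

Each augmented sentence keeps the original label, so the classifier sees more varied inputs for the same target, which is what gives augmentation its regularizing effect.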

Deep Learning Basics Lecture 3: regularization - csPrinceton

What is regularization? • In general: any method to prevent overfitting or help the optimization. • Specifically: additional terms in the training ...

Regularization Optimization and Approximation in - ENSA-Kénitra

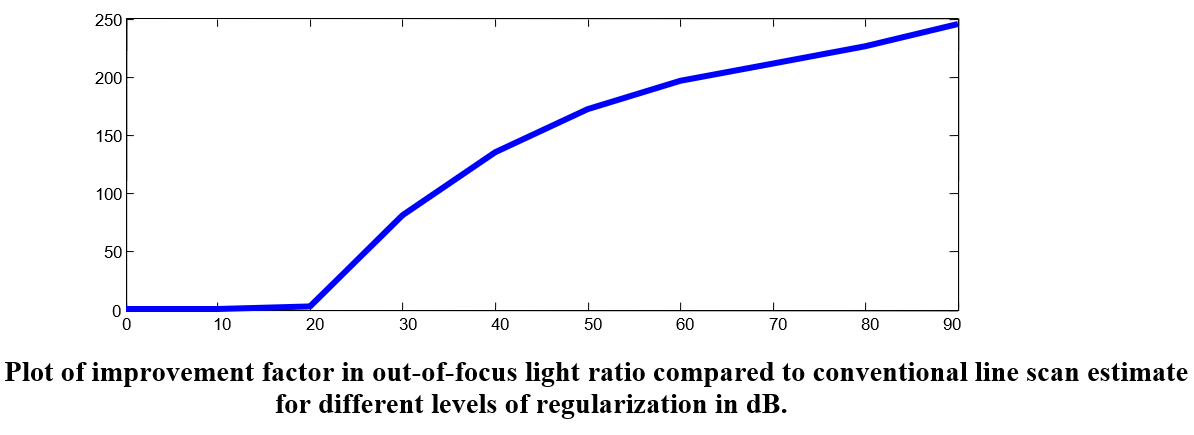

The problem of studying the spectral composition of a beam of light: suppose that the observed radiation is non-homogeneous and that the ...

Lecture 2: Overfitting, Regularization

A different view of regularization: we want to optimize the error while keeping the L2 norm of the weights bounded (equivalently, bounding $w^\top w$). COMP-652 and ECSE-608, Lecture 2 ...
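The bounded-norm view can be sketched as constrained optimization: minimize the error subject to ||w||_2 <= r, using projected gradient descent (take a gradient step, then rescale back onto the ball). This assumes a hypothetical quadratic error, target, and radius; the constrained and penalized (lam * ||w||^2) forms are closely related through Lagrangian duality, though the sketch does not compute the corresponding lam.

```python
import math


def project_l2_ball(w, radius):
    """Euclidean projection onto {w : ||w||_2 <= radius}."""
    norm = math.sqrt(sum(x * x for x in w))
    if norm <= radius:
        return list(w)
    return [x * radius / norm for x in w]


def projected_gd(grad_fn, w0, radius, lr=0.1, iters=200):
    """Gradient descent that re-projects onto the norm ball after every step."""
    w = project_l2_ball(w0, radius)
    for _ in range(iters):
        g = grad_fn(w)
        w = project_l2_ball([wi - lr * gi for wi, gi in zip(w, g)], radius)
    return w


# Hypothetical error 0.5 * ||w - target||^2; its unconstrained minimum (norm 5) lies outside the ball.
target = [3.0, 4.0]
w_hat = projected_gd(lambda w: [wi - ti for wi, ti in zip(w, target)], [0.0, 0.0], radius=1.0)
print(w_hat)  # close to [0.6, 0.8], the point of the unit ball nearest to target
```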

Connecting Optimization and Regularization Paths

... “corresponding” regularized problems. This surprising connection shows that iterates of optimization techniques such as gradient descent and mirror descent are ...

Regularization and Optimization strategies in Deep Convolutional

13 Dec 2017 · Abstract: Convolutional Neural Networks, known as ConvNets, perform exceptionally well in many complex machine learning tasks ...

A Perspective Analysis of Regularization and Optimization

28 Jul 2021 · To deal with such scenarios, conventional optimization and regularization methods have been applied to find optimal solutions ...

Coursera-Ng-Improving-Deep-Neural-Networks-Hyperparameter

-Ng-Improving-Deep-Neural-Networks-Hyperparameter-tuning-Regularization-and-Optimization Slides/Week 1 Practical aspects of Deep Learning pdf |

What is the difference between optimization and regularization?

The main conceptual difference is that optimization is about finding the set of parameters (weights) that maximizes or minimizes some objective function, which can also include a regularization term, while regularization is about limiting the values that your parameters can take during optimization (learning, training).

What is regularization in optimization?

Regularization is a technique that makes slight modifications to the learning algorithm so that the model generalizes better. This in turn improves the model's performance on unseen data.

Does regularization improve optimization?

Very often, regularization techniques improve estimators by reducing their variance without substantially increasing their bias (read my previous article about bias and variance). While training a machine learning model, the model can easily overfit or underfit. To avoid overfitting, we use regularization so that the model fits the training data properly and generalizes to the test set. Regularization techniques help reduce the chance of overfitting and help us get an optimal model.

Connecting Optimization and Regularization Paths - NeurIPS

We study the implicit regularization properties of optimization techniques by explicitly connecting their optimization paths to the regularization paths of “corresponding” ...

Neural Networks: Optimization & Regularization - GitHub Pages

Optimization, Regularization (Shan-Hung Wu): Dropout; Manifold Regularization. So far, we have modified the optimization algorithm to better train the model ...
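Dropout itself takes only a few lines. A minimal sketch, assuming the "inverted" variant (scale at training time rather than at test time), which is one common implementation choice rather than the only one; the function name and signature are hypothetical:

```python
import random


def dropout(activations, p, rng, training=True):
    """Inverted dropout on a list of activations.

    During training each unit is zeroed with probability p and survivors are
    scaled by 1 / (1 - p), so the expected activation is unchanged and the
    layer can be used as-is (no rescaling) at evaluation time.
    """
    if not 0.0 <= p < 1.0:
        raise ValueError("p must be in [0, 1)")
    if not training or p == 0.0:
        return list(activations)
    keep = 1.0 - p
    return [a / keep if rng.random() < keep else 0.0 for a in activations]


rng = random.Random(42)
hidden = [1.0] * 10_000
dropped = dropout(hidden, p=0.5, rng=rng)
print(sum(dropped) / len(dropped))  # near 1.0 on average
```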

Regularization Matters: Generalization and Optimization of Neural

Motivated by the provably better generalization of regularized neural nets for our constructed instance, in Section 3 we study their optimization, as the previously ...

Regularization and Optimization of Backpropagation - Department of

L1 regularization: $\Omega_1(\theta) = \sum_{w_i \in \theta} \lvert w_i \rvert$, the sum of absolute values of all weights across the whole neural network. Keith L. Downing, Regularization and ...
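The penalty above can be computed directly over every weight matrix in a network. A minimal sketch, assuming weights are stored as nested Python lists (one matrix per layer); since |w| has no derivative at 0, gradient-based training typically uses a subgradient such as sign(w), with 0 chosen at w = 0:

```python
def l1_penalty(layers):
    """Omega_1(theta): sum of |w| over every weight of every layer."""
    return sum(abs(w) for matrix in layers for row in matrix for w in row)


def l1_subgradient(layers):
    """Elementwise sign(w), a valid subgradient of Omega_1 (0 is chosen at w == 0)."""
    return [[[float((w > 0) - (w < 0)) for w in row] for row in matrix] for matrix in layers]


# Hypothetical two-layer network: a 2x2 weight matrix followed by a 2x1 one.
layers = [
    [[0.5, -1.0], [0.0, 2.0]],
    [[-0.25], [0.75]],
]
lam = 1e-3  # illustrative penalty strength
print(l1_penalty(layers))        # 4.5
print(lam * l1_penalty(layers))  # the term added to the data loss
```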

Least Squares Optimization with L1-Norm Regularization

This project surveys and examines optimization approaches proposed for parameter estimation in least-squares linear regression models with an L1 penalty ...

Fast Optimization Methods for L1 Regularization - UBC Computer

... L2 regularization), but yields sparse models that are more easily interpreted ... two new optimization techniques that can be used for L1-regularized optimization ...

Stochastic Methods for l1-regularized Loss Minimization - Journal of

Keywords: L1 regularization, optimization, coordinate descent, mirror descent, sparsity. 1 Introduction: We present optimization procedures for solving problems ...
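Coordinate descent suits L1 problems well because each one-dimensional subproblem has a closed-form soft-thresholding solution. A minimal sketch of a stochastic (randomly chosen coordinate) variant for the lasso objective 0.5 * ||Xw - y||^2 + lam * ||w||_1; the objective scaling, names, and epoch count are assumptions and are not taken from that paper:

```python
import random


def soft_threshold(v, tau):
    """Shrink v toward 0 by tau, returning exactly 0 when |v| <= tau."""
    if v > tau:
        return v - tau
    if v < -tau:
        return v + tau
    return 0.0


def lasso_coordinate_descent(X, y, lam, epochs=200, rng=None):
    """Minimize 0.5 * ||X w - y||^2 + lam * ||w||_1 by exactly minimizing
    over one randomly chosen coordinate at a time."""
    rng = rng or random.Random(0)
    n, d = len(X), len(X[0])
    w = [0.0] * d
    residual = list(y)  # r = y - X w, kept up to date incrementally
    col_sq = [sum(X[i][j] ** 2 for i in range(n)) for j in range(d)]
    for _ in range(epochs * d):
        j = rng.randrange(d)
        if col_sq[j] == 0.0:
            continue
        # Correlation of column j with the residual that excludes coordinate j.
        rho = sum(X[i][j] * (residual[i] + X[i][j] * w[j]) for i in range(n))
        new_wj = soft_threshold(rho, lam) / col_sq[j]
        delta = new_wj - w[j]
        if delta != 0.0:
            for i in range(n):
                residual[i] -= X[i][j] * delta
            w[j] = new_wj
    return w


print(lasso_coordinate_descent([[1.0, 0.0], [0.0, 2.0], [0.0, 0.0]], [3.0, 1.0, 5.0], lam=1.0))
```

For orthogonal columns, as in this example, one update per coordinate is already exact, and the result should be `[2.0, 0.25]`; with correlated columns the random sweeps converge gradually instead.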
