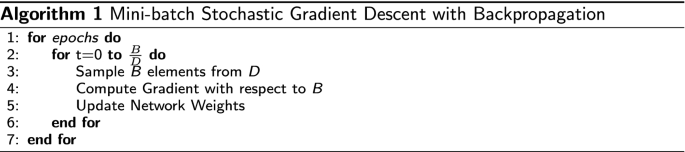

stochastic gradient descent

|

Accelerating Stochastic Gradient Descent using Predictive Variance

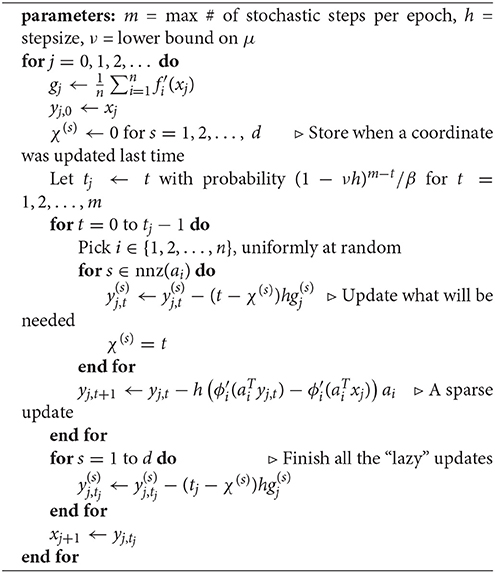

To remedy this problem, we introduce an explicit variance reduction method for stochastic gradient descent, which we call stochastic variance reduced gradient |

| Byzantine Stochastic Gradient Descent |

|

Hogwild: A Lock-Free Approach to Parallelizing Stochastic Gradient

Stochastic Gradient Descent (SGD) is a popular algorithm that can achieve state-of-the-art performance on a variety of machine learning tasks. |

|

Train faster generalize better: Stability of stochastic gradient descent

We show that parametric models trained by a stochastic gradient method (SGM) with few iterations have vanishing generalization error. |

|

Fine-Grained Analysis of Stability and Generalization for Stochastic

Finally, we study learning problems with (strongly) convex objectives but non-convex loss functions. 1. Introduction. Stochastic gradient descent (SGD) has |

|

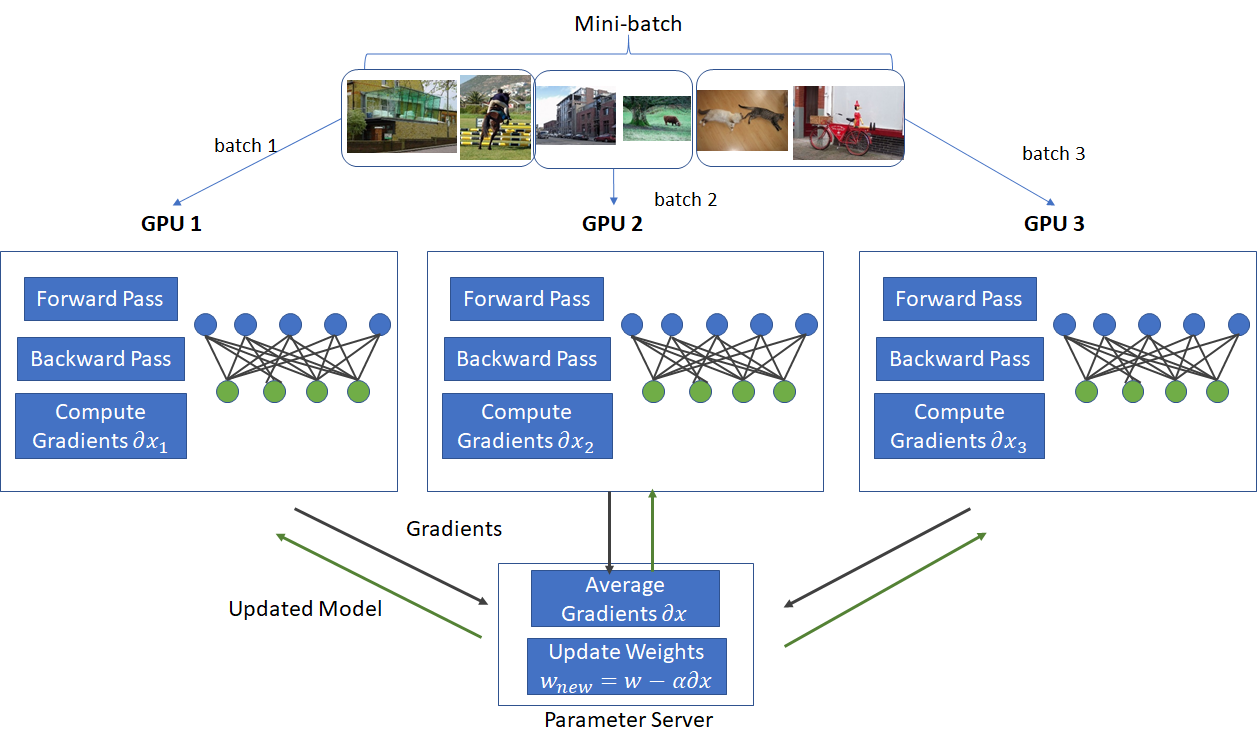

Asynchronous Decentralized Parallel Stochastic Gradient Descent

In AllReduce Stochastic Gradient Descent implementation (AllReduce-SGD) (Luehr 2016; Patarasuk and Yuan |

|

Parallelized Stochastic Gradient Descent

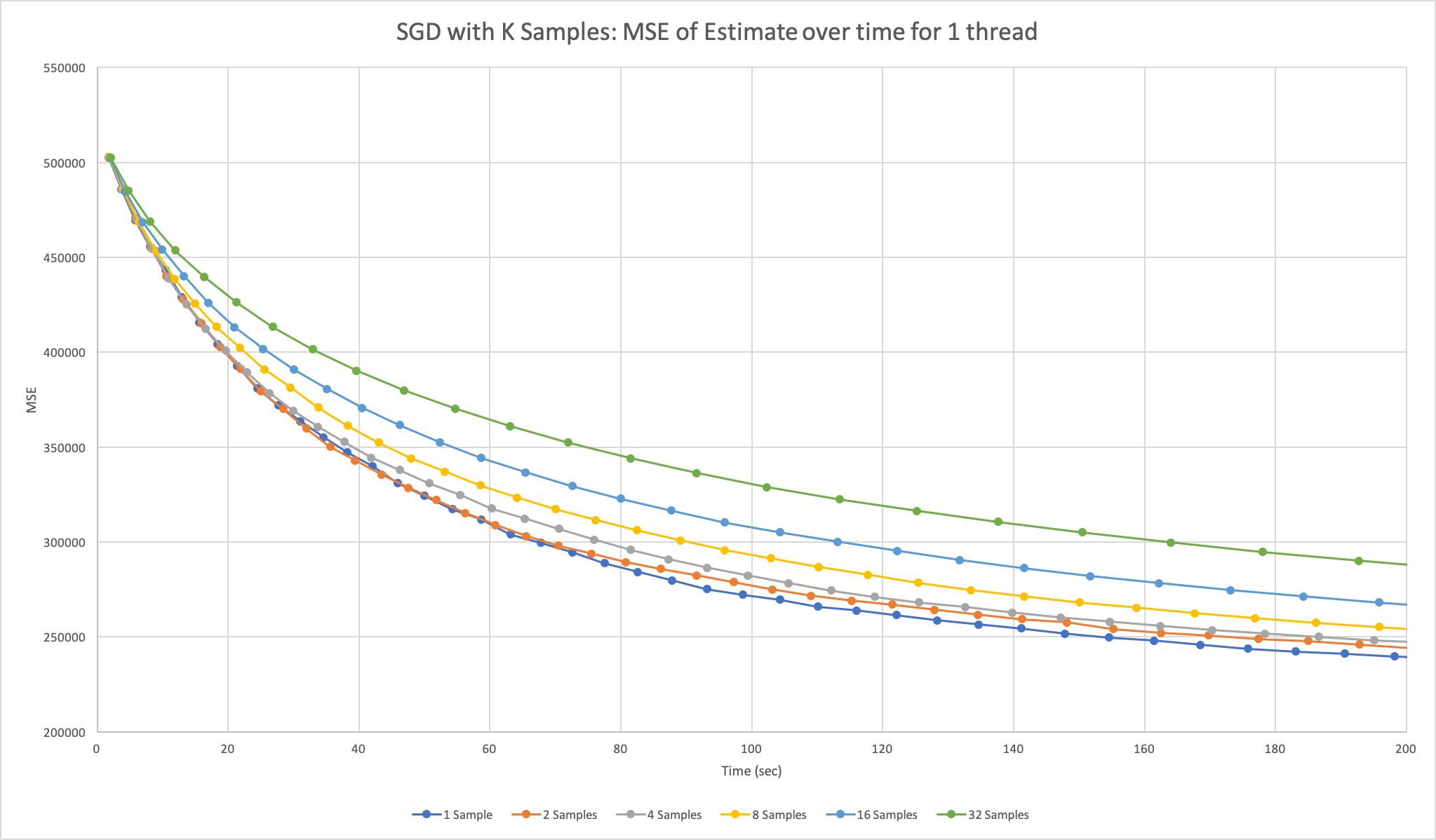

In this paper we present the first parallel stochastic gradient descent algorithm, including a detailed analysis and experimental evidence. Unlike prior work |

|

Data-Dependent Stability of Stochastic Gradient Descent

We establish a data-dependent notion of algorithmic stability for Stochastic Gradient Descent (SGD) and employ it to develop novel general- |

|

Stochastic Gradient Descent as Approximate Bayesian Inference

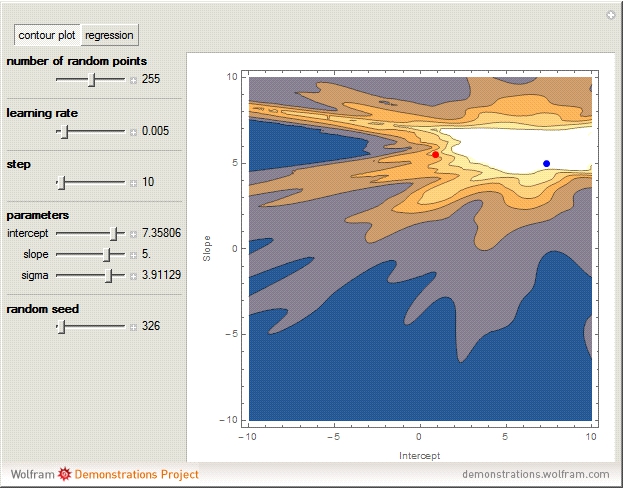

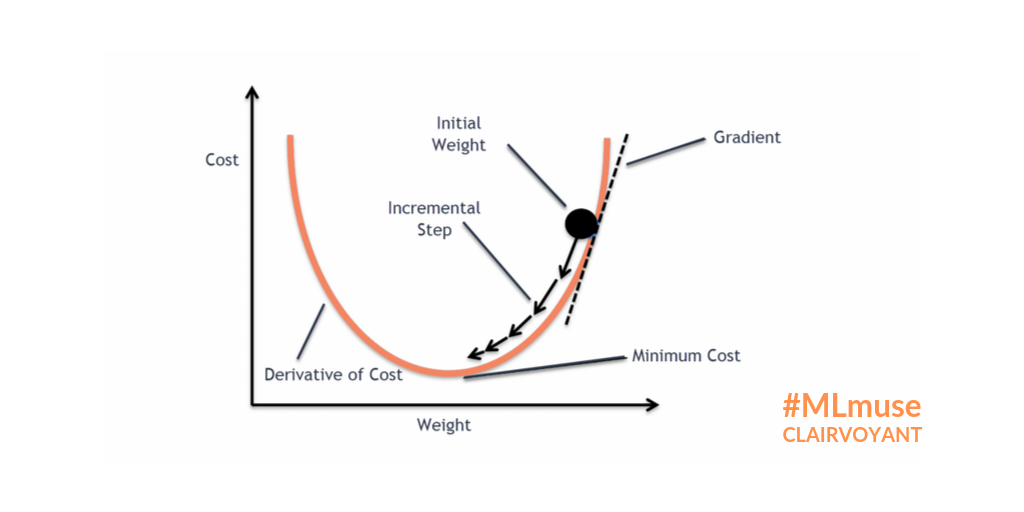

Stochastic gradient descent (SGD) has become crucial to modern machine learning. SGD optimizes a function by following noisy gradients with a decreasing step |

|

Tight analyses for non-smooth stochastic gradient descent

Keywords: Gradient Descent Lipschitz Functions |

|

Accelerating Stochastic Gradient Descent using Predictive Variance

To remedy this problem, we introduce an explicit variance reduction method for stochastic gradient descent, which we call stochastic variance reduced gradient (SVRG). |

|

Stochastic Gradient Boosting Jerome H. Friedman* March 26 1999

1999. 3. 26. Abstract. Gradient boosting constructs additive regression models by sequentially fitting a simple parameterized function (base learner) to ... |

|

Stochastic Gradient Descent in Correlated Settings: A Study on

Stochastic gradient descent (SGD) and its variants have established themselves as the go-to algorithms for large-scale machine learning problems with |

|

SGD-QN: Careful Quasi-Newton Stochastic Gradient Descent

The SGD-QN algorithm is a stochastic gradient descent algorithm that makes careful use of second-order information and splits the parameter update into |

|

Understanding and Optimizing Asynchronous Low-Precision

Stochastic gradient descent (SGD) is one of the most popular numerical algorithms used in machine learning and other domains. Since this is likely to continue |

|

FLUCTUATION-DISSIPATION RELATIONS FOR STOCHASTIC

Here we derive stationary fluctuation-dissipation relations that link measurable quantities and hyperparameters in the stochastic gradient descent algorithm. |

|

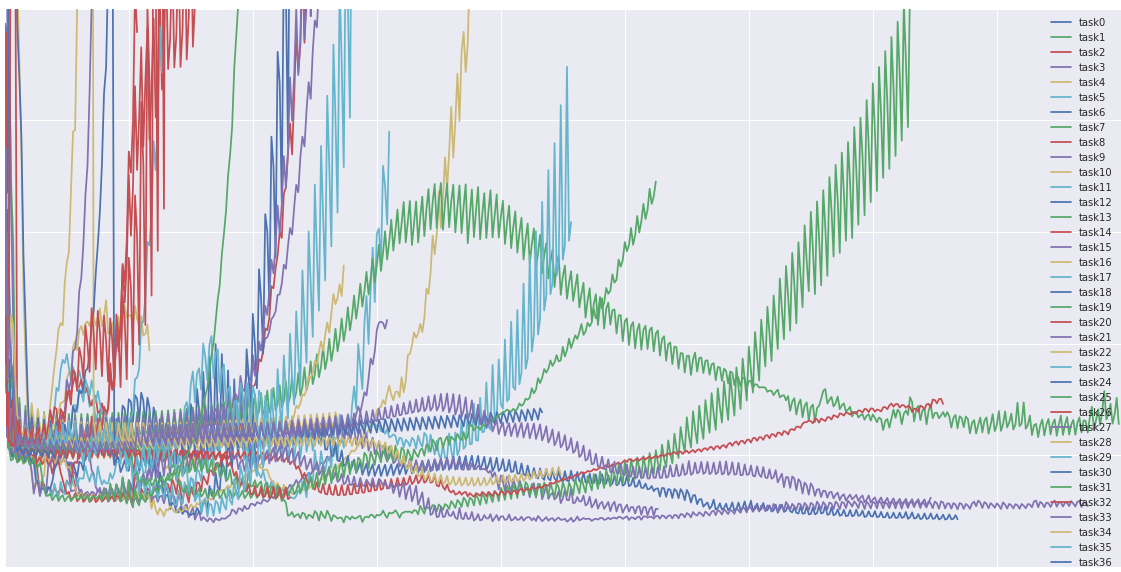

Semi-Cyclic Stochastic Gradient Descent

Semi-Cyclic Stochastic Gradient Descent. Hubert Eichner, Tomer Koren, H. Brendan McMahan, Nathan Srebro, Kunal Talwar. Abstract. |

|

Train faster generalize better: Stability of stochastic gradient descent

distribution that generated the training data. Throughout, we assume we are training models using n sampled data. |

|

Barzilai-Borwein Step Size for Stochastic Gradient Descent

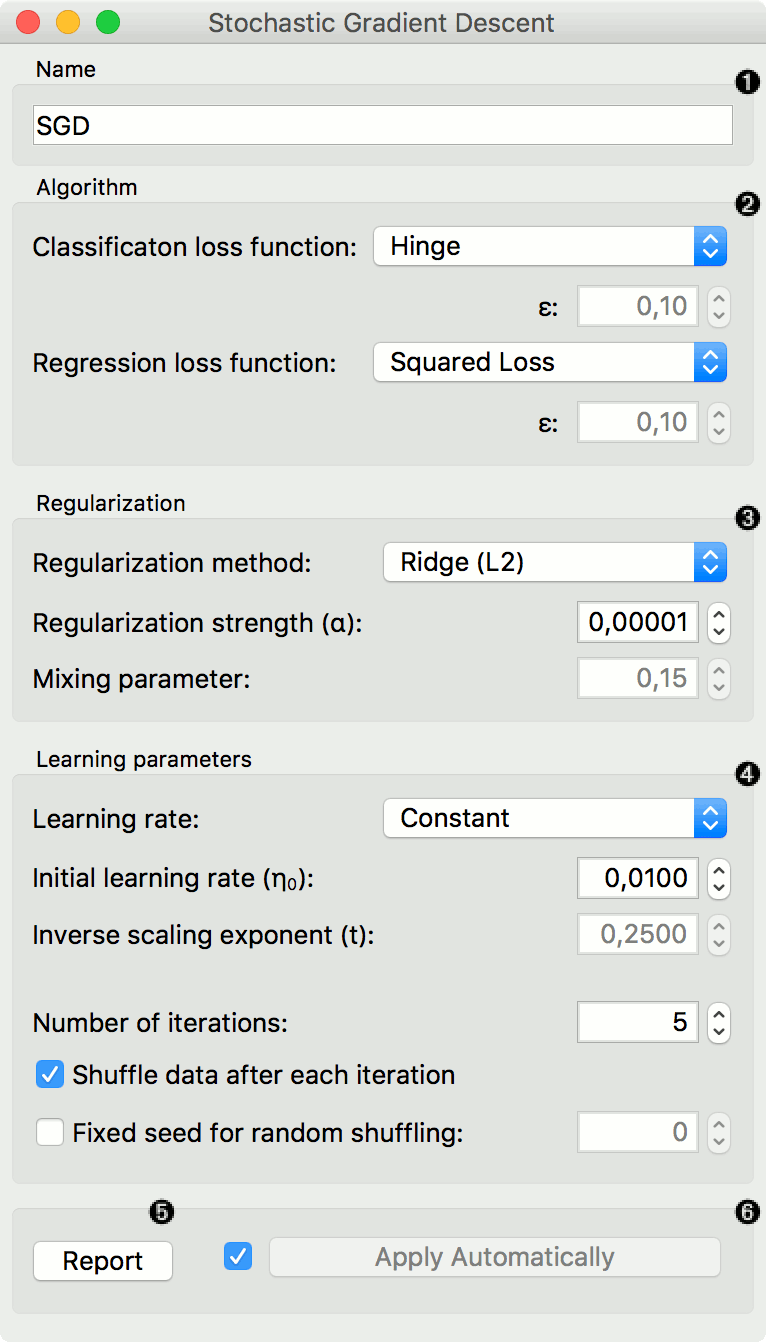

One of the major issues in stochastic gradient descent (SGD) methods is how to choose an appropriate step size while running the algorithm. |

|

Stochastic Gradient Descent - CMU Statistics

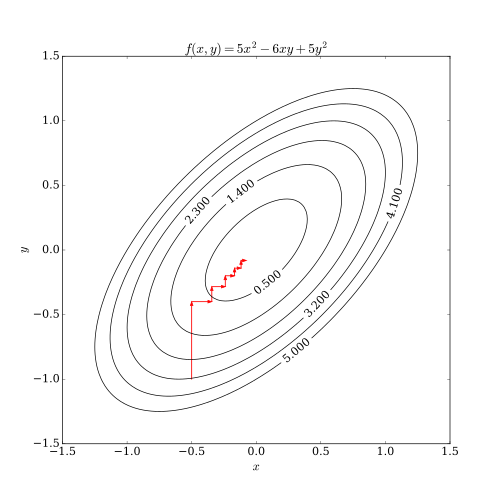

Proximal gradient descent: let $x^{(0)} \in \mathbb{R}^n$, repeat: In comparison, stochastic gradient descent or SGD (or incremental gradient descent) repeats: |

|

Lecture 24: November 26, 24.1 Stochastic Gradient Descent

This is called stochastic gradient descent, or short, SGD. Figure 24.3: Convergence rates of Standard Gradient Descent vs Stochastic Gradient Descent |

|

Stochastic Gradient Descent in Theory and Practice - Stanford AI Lab

Stochastic gradient descent (SGD) is the most widely used optimization method in the machine learning community. Researchers in both academia and industry |

|

Machine Learning I Lecture 5.2: Stochastic Gradient Descent

Lecture 5.2: Stochastic Gradient Descent. Spring 2020. Stanley Chan, School of Electrical and Computer Engineering, Purdue University |

|

Stochastic Gradient Descent: The Workhorse of Machine Learning

Answer: bound it using the variance! • Variance of a constant plus a random variable is just the variance of that random variable so we don't need to think |

|

Lecture 5: Stochastic Gradient Descent - Cornell Computer Science

Lecture 5: Stochastic Gradient Descent CS4787 — Principles of Large-Scale Machine Learning Systems Combining two principles we already discussed into one |

|

Stochastic gradient methods for machine learning - DI ENS

Stochastic gradient methods (Robbins and Monro 1951) – Mixing statistics and optimization – Using smoothness to go beyond stochastic gradient descent |

|

Stochastic Gradient Descent Methods - University of Melbourne

22 Oct 2020 · 2 Gradient Descent 3 Stochastic Gradient Descent 4 Stochastic Subgradient Methods Non-Smooth Optimization Problems |

|

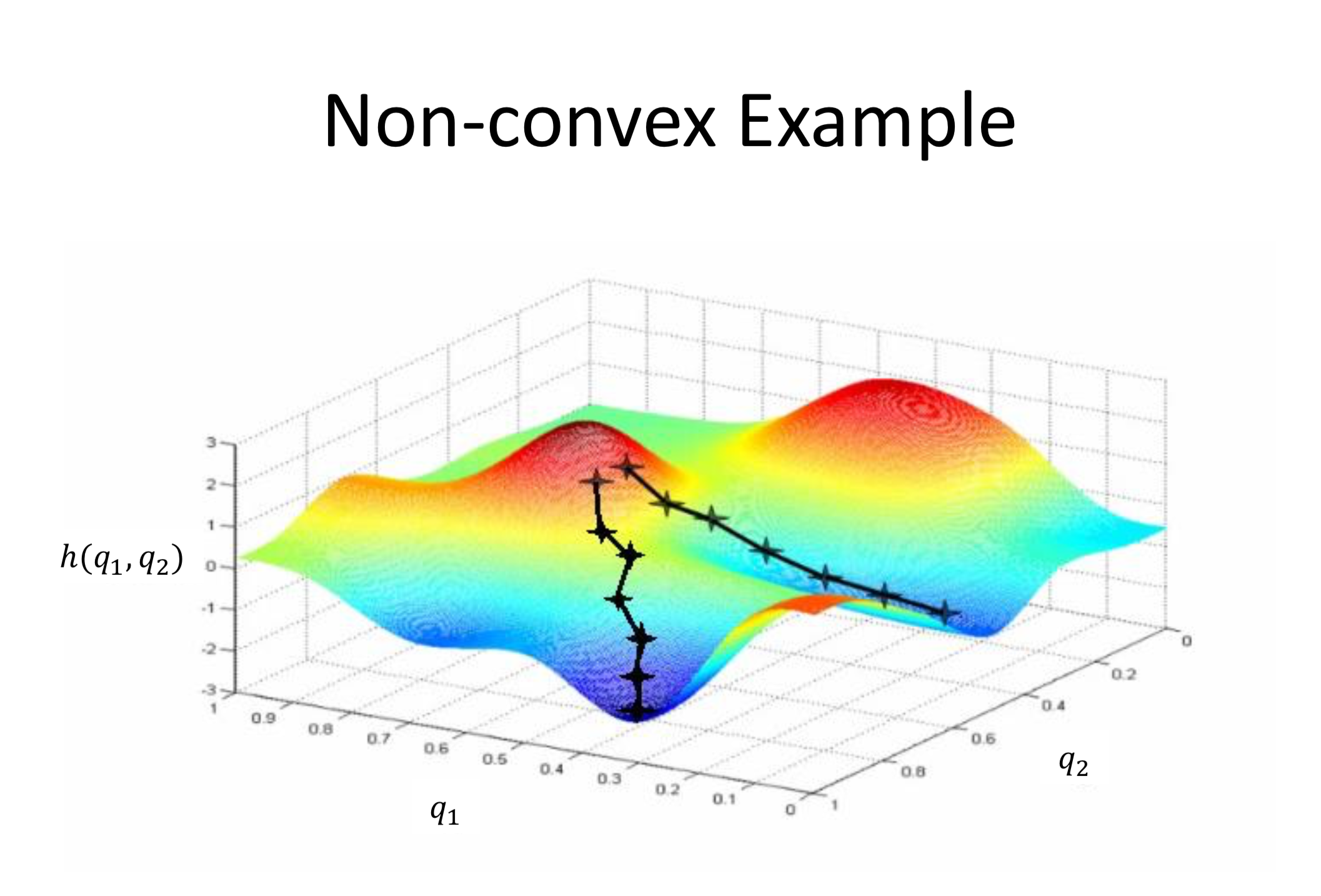

Lecture notes: Optimization for Machine Learning - arXiv

8 Sept 2019 · The most important optimization algorithm in the context of machine learning is stochastic gradient descent (SGD), especially for non-convex |

|

ECE 595: Machine Learning I Lecture 05 Gradient Descent

Stochastic Gradient Descent. Most machine learning has built-in (stochastic) gradient descents (https://arxiv.org/pdf/1802.06175.pdf, ICML 2018) |

|

Stochastic Gradient Descent - CMU Statistics

In comparison, stochastic gradient descent or SGD (or incremental gradient descent) repeats: $x^{(k)} = x^{(k-1)} - t_k \cdot \nabla f_{i_k}(x^{(k-1)}), \quad k = 1, 2, 3, \ldots$ |
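The update quoted in this snippet can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not code from the CMU notes: the helper `sgd`, the least-squares components `f_i(x) = 0.5 * (a_i·x - b_i)^2`, the synthetic data, uniform sampling of the index `i_k`, and the constant step size are all hypothetical choices made for the example.

```python
import numpy as np

def sgd(grad_fi, x0, n, step, num_iters, seed=0):
    """Iterate x_k = x_{k-1} - t_k * grad f_{i_k}(x_{k-1}).

    Assumption: the index i_k is drawn uniformly at random from {0, ..., n-1}.
    """
    rng = np.random.default_rng(seed)
    x = np.asarray(x0, dtype=float).copy()
    for k in range(1, num_iters + 1):
        i = rng.integers(n)                # sample the component index i_k
        x = x - step(k) * grad_fi(i, x)    # step along one component gradient
    return x

# Hypothetical problem: noiseless least squares, so every f_i shares the
# same minimizer x_true and a small constant step size is enough.
data_rng = np.random.default_rng(1)
A = data_rng.normal(size=(200, 3))
x_true = np.array([1.0, -2.0, 0.5])
b = A @ x_true

def grad_fi(i, x):
    # Gradient of f_i(x) = 0.5 * (A[i] @ x - b[i]) ** 2
    return (A[i] @ x - b[i]) * A[i]

x_hat = sgd(grad_fi, np.zeros(3), n=200, step=lambda k: 0.05, num_iters=5000)
print(np.linalg.norm(x_hat - x_true))
```

Each iteration touches a single row of `A`, which is the point of the method: the per-step cost does not depend on the number of samples. With noisy data a decreasing schedule such as `step=lambda k: 1.0 / k` would be the more usual choice.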

|

Lecture 24: November 26, 24.1 Stochastic Gradient Descent

The idea is now to just use a subset of all samples, i.e., all possible fi(x)'s, to approximate the full gradient. This is called stochastic gradient descent, or short, SGD |

|

Stochastic Gradient Descent Tricks - Microsoft

stochastic gradient descent (SGD). This chapter provides background material, explains why SGD is a good learning algorithm when the training set is large, |

|

Gradient descent for machine learning - Université Lumière

9 Feb 2018 · "Stochastic gradient descent" is used, among other methods, for training deep neural networks (deep learning). Page 26 |

|

Stochastic gradient methods - Princeton University

Stochastic gradient descent (stochastic approximation) • Convergence analysis • Reducing variance via iterate averaging Stochastic gradient methods 11-2 |

|

Stochastic Gradient Descent with Only One Projection - NIPS

Although many variants of stochastic gradient descent have been proposed for large-scale convex optimization, most of them require projecting the solution at |

|

Variations of the Stochastic Gradient Descent for Multi-label

Stochastic Gradient Descent (SGD) methods have become popular in today's world of data abundance. Many variants of SGD have since surfaced to attempt to |

|

Large-Scale Machine Learning with Stochastic Gradient Descent

optimization algorithms such as stochastic gradient descent show amazing performance for large-scale problems. In particular, second order stochastic |

![Stochastic Gradient Descent for Efficient Logistic Regression](https://0.academia-photos.com/attachment_thumbnails/30793048/mini_magick20190426-841-1bc392h.png?1556315602)