choosing features for clustering


Feature selection for clustering using instance-based learning by

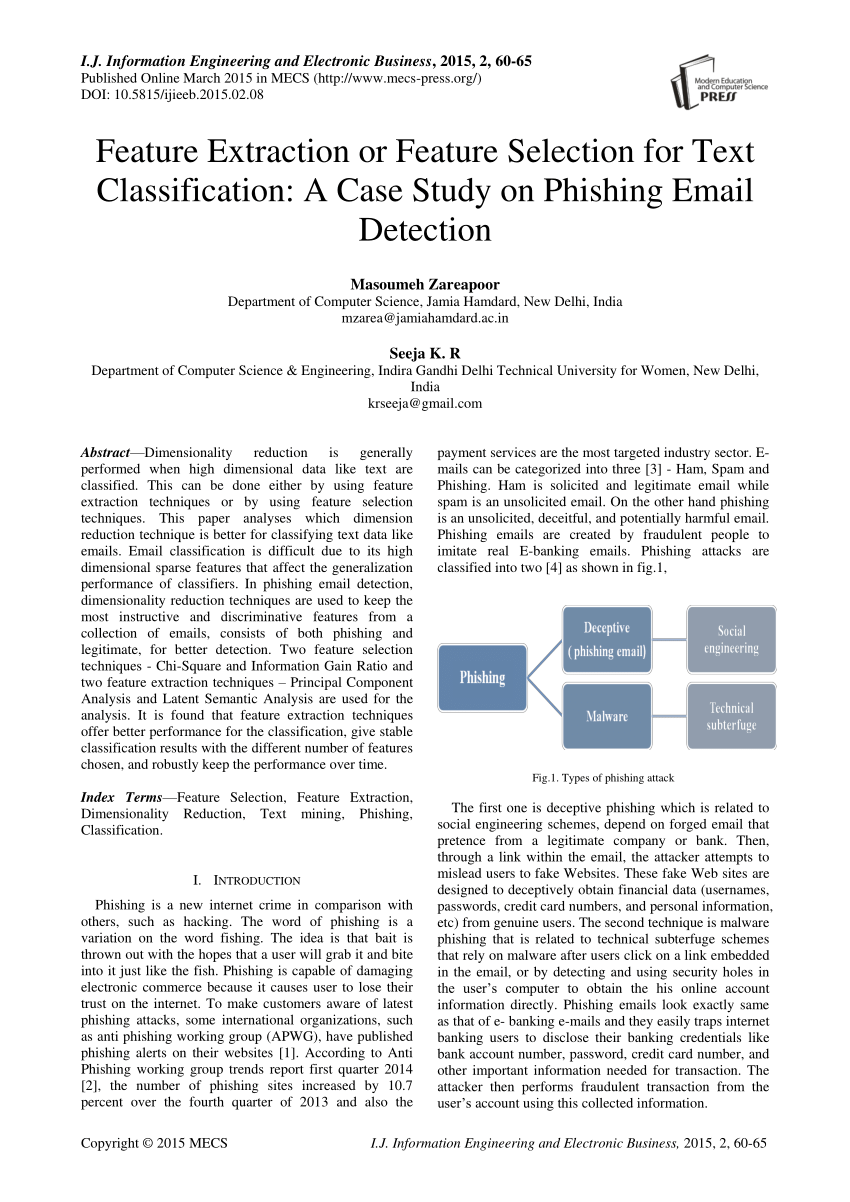

Feature selection for clustering is an active research topic and is used to identify salient features that are helpful for data clustering.

Feature Selection for Clustering

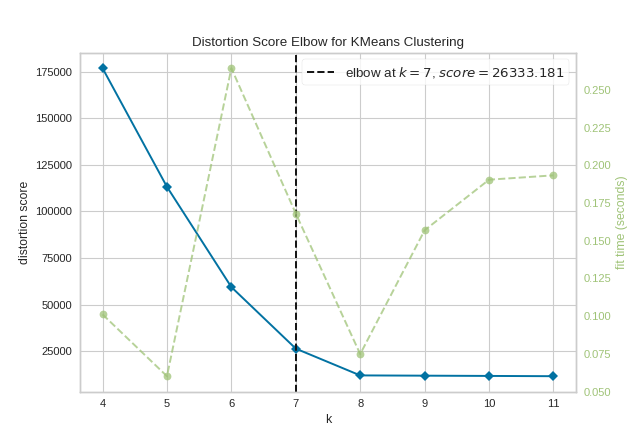

on the selected features and choose the subset that produces the best cluster quality. We choose the k-means clustering algorithm, which is very popular and simple
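The idea in this snippet, clustering on each candidate feature subset and keeping the subset with the best cluster quality, can be sketched as follows. This is a minimal illustration, not the cited paper's procedure: the snippet does not say how cluster quality is measured, so the mean silhouette width is used here as an assumption, and the names `kmeans`, `silhouette`, and `best_subset` are hypothetical. The k-means initialisation (farthest-point, deterministic) is also an assumption made to keep the example reproducible.

```python
import math
from itertools import combinations


def kmeans(points, k, iters=50):
    """Plain Lloyd's k-means with deterministic farthest-point initialisation."""
    centers = [points[0]]
    while len(centers) < k:
        centers.append(max(points, key=lambda p: min(math.dist(p, c) for c in centers)))
    labels = [0] * len(points)
    for _ in range(iters):
        # Assign each point to its nearest centre.
        labels = [min(range(k), key=lambda j: math.dist(p, centers[j])) for p in points]
        new_centers = []
        for j in range(k):
            members = [p for p, lab in zip(points, labels) if lab == j]
            if members:
                new_centers.append(tuple(sum(col) / len(members) for col in zip(*members)))
            else:
                new_centers.append(centers[j])  # keep an empty cluster's old centre
        if new_centers == centers:
            break
        centers = new_centers
    return labels


def silhouette(points, labels):
    """Mean silhouette width (assumed quality criterion); higher is better."""
    scores = []
    for i, p in enumerate(points):
        by_cluster = {}
        for j, q in enumerate(points):
            if i != j:
                by_cluster.setdefault(labels[j], []).append(math.dist(p, q))
        own = by_cluster.pop(labels[i], None)
        if not own or not by_cluster:
            scores.append(0.0)  # singleton cluster, or only one cluster overall
            continue
        a = sum(own) / len(own)
        b = min(sum(d) / len(d) for d in by_cluster.values())
        scores.append((b - a) / max(a, b) if max(a, b) > 0 else 0.0)
    return sum(scores) / len(scores)


def best_subset(points, k, subset_size):
    """Try every feature subset of the given size; return (subset, score)."""
    best = None
    for subset in combinations(range(len(points[0])), subset_size):
        projected = [tuple(p[f] for f in subset) for p in points]
        score = silhouette(projected, kmeans(projected, k))
        if best is None or score > best[1]:
            best = (subset, score)
    return best
```

Calling `best_subset(points, k=2, subset_size=1)` on data where only one column separates the groups should return that column's index. Exhaustive search is only feasible for a handful of features; comparing subsets of equal size also sidesteps the question of whether the criterion is comparable across different numbers of features.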

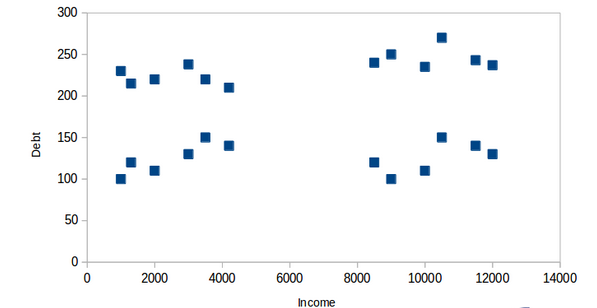

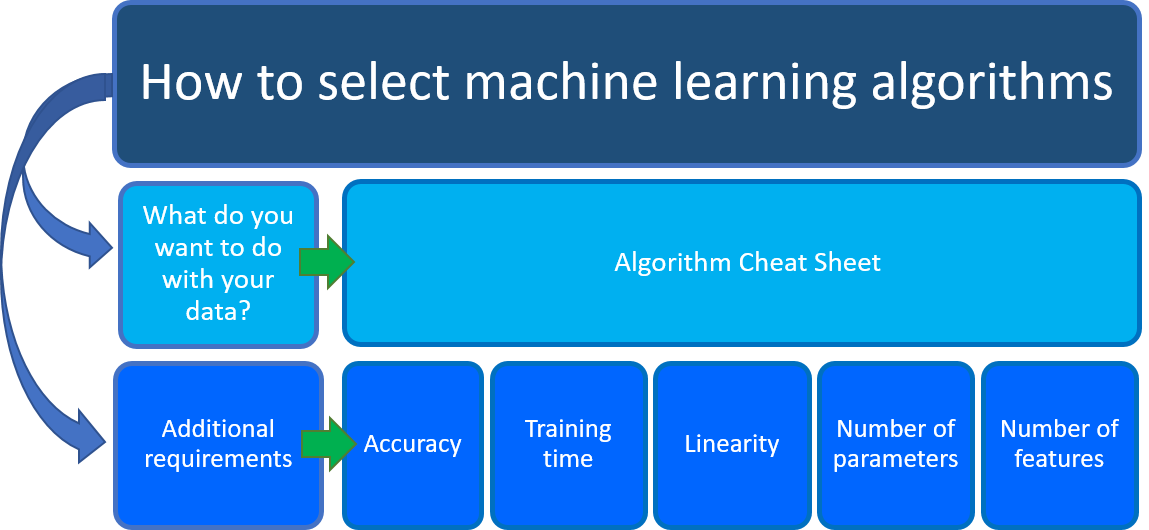

When choosing a clustering algorithm, you should consider whether the algorithm scales to your dataset.

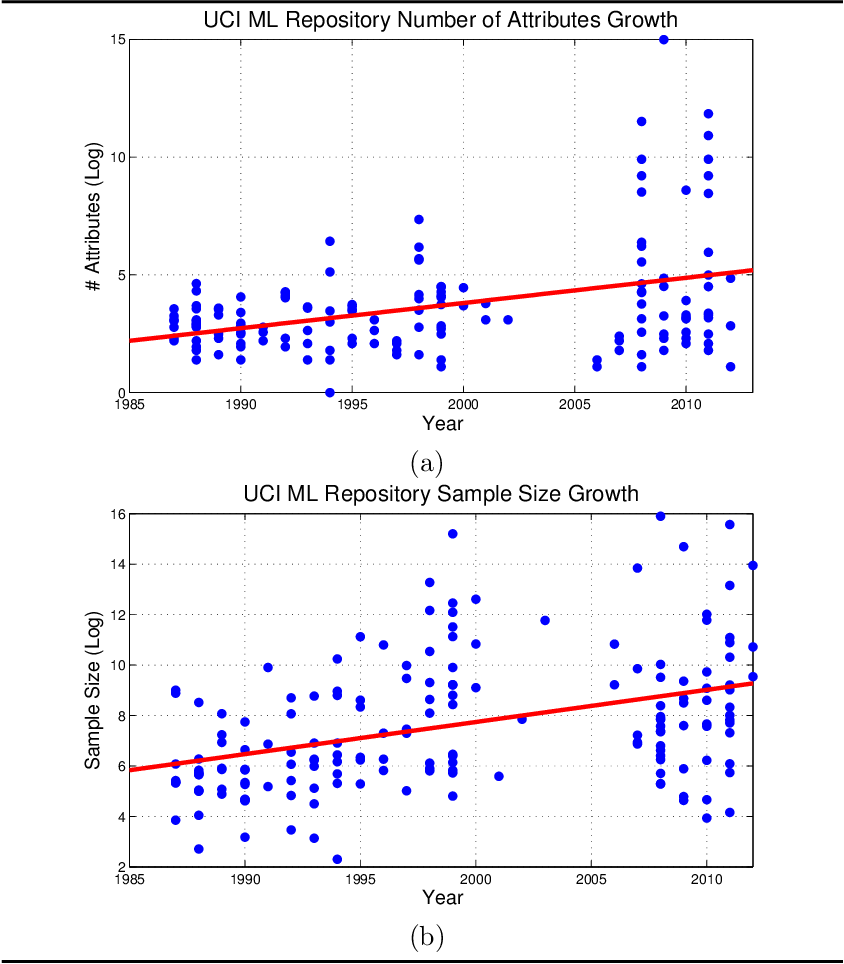

Datasets in machine learning can have millions of examples, but not all clustering algorithms scale efficiently.

Many clustering algorithms work by computing the similarity between all pairs of examples.
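To make the scaling concern concrete, the sketch below counts how many pairs an all-pairs method has to examine and computes them for a tiny dataset. The function names are illustrative, and Euclidean distance is an assumption, since the text does not name a similarity measure.

```python
import math
from itertools import combinations


def pair_count(n):
    """Number of unordered pairs among n examples: n * (n - 1) / 2."""
    return n * (n - 1) // 2


def pairwise_distances(points):
    """Euclidean distance for every unordered pair of points (O(n^2) work)."""
    return {
        (i, j): math.dist(points[i], points[j])
        for i, j in combinations(range(len(points)), 2)
    }


if __name__ == "__main__":
    for n in (1_000, 1_000_000):
        print(n, "examples ->", pair_count(n), "pairs")
```

Because the pair count grows quadratically, a thousandfold increase in examples means roughly a millionfold increase in similarity computations.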

How do I choose the best features for clustering?

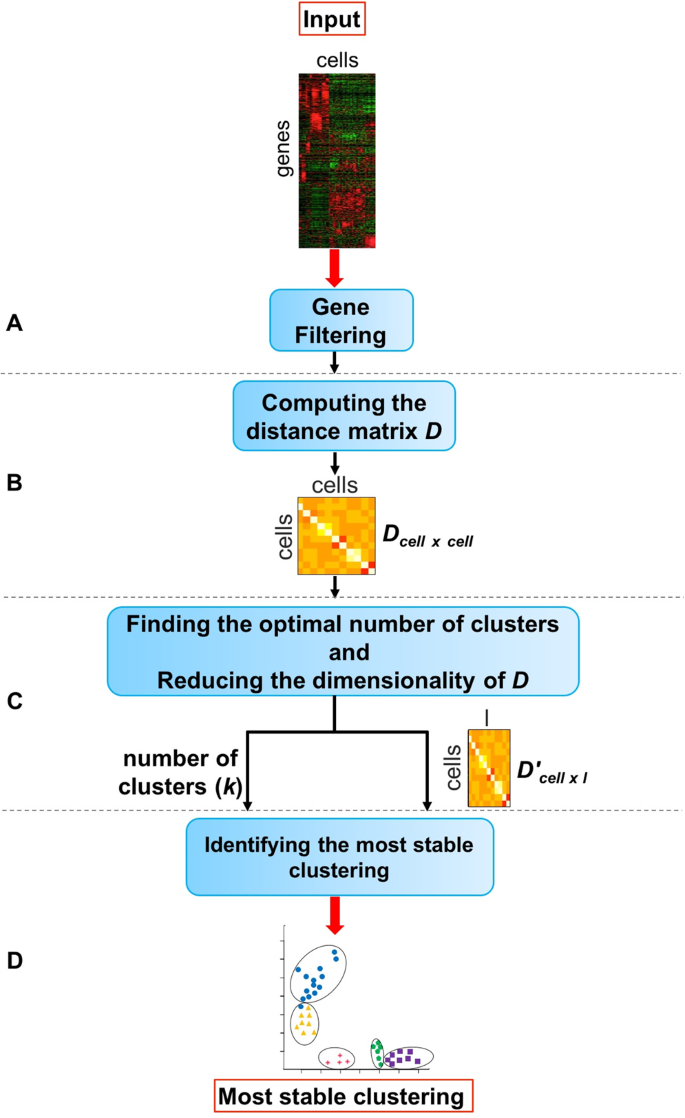

Ranking of features is done according to their importance on clustering.

An entropy-based ranking measure is introduced.

We then select a subset of features using a criterion function for clustering that is invariant with respect to different numbers of features.
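The snippet does not give the entropy measure itself, so the following is only a sketch of one distance-based formulation of the idea. It assumes that pairwise similarity is `exp(-alpha * distance)`, that `alpha` is chosen so similarity equals 0.5 at the mean distance, and that features are already on comparable scales. A feature is ranked by the entropy of the data once that feature is removed: if removing it leaves the data more disordered (higher entropy), the feature is treated as more important. The names `entropy` and `rank_features` are hypothetical.

```python
import math
from itertools import combinations


def entropy(points):
    """Distance-based entropy: low when pairs are clearly close or clearly far."""
    dists = [math.dist(p, q) for p, q in combinations(points, 2)]
    mean_dist = sum(dists) / len(dists)
    if mean_dist == 0:
        return 0.0
    alpha = math.log(2) / mean_dist  # assumption: similarity is 0.5 at the mean distance
    total = 0.0
    for d in dists:
        s = math.exp(-alpha * d)
        for p in (s, 1.0 - s):
            if p > 0.0:  # treat 0 * log(0) as 0
                total -= p * math.log(p)
    return total


def rank_features(points):
    """Return feature indices, most important first (assumed ranking rule)."""
    scores = {}
    for f in range(len(points[0])):
        reduced = [tuple(v for i, v in enumerate(p) if i != f) for p in points]
        scores[f] = entropy(reduced)  # entropy of the data without feature f
    return sorted(scores, key=scores.get, reverse=True)
```

On a dataset where one column splits the points into two tight groups and another is spread evenly, removing the first column leaves only the evenly spread one, which yields the higher entropy, so the first column is ranked as more important.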

What are features of a good clustering method?

The requirements of a good clustering method are: the ability to discover some or all of the hidden clusters.

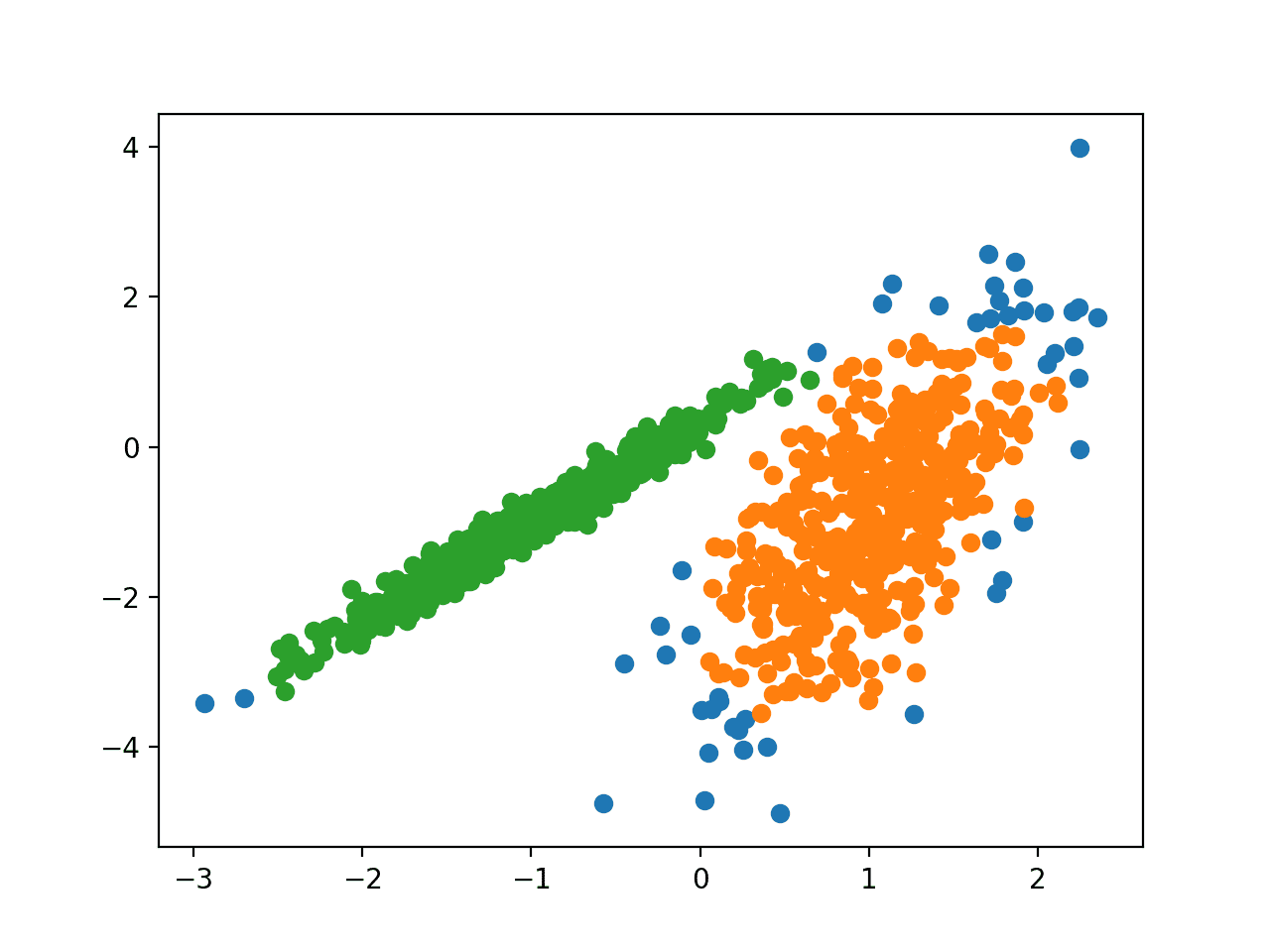

Within-cluster similarity and between-cluster dissimilarity.

Ability to deal with various types of attributes.
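Within-cluster similarity and between-cluster dissimilarity can be quantified in several ways. The sketch below uses sums of squared Euclidean distances to centroids, which is an assumption rather than something the text specifies; the function names are illustrative.

```python
def centroid(points):
    """Component-wise mean of a non-empty list of points."""
    n = len(points)
    return tuple(sum(col) / n for col in zip(*points))


def sq_dist(p, q):
    """Squared Euclidean distance between two points."""
    return sum((a - b) ** 2 for a, b in zip(p, q))


def within_between(clusters):
    """Return (within-cluster SS, between-cluster SS) for a list of clusters."""
    overall = centroid([p for c in clusters for p in c])
    within = 0.0
    between = 0.0
    for c in clusters:
        center = centroid(c)
        within += sum(sq_dist(p, center) for p in c)    # compactness
        between += len(c) * sq_dist(center, overall)    # separation
    return within, between
```

A small within-cluster value together with a large between-cluster value indicates compact, well-separated clusters.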

What is feature selection through clustering?

A framework for feature selection in clustering

1 Introduction. Let X denote an n×p data matrix, with n observations and p features.

2 An overview of sparse clustering

2.1 Past work on sparse clustering

3 Sparse K-means clustering

3.1 The sparse K-means method

4 Sparse hierarchical clustering

Feature Selection for Clustering

some are important for clusters while others may hinder the clustering task. An efficient way of handling it is by selecting a subset of important features.

Exploratory Analysis Feature Selection and Clustering

Feature Selection and Clustering. Davide Bacciu. Computational Intelligence & Machine Learning Group. Dipartimento di Informatica. Università di Pisa.

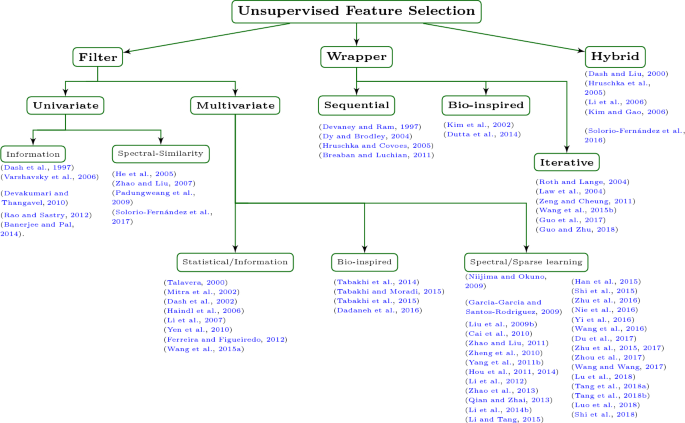

Feature Selection for Unsupervised Learning

We choose EM clustering as our clustering algorithm, but other clustering methods can also be used in this framework. Recall that to cluster data

2016 Weighted Multi-view Clustering with Feature Selection.pdf

Data clustering. Multi-view. Feature selection. Weighting. Abstract. In recent years, combining multiple sources or views of datasets for data

Unsupervised Feature Selection for the k-means Clustering Problem

It is worth noting that feature selection selects a small subset of actual features from the data and then runs the clustering algorithm only on the selected

Feature clustering and mutual information for the selection of

Spectral data often have a large number of highly-correlated features, making feature selection both necessary and uneasy. A methodology combining hierarchical

Local feature selection in text clustering

to perform local feature selection for partitional hierarchical clustering of text collections. The proposed method explores the diversity of clus-

Integrative Generalized Convex Clustering Optimization and Feature

To perform feature selection in such scenarios, we develop an adaptive shifted group-lasso penalty that selects features by shrinking them towards their loss-

Unsupervised Feature Selection for Noisy Data

21 Nov. 2019. It improves the quality of posterior analysis (i.e. clustering, classification). 4 RIFS Algorithm Description. In this section

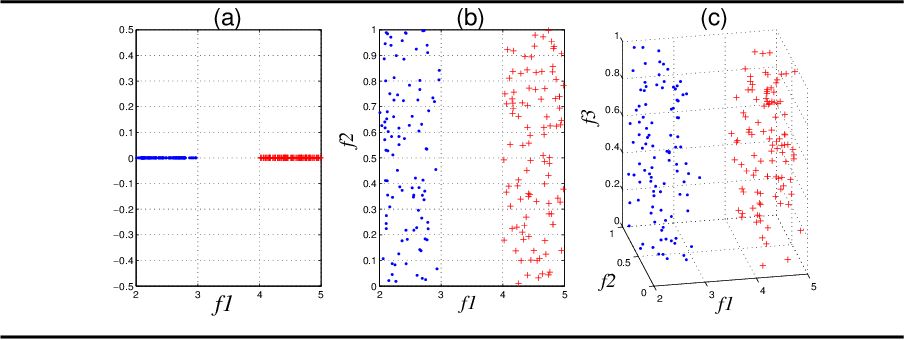

Feature Selection for Clustering – A Filter Solution

where c is the number of clusters. Below we discuss two important properties of unsupervised data that affect feature selection. Importance of Features over

Feature Selection for Clustering - public.asu.edu

An efficient way of handling it is by selecting a subset of important features. It helps in finding clusters efficiently, understanding the data better, and reducing data size for efficient storage, collection and processing. The task of finding original important features for unsupervised data is largely untouched.

Feature Selection for Unsupervised Learning - Journal of Machine

We repeat this process until we find the best feature subset with its corresponding clusters based on our feature evaluation criterion. The wrapper approach divides

Feature Selection in Clustering Problems

A novel approach to combining clustering and feature selection is pre- The task of selecting relevant features in classification problems can be viewed as one

Feature Selection for Unsupervised Learning - Journal of Machine

The basic idea is to search through feature subset space, evaluating each candidate subset, Ft, by first clustering in space Ft using the clustering algorithm and

Feature Selection with Attributes Clustering by - ScienceDirect.com

Attribute clustering methods make features cluster together rather than instances. In this case, the instance distance metric is replaced by a feature similarity measure
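A minimal sketch of clustering attributes instead of instances is shown below. The snippet does not say which feature similarity measure is used, so absolute Pearson correlation is an assumption here, as are the greedy grouping rule, the 0.9 threshold, and the names `pearson` and `cluster_features`.

```python
import math


def pearson(x, y):
    """Pearson correlation of two equal-length sequences (0.0 if either is constant)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy) if sx > 0 and sy > 0 else 0.0


def cluster_features(rows, threshold=0.9):
    """Greedily group columns whose |correlation| with a group's first column
    is at least `threshold`; returns a list of groups of column indices."""
    columns = list(zip(*rows))
    groups = []
    for f, col in enumerate(columns):
        for group in groups:
            if abs(pearson(col, columns[group[0]])) >= threshold:
                group.append(f)
                break
        else:
            groups.append([f])  # no similar group found: start a new one
    return groups
```

Keeping one representative per group, for example `[g[0] for g in cluster_features(rows)]`, gives a reduced feature set in which highly redundant columns appear only once.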


Local feature selection in text clustering - CIn UFPE

At each division step, a feature selection criterion is applied to choose the features which are more relevant only considering the cluster being divided. The number

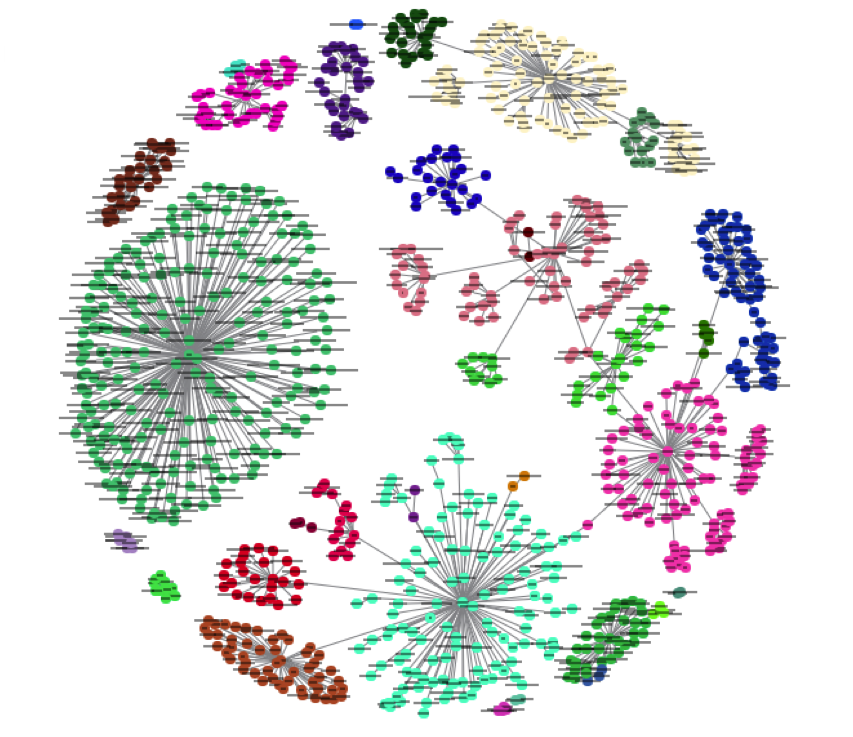

Localized feature selection for clustering - Jing Hua - Wayne State

In clustering, global feature selection algorithms attempt to select a common feature subset that is relevant to all clusters. Consequently, they are not able to