Euclidean vs Manhattan distance in KNN

Which distance is best for KNN?

Overall, we can observe that the chi-square distance function is the best choice for categorical, numerical, and mixed-type datasets, whereas k-NN with the cosine and Euclidean (and Minkowski) distance functions performs worst on mixed-type datasets.

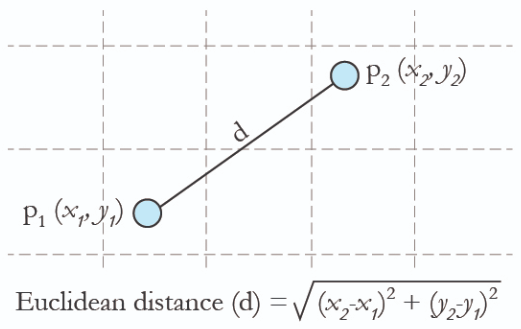

One of the biggest advantages of Euclidean distance is its simplicity.

The calculation is straightforward and intuitive, representing the most direct path between two points.

This also makes it easy to interpret and understand.

Euclidean distance can be applied across different dimensions - be it two, three, or more.
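As a minimal sketch of that point, the same formula (the square root of the summed squared coordinate differences) works for any number of dimensions. The function name `euclidean_distance` is chosen here for illustration and does not come from the source.

```python
import math


def euclidean_distance(a, b):
    """Straight-line distance between two points of equal dimension."""
    if len(a) != len(b):
        raise ValueError("points must have the same number of dimensions")
    # Sum the squared per-coordinate differences, then take the square root.
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


print(euclidean_distance((0, 0), (3, 4)))              # 2-D: 5.0
print(euclidean_distance((1, 2, 3), (1, 2, 3)))        # 3-D, same point: 0.0
print(euclidean_distance((0, 0, 0, 0), (1, 1, 1, 1)))  # 4-D: 2.0
```

Nothing in the function depends on the dimension count; only the length of the input tuples changes.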

When to use Manhattan distance and when to use Euclidean distance?

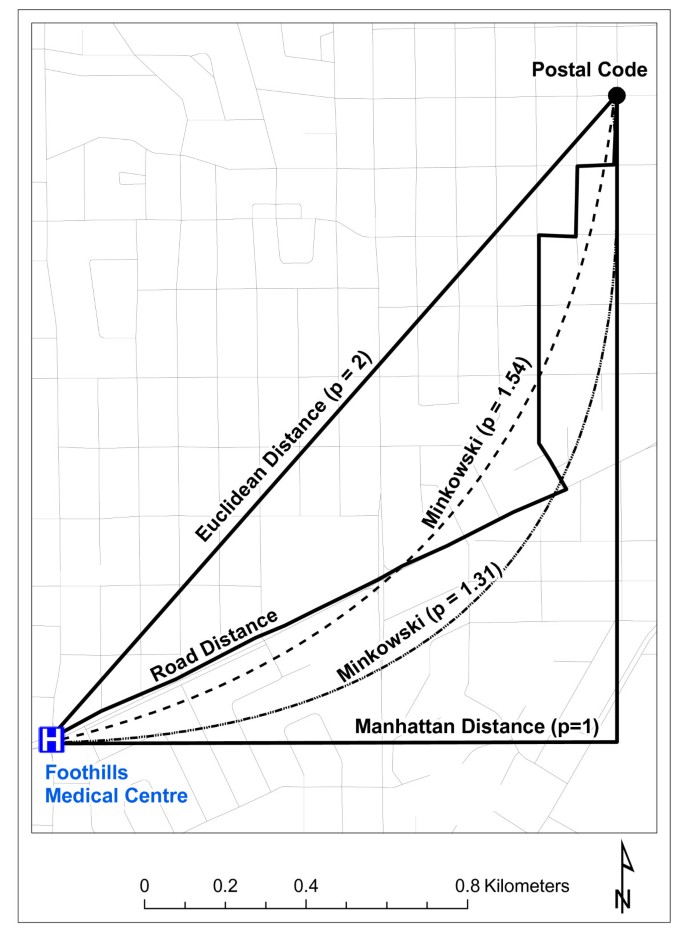

Manhattan distance is usually preferred over the more common Euclidean distance when there is high dimensionality in the data.

Hamming distance is used to measure the distance between categorical variables, and the cosine distance metric is mainly used to measure the similarity between two data points. (Nov 10, 2019)
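The two metrics just mentioned can be sketched in a few lines. This is an illustrative implementation under common textbook definitions (Hamming as a count of mismatched positions, cosine distance as one minus cosine similarity); the function names and sample records are assumptions, not taken from the source.

```python
import math


def hamming_distance(a, b):
    """Count the positions at which two equal-length records differ."""
    if len(a) != len(b):
        raise ValueError("records must have the same number of features")
    return sum(x != y for x, y in zip(a, b))


def cosine_distance(a, b):
    """1 - cosine similarity: near 0 for same direction, 1 for orthogonal."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("cosine distance is undefined for a zero vector")
    return 1.0 - dot / (norm_a * norm_b)


# Categorical records: only the second feature differs.
print(hamming_distance(("red", "small", "round"), ("red", "large", "round")))

# Numeric vectors: orthogonal directions, then parallel directions.
print(cosine_distance((1, 0), (0, 1)))
print(cosine_distance((1, 2), (2, 4)))
```

Note that cosine distance ignores vector length: `(1, 2)` and `(2, 4)` point the same way, so their distance is approximately zero even though their Euclidean distance is not.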


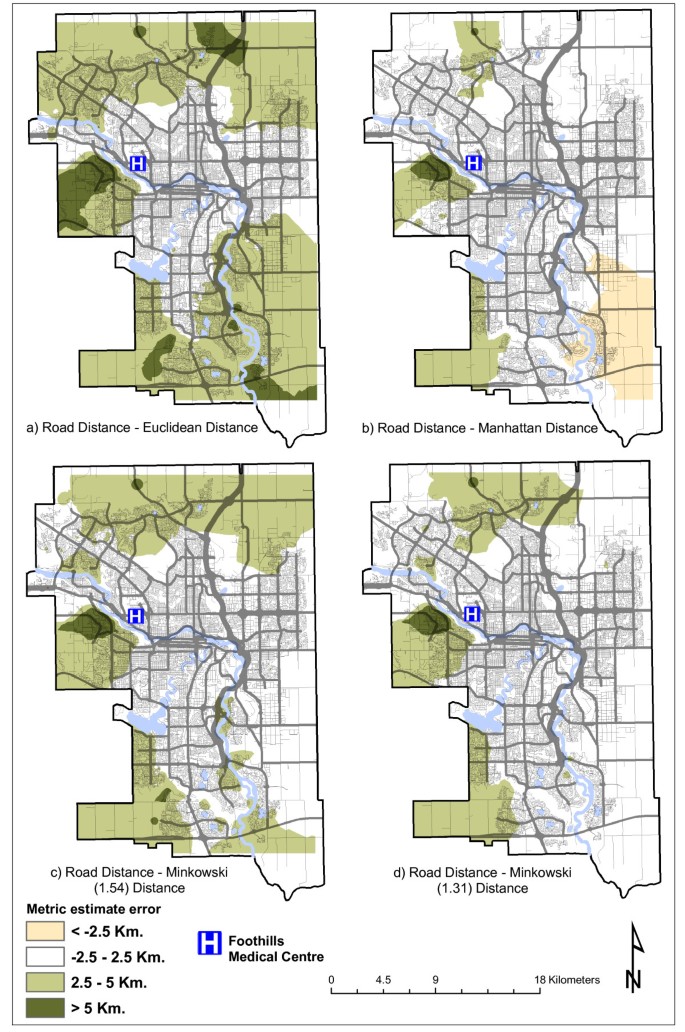

Comparison of A* Euclidean and Manhattan distance using

In the above figure, the green line represents Euclidean distance, whereas the red, blue, and yellow lines represent Manhattan distances. A* is a computer


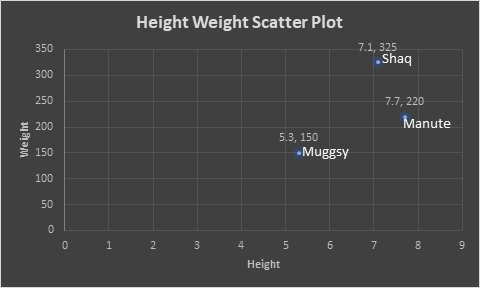

Project 2: KNN with Different Distance Metrics

Manhattan distance is the distance between two points measured along the axes. Euclidean distance between points X and Y is the length of the straight line segment connecting them.
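A small sketch of the contrast, assuming two sample points chosen for illustration: the Manhattan distance adds up the per-axis differences, while the straight-line length comes from the standard library's `math.dist`. The helper name `manhattan_distance` is hypothetical.

```python
import math


def manhattan_distance(a, b):
    """Sum of absolute per-axis differences (distance measured along axes)."""
    if len(a) != len(b):
        raise ValueError("points must have the same number of dimensions")
    return sum(abs(x - y) for x, y in zip(a, b))


x, y = (1, 2), (4, 6)
print(manhattan_distance(x, y))  # 3 along one axis + 4 along the other = 7
print(math.dist(x, y))           # straight-line length of the segment
```

For any pair of points the Manhattan distance is at least as large as the Euclidean distance, because the axis-aligned route can never be shorter than the direct segment.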


Analysis of Euclidean Distance and Manhattan Distance in the K

In addition to the K value, the distance matrix is an important factor that depends on the KNN algorithm data set. The resulting distance matrix value will


Performance Analysis of Distance Measures in K-Nearest Neighbor

Manhattan Distance and Euclidean Distance [3]. Alamri et al. have also done an experimental study about satellite classification using distance matrices by


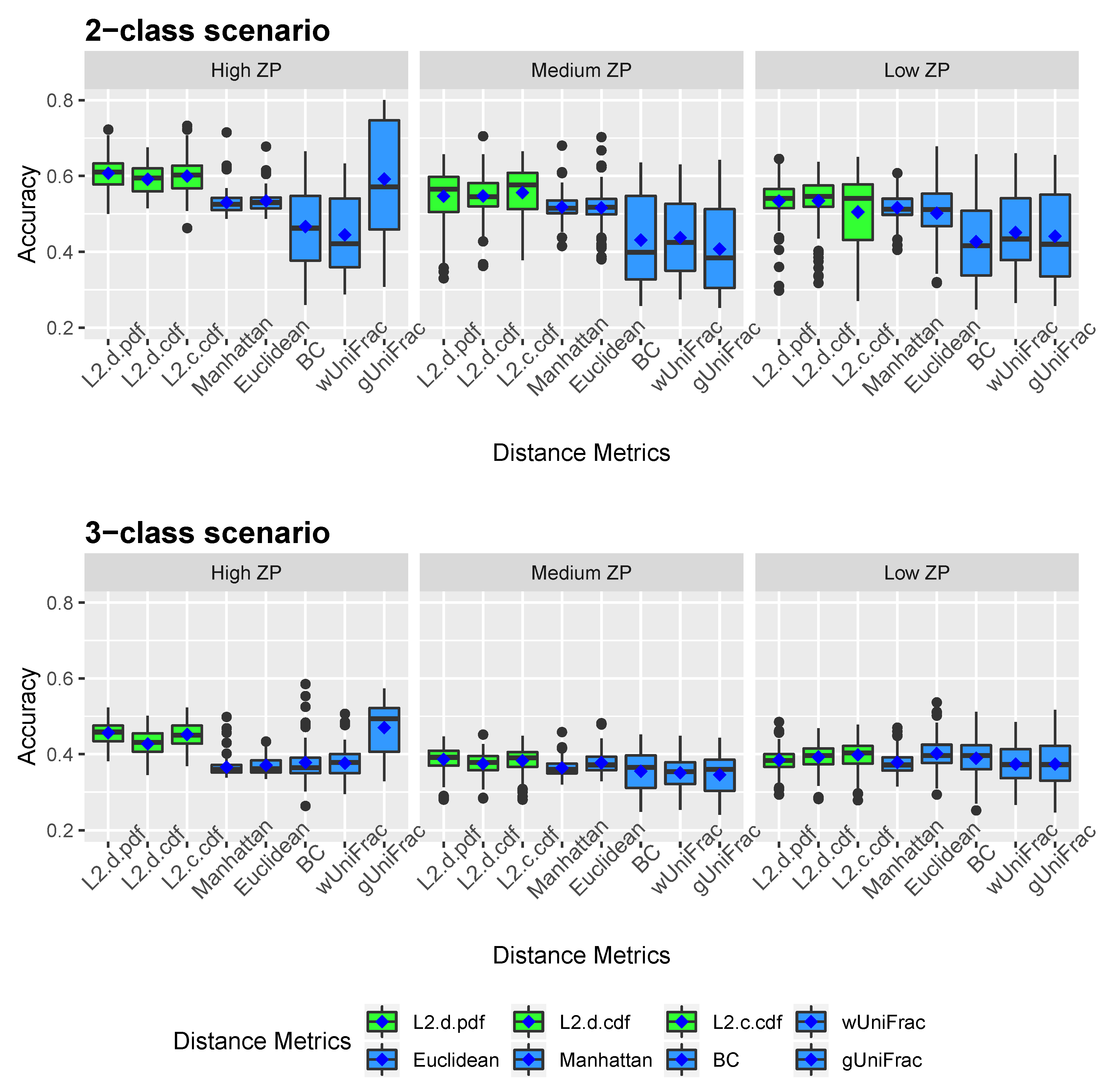

Effects of Distance Measure Choice on KNN Classifier Performance

These include: Euclidean, Mahalanobis


Comparison of Distance Models on K-Nearest Neighbor Algorithm in

research the author compared the Euclidean


K-Nearest Neighbors(KNN) Classification with Different Distance

May 11, 2020 · It can be seen that Manhattan distance


Effect of Dynamic Time Warping using different Distance Measures

Euclidean Distance, Manhattan Distance


A KNN Model Based on Manhattan Distance to Identify the SNARE

Jun 29, 2020 · 188D


Comparative analysis of performance K-nearest neighbor and

Keywords: Global encoding, k-nearest neighbor


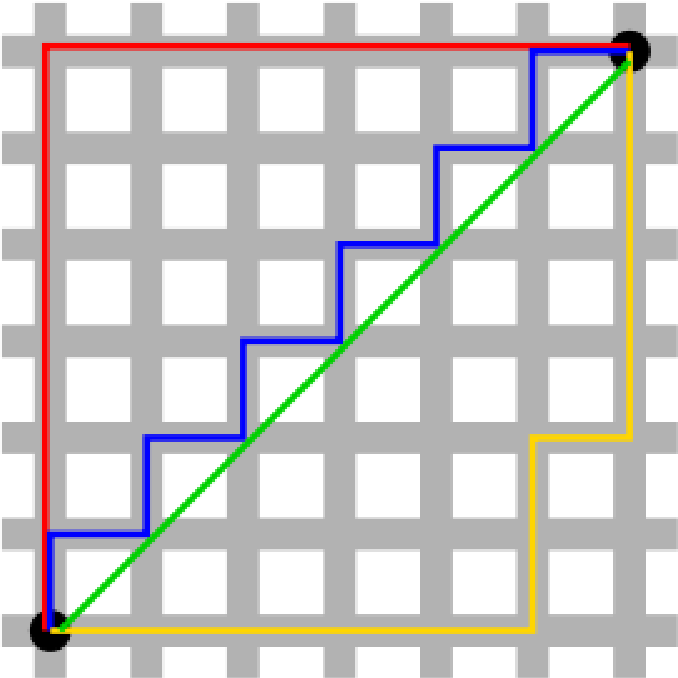

Distances in classification

The Euclidean distance or Euclidean metric is the "ordinary" (i.e. straight-line) distance. The Manhattan distance, also known as rectilinear distance, city block


Comparison of A*, Euclidean and Manhattan distance using - DiVA

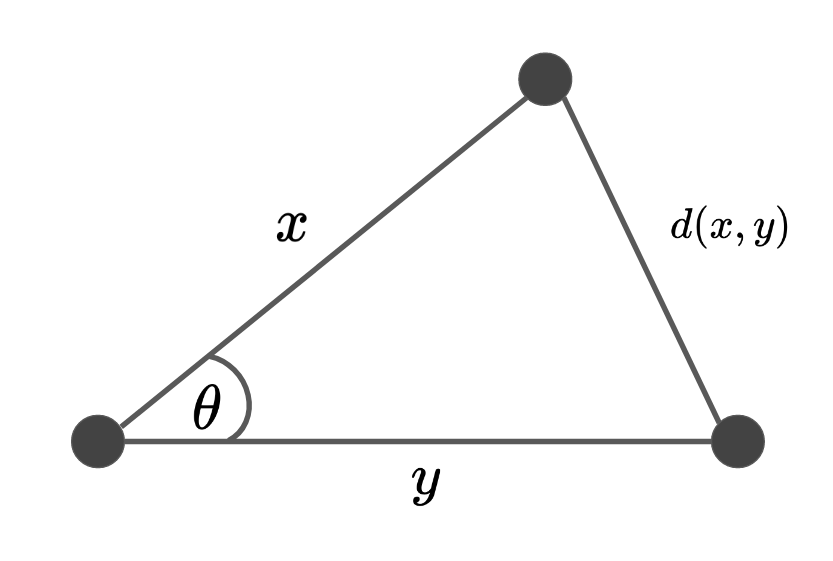

Euclidean distance is the shortest path between source and destination, which is a straight line as shown in Figure 1.3, but Manhattan distance is the sum of all the real distances between source (s) and destination (d), and each of those distances is always a straight line, as shown in Figure 1.4.


K-Nearest Neighbors(KNN) Classification with Different Distance

May 11, 2020 · or the vector form features are extracted to represent the original data. It can be seen that Manhattan distance, Euclidean distance and


L02 - STAT 479: Machine Learning Lecture Notes

2.4 k-Nearest Neighbor Classification and Regression. A generalization of the Euclidean or Manhattan distance is the so-called Minkowski distance, d(x[a]
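The lecture notes' own formula is cut off above, so the following is only a sketch of the standard Minkowski definition, the p-th root of the summed p-th powers of the absolute coordinate differences. The names `minkowski_distance`, `a`, `b`, and `p` are illustrative and not the notes' notation.

```python
def minkowski_distance(a, b, p=2):
    """Minkowski distance of order p (p >= 1) between two points."""
    if len(a) != len(b):
        raise ValueError("points must have the same number of dimensions")
    if p < 1:
        raise ValueError("p must be at least 1 for a valid distance metric")
    # p-th root of the sum of p-th powers of absolute differences.
    return sum(abs(x - y) ** p for x, y in zip(a, b)) ** (1.0 / p)


a, b = (1, 2), (4, 6)
print(minkowski_distance(a, b, p=1))  # p = 1 reduces to Manhattan distance
print(minkowski_distance(a, b, p=2))  # p = 2 reduces to Euclidean distance
```

Setting `p=1` recovers the Manhattan distance and `p=2` the Euclidean distance, which is the sense in which Minkowski generalizes both.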


K-Nearest Neighbour (Continued)

What is k-nearest-neighbor classification • How can we determine • Hamming distance (or L0 norm): count the number of features for which two instances differ • Manhattan distance: where x • Suppose that Euclidean distance is used
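To make the role of the distance function concrete, here is a minimal k-nearest-neighbor classifier sketch with a pluggable metric. It is an illustration under simple assumptions (majority vote, brute-force search, made-up training points), not the method of any of the sources listed here; all names are hypothetical.

```python
import math
from collections import Counter


def euclidean(a, b):
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def manhattan(a, b):
    return sum(abs(x - y) for x, y in zip(a, b))


def knn_predict(train, query, k=3, metric=euclidean):
    """Predict a label by majority vote among the k nearest training points.

    train is a list of (point, label) pairs; metric is any distance function.
    """
    neighbors = sorted(train, key=lambda item: metric(item[0], query))[:k]
    votes = Counter(label for _, label in neighbors)
    return votes.most_common(1)[0][0]


# Illustrative data: two training points whose ranking depends on the metric.
train = [((3, 3), "x"), ((0, 5), "y")]
query = (0, 0)
print(knn_predict(train, query, k=1, metric=euclidean))  # nearest is (3, 3)
print(knn_predict(train, query, k=1, metric=manhattan))  # nearest is (0, 5)
```

With the Euclidean metric, `(3, 3)` is closer to the origin (about 4.24 versus 5), while with the Manhattan metric `(0, 5)` is closer (5 versus 6), so the predicted label changes with the metric alone.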


Comprehensive Analysis of Distance and Similarity - CORE

Nov 23, 2015 · ments for the k-NN algorithm and Wi-Fi fingerprinting known measures such as the Euclidean distance or the Manhattan distance (City Block