k means gradient descent

61 Clustering formalisms

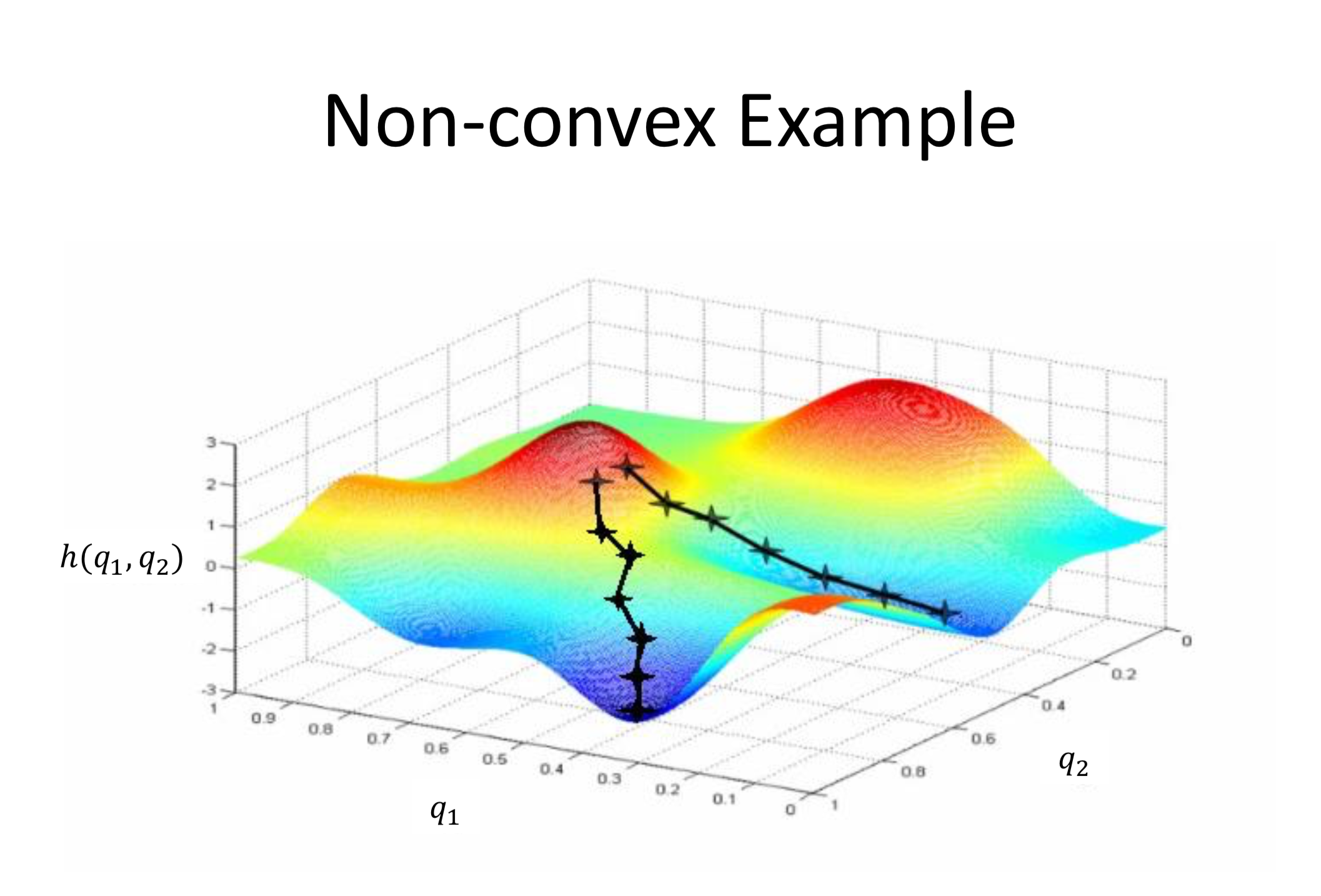

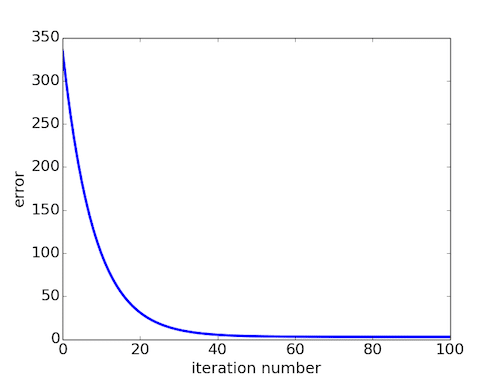

The standard k-means algorithm, as well as the variant that uses gradient descent, is only guaranteed to converge to a local minimum, not necessarily the ...

Convergence Properties of the K-Means Algorithms

The K-Means algorithm can be described either as a gradient descent algorithm or by slightly extending the mathematics of the EM algorithm to this hard ...

|

Convergence Properties of the K-Means Algorithms

The K-Means algorithm can be de- scribed either as a gradient descent algorithm or by slightly extend- ing the mathematics of the EM algorithm to this hard |

|

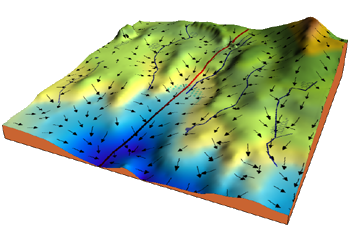

Clustering with Gradient Descent

Clustering with Gradient Descent, compiled by Alvin Wan from Professor ... We also explored k-means clustering. Again, our objective is: minimize over µ1, ..., µT: ∑_i ...

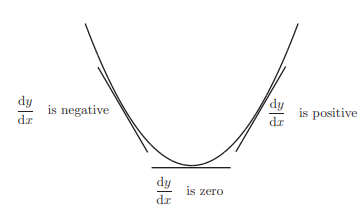

Stochastic gradient descent updates the model's parameters using the gradient of one training example at a time.

It randomly selects a training example, computes the gradient of the cost function for that example, and updates the parameters in the direction opposite to that gradient.
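
The update loop described above can be sketched in a few lines. This is a minimal illustration, assuming a one-parameter least-squares model y ≈ w·x; the function name `sgd_fit`, the learning rate `lr`, and the toy data are assumptions made for this example and do not come from any of the quoted sources.

```python
import random

def sgd_fit(xs, ys, lr=0.05, steps=2000, seed=0):
    """Fit y ~ w * x by stochastic gradient descent on squared error."""
    rng = random.Random(seed)
    w = 0.0
    for _ in range(steps):
        i = rng.randrange(len(xs))          # pick one training example at random
        grad = (w * xs[i] - ys[i]) * xs[i]  # gradient of 0.5 * (w*x - y)^2 w.r.t. w
        w -= lr * grad                      # step against the gradient
    return w

# Toy data (assumed): noiseless targets generated with w = 3.
xs = [0.5, 1.0, 1.5, 2.0]
ys = [3.0 * x for x in xs]
w_hat = sgd_fit(xs, ys)
```

Because the toy data is noiseless, every single-example step shrinks the error in `w`, so `w_hat` ends up very close to 3. With noisy data the iterates would instead fluctuate around the minimizer unless the learning rate is decayed.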

Is K-means coordinate descent?

The answer is yes.

We have shown that k-means algorithm is exactly coordinate descent on L.

Remember that coordinate descent is to minimize the cost function with respect to one of the variables while holding the others static.

Every update to c and μ decreases the loss function relative to its previous value, or at worst leaves it unchanged.
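
A small sketch can make the coordinate-descent view concrete. It assumes L is the sum of squared distances from each point to its assigned center, with `assign` playing the role of c and `centers` the role of μ; the function names and the toy data are illustrative assumptions.

```python
import random

def sq_dist(a, b):
    return sum((ai - bi) ** 2 for ai, bi in zip(a, b))

def loss(points, centers, assign):
    # L(c, mu): sum of squared distances to the assigned centers.
    return sum(sq_dist(p, centers[c]) for p, c in zip(points, assign))

def kmeans_coordinate_descent(points, k, iters=10, seed=0):
    rng = random.Random(seed)
    centers = [list(p) for p in rng.sample(points, k)]
    assign = [0] * len(points)
    history = []  # loss recorded after every half-step
    for _ in range(iters):
        # Step 1: minimize L over c with mu held fixed (nearest center).
        assign = [min(range(k), key=lambda j: sq_dist(p, centers[j]))
                  for p in points]
        history.append(loss(points, centers, assign))
        # Step 2: minimize L over mu with c held fixed (cluster means).
        for j in range(k):
            members = [p for p, c in zip(points, assign) if c == j]
            if members:  # keep the old center if a cluster is empty
                centers[j] = [sum(col) / len(members) for col in zip(*members)]
        history.append(loss(points, centers, assign))
    return centers, assign, history

points = [(0.0, 0.0), (0.0, 1.0), (1.0, 0.0),
          (10.0, 10.0), (10.0, 11.0), (11.0, 10.0)]
centers, assign, history = kmeans_coordinate_descent(points, k=2)
```

The `history` list never increases from one entry to the next, which is the monotonicity property stated above. It does not imply that the final value is the global minimum.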

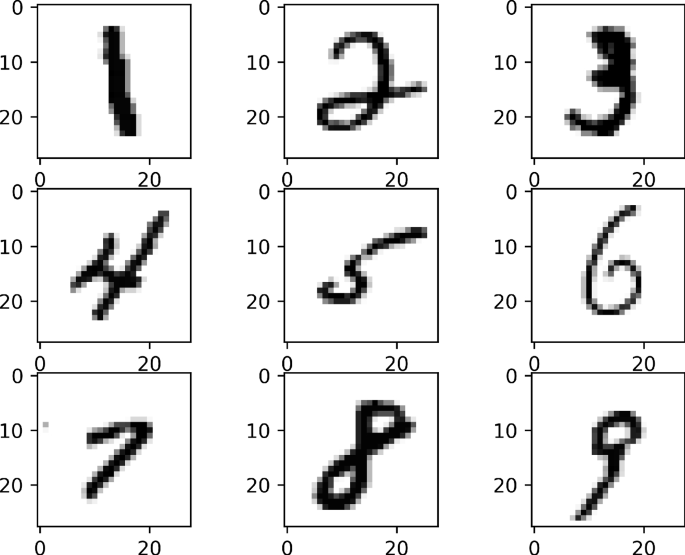

How does K-means work?

K-Means is an unsupervised machine learning algorithm that assigns data points to one of the K clusters.

Unsupervised means that the data doesn't have group labels, as it would in a supervised problem.

What is the K-means algorithm in gradient descent?

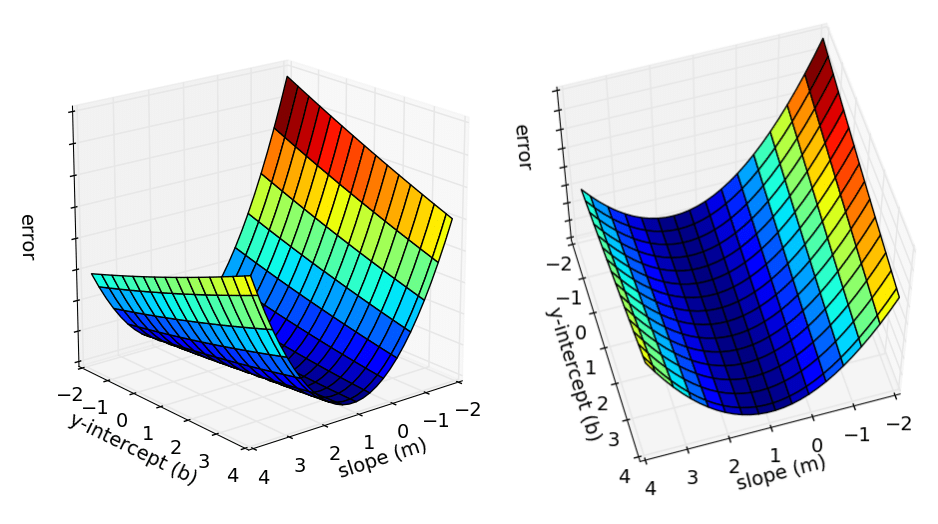

2 K-MEANS AS A GRADIENT DESCENT.

Given a set of P examples (x_i), the K-Means algorithm computes k prototypes w = (w_k) which minimize the quantization error, i.e., the average distance between each pattern and the closest prototype: E(w) ...
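
The excerpt is cut off before the formula for E(w). The sketch below assumes the common form E(w) = Σ_i ½ min_k ‖x_i − w_k‖², whose gradient with respect to w_k is the sum of (w_k − x_i) over the examples currently closest to w_k. The function names, the learning rate, and the toy data are illustrative assumptions.

```python
def nearest(x, protos):
    # Index of the prototype closest to x.
    return min(range(len(protos)),
               key=lambda k: sum((a - b) ** 2 for a, b in zip(x, protos[k])))

def quantization_error(xs, protos):
    # Assumed form: sum over examples of 0.5 * squared distance to nearest prototype.
    return sum(0.5 * sum((a - b) ** 2
                         for a, b in zip(x, protos[nearest(x, protos)]))
               for x in xs)

def gradient_step(xs, protos, lr):
    # Accumulate dE/dw_k = sum of (w_k - x_i) over examples assigned to k.
    grads = [[0.0] * len(protos[0]) for _ in protos]
    for x in xs:
        k = nearest(x, protos)
        for d in range(len(x)):
            grads[k][d] += protos[k][d] - x[d]
    return [[w - lr * g for w, g in zip(p, gr)] for p, gr in zip(protos, grads)]

xs = [(0.0, 0.0), (0.0, 2.0), (8.0, 8.0), (8.0, 10.0)]
protos = [[1.0, 1.0], [6.0, 6.0]]
errors = [quantization_error(xs, protos)]
for _ in range(50):
    protos = gradient_step(xs, protos, lr=0.1)
    errors.append(quantization_error(xs, protos))
```

On this toy data the prototypes drift toward the two cluster means, (0, 1) and (8, 9). If the learning rate for prototype k is set to one over the number of examples assigned to it, a single step lands exactly on the cluster mean, which recovers the usual batch k-means update.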

Stochastic Backward Euler: An Implicit Gradient Descent Algorithm

21 May 2018 · In this paper we propose a backward Euler based algorithm for k-means clustering. Fixed-point iteration is performed to solve the implicit ...

15 Clustering

It includes discrete variables (the labels L) and so gradient-based methods aren't ... K-medoids clustering can also be improved by coordinate descent.

Unsupervised Visual Representation Learning by Online

Based on this, we propose a novel clustering-based pretext task with online Constrained K-means (CoKe). Compared with the balanced clustering that each ...

|

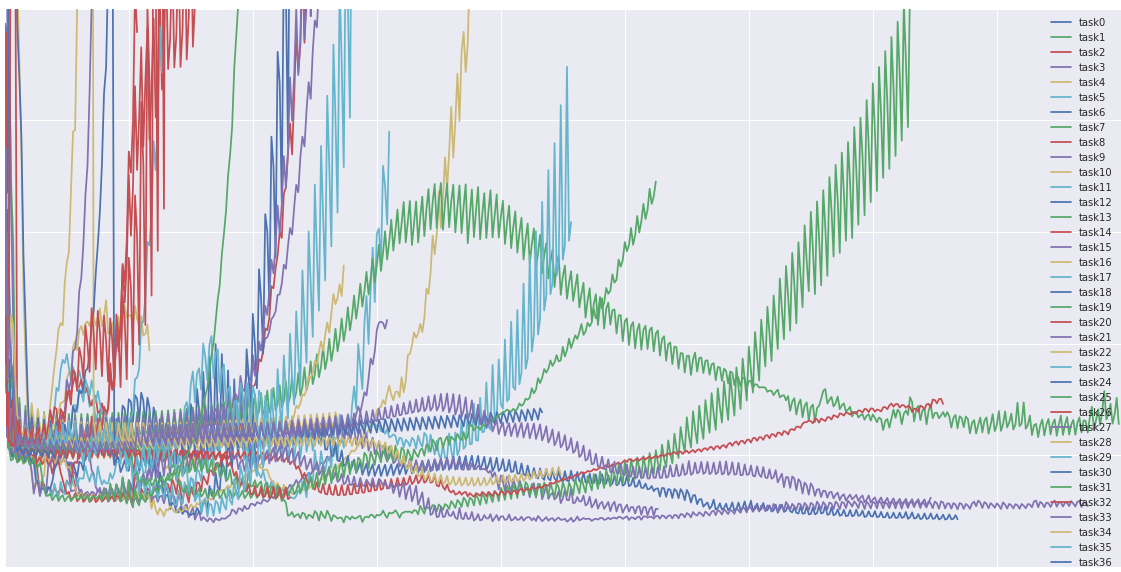

Convergence of online k-means

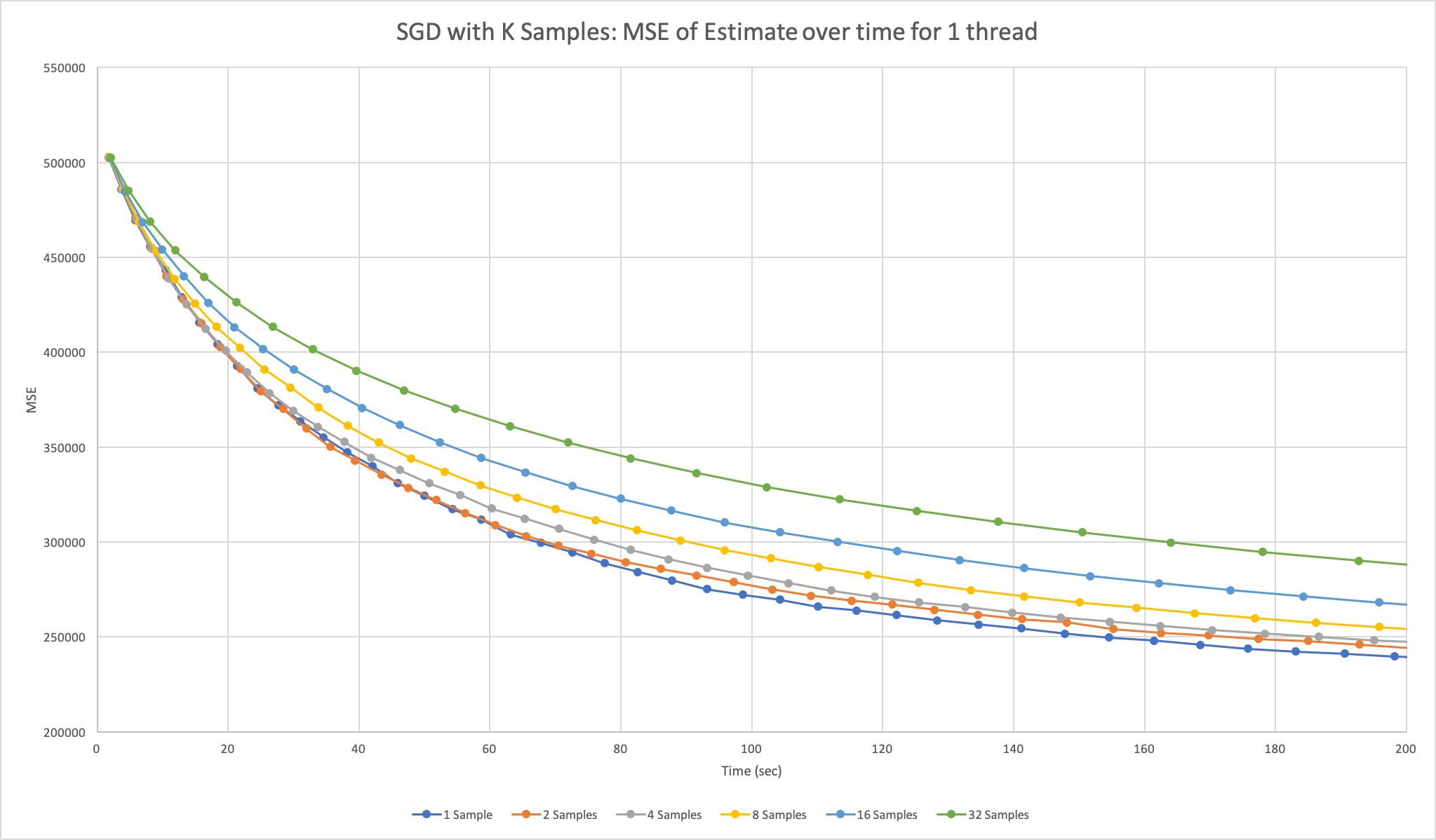

22 Feb 2022 · To do so, we show that online k-means over a distribution can be interpreted as stochastic gradient descent with a stochastic learning rate ...
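
One way to see the "stochastic learning rate" is a sketch of online k-means in which each center moves toward the incoming point with step size 1/n_k, where n_k counts how many points that center has received so far. The 1/n_k schedule is an assumption (the excerpt does not state the schedule), as are the function name and the toy stream.

```python
def online_kmeans(stream, init_centers):
    centers = [list(c) for c in init_centers]
    counts = [0] * len(centers)
    for x in stream:
        # Assign the incoming point to its nearest center.
        k = min(range(len(centers)),
                key=lambda j: sum((a - b) ** 2 for a, b in zip(x, centers[j])))
        counts[k] += 1
        eta = 1.0 / counts[k]  # step size depends on the random visit count
        # SGD step on 0.5 * ||x - c_k||^2: c_k <- c_k + eta * (x - c_k)
        centers[k] = [c + eta * (a - c) for a, c in zip(x, centers[k])]
    return centers, counts

stream = [(0.0,), (10.0,), (2.0,), (12.0,)]
centers, counts = online_kmeans(stream, [(0.0,), (10.0,)])
```

With this schedule each center is the running mean of the points assigned to it, so the toy stream ends with centers at 1.0 and 11.0. Because n_k depends on which points happened to arrive, the learning rate is itself random.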

Labeled Graph Clustering via Projected Gradient Descent

Labeled Graph Clustering via Projected Gradient Descent. Shiau Hong Lim (IBM Research, shonglim@sg.ibm.com), Gregory Calvez (Ecole Polytechnique).

COMS 4721: Machine Learning for Data Science 4ptLecture 14 3

21 March 2017 · However, the algorithm we will use is different from gradient methods: w ← w − η∇_w L ... Coordinate descent (in the context of K-means).

15 Clustering

Clustering a's ... One solution is to project onto the constraints during optimization: at each gradient descent step (and inside the line search loop), we clamp all ...
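
The projection idea can be sketched as follows. The excerpt is cut off before it says what is clamped, so this example assumes box constraints 0 ≤ x_i ≤ 1 and a simple quadratic objective; both are illustrative assumptions. The line search mentioned in the excerpt is omitted in favor of a fixed step size.

```python
def clamp(v, lo=0.0, hi=1.0):
    # Projection onto the box [lo, hi]^n, applied coordinate by coordinate.
    return [min(hi, max(lo, vi)) for vi in v]

def projected_gradient_descent(grad, x0, lr=0.1, steps=200):
    x = clamp(x0)
    for _ in range(steps):
        g = grad(x)
        # Ordinary gradient step, then project back onto the constraints.
        x = clamp([xi - lr * gi for xi, gi in zip(x, g)])
    return x

# Assumed objective: 0.5 * ||x - target||^2, whose unconstrained minimum
# lies partly outside the box.
target = [1.5, 0.3, -0.4]

def grad(x):
    return [xi - ti for xi, ti in zip(x, target)]

x_star = projected_gradient_descent(grad, [0.5, 0.5, 0.5])
```

Coordinates whose unconstrained optimum lies outside the box end up pinned to the nearest bound (1.0 and 0.0 here), while the interior coordinate converges to 0.3 as in plain gradient descent.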

Clustering with Gradient Descent - Alvin Wan

Clustering with Gradient Descent, compiled by Alvin Wan from Professor Benjamin Recht's lecture. 1 Performance. We explored SVD. Note that in practice, you ...

|

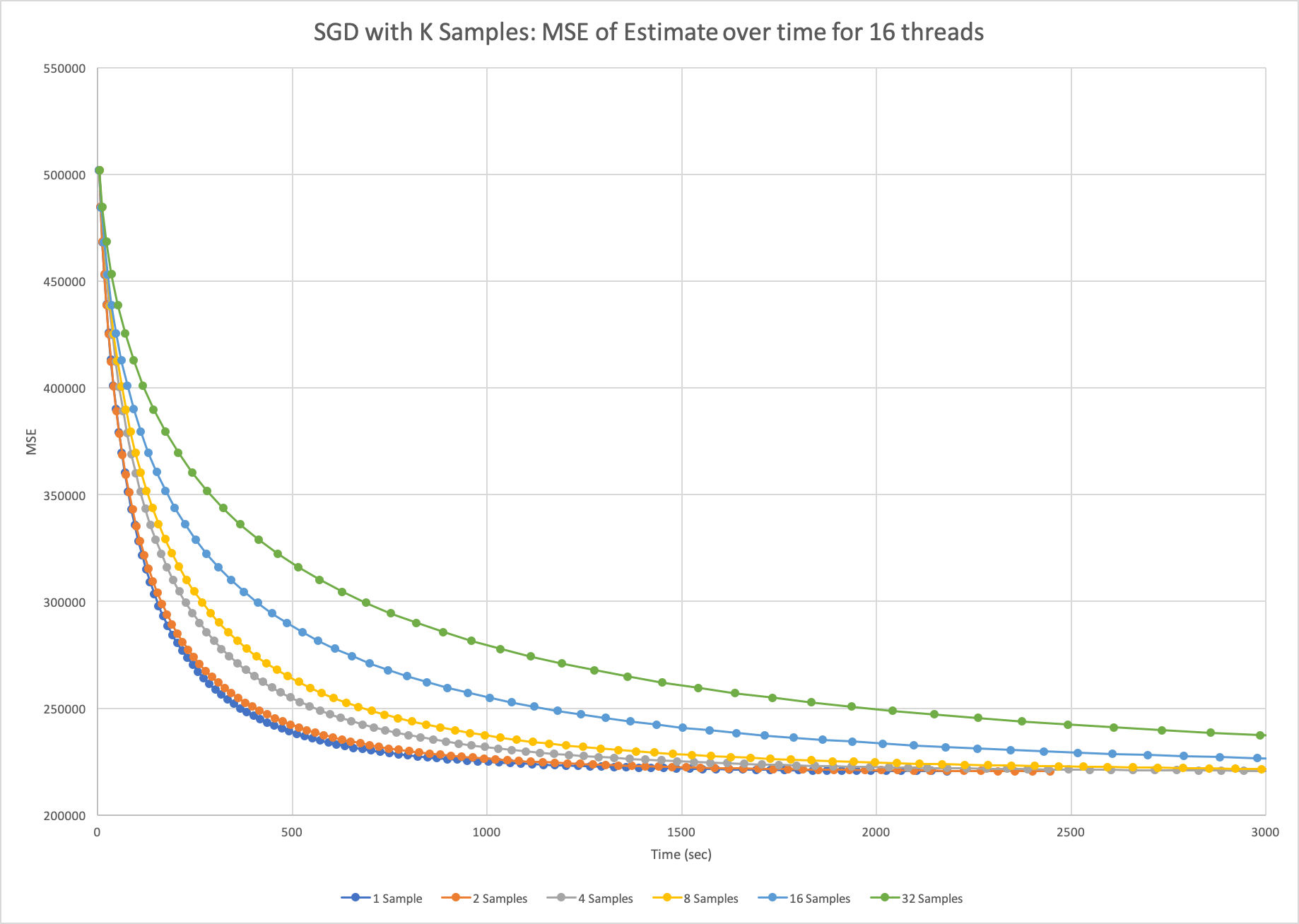

10.1 Introduction 10.2 Gradient descent 10.3 Stochastic gradient

4 May 2015 · The streaming SGD algorithm for linear models is currently available in Spark ... 10.7 K-means. We now consider the problem of clustering n ...

Lecture 4: Machine learning III Question Review Review

... that a simple algorithm, stochastic gradient descent, works quite well ... K-means is a particular method for performing clustering which is based on associating ...

COMS 4721 - Columbia University

21 Mar 2017 · Coordinate descent: We will discuss a new and widely used optimization procedure in the context of K-means clustering. We want to minimize ...

Web-Scale K-Means Clustering

26 Apr 2010 · Bottou and Bengio proposed an online, stochastic gradient descent (SGD) variant that computed a gradient descent step on one example at a ...
