model explainers

InterpretML: A toolkit for understanding machine learning models

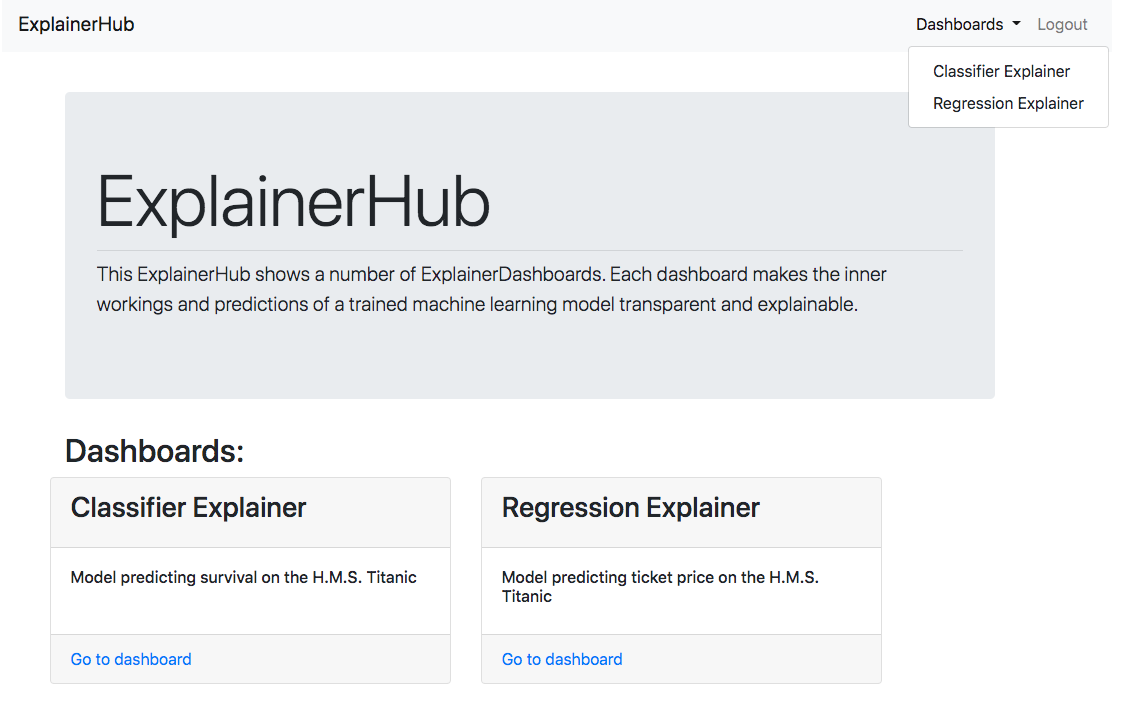

InterpretML also applies optimizations that make it practical to run on real-world datasets at scale. Its built-in interactive and exploratory visualization dashboard gives data scientists a wide range of insights into their dataset, model performance, and model explanations.

What makes a model explainable?

A model becomes explainable once you can trace its results back to the data and features behind them. Explainability is about understanding which features contribute to the model's prediction and why. By analogy, a car needs fuel to move: knowing that the fuel is what drives the engine is the kind of cause-and-effect understanding that interpretability provides.

What are the different types of model explanations?

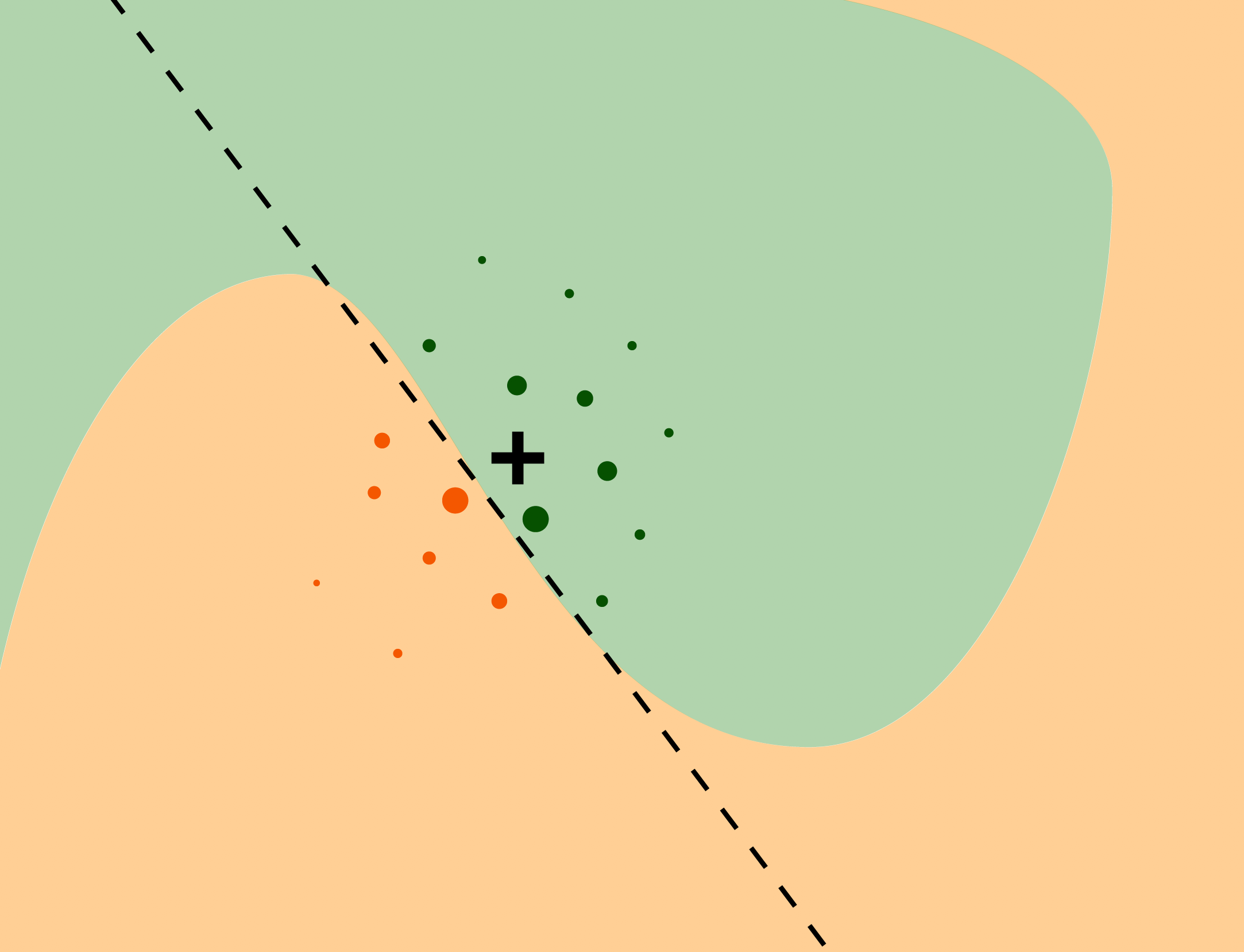

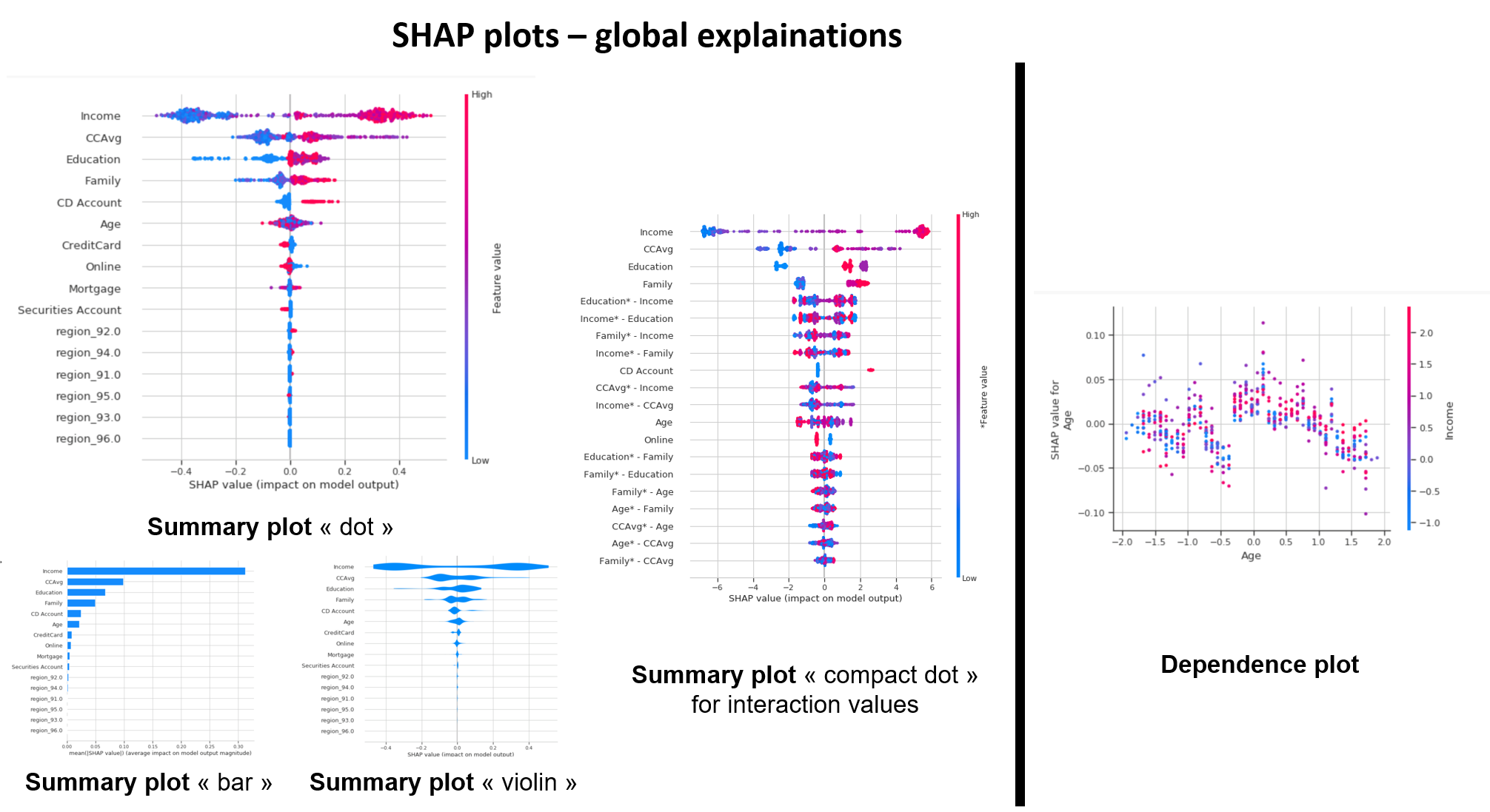

There are two types of model explanations, global and local. Global model explanations provide an overall understanding aggregated over a whole set of observations; local model explanations provide information about a prediction for a single observation. The tidymodels framework does not itself contain software for model explanations.
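The distinction between local and global explanations can be sketched with a toy linear model. This is a minimal illustration in plain Python, not the method of any particular package: the coefficients, feature names, and observations are hypothetical, and "contribution relative to the average observation" is just one simple way to attribute a prediction to its features.

```python
from statistics import mean

# Hypothetical fitted linear model: y = INTERCEPT + sum(COEFS[j] * x[j])
INTERCEPT = 1.0
COEFS = {"age": 0.5, "income": 2.0}

# Hypothetical toy observations
DATA = [
    {"age": 20.0, "income": 1.0},
    {"age": 40.0, "income": 3.0},
    {"age": 60.0, "income": 2.0},
]

def predict(row):
    """Prediction of the toy linear model for one observation."""
    return INTERCEPT + sum(COEFS[name] * row[name] for name in COEFS)

# Average value of each feature over the data set
FEATURE_MEANS = {name: mean(row[name] for row in DATA) for name in COEFS}

def local_explanation(row):
    """Per-feature contribution to ONE prediction, relative to the average observation."""
    return {name: COEFS[name] * (row[name] - FEATURE_MEANS[name]) for name in COEFS}

def global_explanation(rows):
    """Mean absolute contribution per feature, aggregated over ALL observations."""
    per_row = [local_explanation(row) for row in rows]
    return {name: mean(abs(contrib[name]) for contrib in per_row) for name in COEFS}

print(local_explanation(DATA[0]))
print(global_explanation(DATA))
```

For a single observation, the local contributions sum to the difference between that observation's prediction and the prediction for the average observation; the global summary averages the size of those contributions over the whole data set.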

How do I compute a model explanation using DALEX?

To compute any kind of model explanation, global or local, using DALEX, we first prepare the appropriate data and then create an explainer for each model.

How do you explain a linear model?

A linear model is typically straightforward to interpret and explain; you may not often find yourself using separate model explanation algorithms for a linear model. However, it can sometimes be difficult to understand or explain the predictions of even a linear model once it has splines and interaction terms!
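To see why interaction terms complicate the picture, consider a small sketch with a hypothetical model (the coefficients below are made up for illustration). Without the interaction, the effect of `x1` would be a single number; with it, the effect depends on the value of `x2`.

```python
# Hypothetical linear model with an interaction term:
#   y = 1 + 2*x1 + 3*x2 + 4*x1*x2
def predict(x1, x2):
    return 1.0 + 2.0 * x1 + 3.0 * x2 + 4.0 * x1 * x2

def effect_of_x1(x2):
    """Change in the prediction when x1 increases from 0 to 1, at a given x2."""
    return predict(1.0, x2) - predict(0.0, x2)

# The "effect of x1" is no longer one coefficient: it is 2 + 4*x2.
effects = {x2: effect_of_x1(x2) for x2 in (0.0, 1.0, 2.0)}
print(effects)
```

Here the coefficient on `x1` alone (2) only describes the model when `x2` is zero, which is why a table of coefficients stops being a complete explanation once interactions are present.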

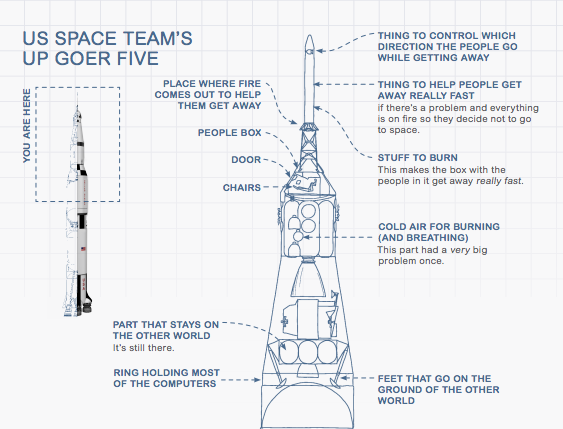

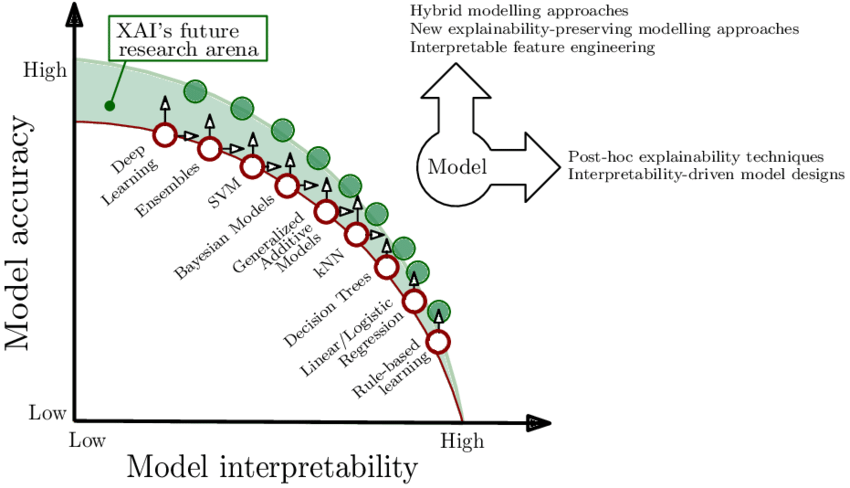

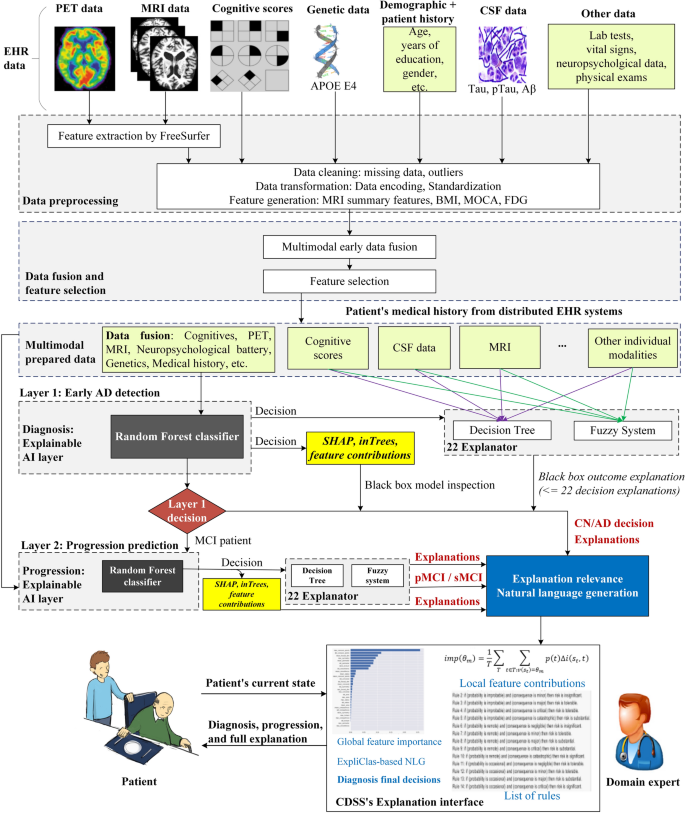

What Is Explainable AI (XAI)?

Explainable AI refers to a set of processes and methods that aim to provide a clear and human-understandable explanation for the decisions generated by AI and machine learning models. By integrating an explainability layer into these models, Data Scientists and Machine Learning practitioners can create more trustworthy and transparent systems to assis

Building Trust Through Explainable AI

Here are some explainable AI principles that can contribute to building trust:

1. Transparency. Ensuring stakeholders understand the models’ decision-making process.
2. Fairness. Ensuring that the models’ decisions are fair for everyone, including people in protected groups (race, religion, gender, disability, ethnicity).
3. Trust. Assessing the confi

Explainable AI Examples

There are two broad categories of model explainability: model-specific methods and model-agnostic methods. In this section, we look at the difference between the two, with a specific focus on the model-agnostic methods. Both techniques can offer valuable insights into the inner workings of machine learning models while ensuring that the models
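As an illustration of what "model-agnostic" means in practice, the sketch below implements a basic form of permutation importance in plain Python. It only ever calls a `predict` function, so it works for any model. The `black_box` function, the data, and the choice of mean squared error are assumptions made for this example, not something taken from the article.

```python
import random

def permutation_importance(predict, rows, targets, n_features, seed=0):
    """Increase in mean squared error when one feature column is shuffled."""
    rng = random.Random(seed)  # seeded so the sketch is reproducible

    def mse(data):
        return sum((predict(r) - t) ** 2 for r, t in zip(data, targets)) / len(data)

    baseline = mse(rows)
    importances = []
    for j in range(n_features):
        # Shuffle column j only, leaving every other feature untouched.
        column = [r[j] for r in rows]
        rng.shuffle(column)
        shuffled = [r[:j] + [v] + r[j + 1:] for r, v in zip(rows, column)]
        importances.append(mse(shuffled) - baseline)
    return importances

# Hypothetical black box: uses feature 0 and ignores feature 1.
def black_box(row):
    return 3.0 * row[0]

rows = [[float(i), float(i % 3)] for i in range(10)]
targets = [black_box(r) for r in rows]
scores = permutation_importance(black_box, rows, targets, n_features=2)
print(scores)
```

Shuffling the ignored feature leaves the error unchanged, so its score is zero, while shuffling the feature the model relies on degrades the predictions. A model-specific method would instead read this information from the model's internals, such as coefficients or tree splits.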

Challenges of XAI and Future Perspectives

As AI technology continues to advance and become more sophisticated, understanding and interpreting the algorithms to discern how they produce outcomes is becoming increasingly challenging, which leaves room for researchers to keep exploring new approaches and improving existing ones. Many explainable AI models require simplifying the underlying model, lead

Conclusion

This article has provided an overview of what explainable AI is and of some principles that help build trust and can give Data Scientists and other stakeholders the skills to build trustworthy models that support actionable decisions. We also covered model-agnostic and model-specific methods with a special focus on the

Does Dataset Complexity Matters for Model Explainers?

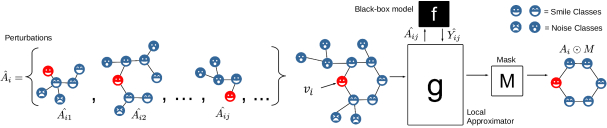

RelEx: A Model-Agnostic Relational Model Explainer

30 May 2020 Relational Models include Statistical relational learning (SRL) methods [1] ... There are mainly two groups of these iid model explainers.

Optimal Local Explainer Aggregation for Interpretable Prediction

Global explainers attempt to explain the full black box model across the entirety of the data. These models have a hard constraint to provide 100% coverage

Explainer on SIP settlement model

What is the Single Instructing Party settlement model for instant payments? May 2021. With Riksbank joining the TARGET Instant Payment

Training calibration-based counterfactual explainers for deep

counterfactual explainers for deep learning models in medical image analysis. Jayaraman J. Thiagarajan, Kowshik Thopalli

The Canna model: explainer. Assessing the impact of NHS Test and

13 Sept. 2021 The Canna model: explainer. Assessing the impact of NHS Test and Trace on COVID-19 transmission: June 2020 to April 2021.

Analysis of Explainers of Black Box Deep Neural Networks for

27 Nov. 2019 Abstract: Deep Learning is a state-of-the-art technique to make inference on extensive or complex data. As a black box model due to their ...

The Rùm Model Technical Annex - an explainer - GOV.UK

11 Feb. 2021 The Rùm Model Technical Annex - an explainer. Assessing the impact of test, trace and isolate parameters on COVID-19 transmission in an ...

Efficient Explanations from Empirical Explainers

11 Nov. 2021 expensive explainers. We train and test Empirical Explainers in the language domain and find that they model their expensive counter-

PGM-Explainer: Probabilistic Graphical Model Explanations for

PGM-Explainer: Probabilistic Graphical Model Explanations for Graph Neural Networks. Minh N. Vu, University of Florida, Gainesville, FL 32611.

Package DALEX

20 Mar 2021 · All model explainers are model agnostic and can be compared across different models. The DALEX package is the cornerstone for 'DrWhy

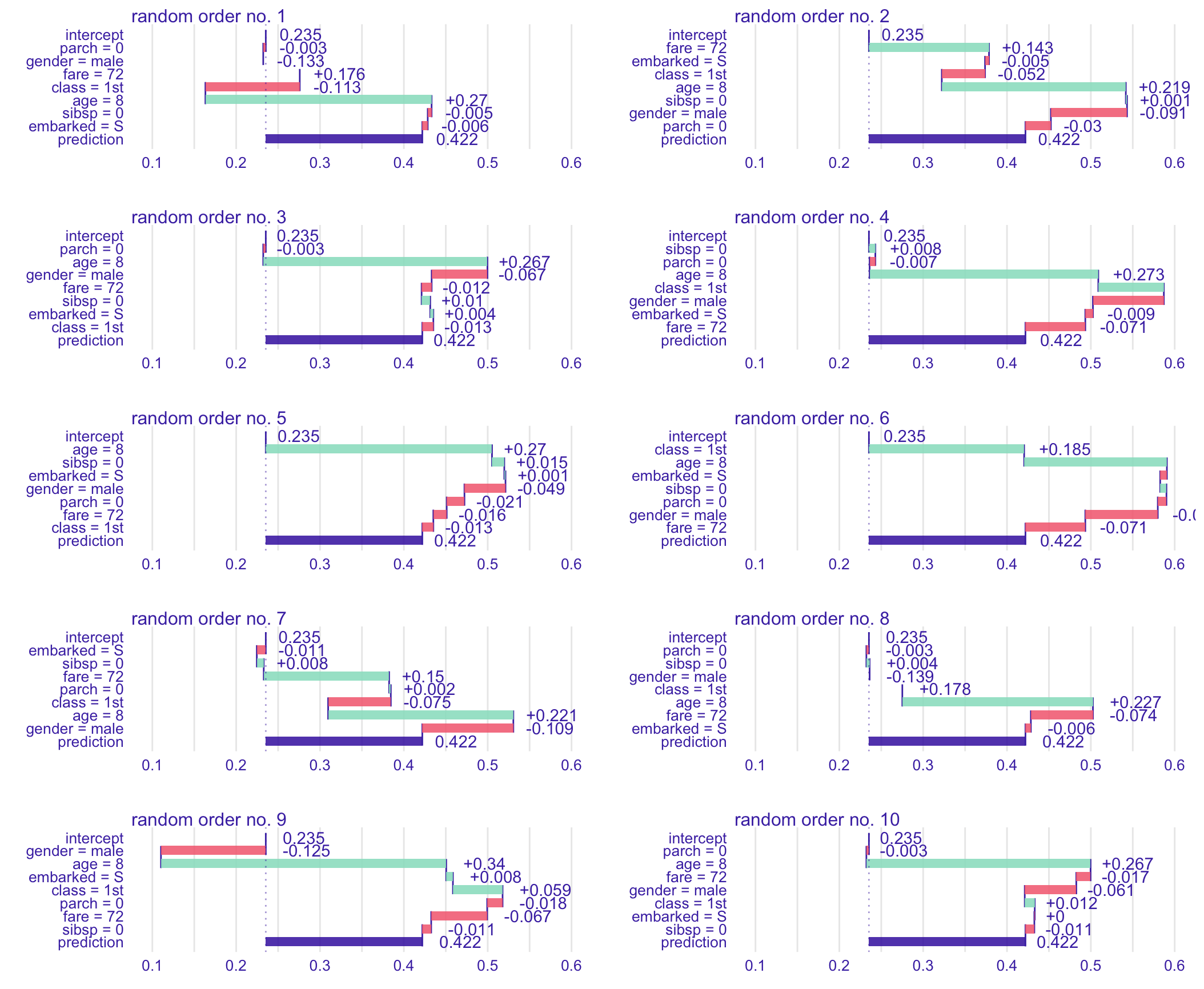

How do you explain that? - Vrije Universiteit Amsterdam

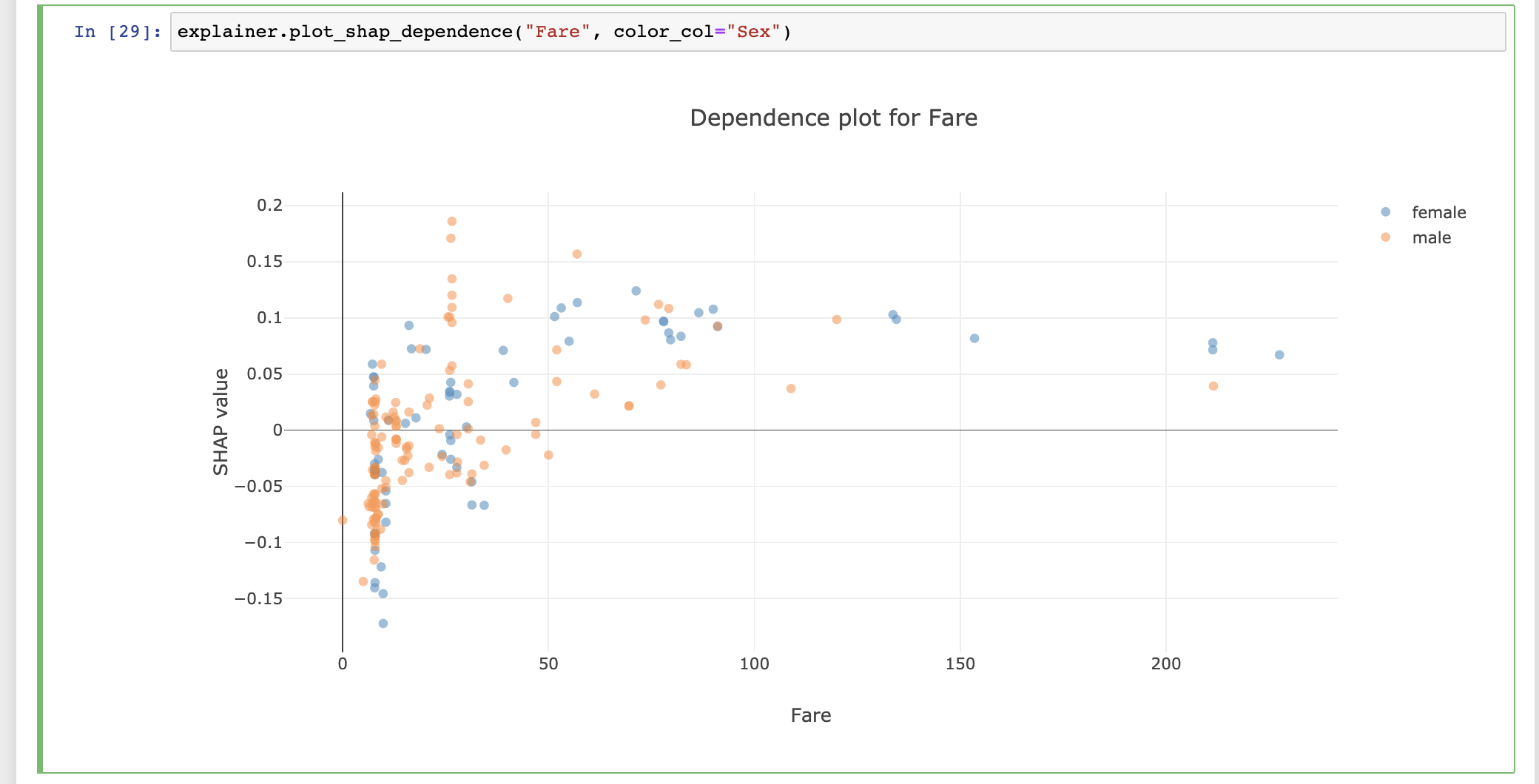

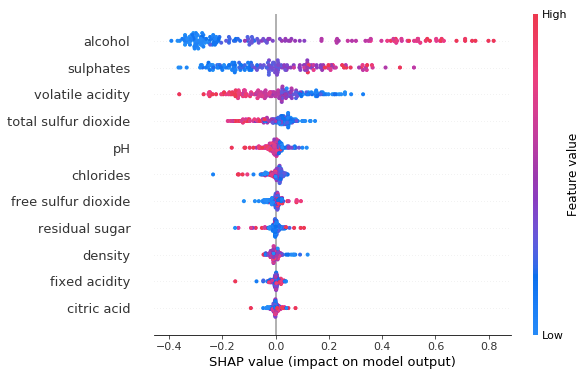

20 Feb 2020 · This study compares model-agnostic explainers Lime and Shap in two experiments with real-world and synthetically generated data. Shap is

RFEX: Simple Random Forest Model and Sample Explainer for non

16 jui 2019 · significant improvement in RFEX Model explainer compared to the version published previously, a new RFEX Sample explainer that provides

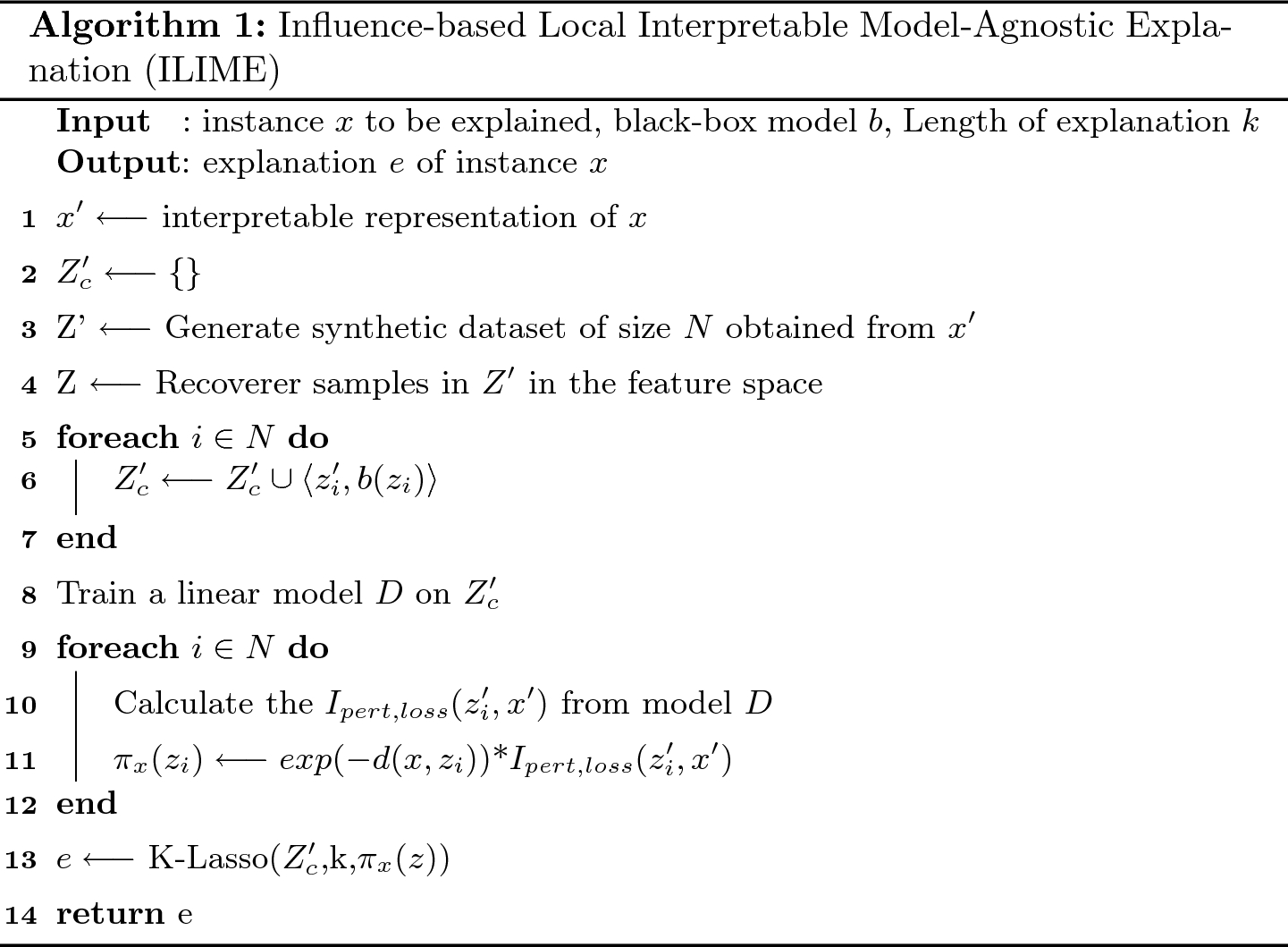

QLIME-A Quadratic Local Interpretable Model-Agnostic - CORE

to add interpretability and explainability to black box models. LIME is a versatile explainer capable of handling different types of data and models. At the local

ExplAIner: A Visual Analytics Framework for Interactive - IEEE Xplore

diagnosis, and refinement of ML models. Explainers have five properties; they take one or more model states as input, applying an XAI method, to output an

Toward Faithful Explanatory Active Learning with - CEUR-WS.org

Explanatory active learning (XAL) tackles this issue by making the model predict and explain its own queries using local explainers. By witnessing the model's