Newton's Method for Unconstrained Optimization


Chapter 4: Unconstrained Optimization

Newton's method. Part I: One-Dimensional Unconstrained Optimization Techniques. 1. Analytical approach (1-D): $\min_x F(x)$ or $\max_x F(x)$. Let $F'(x) = 0$ and find $x = x^*$. If $F''(x^*) > 0$, then $F(x^*) = \min_x F(x)$ and $x^*$ is a local minimum of $F(x)$; if $F''(x^*) < 0$, then $F(x^*) = \max_x$ …
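The second-derivative test described in this excerpt can be sketched in a few lines of Python. The function name `classify_critical_point`, the tolerance `tol`, and the example function $F(x) = x^3 - 3x$ are illustrative assumptions, not taken from the source.

```python
def classify_critical_point(d2F, x_star, tol=1e-12):
    """Second-derivative test at a point where F'(x_star) = 0 is assumed."""
    curvature = d2F(x_star)
    if curvature > tol:
        return "local minimum"
    if curvature < -tol:
        return "local maximum"
    return "inconclusive"  # F''(x*) = 0: the test gives no answer


# Illustrative example (assumption): F(x) = x**3 - 3*x.
# F'(x) = 3*x**2 - 3 vanishes at x = -1 and x = 1, and F''(x) = 6*x.
d2F = lambda x: 6.0 * x
results = {x: classify_critical_point(d2F, x) for x in (-1.0, 1.0)}
```

Here `results` maps each critical point to its classification. The "inconclusive" branch covers the case $F''(x^*) = 0$, which the excerpt does not address.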


Lecture 14 Newton Algorithm for Unconstrained Optimization

The Newton method can be applied to solve the corresponding optimality condition $\nabla f(x^*) = 0$, resulting in $x_{k+1} = x_k - \nabla^2 f(x_k)^{-1} \nabla f(x_k)$. This is known as the pure Newton method. As discussed, in this form the method may not always converge.
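A minimal sketch of the pure Newton iteration in two dimensions, using only the standard library. The test function $f(x, y) = e^{x+y} + x^2 + y^2$, the helper names, and the tolerances are assumptions chosen for illustration. No step size or safeguard is included, so, as the excerpt warns, convergence is not guaranteed in general.

```python
import math


def solve_2x2(a, b, c, d, r1, r2):
    """Solve [[a, b], [c, d]] @ [s1, s2] = [r1, r2] by Cramer's rule."""
    det = a * d - b * c
    if det == 0.0:
        raise ZeroDivisionError("singular Hessian")
    return (r1 * d - b * r2) / det, (a * r2 - c * r1) / det


def pure_newton(grad, hess, x, tol=1e-10, max_iter=50):
    """Iterate x <- x - H(x)^{-1} grad(x) until the gradient norm is small."""
    for _ in range(max_iter):
        g1, g2 = grad(x)
        if math.hypot(g1, g2) < tol:
            break
        (a, b), (c, d) = hess(x)
        s1, s2 = solve_2x2(a, b, c, d, g1, g2)  # Newton step solves H s = g
        x = (x[0] - s1, x[1] - s2)
    return x


# Illustrative strictly convex function: f(x, y) = exp(x + y) + x**2 + y**2
def grad(p):
    e = math.exp(p[0] + p[1])
    return (e + 2.0 * p[0], e + 2.0 * p[1])


def hess(p):
    e = math.exp(p[0] + p[1])
    return ((e + 2.0, e), (e, e + 2.0))


x_min = pure_newton(grad, hess, (0.0, 0.0))
```

The step is obtained by solving the linear system $H s = \nabla f$ rather than forming the inverse Hessian explicitly, which is the usual practice in larger problems.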


Newton’s Method for Unconstrained Optimization

Newton's Method for Unconstrained Optimization. Robert M. Freund. February 2004. © 2004 Massachusetts Institute of Technology. 1 Newton's Method. Suppose we want to solve (P): $\min f(x)$, $x \in \mathbb{R}^n$. At $x = \bar{x}$, $f(x)$ can be approximated by $f(x) \approx h(x) := f(\bar{x}) + \nabla f(\bar{x})^T (x - \bar{x}) + \tfrac{1}{2} (x - \bar{x})^T H(\bar{x}) (x - \bar{x})$.
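The excerpt stops at the quadratic model, so the following short derivation shows how the Newton step would follow from it. It assumes that $H(\bar{x})$ denotes the Hessian of $f$ at $\bar{x}$ and that it is nonsingular; neither assumption is stated in the visible text.

```latex
\begin{aligned}
\nabla h(x) &= \nabla f(\bar{x}) + H(\bar{x})\,(x - \bar{x}) = 0 \\
\Longrightarrow\quad x &= \bar{x} - H(\bar{x})^{-1}\,\nabla f(\bar{x})
\end{aligned}
```

If $H(\bar{x})$ is also positive definite, this stationary point is the unique minimizer of $h$, and taking it as the next iterate gives the pure Newton update.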


Newton’s Method

Newton's method. Given the unconstrained, smooth convex optimization problem $\min_x f(x)$, where $f$ is convex, twice differentiable, and $\operatorname{dom}(f) = \mathbb{R}^n$. Recall that gradient descent chooses an initial $x^{(0)} \in \mathbb{R}^n$ and repeats $x^{(k)} = x^{(k-1)} - t_k \nabla f(x^{(k-1)})$, $k = 1, 2, 3, \ldots$ In comparison, Newton's method repeats $x^{(k)} = x^{(k-1)} - \left(\nabla^2 f(x^{(k-1)})\right)^{-1} \nabla f(x^{(k-1)})$, $k = 1, 2, 3, \ldots$
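To make the comparison concrete, the sketch below counts iterations of both updates on an ill-conditioned quadratic. The function $f(x, y) = \tfrac{1}{2}(x^2 + 100 y^2)$, the fixed step size `t=0.01`, and the stopping tolerance are all assumptions picked for illustration.

```python
import math


def grad(p):
    """Gradient of the illustrative quadratic f(x, y) = 0.5 * (x**2 + 100 * y**2)."""
    x, y = p
    return (x, 100.0 * y)


def gradient_step(p, t=0.01):
    """Gradient descent update with a fixed step size t (an assumption)."""
    g = grad(p)
    return (p[0] - t * g[0], p[1] - t * g[1])


def newton_step(p):
    """Newton update; the Hessian is diag(1, 100), so it is inverted entrywise."""
    g = grad(p)
    return (p[0] - g[0] / 1.0, p[1] - g[1] / 100.0)


def count_iterations(step, p, tol=1e-8, max_iter=100_000):
    """Apply `step` until the gradient norm drops below tol; return the count."""
    k = 0
    while math.hypot(*grad(p)) >= tol and k < max_iter:
        p = step(p)
        k += 1
    return k


gd_iters = count_iterations(gradient_step, (1.0, 1.0))
newton_iters = count_iterations(newton_step, (1.0, 1.0))
```

On a quadratic, Newton's method lands on the minimizer in a single step because the quadratic model is exact, while fixed-step gradient descent needs many more iterations when the curvatures differ by a factor of 100.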

How do you find a descent direction using unconstrained minimization?

Unconstrained minimization methods produce a sequence of points $x^{(k)} \in \operatorname{dom} f$, $k = 0, 1, \ldots$ General descent method: repeat (1) determine a descent direction $\Delta x$; (2) line search: choose a step size $t > 0$; (3) update: $x := x + t\Delta x$; until a stopping criterion is satisfied. Gradient descent method: $\Delta x := -\nabla f(x)$; choose the step size $t$ via exact or backtracking line search.
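The descent loop with a backtracking line search might look like the sketch below. The parameters `alpha=0.3` and `beta=0.5`, the gradient-norm stopping criterion, and the test function are assumptions, since the excerpt does not specify any of them.

```python
def backtracking(f, x, fx, g, dx, alpha=0.3, beta=0.5):
    """Shrink t until f(x + t*dx) <= f(x) + alpha * t * <g, dx> holds."""
    t = 1.0
    slope = sum(gi * di for gi, di in zip(g, dx))  # negative for a descent direction
    while f([xi + t * di for xi, di in zip(x, dx)]) > fx + alpha * t * slope:
        t *= beta
    return t


def descent(f, grad, x, tol=1e-8, max_iter=10_000):
    """General descent method with the gradient descent direction dx = -grad f(x)."""
    for _ in range(max_iter):
        g = grad(x)
        if sum(gi * gi for gi in g) ** 0.5 < tol:  # stopping criterion (assumed)
            break
        dx = [-gi for gi in g]                     # 1. descent direction
        t = backtracking(f, x, f(x), g, dx)        # 2. line search
        x = [xi + t * di for xi, di in zip(x, dx)]  # 3. update
    return x


# Illustrative objective with minimizer (1, -2)
f = lambda x: (x[0] - 1.0) ** 2 + 2.0 * (x[1] + 2.0) ** 2
grad_f = lambda x: [2.0 * (x[0] - 1.0), 4.0 * (x[1] + 2.0)]
x_opt = descent(f, grad_f, [0.0, 0.0])
```

Swapping the line that computes `dx` for a Newton direction would turn the same loop into a damped Newton method.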

How can Newton's method be applied to a twice-differentiable function?

As such, Newton's method can be applied to the derivative $f'$ of a twice-differentiable function $f$ to find the roots of the derivative (solutions to $f'(x) = 0$), also known as the critical points of $f$.
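A minimal one-dimensional sketch of this idea follows: the root-finding iteration is applied to $f'$, with $f''$ playing the role of the derivative. The function name, the tolerances, and the example $f(x) = x^2 + e^{-x}$ are assumptions for illustration.

```python
import math


def newton_critical_point(df, d2f, x, tol=1e-12, max_iter=50):
    """Find a root of f' via x <- x - f'(x) / f''(x)."""
    for _ in range(max_iter):
        step = df(x) / d2f(x)
        x -= step
        if abs(step) < tol:
            break
    return x


# Illustrative function (assumption): f(x) = x**2 + exp(-x)
df = lambda x: 2.0 * x - math.exp(-x)   # f'(x)
d2f = lambda x: 2.0 + math.exp(-x)      # f''(x), positive everywhere
x_crit = newton_critical_point(df, d2f, 0.0)
```

The iteration only locates a critical point. Whether it is a minimum, a maximum, or neither still has to be checked, for example from the sign of $f''$ at the result.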

What is equality-constrained Newton's method?

In equality-constrained Newton's method, we start with $x^{(0)}$ such that $Ax^{(0)} = b$. Then we repeat the updates $x^+ = x + tv$, where $v = \operatorname{argmin}$ …

How does Newton's method solve a convergent iteration problem?

Newton's method attempts to solve this problem by constructing a sequence $\{x_k\}$ from an initial guess (starting point) $x_0$ that converges towards a minimizer $x^*$ of $f$ by using a sequence of second-order Taylor approximations of $f$ around the iterates. The second-order Taylor expansion of $f$ around $x_k$ is $f(x_k + t) \approx f(x_k) + f'(x_k)\,t + \tfrac{1}{2} f''(x_k)\,t^2$.

Lecture 39

Newton's Method

Newton's Method for Optimization

Newton's Method for Unconstrained Optimization

Newton's Method for Unconstrained Optimization. Robert M. Freund. February 2004. 1.2 Quadratic Convergence of Newton's Method.

Newton's Method for Unconstrained Optimization

Newton's Method for Unconstrained Optimization. Robert M. Freund. March 2014. … iterations this way

Lecture 14 Newton Algorithm for Unconstrained Optimization

21/10/2008. Newton Method for System of Nonlinear Equations. • Newton's Method for Optimization. • Classic Analysis. Convex Optimization.

UNCONSTRAINED MULTIVARIABLE OPTIMIZATION

In this chapter we discuss the solution of the unconstrained optimization Newton's method makes use of the second-order (quadratic) approximation of. |

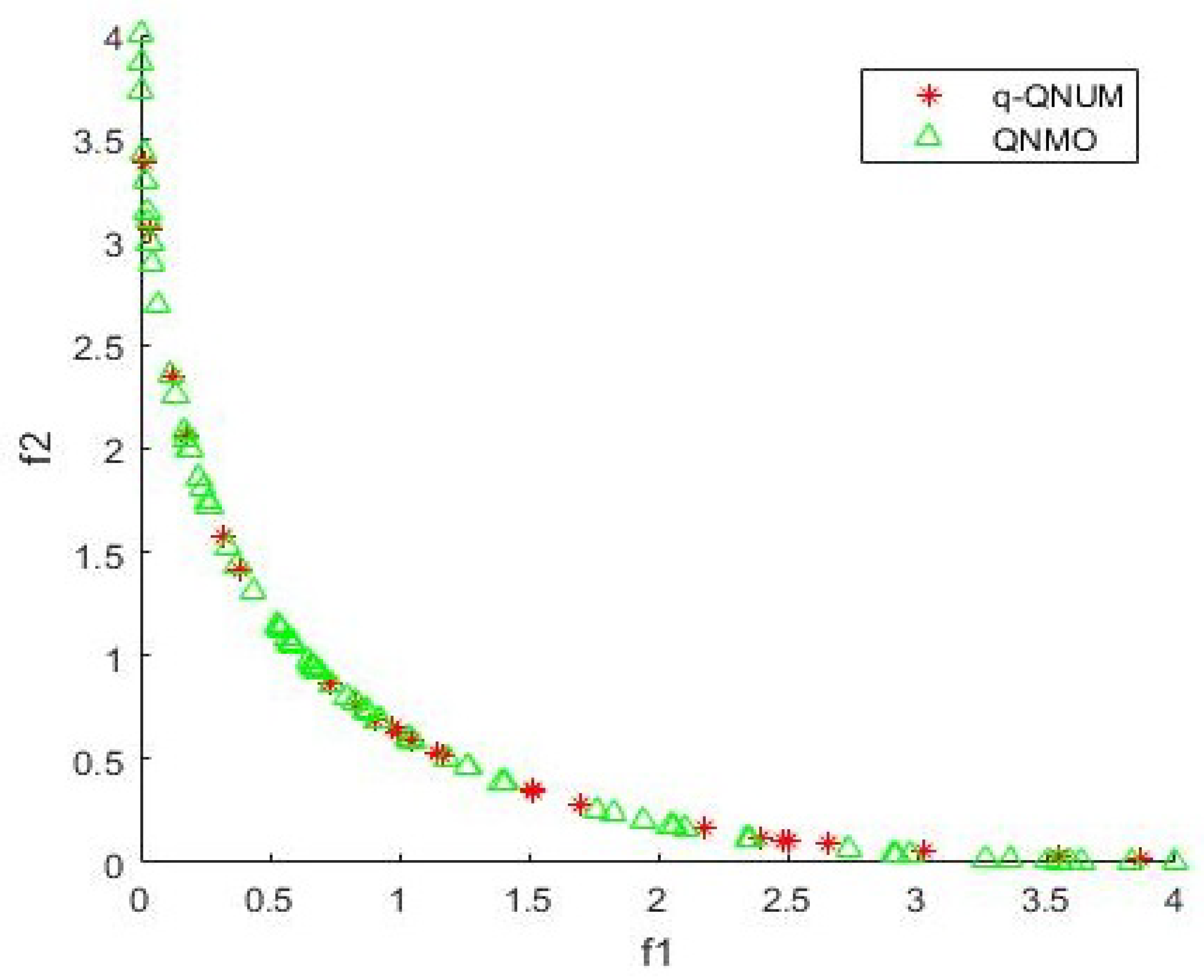

| A Noise-Tolerant Quasi-Newton Algorithm for Unconstrained |

|

A Newtons Method for Nonlinear Unconstrained Optimization

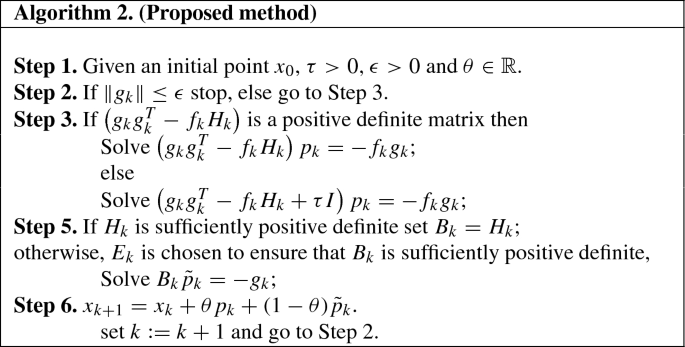

Abstract: In this paper a modification of the classical Newton Method for solving nonlinear univariate and unconstrained optimization problems based on the |

|

GENERALIZED NEWTON'S METHOD FOR LC¹ UNCONSTRAINED

and optimality conditions for the LC¹ optimization problem (2) in Section 3. In Section 4 the local convergence and the global convergence under the exact line

Chapter 4: Unconstrained Optimization

Part II: Multidimensional unconstrained optimization. – Analytical method. – Gradient method: steepest ascent (descent) method. – Newton's method.

Regularized Newton Method for unconstrained Convex Optimization

25/04/2006. At each iteration it requires solving an unconstrained minimization problem with the same quadratic term as in the Newton method

On the complexity of steepest descent, Newton's and regularized

15/10/2009. It is shown that the steepest descent and Newton's method for unconstrained nonconvex optimization under standard assumptions may be both ...

Newton's Method for Unconstrained Optimization - AWS Simple

1 Newton's Method. Consider the unconstrained optimization problem: … 1.1 Linear, Superlinear, and Quadratic Convergence Rates. It turns out that Newton's method converges to a solution extremely rapidly under certain circumstances. 1.2 Quadratic Convergence of Newton's Method. 2 Proof of Theorem 1.1

Notes for Newton's Method for Unconstrained Optimization

If $\delta = 0$ in the above expression, the sequence exhibits superlinear convergence. A sequence of numbers $\{s_i\}$ exhibits quadratic convergence if $\lim_{i \to \infty} s_i = \bar{s}$ …
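The expression that defines $\delta$ is not visible in this excerpt. The sketch below assumes the usual definition, in which $\delta$ is the limit of the error ratio $|s_{i+1} - \bar{s}| / |s_i - \bar{s}|$, and it also computes the ratio $|s_{i+1} - \bar{s}| / |s_i - \bar{s}|^2$ that is commonly used to characterize quadratic convergence. The sequence is generated by Newton's method on $x^2 - 2$, an illustrative choice.

```python
import math


def newton_sqrt2_iterates(x0=2.0, n=4):
    """Newton iterates for the root of x**2 - 2 (illustrative choice)."""
    xs = [x0]
    for _ in range(n):
        x = xs[-1]
        xs.append(x - (x * x - 2.0) / (2.0 * x))
    return xs


s_bar = math.sqrt(2.0)
errors = [abs(x - s_bar) for x in newton_sqrt2_iterates()]

# Ratios e_{i+1} / e_i: shrinking towards 0 suggests superlinear convergence.
linear_ratios = [e1 / e0 for e0, e1 in zip(errors, errors[1:])]

# Ratios e_{i+1} / e_i**2: staying bounded suggests quadratic convergence.
quadratic_ratios = [e1 / e0**2 for e0, e1 in zip(errors, errors[1:])]
```

Only four iterations are used because the error reaches the limits of double precision soon afterwards, at which point the ratios stop being meaningful.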


Newton's Method

Newton's method. Given unconstrained, smooth convex optimization $\min_x f(x)$, where $f$ is convex, twice differentiable, and $\operatorname{dom}(f) = \mathbb{R}^n$. Recall that gradient

Lecture 14 Newton Algorithm for Unconstrained Optimization

21 Oct 2008 · This is known as the pure Newton method. As discussed, in this form the method may not always converge.

Methods for unconstrained optimization The general optimization

The Newton-Raphson method in $\mathbb{R}^1$ (1). Consider the non-linear problem $f(x) = 0$, where $f, x \in \mathbb{R}^1$. Replace the function $f$ by a simpler model function $m_k$;

UNCONSTRAINED OPTIMIZATION - DTU Orbit

optimization. We present Conjugate Gradient, Damped Newton and Quasi Newton methods together with the relevant theoretical background. The reader is

A Review of Methods for Unconstrained Optimization - CORE

and Polak-Ribière conjugate gradient methods, the Newton method and the … The variant of the Newton method for unconstrained minimization is formu-

Solution methods for unconstrained optimization problems - Unipi

Exercise 3. Implement in MATLAB the gradient method for solving the problem … Newton method (tangent method): write the first-order approximation of $\phi$ at $t_i$:

Descent methods for unconstrained optimization

each iteration. (This is also called the "gradient descent method".) Newton's method. We have seen how solving an unconstrained quadratic problem of the form