Self-Supervised Few-Shot Learning

Boosting Few-Shot Visual Learning With Self-Supervision

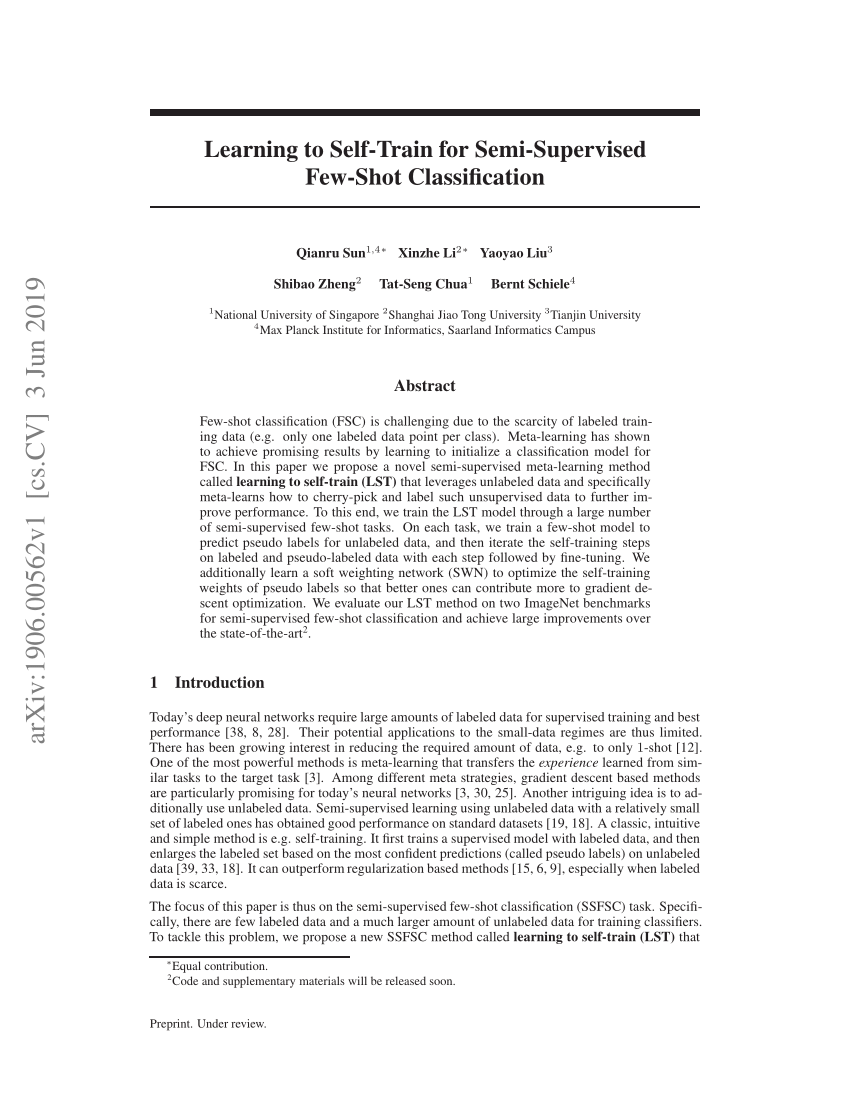

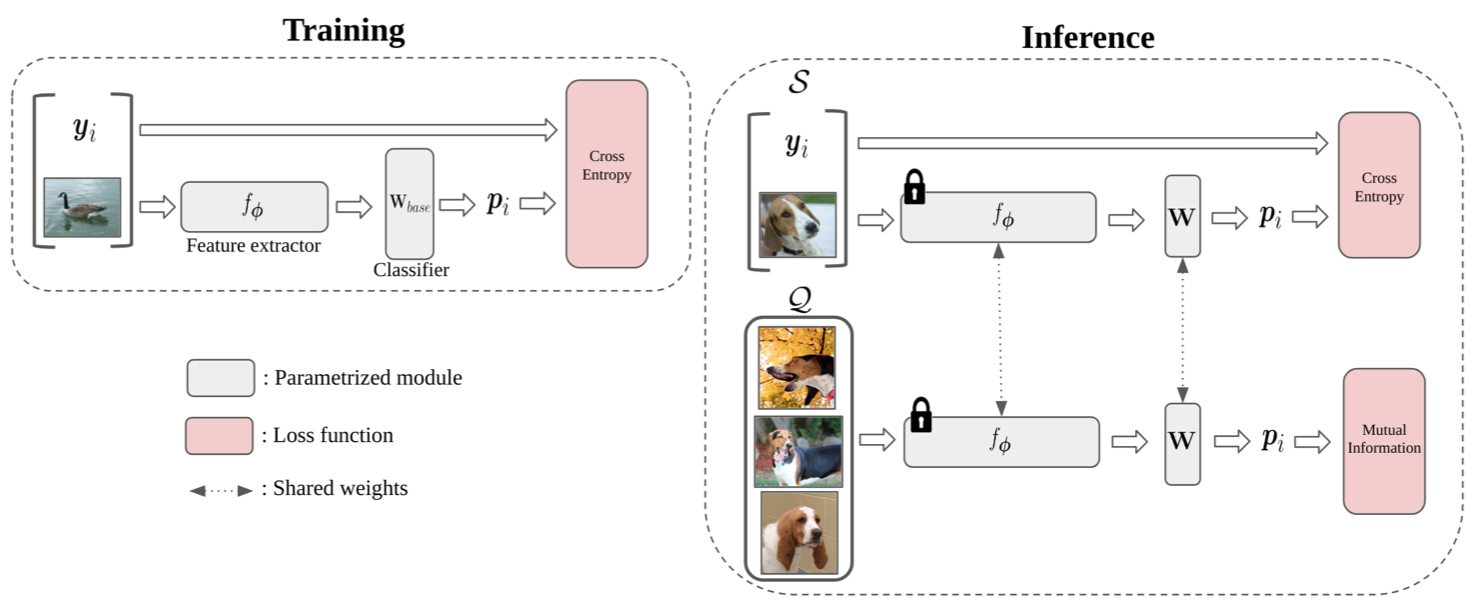

We use self-supervision as an auxiliary task in a few-shot learning pipeline, enabling feature extractors to learn richer and more transferable visual representations while still using few annotated samples.

Is self-supervised learning a good way to improve few-shot learning?

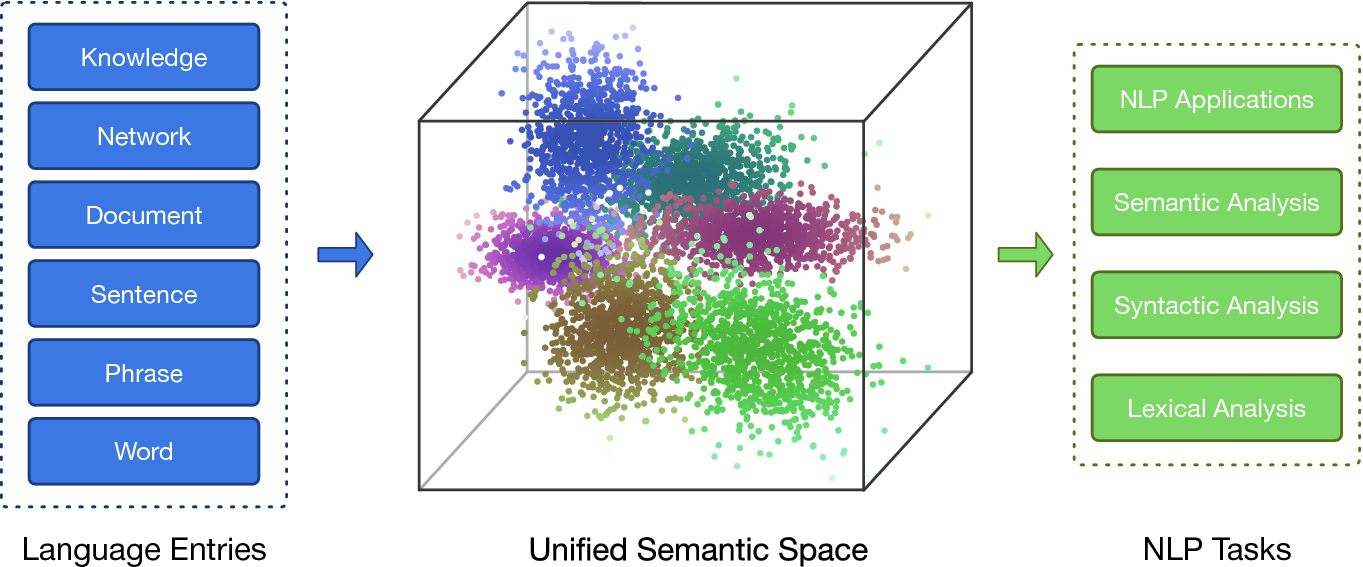

Self-supervised learning, by contrast, focuses on unlabeled data and looks into it for the supervisory signal needed to train high-capacity deep neural networks. In this work we exploit the complementarity of these two domains (few-shot learning and self-supervised learning) and propose an approach for improving few-shot learning through self-supervision.
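
As a minimal sketch of what "self-supervision as an auxiliary task" can look like, the NumPy code below builds a rotation-prediction batch (each image rotated by 0, 90, 180 and 270 degrees, with the number of quarter turns as the label; rotation prediction is one of the self-supervised tasks mentioned on this page) and adds its cross-entropy to the few-shot loss with a weight `alpha`. The function names and `alpha` are hypothetical, the logits are assumed to come from heads on a shared backbone that is not shown, and square images are assumed so that rotated copies can be stacked. It illustrates the general idea and is not the authors' implementation.

```python
import numpy as np


def rotation_batch(images):
    """Rotate each square (H, W, C) image by 0, 90, 180 and 270 degrees.

    Returns the stacked rotated images and, for each one, the number of
    quarter turns applied (the self-supervised label).
    """
    rotated = [np.rot90(img, k, axes=(0, 1)) for img in images for k in range(4)]
    labels = np.tile(np.arange(4), len(images))
    return np.stack(rotated), labels


def cross_entropy(logits, labels):
    """Mean negative log-likelihood, using a max shift for numerical stability."""
    z = logits - logits.max(axis=1, keepdims=True)
    log_probs = z - np.log(np.exp(z).sum(axis=1, keepdims=True))
    return -log_probs[np.arange(len(labels)), labels].mean()


def total_loss(fs_logits, fs_labels, rot_logits, rot_labels, alpha=1.0):
    """Main few-shot loss plus alpha times the auxiliary rotation loss."""
    return cross_entropy(fs_logits, fs_labels) + alpha * cross_entropy(rot_logits, rot_labels)
```

Setting `alpha = 0` recovers the purely supervised few-shot objective; larger values push the shared features harder toward the self-supervised task.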

What is Pareto self-supervised training for few-shot auxiliary learning?

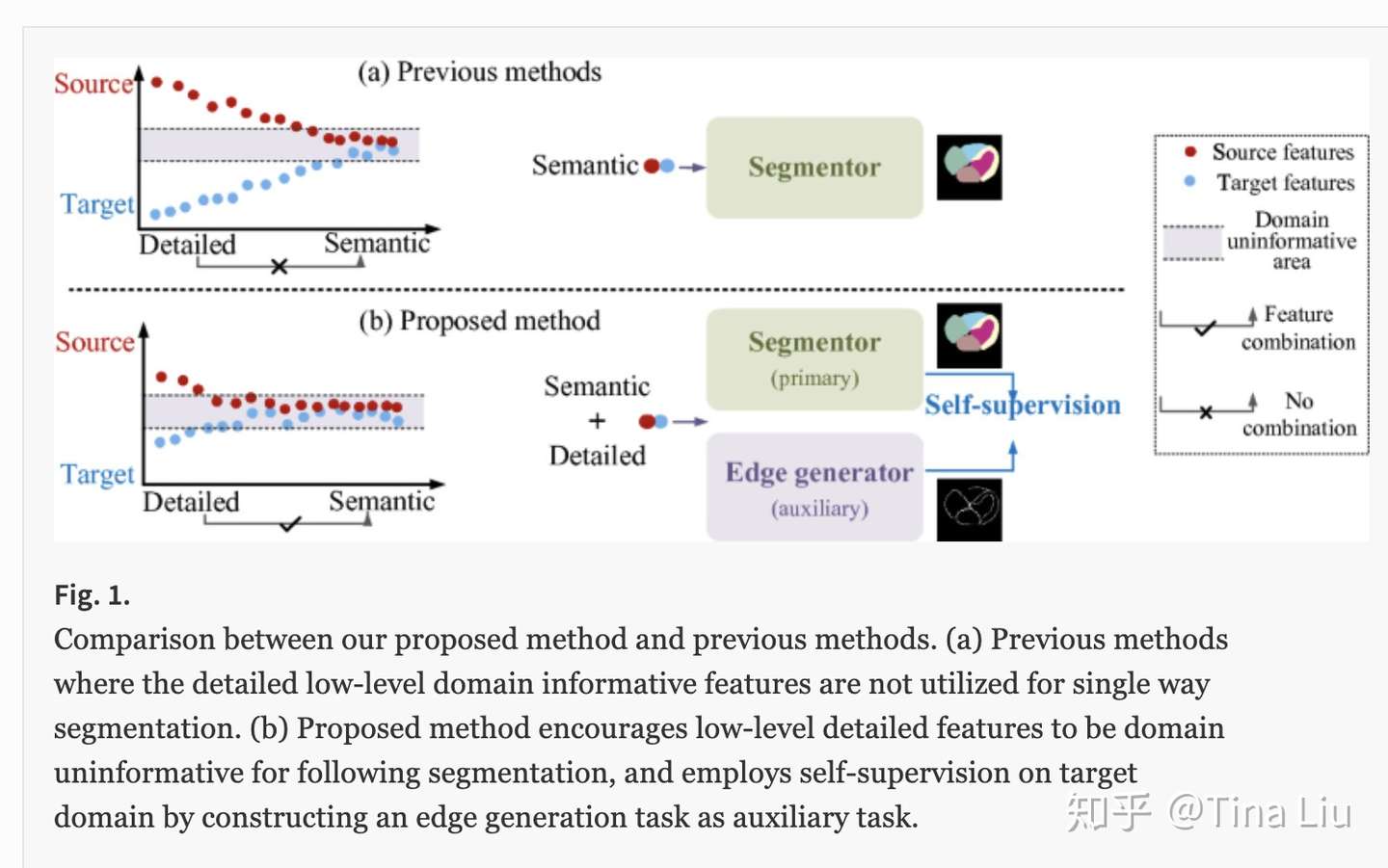

To overcome the issue, we propose a novel approach named Pareto self-supervised training (PSST) for few-shot learning. PSST explicitly casts few-shot auxiliary learning as a multi-objective optimization problem, with the overall objective of finding a Pareto-optimal solution for the network parameters [26, 27].
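
PSST's own optimization procedure is not described in this excerpt. As a hedged illustration of the two ideas the paragraph relies on, the sketch below shows (a) Pareto dominance between loss vectors, and (b) a generic MGDA-style step for two objectives: the minimum-norm convex combination of the few-shot gradient and the self-supervised gradient, which has a closed form when there are only two tasks. The function names are hypothetical, and this standard multi-objective technique is swapped in for illustration; it is not PSST itself.

```python
import numpy as np


def dominates(f_a, f_b):
    """True if loss vector f_a Pareto-dominates f_b (no worse everywhere, better somewhere)."""
    f_a, f_b = np.asarray(f_a, float), np.asarray(f_b, float)
    return bool(np.all(f_a <= f_b) and np.any(f_a < f_b))


def min_norm_direction(g_main, g_aux):
    """Min-norm convex combination d = alpha * g_main + (1 - alpha) * g_aux.

    If d is nonzero, stepping along -d does not increase either loss to first
    order; d == 0 means the point is Pareto-stationary for the two losses.
    """
    g_main, g_aux = np.asarray(g_main, float), np.asarray(g_aux, float)
    diff = g_main - g_aux
    denom = diff @ diff
    if denom == 0.0:
        alpha = 0.5  # identical gradients: every weighting gives the same d
    else:
        # Minimiser of ||alpha * g_main + (1 - alpha) * g_aux||^2, clipped to [0, 1].
        alpha = float(np.clip((g_aux - g_main) @ g_aux / denom, 0.0, 1.0))
    d = alpha * g_main + (1.0 - alpha) * g_aux
    return alpha, d
```

With orthogonal gradients `[1, 0]` and `[0, 1]`, `min_norm_direction` returns `alpha = 0.5`; when the two gradients point in exactly opposite directions it returns a zero vector, a Pareto-stationary point where no single step improves both losses at once. A fixed linear weighting of the losses cannot detect that situation, which is the motivation for the multi-objective view.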

What is few-shot learning?

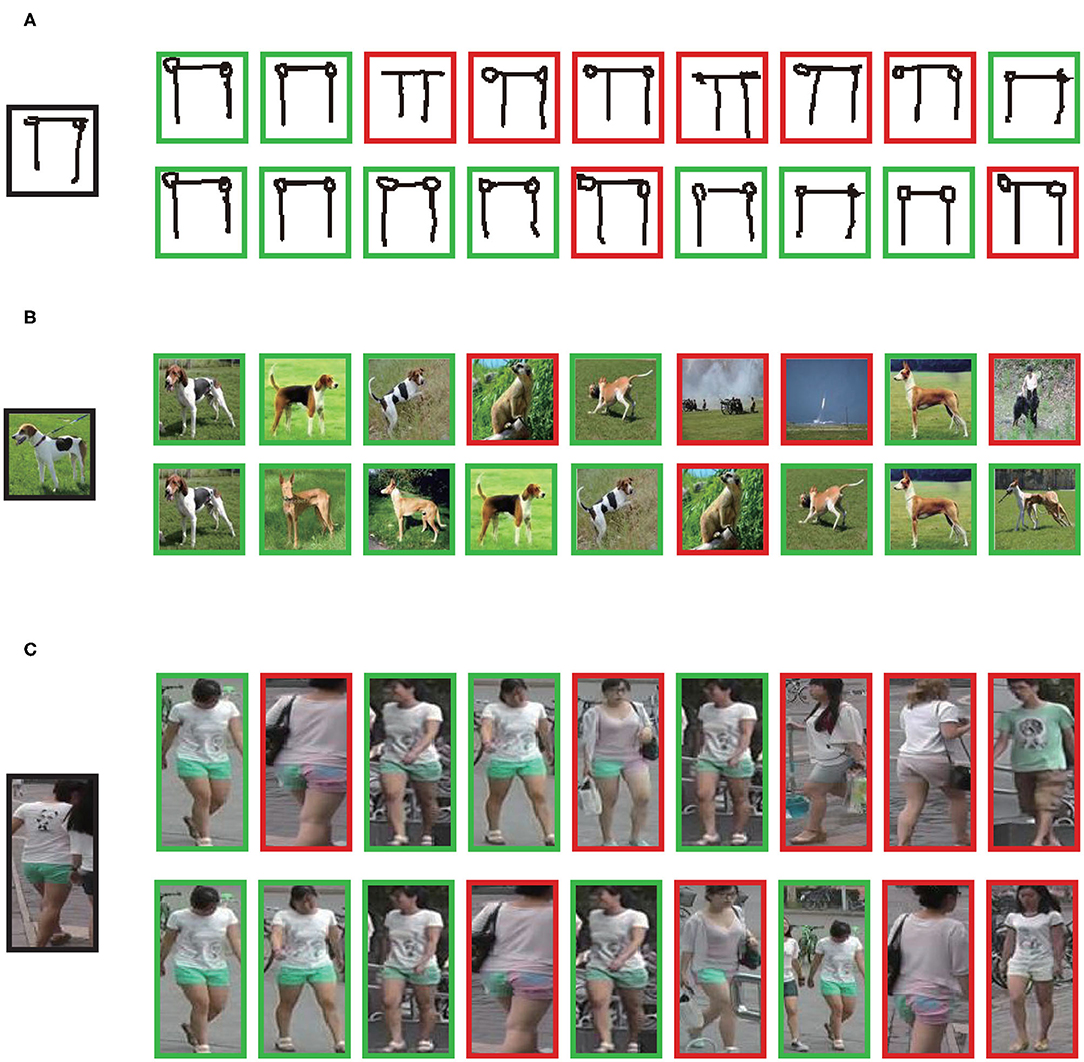

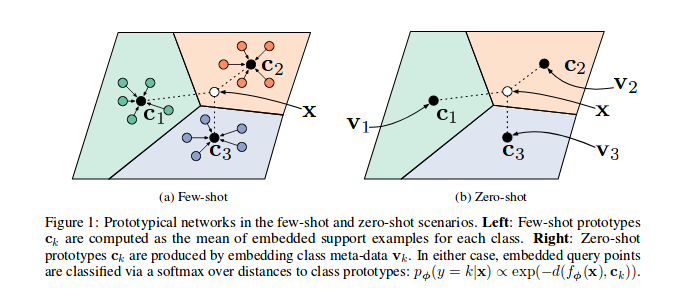

Few-Shot Learning. Few-shot learning aims to learn representations that generalize well to the novel classes where only a few images are available. To this end, several meta-learning approaches have been proposed that evaluate representations by sampling many few-shot tasks within the domain of a base dataset.
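
The episodic protocol described above can be sketched as follows: pick N classes from the base dataset, then K labelled "support" examples and a few "query" examples per class, and relabel the chosen classes 0 to N-1. This is a minimal NumPy illustration assuming the base dataset is given as an array of integer class labels; `sample_episode` and its parameters are hypothetical names.

```python
import numpy as np


def sample_episode(labels, n_way, k_shot, n_query, rng):
    """Sample one N-way K-shot task from a labelled base dataset.

    labels: 1-D array with one integer class id per base-dataset example.
    Returns (support_idx, support_y, query_idx, query_y); the *_y arrays hold
    episode labels re-indexed to 0..n_way-1.
    """
    labels = np.asarray(labels)
    classes = rng.choice(np.unique(labels), size=n_way, replace=False)
    support_idx, support_y, query_idx, query_y = [], [], [], []
    for new_label, cls in enumerate(classes):
        idx = np.flatnonzero(labels == cls)
        if len(idx) < k_shot + n_query:
            raise ValueError(f"class {cls} has only {len(idx)} examples")
        # Disjoint support and query examples drawn from the same class.
        idx = rng.permutation(idx)[: k_shot + n_query]
        support_idx.extend(idx[:k_shot])
        support_y.extend([new_label] * k_shot)
        query_idx.extend(idx[k_shot:])
        query_y.extend([new_label] * n_query)
    return (np.array(support_idx), np.array(support_y),
            np.array(query_idx), np.array(query_y))
```

A common configuration is 5-way 1-shot or 5-way 5-shot with 15 queries per class; the re-indexing step is what makes every episode a fresh classification problem rather than a subset of the base-class problem.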

Can self-supervision augment supervised training for few-shot transfer learning?

In contrast, our work focuses on an important counterexample: self-supervision can in fact augment standard supervised training for few-shot transfer learning in the low-training-data regime without relying on any external dataset. The most related work is that of Gidaris et al. [18], who also use self-supervision to improve few-shot learning.

Results on Few-Shot Learning

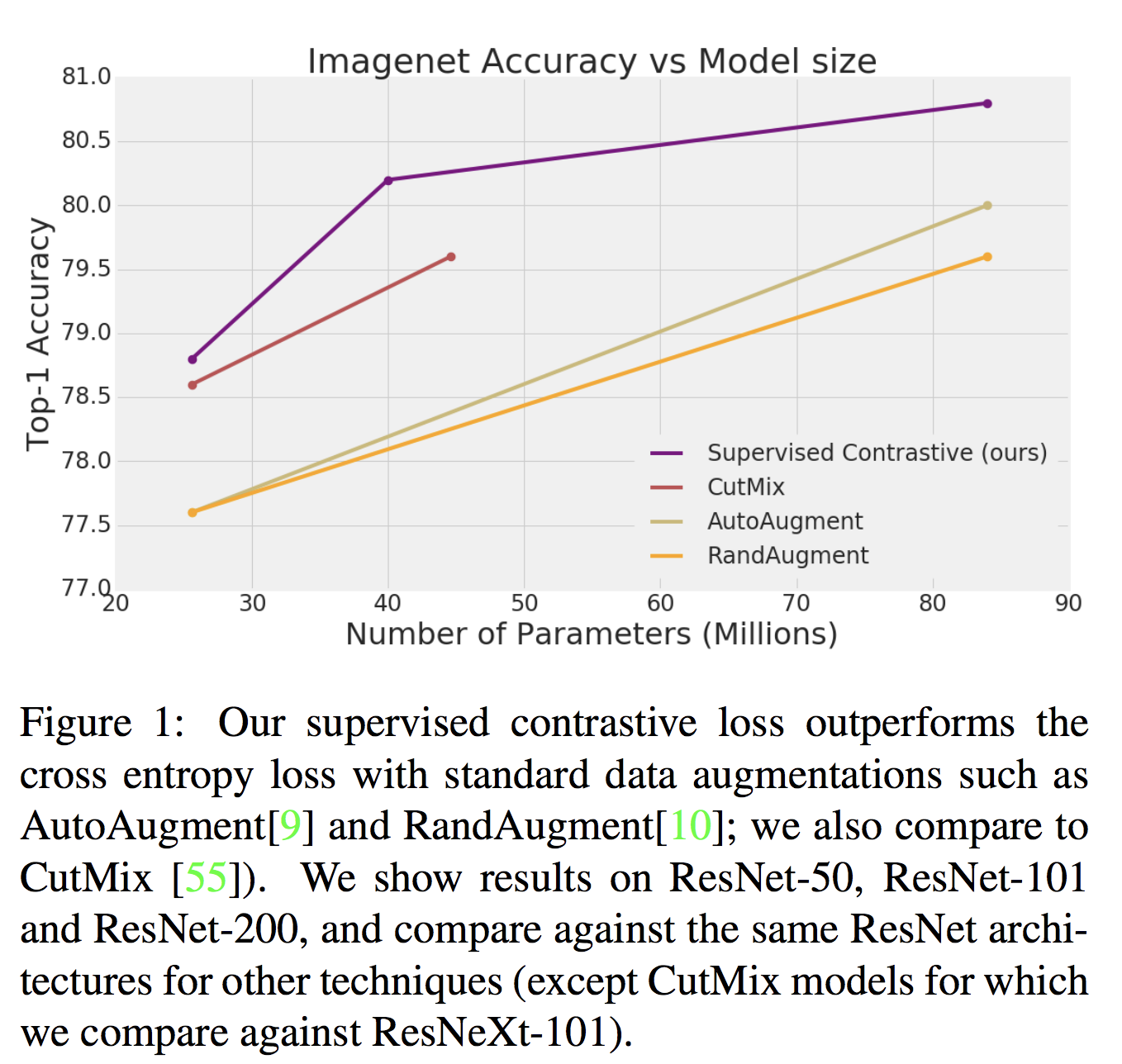

Self-supervised Learning Improves Few-Shot Learning. Figure 2 shows the accuracies of various models on few-shot learning benchmarks. Our ProtoNet baseline matches the results on the mini-ImageNet and birds datasets reported in [10] (Table A5 of that work). Our results show that the jigsaw puzzle task improves the ProtoNet baseline on all seven datasets.
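
For readers unfamiliar with the ProtoNet baseline, the core of a prototypical network fits in a few lines: each class prototype is the mean embedding of its support examples, and a query is classified by a softmax over negative squared Euclidean distances to the prototypes. The sketch below assumes embeddings have already been computed by some backbone (toy arrays stand in for them) and omits training; the function names are illustrative.

```python
import numpy as np


def prototypes(support_emb, support_y, n_way):
    """Class prototype = mean embedding of that class's support examples."""
    return np.stack([support_emb[support_y == c].mean(axis=0) for c in range(n_way)])


def protonet_predict(query_emb, protos):
    """Return (predicted class, class probabilities) for each query embedding."""
    # Squared Euclidean distance from every query to every prototype.
    d2 = ((query_emb[:, None, :] - protos[None, :, :]) ** 2).sum(axis=-1)
    logits = -d2
    # Softmax over classes, shifted by the row maximum for numerical stability.
    logits = logits - logits.max(axis=1, keepdims=True)
    probs = np.exp(logits)
    probs /= probs.sum(axis=1, keepdims=True)
    return probs.argmax(axis=1), probs
```

In the self-supervised variant discussed here, the backbone producing these embeddings is the part shared with the auxiliary task; the prototype classifier itself is unchanged.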

Analyzing The Effect of Domain Shift For Self-Supervision

Scaling SSL to massive unlabeled datasets that are readily available for some domains is a promising avenue for improvement. However, does more unlabeled data always help for the task at hand? This question hasn't been sufficiently addressed in the literature, as most prior works study the effectiveness of SSL on a curated set of images, such as ImageNet.

Selecting Images For Self-Supervision

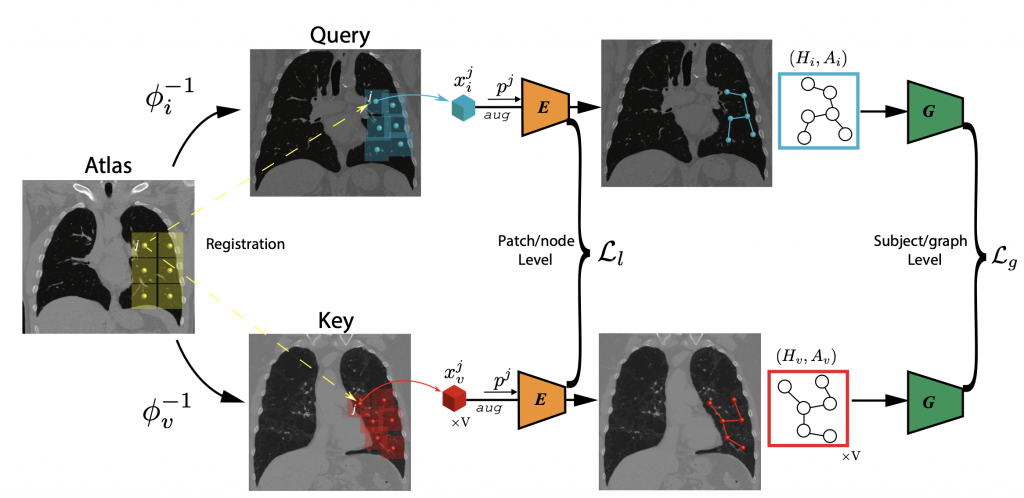

Based on the above analysis, we propose a simple method to select images for SSL from a large, generic pool of unlabeled images in a dataset-dependent manner. We use a "domain weighted" model to select the top images based on a domain classifier, in our case a binary logistic regression model trained with images from the source domain …
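
A minimal sketch of this selection step, under stated assumptions: each image is represented by a precomputed feature vector (the excerpt is truncated before saying which features are used), source-domain images form the positive class and the generic pool the negative class, and the classifier is a plain logistic regression trained by batch gradient descent. The function names, learning rate and step count are hypothetical.

```python
import numpy as np


def train_domain_classifier(feat_src, feat_pool, lr=0.1, steps=500):
    """Binary logistic regression: label 1 = source domain, 0 = generic pool."""
    X = np.vstack([feat_src, feat_pool])
    y = np.concatenate([np.ones(len(feat_src)), np.zeros(len(feat_pool))])
    w, b = np.zeros(X.shape[1]), 0.0
    for _ in range(steps):
        p = 1.0 / (1.0 + np.exp(-(X @ w + b)))  # predicted P(source)
        grad = p - y                            # d(mean log-loss) / d(logit)
        w -= lr * X.T @ grad / len(y)
        b -= lr * grad.mean()
    return w, b


def select_top_k(feat_pool, w, b, k):
    """Indices of the k pool images the classifier scores as most source-like."""
    scores = feat_pool @ w + b  # the logit is monotone in P(source)
    return np.argsort(-scores)[:k]
```

Ranking by the logit rather than the probability gives the same order and avoids computing the sigmoid again; the selected images would then be used for the self-supervised task alongside the source data.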

Part 3: Quick overview of different self-supervised learning approaches.

Few-Shot Learning

What Is Self-Supervised Learning?

Boosting Few-Shot Visual Learning with Self-Supervision - Spyros

Few-shot learning and self-supervised learning address different facets of the same problem: how to train a model with little or no labeled data.

When Does Self-supervision Improve Few-shot Learning?

Self-supervised tasks such as jigsaw puzzle or rotation prediction act as a data-dependent regularizer for the shared feature backbone. Our work investigates …
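
To make the jigsaw puzzle task concrete, the sketch below turns one unlabelled image into a training pair: the image is cut into a 3×3 grid of tiles, the tiles are reordered by one permutation drawn from a fixed set, and the index of that permutation is the label a network would be trained to predict. The permutation set, the grid size and the function name are assumptions for illustration; how the permutation set is chosen and how tiles are fed to the network are not specified here.

```python
import numpy as np


def make_jigsaw_example(image, permutations, rng, grid=3):
    """Turn one unlabelled (H, W, C) image into a (shuffled tiles, label) pair.

    permutations: (P, grid*grid) integer array; row p lists, for each output
    position, which original tile goes there. The label is the row index p.
    """
    H, W = image.shape[:2]
    if H % grid or W % grid:
        raise ValueError("image height and width must be divisible by grid")
    h, w = H // grid, W // grid
    # Tiles in row-major order: tile t covers grid row t // grid, column t % grid.
    tiles = [image[r * h:(r + 1) * h, c * w:(c + 1) * w]
             for r in range(grid) for c in range(grid)]
    label = int(rng.integers(len(permutations)))
    shuffled = np.stack([tiles[t] for t in permutations[label]])
    return shuffled, label
```

Because the label depends only on the image itself, such pairs can be generated for every training image, labelled or not, which is what lets the task regularize the shared backbone without extra annotation.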

Self-Supervised Learning for Few-Shot Image Classification

Conditional Self-Supervised Learning for Few-Shot Classification

However, learning a good representation with traditional self-supervised methods usually depends on a large number of training samples. In few-shot scenarios …

MaskSplit: Self-Supervised Meta-Learning for Few-Shot Semantic

… alleviate this need, we propose a self-supervised training approach for learning few-shot segmentation models. We first use unsupervised saliency estimation …

A Survey of Self-Supervised and Few-Shot Object Detection

27 Oct 2021 · … in learning self-supervised representations, several (few-shot) detection methods now initialize their backbone from …

COVID-19 Surveillance through Twitter using Self-Supervised and

With seasonal influenza and the novel coronavirus having many similar symptoms, we propose using few-shot learning to fine-tune a semi-supervised model built …

Improving In-Context Few-Shot Learning via Self-Supervised Training

10 Jul 2022 · Self-supervised pretraining has made few-shot learning possible for many NLP tasks. But the pretraining objectives are not typically …

Pareto Self-Supervised Training for Few-Shot Learning

While few-shot learning (FSL) aims for rapid generalization to new concepts with little supervision, self-supervised learning (SSL) constructs supervisory …

SELF-SUPERVISED CONTRASTIVE ZERO TO FEW-SHOT

The current prevailing approach to supervised and few-shot learning is to use datasets for pretraining or just more (broader) self-supervised learning …

When Does Self-supervision Improve Few-shot Learning? - ECVA

We investigate the role of self-supervised learning (SSL) in the context of few-shot learning. Although recent research has shown the benefits of SSL on large …

Self-Supervised Few-Shot Learning on Point Clouds - NeurIPS

Self-Supervised Few-Shot Learning on Point Clouds. Charu Sharma and Manohar Kaul, Department of Computer Science Engineering, Indian Institute of …

Self-Supervised Meta-Learning for Few-Shot Natural Language

16 Nov 2020 · Self-Supervised Meta-Learning for Few-Shot Natural Language Classification Tasks. Trapit Bansal, Rishikesh Jha, and Tsendsuren …

Self-Supervised Tuning for Few-Shot Segmentation - IJCAI

One solution for few-shot segmentation [Shaban et al., 2017] is meta-learning [Munkhdalai and Yu, 2017], whose general idea is to utilize a large number …

Self-Supervised Generalisation with Meta Auxiliary Learning - NIPS

… [34, 31] realised few-shot learning in the instance space via a differentiable nearest-neighbour approach. Related to meta-learning, our framework is designed to …

Unsupervised Meta-Learning for Few-Shot Image - NeurIPS

On the Omniglot and Mini-Imagenet few-shot learning benchmarks, UMTRA … Compared to supervised model-agnostic meta-learning approaches, UMTRA trades …
