xgboost stanford

|

Exploring Autoencoders and XGBoost for Predictive Maintenance in

Stanford University, Stanford, California, February 12-14, 2024, SGP-TR-227 1 Exploring Autoencoders and XGBoost for Predictive Maintenance in Geothermal |

|

Learning to Rank (with GBDTs)

May 16 2019 · So maybe they're a little better on big data? Page 45 Introduction to Information Retrieval Raw example of xgboost for ranking ▫ git clone |

|

CS246

1 jui 2023 · XGBoost: A Scalable Tree Boosting System, T. Chen et al., KDD 2016 Page ▫ XGBoost: eXtreme Gradient Boosting ▫ A highly scalable |

|

Boosting CS229

wins most Kaggle competitions • great systems (e.g. XGBoost) ©2021 Carlos Guestrin Page 5 CS229: Machine Learning Ensemble classifier ©2021 Carlos |

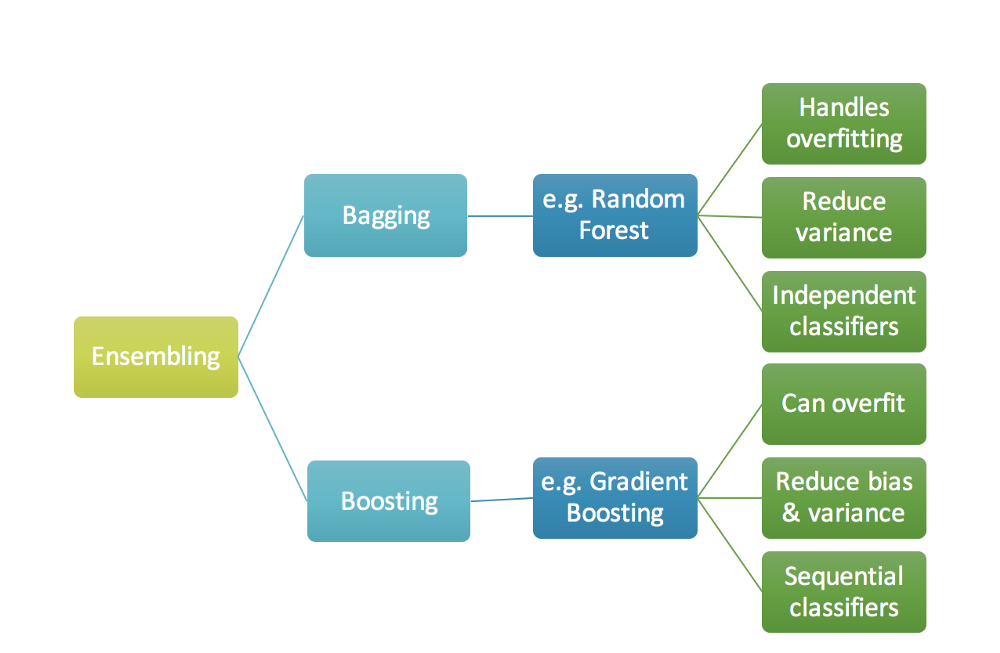

What is the difference between XGBoost and AdaBoost?

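XGBoost is an optimized implementation of gradient boosting: each new tree is fit to the gradient of a loss function, with added regularization and systems-level improvements for speed and scale. AdaBoost builds its sequence of models differently: after each round it increases the weights of misclassified training examples so that the next weak learner concentrates on them.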

The choice of algorithm depends on the specific problem and the requirements of the application. Use Random Forests when you need a better balance between interpretability and accuracy.

Random Forests are also good when you have large datasets with many features.

Use XGBoost when your primary concern is performance and you have the resources to tune the model properly.
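The contrast between the two boosting styles can be sketched in a few lines of plain Python. This is a minimal illustration, assuming a hypothetical 1-D toy dataset and decision stumps as weak learners; the function names are made up for this example. The second half is plain gradient boosting on squared error, not XGBoost itself, which adds regularization and other refinements on top of this idea.

```python
import math

# Hypothetical toy data: one feature, labels in {-1, +1}.
X = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0]
y = [1, 1, -1, -1, 1, 1, -1, -1]

def thresholds(xs):
    """Midpoints between consecutive distinct feature values."""
    s = sorted(set(xs))
    return [(a + b) / 2 for a, b in zip(s, s[1:])]

def stump(x, t, sign):
    """Decision stump: predicts `sign` left of t and `-sign` otherwise."""
    return sign if x < t else -sign

def adaboost(xs, ys, rounds):
    """AdaBoost: reweight the examples after every round."""
    n = len(xs)
    w = [1.0 / n] * n
    model = []
    for _ in range(rounds):
        # Pick the stump with the lowest weighted classification error.
        candidates = [
            (sum(wi for xi, yi, wi in zip(xs, ys, w) if stump(xi, t, s) != yi), t, s)
            for t in thresholds(xs) for s in (1, -1)
        ]
        err, t, s = min(candidates, key=lambda c: c[0])
        err = min(max(err, 1e-10), 1 - 1e-10)  # avoid log(0) and division by zero
        alpha = 0.5 * math.log((1 - err) / err)
        model.append((alpha, t, s))
        # Misclassified points gain weight, correctly classified ones lose it.
        w = [wi * math.exp(-alpha * yi * stump(xi, t, s))
             for xi, yi, wi in zip(xs, ys, w)]
        total = sum(w)
        w = [wi / total for wi in w]
    return model

def adaboost_predict(model, x):
    score = sum(alpha * stump(x, t, s) for alpha, t, s in model)
    return 1 if score >= 0 else -1

def gradient_boost(xs, ys, rounds, lr=0.5):
    """Gradient boosting with squared error: fit each stump to the residuals."""
    pred = [0.0] * len(xs)
    model = []
    for _ in range(rounds):
        residuals = [yi - pi for yi, pi in zip(ys, pred)]
        best = None
        for t in thresholds(xs):
            left = [r for xi, r in zip(xs, residuals) if xi < t]
            right = [r for xi, r in zip(xs, residuals) if xi >= t]
            lm, rm = sum(left) / len(left), sum(right) / len(right)
            sse = sum((r - lm) ** 2 for r in left) + sum((r - rm) ** 2 for r in right)
            if best is None or sse < best[0]:
                best = (sse, t, lm, rm)
        _, t, lm, rm = best
        model.append((t, lm, rm))
        # Move the running prediction a fraction `lr` toward the stump's output.
        pred = [pi + lr * (lm if xi < t else rm) for xi, pi in zip(xs, pred)]
    return model

def gb_predict(model, x, lr=0.5):
    return sum(lr * (lm if x < t else rm) for t, lm, rm in model)

def mse(model, xs, ys, lr=0.5):
    return sum((yi - gb_predict(model, xi, lr)) ** 2 for xi, yi in zip(xs, ys)) / len(xs)

if __name__ == "__main__":
    ada = adaboost(X, y, rounds=3)
    print("AdaBoost predictions:", [adaboost_predict(ada, x) for x in X])
    for r in (1, 5, 20):
        print("gradient boosting, rounds =", r, "train MSE =", mse(gradient_boost(X, y, r), X, y))
```

The only structural difference between the two loops is what changes between rounds: `adaboost` updates the example weights `w`, while `gradient_boost` updates the running prediction `pred` and fits the next stump to what is left over.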

|

Boosting

Trevor Hastie Stanford University. 1. Boosting. Trevor Hastie. Statistics Department. Stanford University. Collaborators: Brad Efron |

|

XGBoost: A Scalable Tree Boosting System

By combining these insights XGBoost scales beyond billions of examples using far fewer resources than existing systems. Keywords. Large-scale Machine Learning. |

|

Fine-Grained Sentiment Analysis of Restaurant Customer Reviews

1Department of East Asian Languages and Cultures, Stanford University. (Long Short-Term Memory) |

|

http://cs246.stanford.edu

Feb 22 2022 Jure Leskovec & Mina Ghashami |

|

Geothermal Oil and Gas Well Subsurface Temperature Prediction

Stanford University Stanford |

|

An Efficient Learning Framework For Federated XGBoost Using

May 12 2021 focuses on vertical federated learning for XGBoost. Our goal is to build a federated XGBoost model ... Stanford University Stanford |

|

XGMix: Local-Ancestry Inference with Stacked XGBoost

Apr 24 2020 Accepted as a workshop paper at AI4AH |

|

Imputing chromatin landscape from a single assay

DNase-seq using XGBoost Dense Neural Network |

|

Cryptocurrency Alpha Models via Intraday Technical Trading

NeuralProphet XGBoost and recurrent neural networks. Generally |

|

Boosting - Stanford University

Trevor Hastie, Stanford University 1 Boosting Trevor Hastie Statistics Rosset, Rob Tibshirani, Ji Zhu http://www-stat.stanford.edu/~hastie/TALKS/boost.pdf |

|

Boosting Algorithms - Stanford University

We present a statistical perspective on boosting. Special emphasis is given to estimating potentially complex parametric or nonparametric models, including |

|

32 XGBoost Algorithm - Institut des actuaires

The first method is the XGBoost gradient boosting algorithm ... Adaptive Splines, Stanford University, Department of Statistics, Laboratory for |

|

Trees, Bagging, Random Forests and Boosting

The techniques discussed here enhance their performance considerably Page 2 Boosting Trevor Hastie, Stanford University 2 Two- |

|

Tree Boosting With XGBoost

for why tree boosting, and in particular XGBoost, seems to be such a highly effective and versatile approach PhD thesis, Stanford University Rumelhart, D. E. |

|

XGMix: Local-Ancestry Inference with Stacked XGBoost - bioRxiv

Apr 24 2020 · STACKED XGBOOST Arvind Kumar Stanford University Daniel Mas Montserrat ∗ Purdue University Carlos Bustamante Stanford University |

|

Evaluating XGBoost for User Classification by using - DiVA portal

tinuous authentication, and investigates Extreme Gradient Boosting (XGBoost) for user URL: https://web.stanford.edu/class/cs75n/Sensors.pdf [17] Jerome |