a method for stochastic optimization kingma

What are stochastic methods for algorithms?

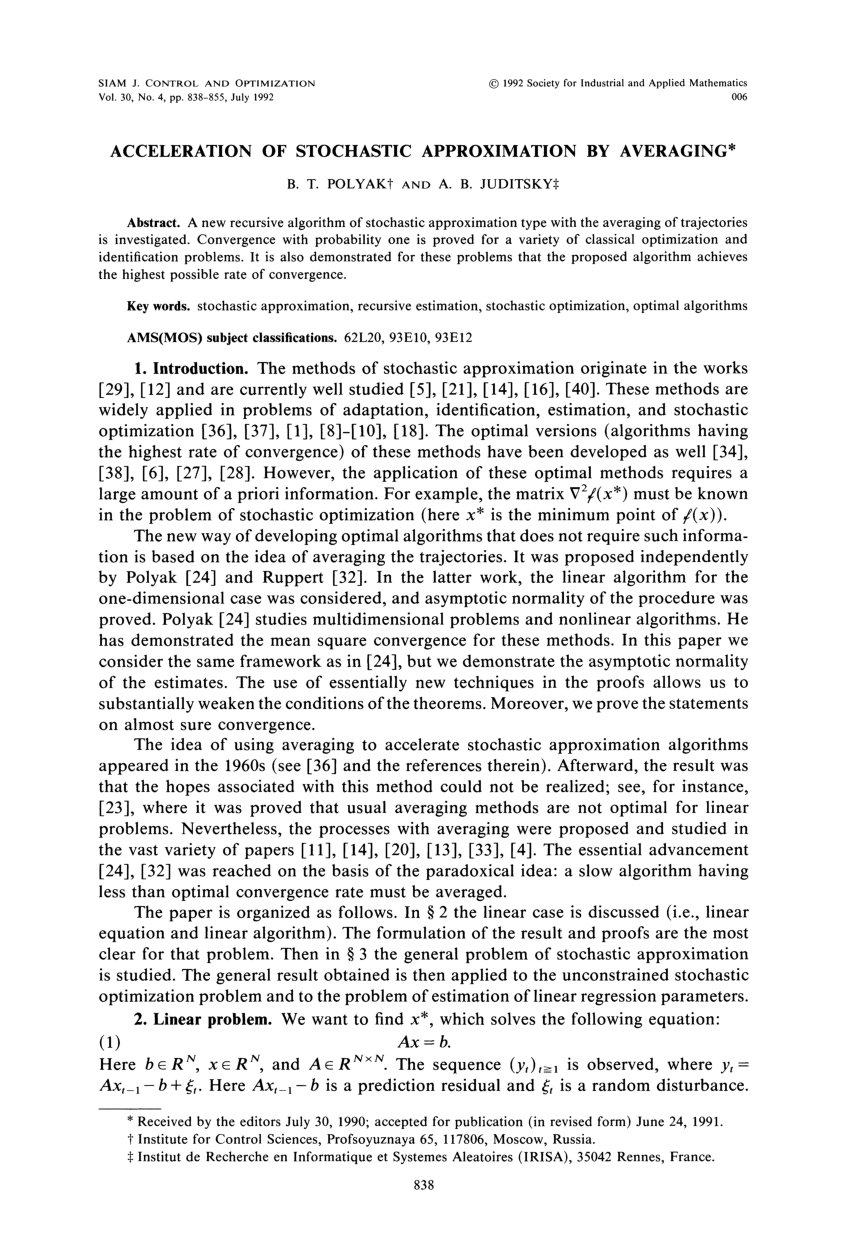

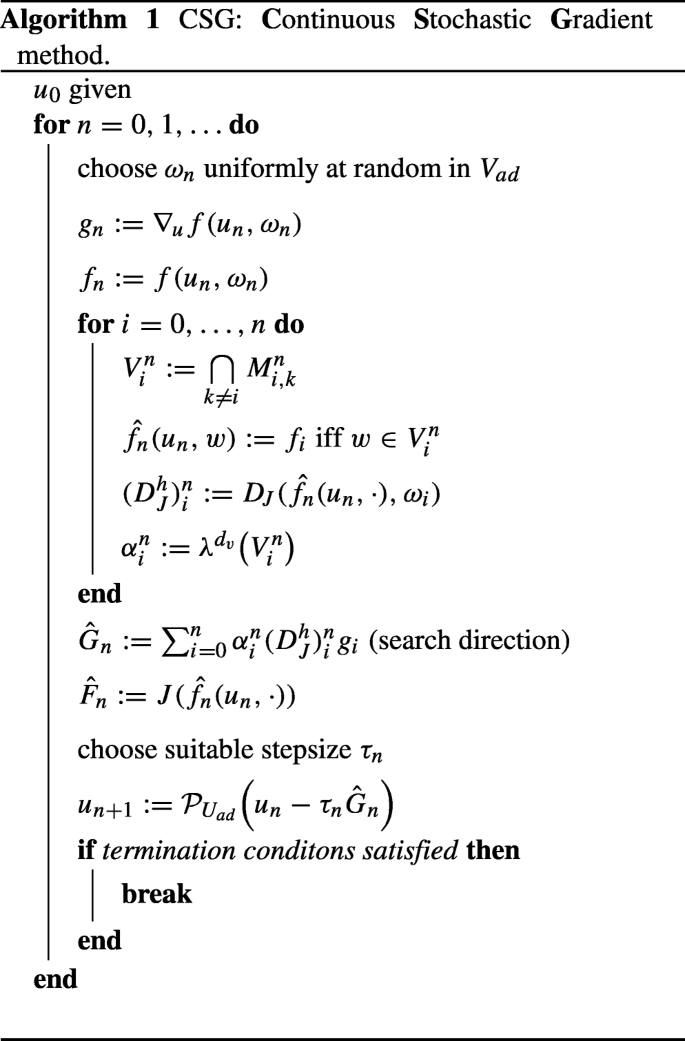

Stochastic optimization (SO) methods are optimization methods that generate and use random variables.

For stochastic problems, the random variables appear in the formulation of the optimization problem itself, which involves random objective functions or random constraints.

What are the methods of stochastic optimization?

Common methods of stochastic optimization include direct search methods (such as the Nelder-Mead method), stochastic approximation, stochastic programming, and miscellaneous methods such as simulated annealing and genetic algorithms.
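As one illustration of a method that "generates and uses random variables", here is a minimal sketch of simulated annealing on a one-dimensional function. The function name `simulated_annealing`, the linear cooling schedule, the uniform proposal, and all default parameter values are assumptions made for this example, not something taken from the sources listed on this page.

```python
import math
import random

def simulated_annealing(f, x0, steps=2000, temp0=1.0, step_size=0.5, seed=0):
    """Toy simulated annealing for a 1-D objective f (illustrative sketch only)."""
    rng = random.Random(seed)          # seeded so runs are repeatable
    x, fx = x0, f(x0)
    best_x, best_fx = x, fx
    for k in range(steps):
        # Assumed schedule: temperature decays linearly towards (almost) zero.
        temp = temp0 * (1 - k / steps) + 1e-9
        # Random proposal near the current point.
        cand = x + rng.uniform(-step_size, step_size)
        f_cand = f(cand)
        # Always accept improvements; accept a worse point with
        # probability exp(-(increase in f) / temperature).
        if f_cand <= fx or rng.random() < math.exp(-(f_cand - fx) / temp):
            x, fx = cand, f_cand
            if fx < best_fx:
                best_x, best_fx = x, fx
    return best_x, best_fx

# Usage: a bumpy objective with several local minima.
bumpy = lambda x: (x - 2) ** 2 + math.sin(5 * x)
best_x, best_fx = simulated_annealing(bumpy, x0=10.0)
```

The randomness enters twice: in the proposal and in the acceptance test. Accepting some worse points at high temperature is what lets the search leave a local minimum.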

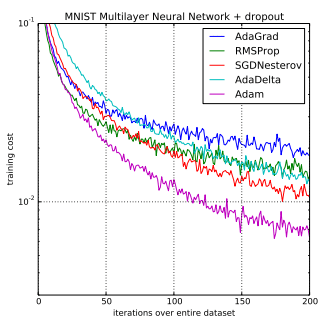

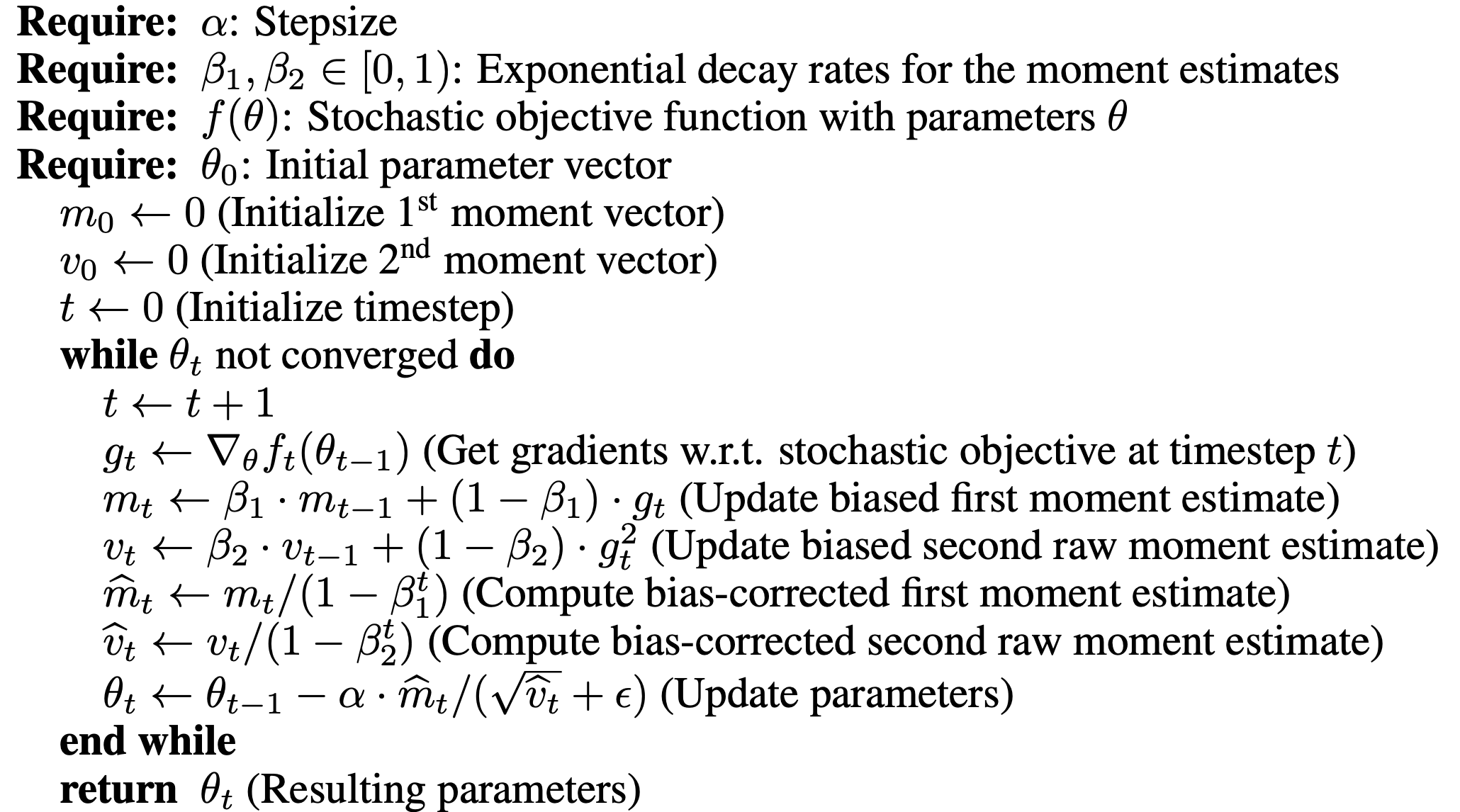

What is the Adam method for Stochastic Gradient Descent?

Adam is an optimization algorithm that can be used in place of classical stochastic gradient descent when training deep learning models.

Adam combines the best properties of the AdaGrad and RMSProp algorithms to provide an optimization algorithm that can handle sparse gradients on noisy problems.
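The update can be sketched in a few lines. The version below is a minimal pure-Python illustration of the Adam update rule (exponential moving averages of the gradient and of its square, with bias correction), not a reference implementation. The function name `adam_minimize`, the list-based parameter layout, and the toy quadratic are assumptions for the example; `lr=0.01` is chosen for the toy problem rather than taken from any source on this page.

```python
import math

def adam_minimize(grad, theta, steps=5000, lr=0.01,
                  beta1=0.9, beta2=0.999, eps=1e-8):
    """Minimal Adam loop over a list of floats (illustrative sketch).

    grad(theta) must return a list of partial derivatives, one per parameter.
    """
    theta = list(theta)
    m = [0.0] * len(theta)   # moving average of gradients (momentum-like term)
    v = [0.0] * len(theta)   # moving average of squared gradients (RMSProp-like term)
    for t in range(1, steps + 1):
        g = grad(theta)
        for i, g_i in enumerate(g):
            m[i] = beta1 * m[i] + (1 - beta1) * g_i
            v[i] = beta2 * v[i] + (1 - beta2) * g_i * g_i
            # Bias correction: m and v start at zero, so early averages are too small.
            m_hat = m[i] / (1 - beta1 ** t)
            v_hat = v[i] / (1 - beta2 ** t)
            # Per-parameter step, scaled by the running gradient magnitude.
            theta[i] -= lr * m_hat / (math.sqrt(v_hat) + eps)
    return theta

# Usage: minimize f(x, y) = (x - 3)^2 + (y + 1)^2, whose minimum is at (3, -1).
quad_grad = lambda p: [2 * (p[0] - 3), 2 * (p[1] + 1)]
solution = adam_minimize(quad_grad, [0.0, 0.0])
```

Dividing by the square root of `v_hat` gives each parameter its own effective step size, which is the part that helps with sparse gradients. Using a moving average instead of a running sum means old gradients are gradually forgotten.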

Adam: A Method for Stochastic Optimization

Jan 30 2017 Published as a conference paper at ICLR 2015. ADAM: A METHOD FOR STOCHASTIC OPTIMIZATION. Diederik P. Kingma*. University of Amsterdam

Adam: A Method for Stochastic Optimization - Diederik P. Kingma

Oct 18 2015 Adam: A Method for Stochastic Optimization. Diederik P. Kingma and Jimmy Lei Ba. Nadav Cohen. The Hebrew University of Jerusalem.

A Unified Framework for Stochastic Optimization

Jul 25 2017

Adam: A Method for Stochastic Optimization - Diederik P. Kingma

Adam: A Method for Stochastic Optimization. Diederik P. Kingma, Jimmy Lei Ba. Jaya Narasimhan. February 10

Adam - A Method for Stochastic Optimization v2.1

Adam: A Method for Stochastic Optimization. Diederik P. Kingma, Jimmy Ba. Presented by Dor Ringel ... down to solving the following optimization problem:

Improved Binary Forward Exploration: Learning Rate Scheduling

Jul 9 2022 Learning Rate Scheduling Method for Stochastic Optimization. Xin Cao. Abstract ... and Adam (Kingma & Ba

CoolMomentum: a method for stochastic optimization by Langevin

CoolMomentum: a method for stochastic optimization by Langevin dynamics with simulated annealing. Oleksandr Borysenko & Maksym Byshkin

ACMo: Angle-Calibrated Moment Methods for Stochastic Optimization

... method (ACMo), a novel stochastic optimization method ... enjoying both of their benefits ... (Kingma and Ba 2015) ...

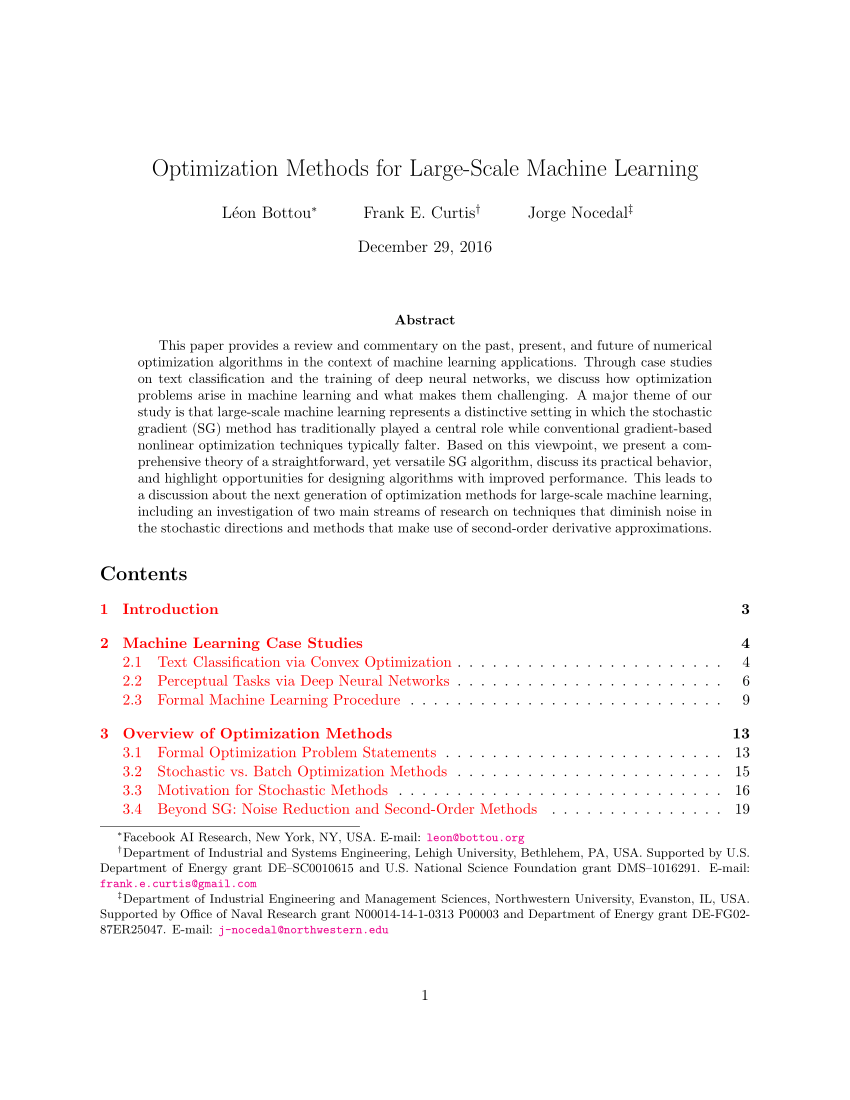

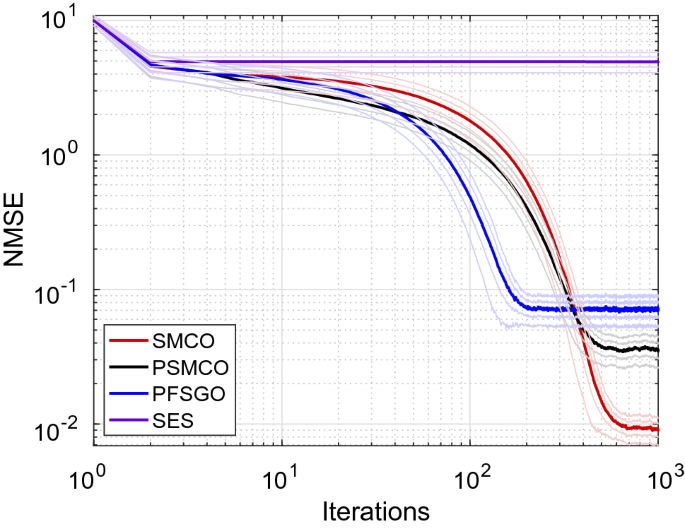

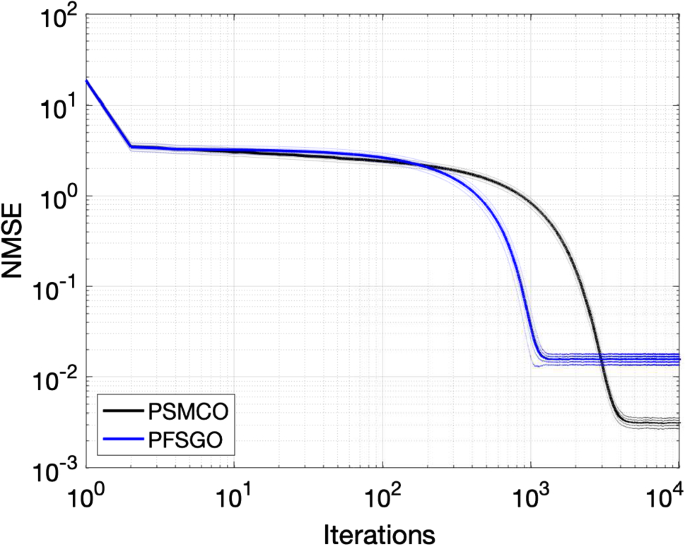

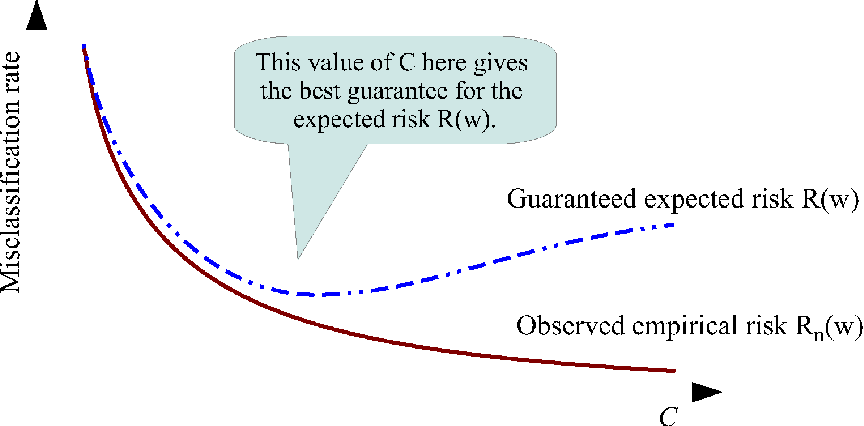

Deep Learning

For a fixed computational budget, stochastic optimization algorithms reach a lower ... [1]: Kingma and Ba

Adam: A Method for Stochastic Optimization - HUJI moodle

Oct 18 2015 · Disadvantage in non-stationary settings: all gradients (recent and old) weighted equally. Nadav Cohen, Adam (Kingma & Ba), October 18, 2015

PbSGD: Powered Stochastic Gradient Descent Methods for - IJCAI

[Kingma and Ba, 2015] are proposed to train DNN more efficiently. Despite ... the methods for stochastic optimization with and without momentum; 2) conduct

Stochastic Optimization for Machine Learning - Shuai Zheng

This survey provides a review and summary on the stochastic optimization algorithms in the ... integrate the stochastic gradients into the alternating direction method of multipliers (ADMM), which ... [40] D. Kingma and J. Ba. Adam: A method

Deep Learning - Optimization - Erwan Scornet

Dedicated optimization algorithms can solve this on a large scale very efficiently [“Adam: A method for stochastic optimization”, Kingma and Ba 2014] General

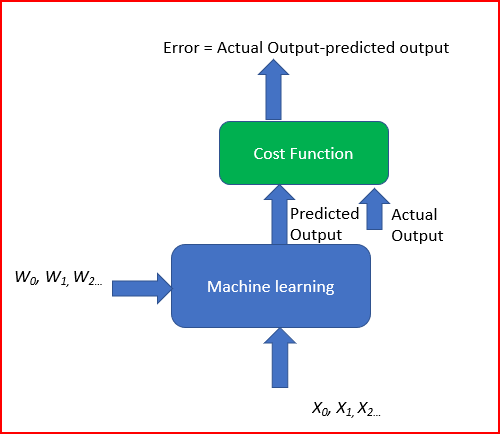

Optimizers

Cost function: How good is your neural network? Then: Stochastic gradient descent. Adam: A Method for Stochastic Optimization (Kingma & Ba, 2015)

Using Statistics to Automate Stochastic Optimization - NIPS

... rate of stochastic gradient methods remains a major roadblock to obtaining good ... 2011; Tieleman and Hinton, 2012; Kingma and Ba, 2014, and other variants),

Effective neural network training with a new weighting - IEEE Xplore

INDEX TERMS Deep learning, Optimization algorithm, Learning rate, Neural ... [23] D. P. Kingma and J. Ba, “Adam: A method for stochastic optimization,”

![Adaptive Gradient Descent for Convex and Non-Convex (PDF)](https://i1.rgstatic.net/publication/328979238_Double_Adaptive_Stochastic_Gradient_Optimization/links/5bee397792851c6b27c25c56/largepreview.png)

![DL Reading Group: Deep Learning and Curved Parameter Space (Scalable trust](https://pdf4pro.com/cache/preview/f/a/e/a/d/a/8/7/thumb-faeada872ba879e5ebd81fd9c002d860.jpg)

![An overview of gradient descent optimization algorithms (PDF)](https://i1.rgstatic.net/publication/342881746_Spatio-Temporal_Stochastic_Optimization_Theory_and_Applications_to_Optimal_Control_and_Co-Design/links/5f170fd845851515ef3bfe62/largepreview.png)

![Optimization Methods for Large-Scale Machine Learning (PDF)](https://raw.githubusercontent.com/jliphard/DeepEvolve/726aaf3dfdc8d6d2c6bc64d3a55e3ab3023b29c7/Images/Optimizer.png)

![Optimization Methods for Large-Scale Machine Learning (PDF)](https://icml.cc/Conferences/2014/icml2014keywords/image/suzuki14.png)