backdoor adversarial attack

On the Trade-off between Adversarial and Backdoor Robustness

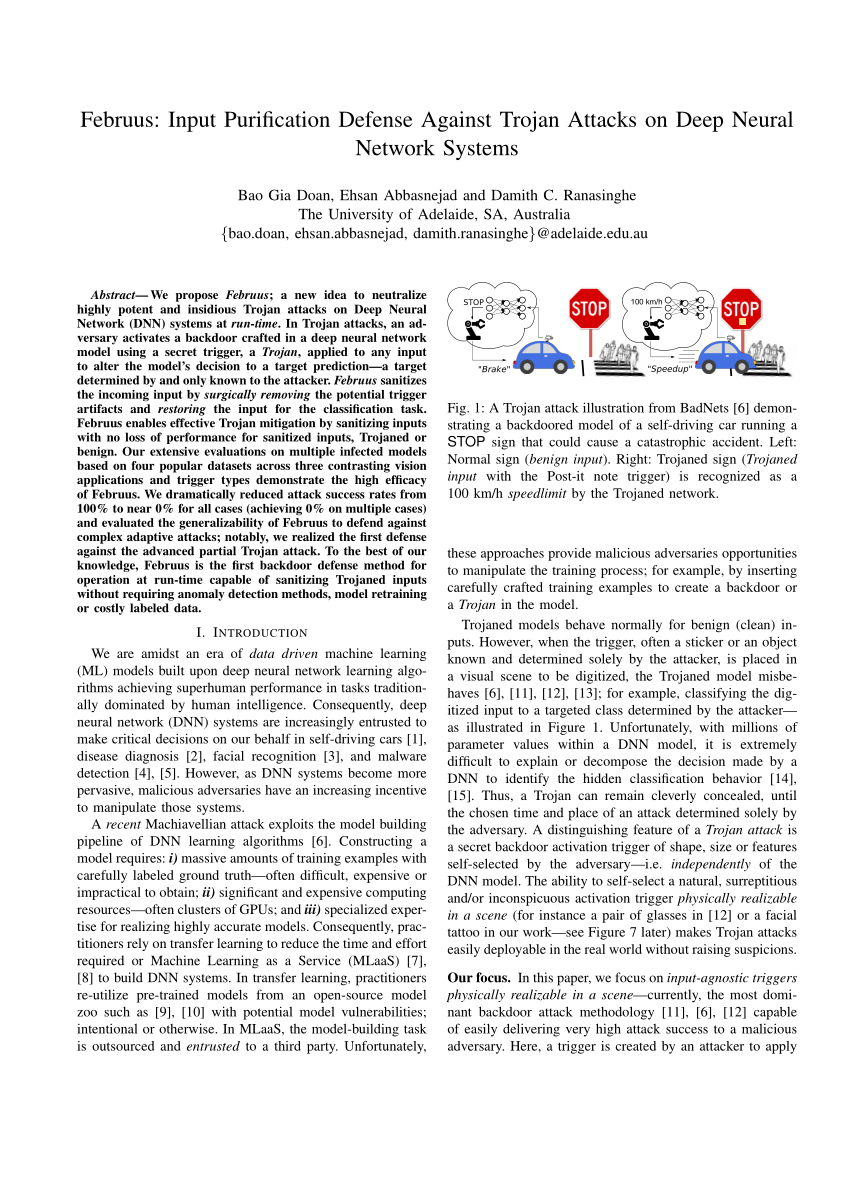

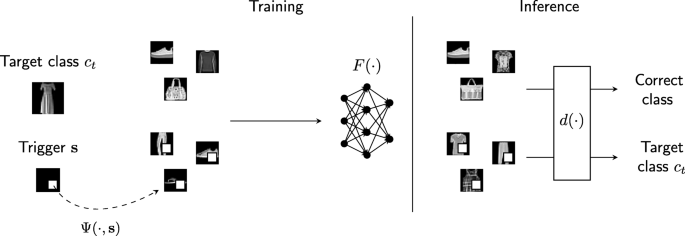

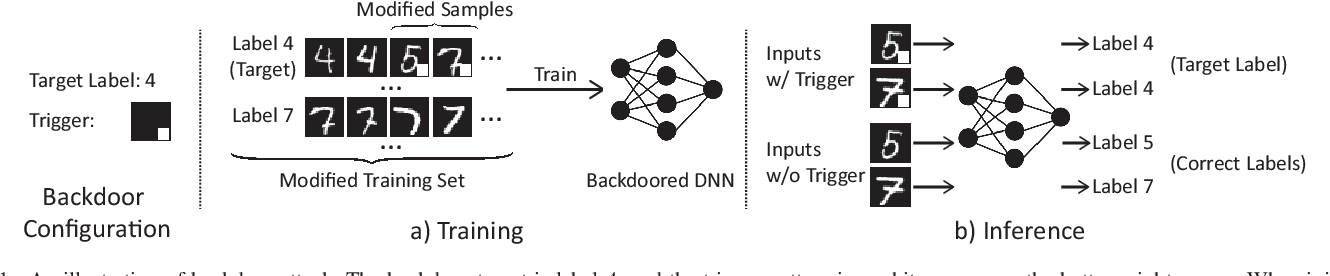

On the other hand, backdoor attacks aim at fooling the model with pre-mediated inputs. An attacker can “poison” training data by adding crafted triggers in some ...

How is a backdoor attack different from an adversarial attack?

An adversarial example can be generated by slightly perturbing the input of a regular example in directions where the output of the model gives the highest loss.
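One common way to realize this kind of perturbation is the fast gradient sign method (FGSM), which is an assumption here since the text does not name a specific algorithm. The sketch below applies it to a toy logistic-regression model; the weights `w`, bias `b`, input `x`, and step size `eps` are hypothetical values chosen only for illustration.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def predict(w, b, x):
    # Probability of class 1 under a logistic-regression model.
    return sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)

def loss(w, b, x, y):
    # Binary cross-entropy for a single example with label y in {0, 1}.
    p = predict(w, b, x)
    return -(y * math.log(p) + (1 - y) * math.log(1 - p))

def sign(v):
    return (v > 0) - (v < 0)

def fgsm(w, b, x, y, eps):
    # For logistic regression, d(loss)/dx = (p - y) * w.
    p = predict(w, b, x)
    grad = [(p - y) * wi for wi in w]
    # Step each feature by eps in the direction that increases the loss.
    return [xi + eps * sign(g) for xi, g in zip(x, grad)]

# Hypothetical model and input, for illustration only.
w = [2.0, -1.0, 0.5]
b = 0.1
x = [0.5, -0.2, 0.3]
y = 1

x_adv = fgsm(w, b, x, y, eps=0.1)
print("clean loss:", loss(w, b, x, y))
print("adversarial loss:", loss(w, b, x_adv, y))
```

Each feature moves by at most `eps`, so the perturbed input stays close to the original while the loss goes up. For a deep network the gradient would come from automatic differentiation rather than the closed form used here.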

On the other hand, backdoor attacks aim at fooling the model with pre-mediated inputs.

What is a backdoor attack in machine learning?

A backdoor attack is when an attacker subtly alters AI models during training, causing unintended behavior under certain triggers.
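A minimal sketch of how such a training-time alteration is often set up through data poisoning: a small trigger patch is stamped onto a fraction of the training images, and those images are relabeled to an attacker-chosen class. The helper names `add_trigger` and `poison_dataset`, the patch shape, and the poisoning rate are all hypothetical, and other backdoor attacks use different triggers or leave the labels untouched.

```python
import random

def add_trigger(image, size=2, value=1.0):
    # Return a copy of a 2-D image (list of rows) with a square patch
    # of the given value stamped into the bottom-right corner.
    patched = [row[:] for row in image]
    for r in range(len(patched) - size, len(patched)):
        for c in range(len(patched[r]) - size, len(patched[r])):
            patched[r][c] = value
    return patched

def poison_dataset(images, labels, target_label, rate, seed=0):
    # Stamp the trigger on a random fraction of samples and relabel them.
    rng = random.Random(seed)
    n_poison = int(len(images) * rate)
    chosen = set(rng.sample(range(len(images)), n_poison))
    out_images, out_labels = [], []
    for i, (img, lab) in enumerate(zip(images, labels)):
        if i in chosen:
            out_images.append(add_trigger(img))
            out_labels.append(target_label)
        else:
            out_images.append([row[:] for row in img])
            out_labels.append(lab)
    return out_images, out_labels, sorted(chosen)

# Hypothetical toy data: ten blank 4x4 images with labels 0, 1, 2.
images = [[[0.0] * 4 for _ in range(4)] for _ in range(10)]
labels = [i % 3 for i in range(10)]

p_images, p_labels, poisoned_idx = poison_dataset(
    images, labels, target_label=2, rate=0.2
)
print("poisoned indices:", poisoned_idx)
```

A model trained on `p_images` and `p_labels` can learn to associate the patch with the target label while behaving normally on inputs without the patch.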

This form of attack is particularly challenging because it remains hidden within the model's learning mechanism, making detection difficult.

What is a backdoor attack?

A backdoor attack is a clandestine method of sidestepping normal authentication procedures to gain unauthorized access to a system.

Typically, executing a backdoor attack involves exploiting system weaknesses or installing malicious software that creates an entry point for the attacker.

A backdoor is a malware type that negates normal authentication procedures to access a system.

As a result, remote access is granted to resources within an application, such as databases and file servers, giving perpetrators the ability to remotely issue system commands and update malware.

|

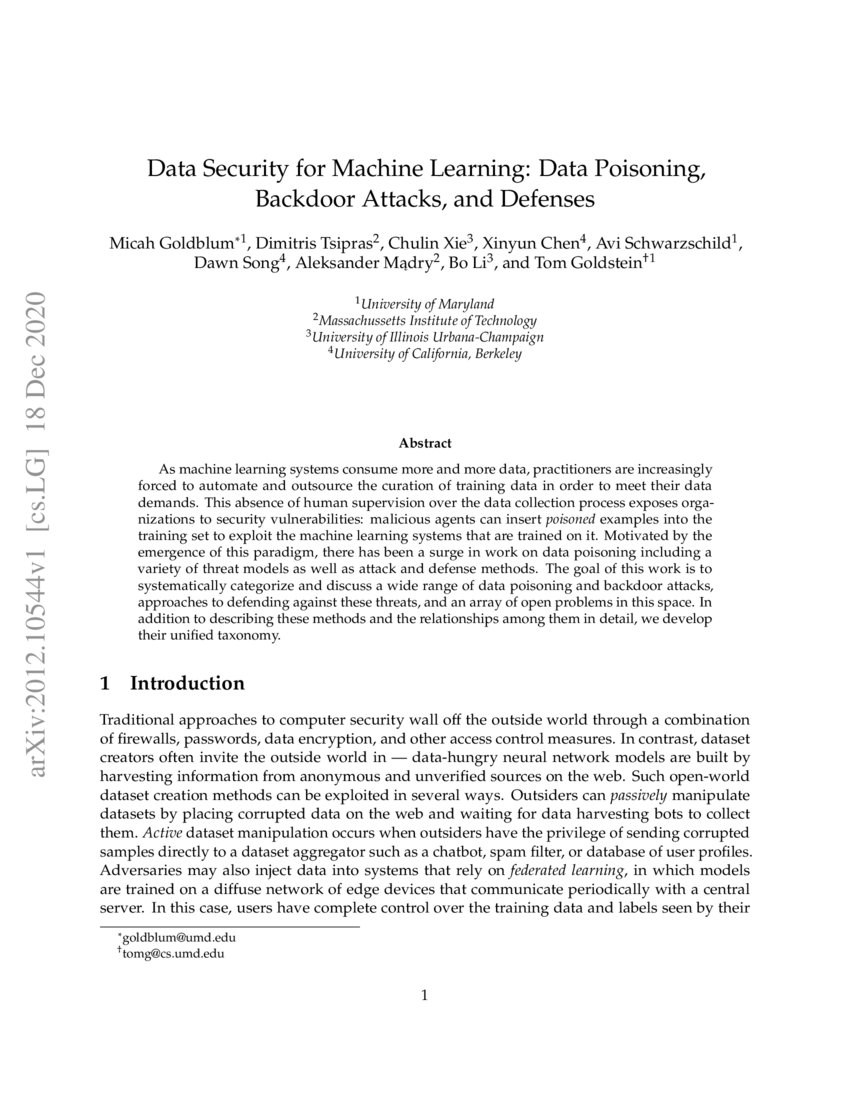

Hidden Trigger Backdoor Attacks

With the success of deep learning algorithms in various domains, studying adversarial attacks to secure deep models in real world applications has become an ...

On the Trade-off between Adversarial and Backdoor Robustness

Deep neural networks are shown to be susceptible to both adversarial attacks and backdoor attacks. Although many defenses against an individual type of the ...

DeHiB: Deep Hidden Backdoor Attack on Semi-supervised Learning

secure SSL under adversarial attack scenarios. Recently the security of deep learning |

|

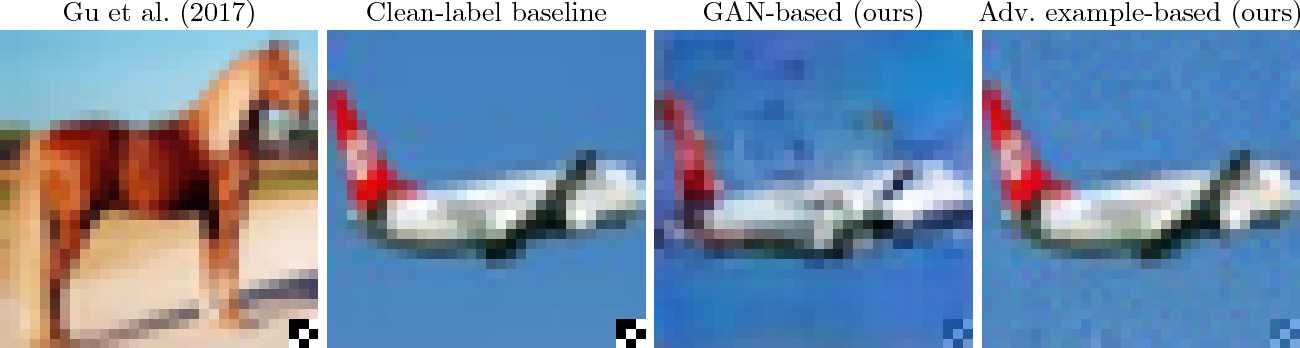

Mind the Style of Text! Adversarial and Backdoor Attacks Based on

7 Nov 2021 Adversarial attacks and backdoor attacks are two common security threats that hang over deep learning. Both of them harness task- ...

AdvDoor: Adversarial Backdoor Attack of Deep Learning System

11 Jul 2021 Quan Zhang, Yifeng Ding ...

Invisible Backdoor Attacks on Deep Neural Networks via

We introduce two novel definitions of invisibility for human perception; one is conceptualized by the Perceptual Adversarial Similarity Score (PASS) [1] and the ...

Targeted Forgetting and False Memory Formation in Continual

17 Feb 2020 Adversarial Backdoor Attacks. Muhammad Umer ... neural networks (ANNs) to adversarial false memory ... adversarial backdoor poisoning attacks.

A Synergetic Attack against Neural Network Classifiers combining

3 Sep 2021 Although we have one-sided defenses against adversarial perturbation or Trojan backdoor, these defenses may give a false sense of security to ...

Exploring adversarial attacks against malware classifiers in the

3 Mar 2021 We research the vulnerability of ML malware classifications to backdoor poisoning attack for the first time, concentrating explicitly on ...

Targeted Backdoor Attacks on Deep Learning Systems Using Data

15 Dec 2017 ... that a backdoor adversary can inject only around 50 poisoning samples while achieving an attack success rate of above 90%. We ...

Detecting Backdoor Attacks on Deep Neural - CEUR-WS.org

Thus, a backdoor attack enables the adversary to choose whatever perturbation is most convenient for triggering misclassifications (e.g., placing a sticker on a ...

Identifying and Mitigating Backdoor Attacks in Neural Networks

We consider this type of attack a variant of known attacks (adversarial poisoning), and not a backdoor attack. We define a DNN backdoor to be a hidden pattern ...

Neural Backdoors in NLP - Stanford University

An adversary's goal in a backdoor attack is to create a backdoored model that is indistinguishable from a normal model on natural inputs, but has targeted outputs ...

A Natural Backdoor Attack on Deep Neural Networks - ECVA

... at different stages of the development pipeline: adversarial examples crafted at the test stage, and data poisoning attacks and backdoor attacks crafted at ...
