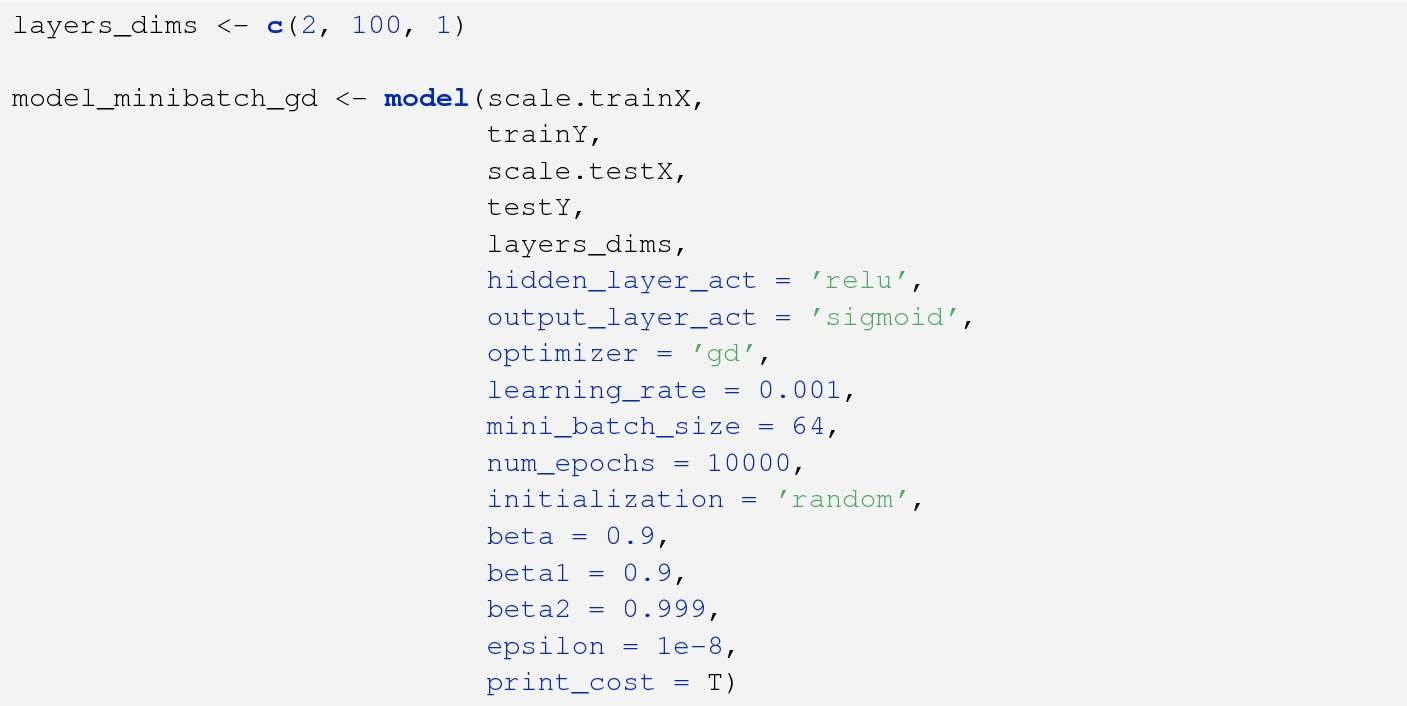

batch size vs learning rate

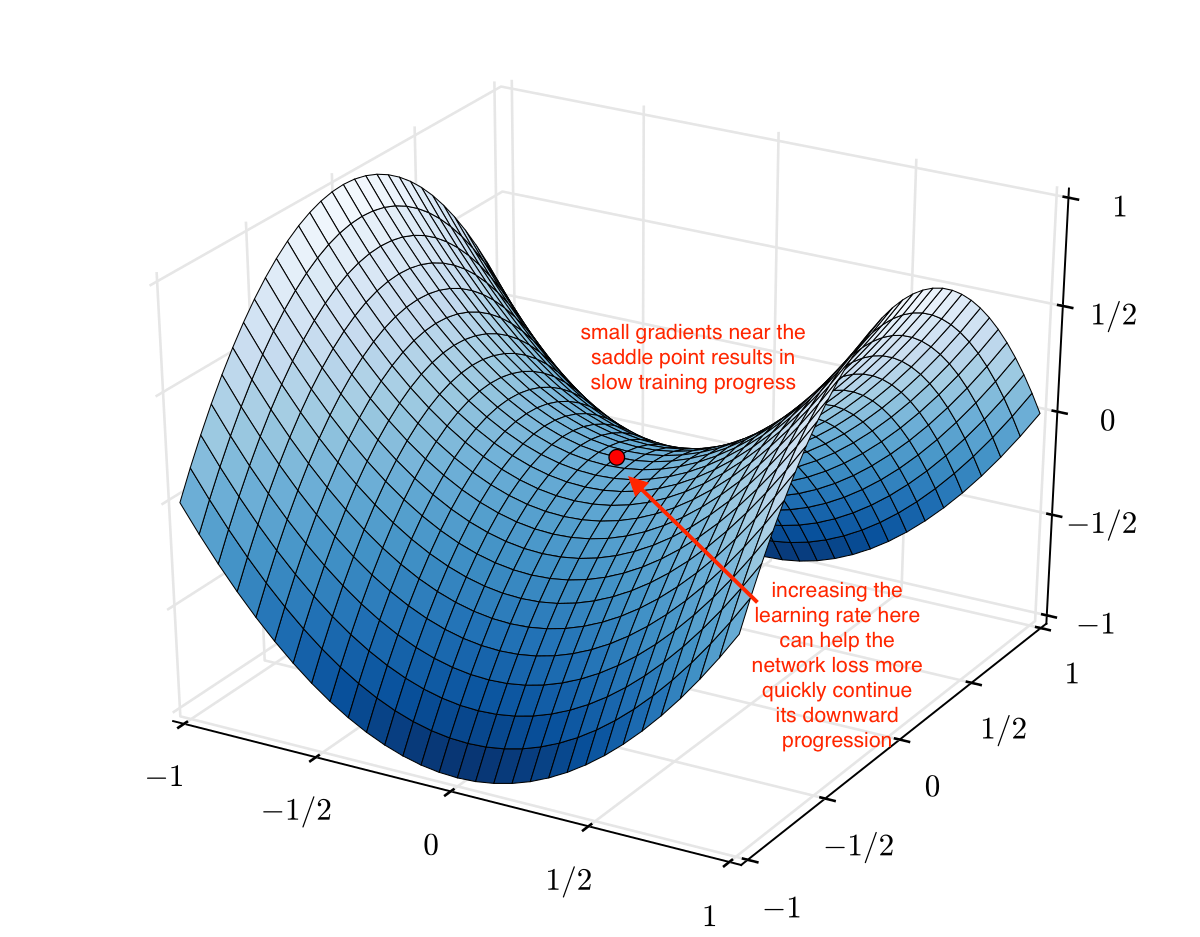

Why do we increase batch size during training?

Instead of decaying the learning rate, we increase the batch size during training. This strategy achieves near-identical model performance on the test set with the same number of training epochs but significantly fewer parameter updates.
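A minimal sketch of the idea, assuming a step schedule: wherever a conventional schedule would divide the learning rate by some factor, the batch size is multiplied by that factor instead. The dataset size, epoch count, milestones, and factor below are illustrative values, not taken from the source; the sketch only counts parameter updates and does not train anything.

```python
import math

def updates_per_epoch(dataset_size, batch_size):
    """Number of parameter updates needed to see the whole dataset once."""
    return math.ceil(dataset_size / batch_size)

def batch_size_at(epoch, base_batch_size, milestones, factor):
    """Batch size under a step schedule: multiply by `factor` at each milestone."""
    steps_passed = sum(1 for m in milestones if epoch >= m)
    return base_batch_size * factor ** steps_passed

def total_updates(dataset_size, epochs, base_batch_size, milestones, factor):
    """Total parameter updates over the whole run."""
    return sum(
        updates_per_epoch(
            dataset_size,
            batch_size_at(epoch, base_batch_size, milestones, factor),
        )
        for epoch in range(epochs)
    )

# Hypothetical setup: 50,000 examples, 90 epochs, milestones at epochs 30 and 60.
DATASET_SIZE, EPOCHS, MILESTONES = 50_000, 90, (30, 60)

# Baseline: constant batch size (the learning rate would be decayed instead).
fixed = total_updates(DATASET_SIZE, EPOCHS, 128, MILESTONES, factor=1)
# Alternative: learning rate held constant, batch size grows 5x at each milestone.
growing = total_updates(DATASET_SIZE, EPOCHS, 128, MILESTONES, factor=5)

print("updates with fixed batch size:  ", fixed)
print("updates with growing batch size:", growing)
```

Both runs cover the same number of epochs; only the number of updates differs, which is the saving the passage describes.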

Should batch size be multiplied by K?

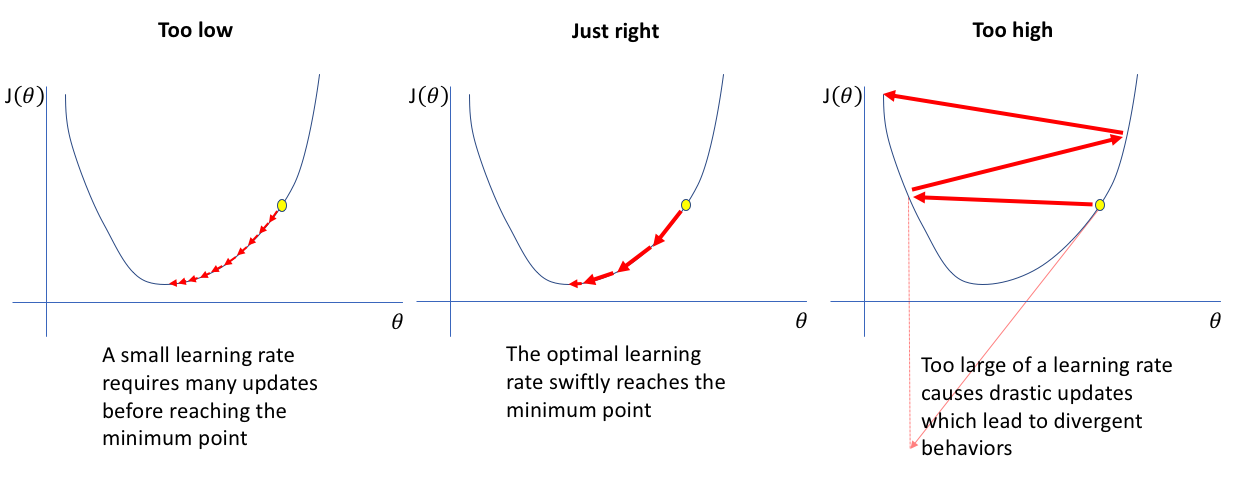

Theory suggests that when multiplying the batch size by k, one should multiply the learning rate by sqrt(k) to keep the variance in the gradient expectation constant. See page 5 of A. Krizhevsky.
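As a small illustration of the square-root rule quoted above, the helper below rescales a learning rate when the batch size is multiplied by k. The function name and the baseline learning rate of 0.01 are hypothetical, chosen only for the example.

```python
import math

def sqrt_scaled_lr(base_lr, k):
    """Learning rate after multiplying the batch size by k, under the sqrt(k) rule."""
    if k <= 0:
        raise ValueError("k must be positive")
    return base_lr * math.sqrt(k)

# Hypothetical baseline: learning rate 0.01 at some reference batch size.
for k in (1, 4, 16):
    print(f"batch size x{k}: lr = {sqrt_scaled_lr(0.01, k):.4f}")
```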

Why is a small batch better than a large batch?

To conclude, and to answer your question: a smaller mini-batch size (but not too small) usually leads not only to fewer iterations of the training algorithm than a large batch size, but also to higher accuracy overall, i.e., a neural network that performs better in the same amount of training time or less.

Does batch size affect learning rate?

For reference, I was discussing this with someone, and it was said that when the batch size is increased, the learning rate should be decreased to some extent. My understanding is that when I increase the batch size, the computed average gradient will be less noisy, so I should either keep the same learning rate or increase it.
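The "less noisy" intuition can be checked with a toy simulation. The sketch below assumes per-example gradients are independent draws from a Gaussian (true value 1.0, standard deviation 2.0, both made up for illustration) and estimates how much the mini-batch average fluctuates at two batch sizes. For independent samples the variance of the average is expected to shrink roughly in proportion to 1/batch size.

```python
import random
import statistics

def batch_mean_variance(batch_size, trials=2000, seed=0):
    """Estimate the variance of a mini-batch mean of noisy per-example gradients."""
    rng = random.Random(seed)
    means = []
    for _ in range(trials):
        # Per-example "gradients": true value 1.0 plus Gaussian noise (std 2.0).
        batch = [rng.gauss(1.0, 2.0) for _ in range(batch_size)]
        means.append(sum(batch) / batch_size)
    return statistics.variance(means)

var_small = batch_mean_variance(16, seed=0)
var_large = batch_mean_variance(64, seed=1)

print("variance of batch mean, batch size 16:", var_small)
print("variance of batch mean, batch size 64:", var_large)
print("ratio (expected to be close to 64 / 16 = 4):", var_small / var_large)
```

Real gradients are neither Gaussian nor independent of the parameters, so this only illustrates the averaging effect, not how the learning rate should be chosen.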

DON'T DECAY THE LEARNING RATE, INCREASE THE BATCH SIZE

(2017) exploited a linear scaling rule between batch size and learning rate to train ResNet-50 on ImageNet in one hour with batches of 8192 images. These ...
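A linear scaling rule of this kind can be sketched as follows: the learning rate grows in direct proportion to the batch size. The reference point used here (learning rate 0.1 at batch size 256) is an assumption for illustration and is not stated in the snippet above.

```python
def linear_scaled_lr(base_lr, base_batch_size, batch_size):
    """Scale the learning rate in proportion to the batch size."""
    if base_batch_size <= 0 or batch_size <= 0:
        raise ValueError("batch sizes must be positive")
    return base_lr * batch_size / base_batch_size

# Assumed reference point (not from the snippet): lr 0.1 at batch size 256.
for batch_size in (256, 1024, 8192):
    print(batch_size, linear_scaled_lr(0.1, 256, batch_size))
```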

An Empirical Model of Large-Batch Training

14-Dec-2018 learning: in reinforcement learning batch sizes of over a million ... period or an unusual learning rate schedule)

AdaBatch: Adaptive Batch Sizes for Training Deep Neural Networks

14-Feb-2018 requires careful choice of both learning rate and batch size. ... faster running times compared with training using fixed batch sizes.

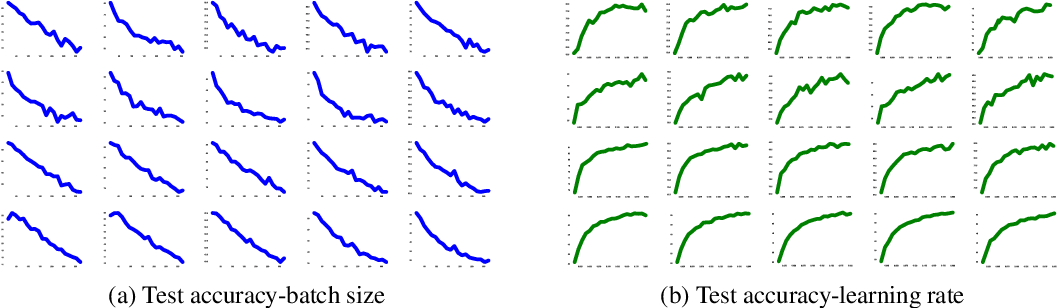

Control Batch Size and Learning Rate to Generalize Well

This paper reports both theoretical and empirical evidence for a training strategy in which the ratio of batch size to learning rate should not be too large ...

On Adversarial Robustness of Small vs Large Batch Training

current workhorse for training neural network models. Hyperparameters like learning rate, batch size and momentum play an important role in SGD for ...

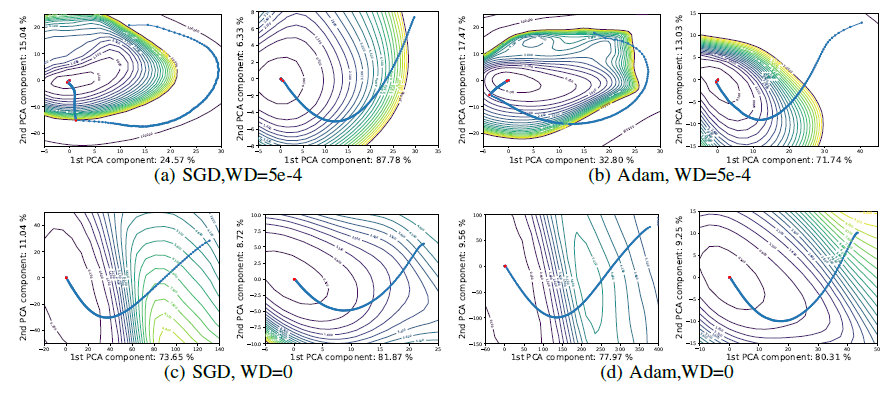

Three Factors Influencing Minima in SGD

13-Sept-2018 the ratio of learning rate to batch size is a key determinant of SGD ... which behave similarly in terms of predictions compared with wide ...

CSC 2541: Neural Net Training Dynamics - Lecture 7 - Stochasticity

In stochastic training the learning rate also influences the ... The mini-batch size S is a hyperparameter that needs to be set. Large batches: converge in ...

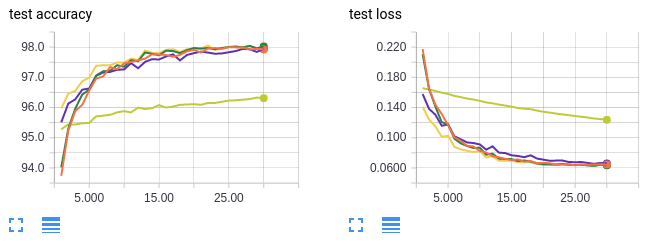

ADABATCH: ADAPTIVE BATCH SIZES FOR TRAINING DEEP

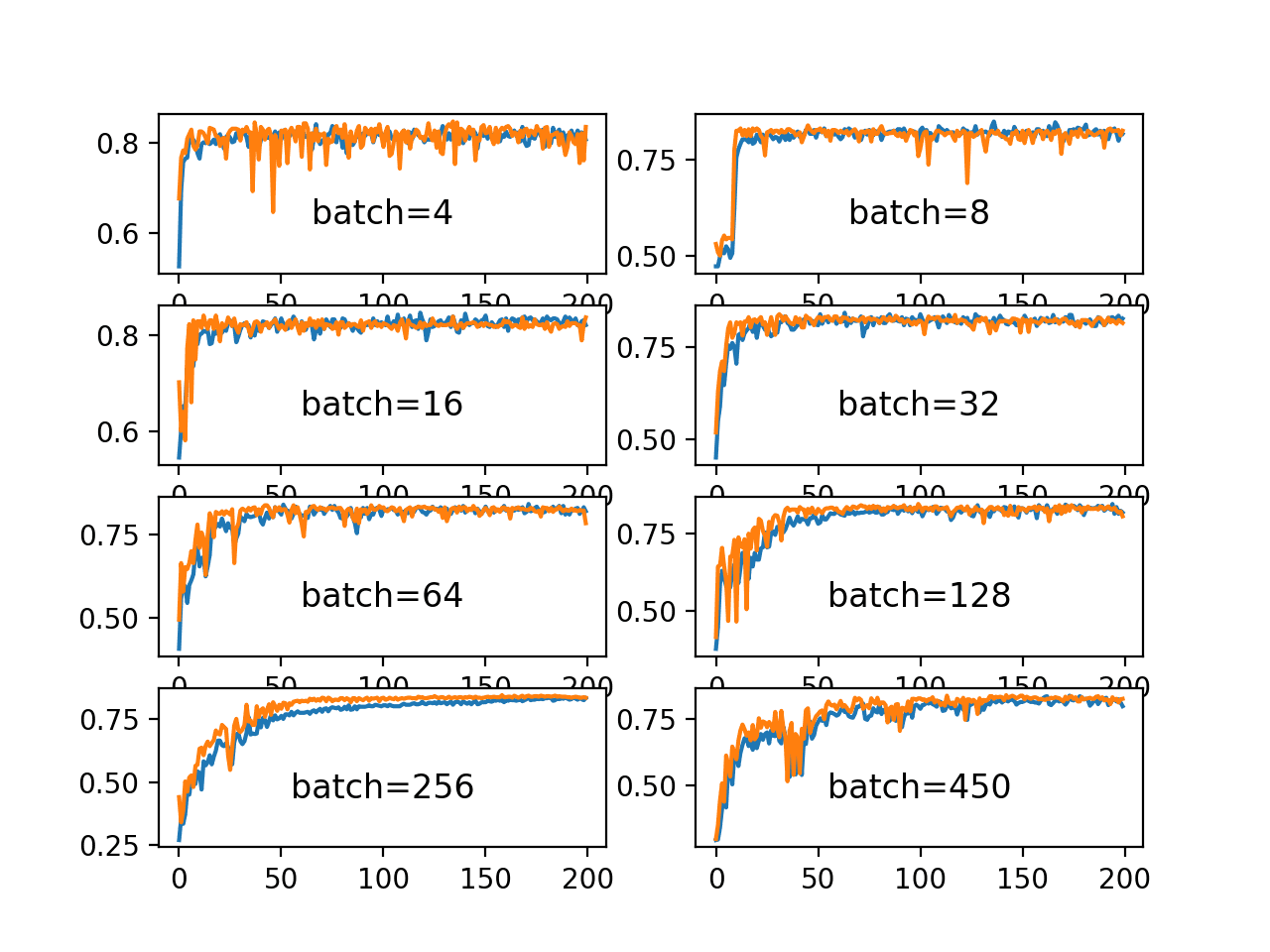

red) vs. fixed batch sizes (in blue) where “LR” uses learning rate warmup for the first 5 epochs. 3 EXPERIMENTAL RESULTS. We test our adaptive batch size ...

Analyzing Performance of Deep Learning Techniques for Web

hidden units, number of layers ...

Dynamically Adjusting Transformer Batch Size by Monitoring

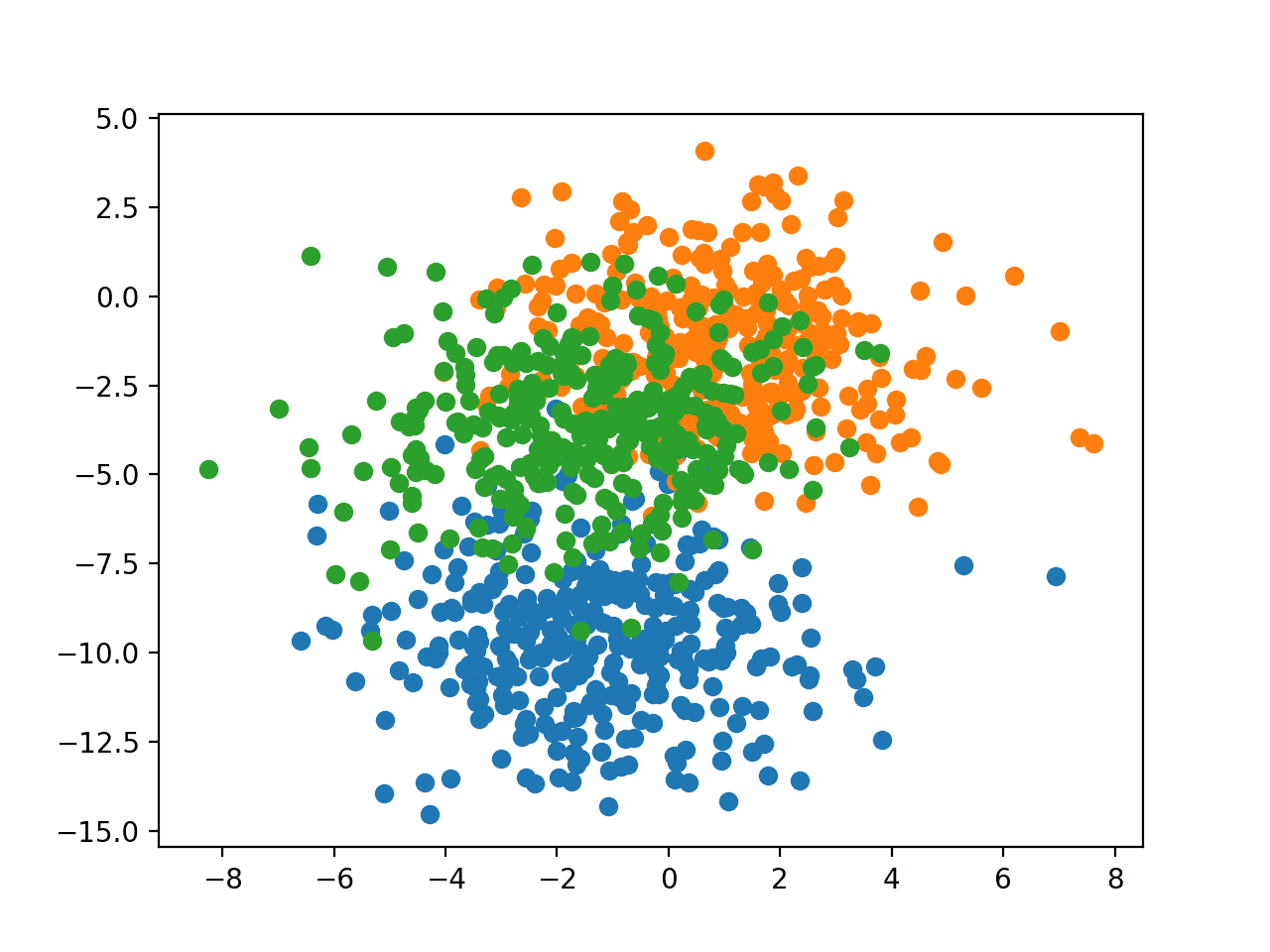

Control Batch Size and Learning Rate to Generalize Well - NeurIPS

Each point represents a model. In total, 1,600 points are plotted. ... has a positive correlation with the ratio of batch size to learning rate, which suggests a negative correlation between the generalization ability of neural networks and the ratio. This result builds the theoretical foundation of the training strategy ...

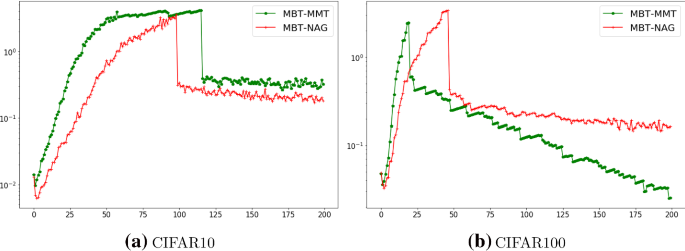

Which Algorithmic Choices Matter at Which Batch Sizes? - NIPS

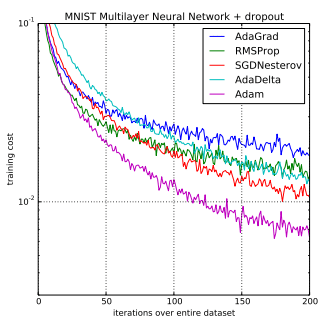

... much larger critical batch sizes than stochastic gradient descent with optimal learning rates and large batch training, making it a useful tool to generate ...

Training ImageNet in 1 Hour - Facebook Research

batch size and develop a new warmup scheme that overcomes optimization ... batch $\cup_j B_j$ of size $kn$ and learning rate $\hat{\eta}$ yields: $\hat{w}_{t+1} = w_t - \hat{\eta} \frac{1}{kn} \sum_j$ ...
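To make the idea of learning rate warmup concrete, here is a minimal sketch of a linear ramp over the first few epochs. The linear shape, the 5-epoch length, and the start and target values are assumptions for illustration; the snippet above does not specify the scheme the paper develops.

```python
def warmup_lr(epoch, start_lr, target_lr, warmup_epochs=5):
    """Linearly ramp from start_lr to target_lr over the first warmup_epochs epochs."""
    if warmup_epochs <= 0 or epoch >= warmup_epochs:
        return target_lr
    return start_lr + (target_lr - start_lr) * epoch / warmup_epochs

# Hypothetical values: ramp from 0.1 up to a large-batch learning rate of 3.2.
schedule = [warmup_lr(epoch, 0.1, 3.2) for epoch in range(8)]
for epoch, lr in enumerate(schedule):
    print(f"epoch {epoch}: lr = {lr:.3f}")
```

Starting from a small learning rate and increasing it gradually is meant to avoid instability early in training, when a large learning rate is applied to freshly initialized weights.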

Train Deep Neural Networks with Small Batch Sizes - IJCAI

... to better training performance. We prove that our algorithm achieves a convergence rate comparable to vanilla SGD even with small batch size. Our framework is ...

CROSSBOW: Scaling Deep Learning with Small Batch Sizes on

To fully utilise all GPUs, systems must increase the batch size, which hinders statistical efficiency. Users tune hyper-parameters such as the learning rate to ...

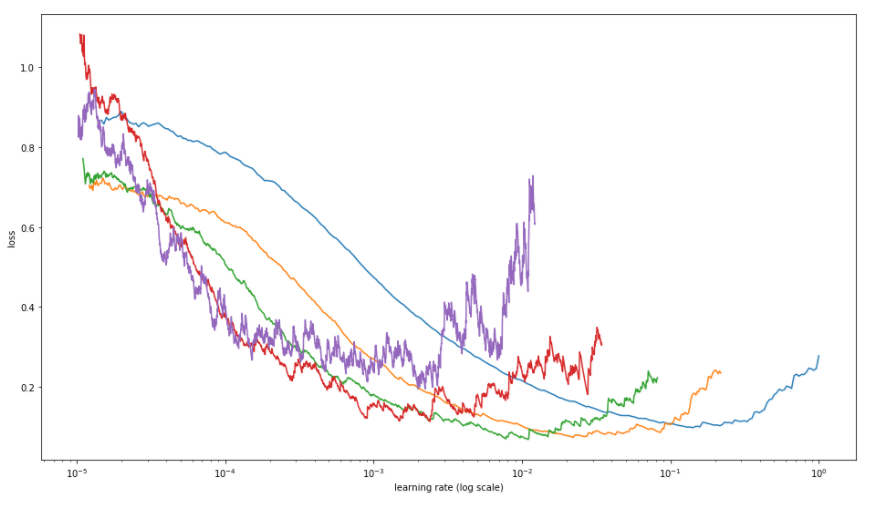

On the Generalization Benefit of Noise in Stochastic Gradient Descent

performance at a range of learning rates for two batch sizes, 64 and 1024 (the ... When the batch size is large, the optimal learning rate is large, and SGD with ...

On the Generalization Benefit of Noise in Stochastic Gradient Descent

(2017) found that learning rate warmup enables us to scale training efficiently to larger batch sizes, and Shallue et al. (2018) emphasized that the optimal scaling ...

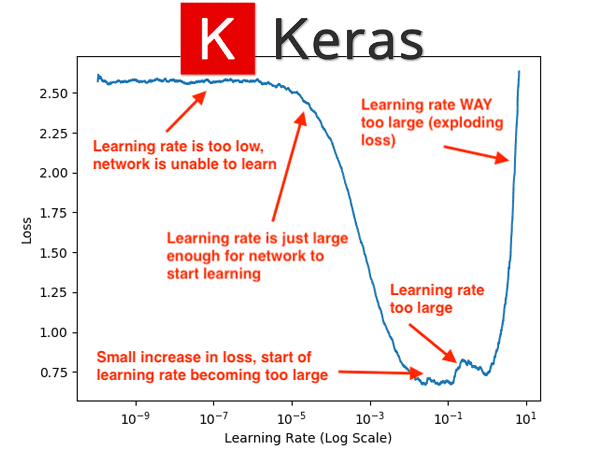

![A disciplined approach to neural network hyper-parameters](https://i1.rgstatic.net/publication/3907199_The_need_for_small_learning_rates_on_large_problems/links/54a6ddab0cf267bdb909f9e5/largepreview.png)

![A disciplined approach to neural network hyper-parameters](https://www.jeremyjordan.me/content/images/2018/02/Screen-Shot-2018-02-25-at-8.44.49-PM.png)

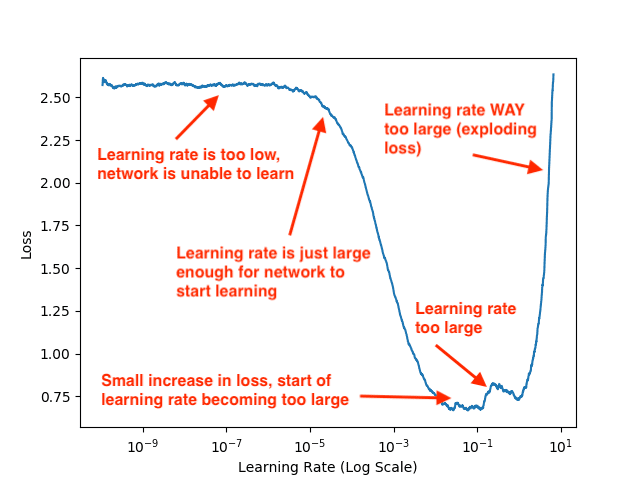

![A disciplined approach to neural network hyper-parameters](https://miguel-data-sc.github.io/img/Captura2.PNG)

![Control Batch Size and Learning Rate to Generalize Well](https://petuum.com/wp-content/uploads/2020/09/Expected-Training-Time.png)