bias and variance

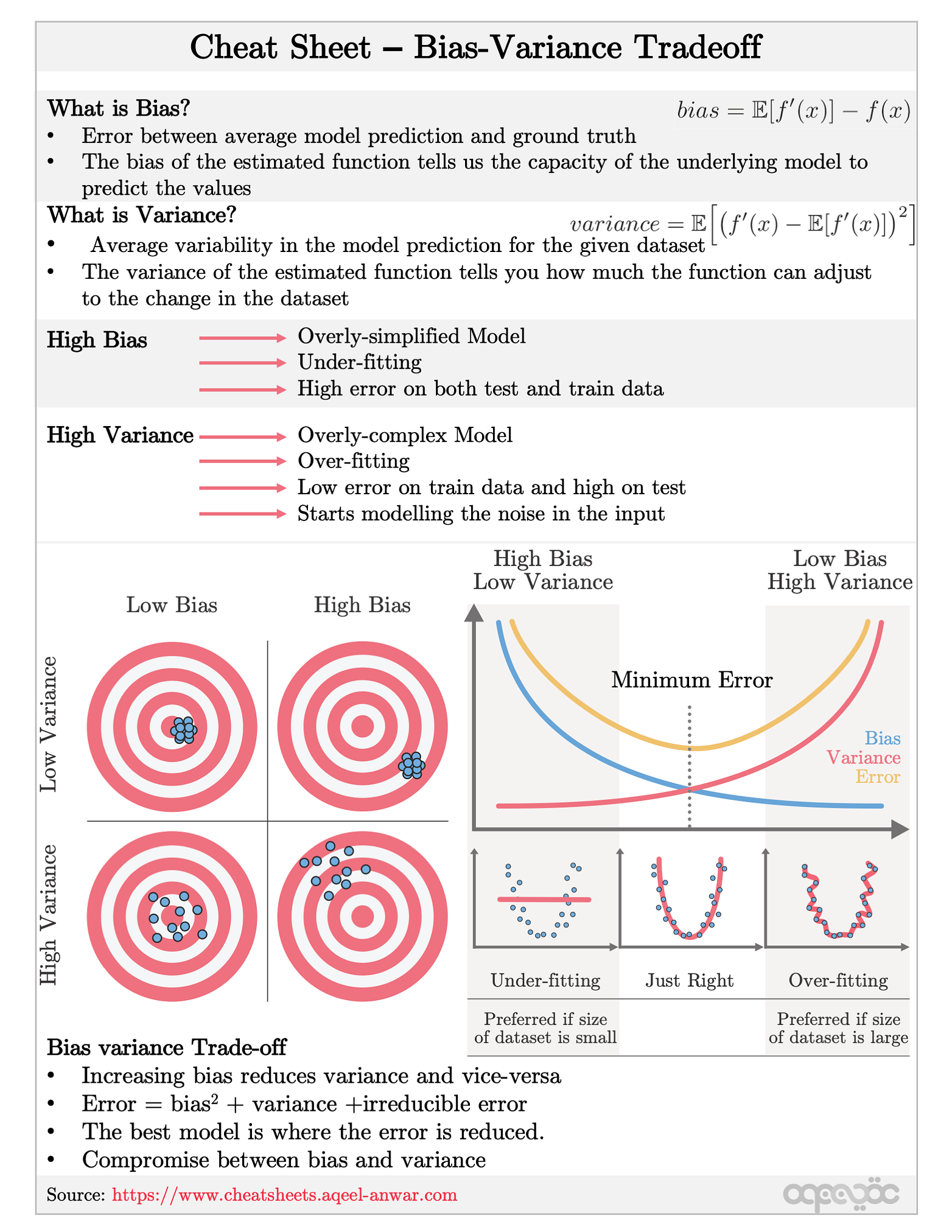

What is bias variance tradeoff?

The bias-variance tradeoff is the balance between a model's bias (error from overly simple assumptions) and its variance (sensitivity to the particular training set it saw). Managing this balance keeps the model's total error as low as possible. A well-tuned model is sensitive to the patterns in the data but can still generalize to new data. Both bias and variance should be kept low to avoid underfitting and overfitting.
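For squared loss, the tradeoff can be written as a worked equation. The notation here is an assumption for illustration, not taken from the text above: data are generated as $y = f(x) + \varepsilon$ with $\mathbb{E}[\varepsilon] = 0$ and $\mathrm{Var}(\varepsilon) = \sigma^2$, and $\hat{f}$ is the model fitted on a random training set, with the expectation taken over training sets and noise.

$$
\mathbb{E}\big[(y - \hat{f}(x))^2\big]
= \underbrace{\big(\mathbb{E}[\hat{f}(x)] - f(x)\big)^2}_{\text{bias}^2}
+ \underbrace{\mathbb{E}\big[(\hat{f}(x) - \mathbb{E}[\hat{f}(x)])^2\big]}_{\text{variance}}
+ \underbrace{\sigma^2}_{\text{noise}}
$$

The noise term does not depend on the model, so only the first two terms can be traded against each other.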

What are bias and variance in machine learning?

You have likely heard about bias and variance before. They are two fundamental terms in machine learning and are often used to explain overfitting and underfitting. If you're working with machine learning methods, it's crucial to understand these concepts well so that you can make sound decisions in your own projects.

Why is it important to understand bias and variance?

A model with an optimal balance of bias and variance neither overfits nor underfits. Understanding bias and variance is therefore critical for understanding the behavior of prediction models. Whenever we discuss model prediction, it's important to understand the two sources of prediction error: bias and variance.

What is the difference between low bias and high bias?

Low Bias: The model makes fewer assumptions about the form of the target function, so it can match the training dataset closely.

High Bias: The model makes more assumptions about the form of the target function, so it does not match the training dataset closely.
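The difference between a high-bias and a low-bias model can be sketched with a small simulation. Everything here is an assumption chosen for illustration: the sine target, the noise level, and the polynomial degrees 1 and 9 stand in for "more assumptions" and "fewer assumptions". Many training sets are drawn, each model is refit, and squared bias and variance are estimated from the spread of the predictions.

```python
import numpy as np

def true_fn(x):
    # Hypothetical target function, chosen only for illustration.
    return np.sin(2 * np.pi * x)

def bias_variance(degree, n_train=30, n_repeats=200, noise_sd=0.3, seed=0):
    """Estimate squared bias and variance of a polynomial fit of the given degree."""
    rng = np.random.default_rng(seed)
    x_train = np.linspace(0.0, 1.0, n_train)   # fixed inputs; only the noise is resampled
    x_test = np.linspace(0.05, 0.95, 50)
    preds = np.empty((n_repeats, x_test.size))
    for i in range(n_repeats):
        y_train = true_fn(x_train) + rng.normal(0.0, noise_sd, n_train)
        coefs = np.polyfit(x_train, y_train, degree)
        preds[i] = np.polyval(coefs, x_test)
    mean_pred = preds.mean(axis=0)
    bias_sq = np.mean((mean_pred - true_fn(x_test)) ** 2)  # average squared bias
    variance = np.mean(preds.var(axis=0))                  # average prediction variance
    return bias_sq, variance

simple_bias, simple_var = bias_variance(degree=1)      # strong assumptions: a straight line
flexible_bias, flexible_var = bias_variance(degree=9)  # weak assumptions: a flexible curve

print(f"degree 1: bias^2={simple_bias:.4f}, variance={simple_var:.4f}")
print(f"degree 9: bias^2={flexible_bias:.4f}, variance={flexible_var:.4f}")
```

In this setup the straight line cannot follow the sine curve, so its squared bias dominates, while the degree-9 fit tracks the curve on average but varies more from one training set to the next.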


A Unified Bias-Variance Decomposition and its Applications

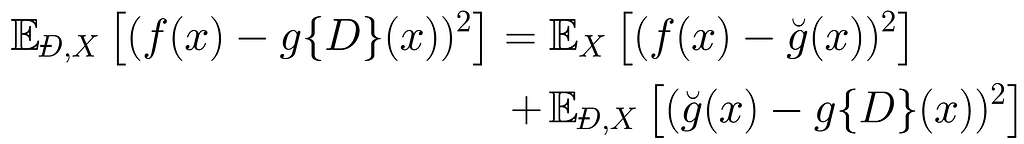

Bias-variance decompositions decompose the expected loss into three terms: bias, variance, and noise. A standard such decomposition exists for squared loss

Bias and Variance in Value Function Estimation

Markov Processes, Reinforcement Learning

A Bias and Variance Analysis for Multi-Step-Ahead Time Series

A notable theoretical study on bias and variance analysis for multi-step forecasting for linear models was presented by [35]. He proved that standard model.

Rethinking Bias-Variance Trade-off for Generalization of Neural

Dec. 8, 2020. ...tion for this by measuring the bias and variance of neural networks: while the bias is monotonically decreasing as in the classical theory ...

ECE595 / STAT598: Machine Learning I Lecture 29 Bias and Variance

From VC Analysis to Bias-Variance. Bias and variance is another decomposition. ... In bias-variance analysis we define the out-sample error as

Bias and Variance in Machine Learning

A short discussion about classification. • Parameters that influence bias and variance. • Variance reduction techniques. • Decision tree induction

Bias and variance of the Bayesian-mean decoder

Moreover, we recover Wei and Stocker's “law of human perception” [1]

Measures of Interviewer Bias and Variance

Introduction. One of the possible sources of error in survey statistics is the interviewer who collects the data.

Parameter estimation Bias & Variance

Bias and variance (1). Bias and variance (2). Bias-Variance in Regression, which decomposes into “squared bias” + “variance” + “noise”:

Neural Networks and the Bias/Variance Dilemma

Stuart Geman, Division of Applied Mathematics, Brown University

Bias-Variance in Machine Learning

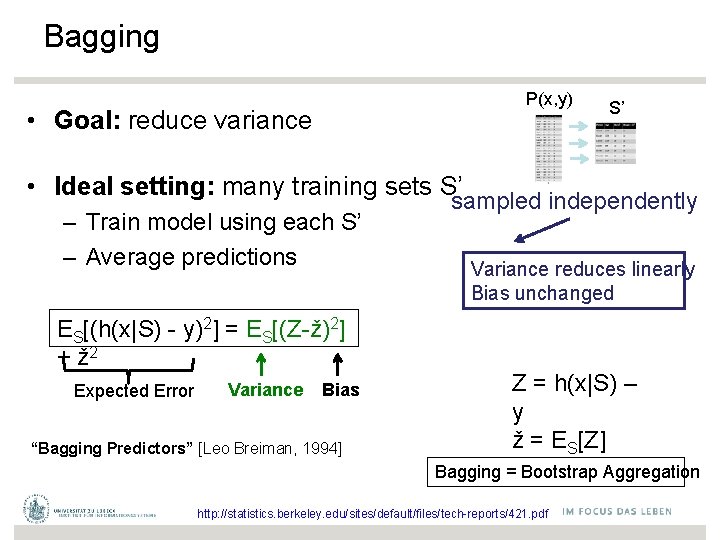

– Small bias/high variance: many features, less regularization, unpruned trees, small-k k-NN. Bias-Variance Decomposition: Classification

Bias-Variance Analysis - CS229

The Bias and Variance are, as before, just the (centered) first and second moments of $\hat{f}_n$ (skipping $x^*$ in the notation). Bias of $\hat{f}_n$ at input $x^*$ is defined as E[ $\hat{f}_n$ −

Bias-Variance Decomposition

Bias-Variance Decomposition for Classification. Bias-Variance Analysis of Learning Algorithms. Effect of Bagging on Bias and Variance. Effect of Boosting on

Bias and Variance of the Estimator

the parameter θ; how good is an estimator d(X) as an estimate for θ? [Figure labels: variance, bias squared, size N, y(x; D)]

Bias and Variance in Machine Learning

Usually, the bias is a decreasing function of the complexity, while variance is an increasing function of the complexity. Complexity of the Bayes model: – At fixed

Bias-Variance - CEDAR

Model Complexity in Linear Regression. 2. Point estimate: Bias-Variance in Statistics. 3. Bias-Variance in Regression – Choosing λ in maximum likelihood/least

Bias-Variance Tradeoff and Model Selection

Bias-Variance Tradeoff. Regression: Excess Risk = variance + bias^2. Notice: Optimal predictor does not have zero error. [Figure labels: variance, bias^2, noise variance]

Parameter estimation, Bias & Variance

Bias-Variance in Regression ▫ True function is y = f(x) + ε, where ε ~ N(0, σ²) ▫ Given a set of examples X = {(x_i, y_i)} we fit a hypothesis h(x) to X to