Audio Adversarial Examples: Targeted Attacks on Speech-to-Text

Can audio adversarial attacks fool state-of-the-art speech-to-text systems?

The feasibility of this attack opens a new domain for studying machine and human perception of speech: the adversarial examples fool state-of-the-art speech-to-text systems, yet humans can still consistently pick out the speech.

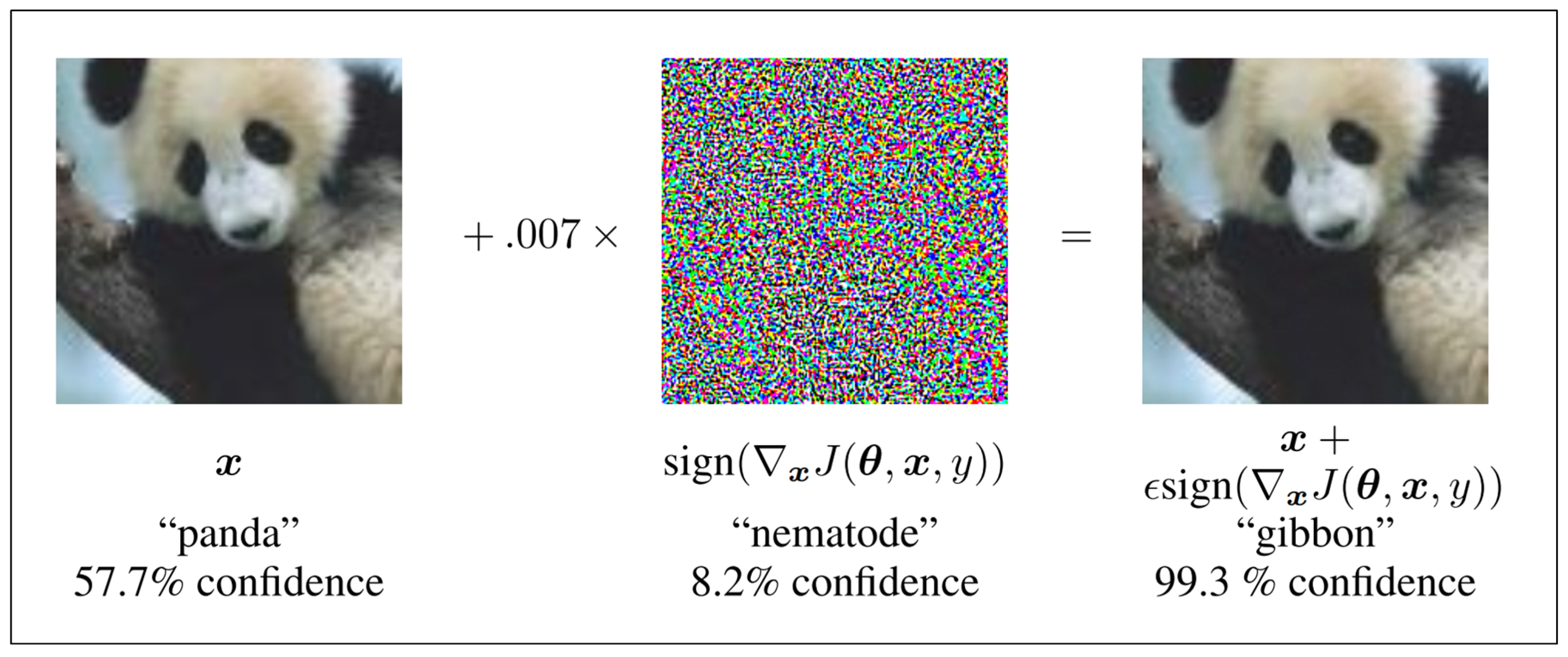

What are examples of adversarial attacks?

Another example of an adversarial attack is the deepfake. As reported by the Financial Times (paywall), AI-powered deepfakes are already being used in everyday attacks such as fraud, as well as to manipulate videos. Attackers also manipulate AI to carry out more convincing and more damaging social-engineering attacks.

What is the weighted-sampling audio adversarial example attack?

It is the method proposed in "Towards Weighted-Sampling Audio Adversarial Example Attack". According to the authors' experiments, it is the first method in the field to generate audio adversarial examples with low noise and high robustness in a matter of minutes.

Are targeted audio adversarial examples based on automatic speech recognition?

Abstract: We construct targeted audio adversarial examples on automatic speech recognition. Given any audio waveform, we can produce another that is over 99.9% similar, but transcribes as any phrase we choose (recognizing up to 50 characters per second of audio).

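$$\Pr(\pi \mid y) = \prod_i y^i_{\pi^i}$$

With these definitions, we can now define the probability of a given phrase $p$ under the distribution $y = f(x)$ as

$$\Pr(p \mid y) = \sum_{\pi \in \Pi(p, y)} \Pr(\pi \mid y) = \sum_{\pi \in \Pi(p, y)} \prod_i y^i_{\pi^i}$$

where $\Pi(p, y)$ is the set of alignments $\pi$ that reduce to $p$.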
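The two probabilities above can be checked by brute force on a toy example. The sketch below is illustrative only: the three-symbol alphabet, the `-` blank symbol, the helper names, and the per-frame distributions in `y` are all made up for this example, and it assumes the usual CTC reduction (merge repeated symbols, then drop blanks). It enumerates every alignment, which is exponential in the number of frames; practical CTC implementations use dynamic programming instead.

```python
from itertools import product
from math import prod

BLANK = "-"  # hypothetical symbol standing in for the CTC blank token


def reduce_alignment(alignment):
    """Collapse runs of repeated symbols, then drop blanks."""
    collapsed = []
    prev = None
    for tok in alignment:
        if tok != prev:
            collapsed.append(tok)
        prev = tok
    return "".join(t for t in collapsed if t != BLANK)


def alignment_prob(alignment, y, alphabet):
    """Pr(pi | y): product over frames i of y[i][pi_i]."""
    return prod(y[i][alphabet.index(tok)] for i, tok in enumerate(alignment))


def phrase_prob(phrase, y, alphabet):
    """Pr(p | y): sum of Pr(pi | y) over every alignment that reduces to p."""
    return sum(
        alignment_prob(pi, y, alphabet)
        for pi in product(alphabet, repeat=len(y))
        if reduce_alignment(pi) == phrase
    )


# Toy data: 3 frames, each a distribution over ["a", "b", blank].
alphabet = ["a", "b", BLANK]
y = [
    [0.6, 0.3, 0.1],
    [0.2, 0.5, 0.3],
    [0.1, 0.7, 0.2],
]

p_ab = phrase_prob("ab", y, alphabet)
print(round(p_ab, 3))  # 0.494
```

Five alignments reduce to "ab" here (`aab`, `abb`, `a-b`, `ab-`, `-ab`), and their probabilities add up to the printed value.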

C. Improved Loss Function

Carlini & Wagner [8] demonstrate that the choice of loss function impacts the final distortion of generated adversarial examples by a factor of 3 or more. We now show the same holds in the audio domain. While CTC loss is highly useful for training the neural network, we show that a carefully designed loss function allows generating better, lower-distortion adversarial examples.

$$\ell(f(x)^i, t) = \max\left(f(x)^i_t - \max_{t' \neq t} f(x)^i_{t'},\ 0\right)$$

We can make one further improvement on this loss function. The constant $c$ used in the minimization formulation determines the relative importance of being close to the original symbol versus being adversarial. A larger value of $c$ allows the optimizer to place more emphasis on reducing $\ell(\cdot)$. In audio, consistent with prior work [9], we observe that …

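$$\text{minimize}\quad \|\delta\|_2^2 + \sum_i c_i \cdot \ell_i(x + \delta, \pi_i) \quad \text{such that}\quad \mathrm{dB}_x(\delta) < \tau$$

where $\ell_i(x, \pi_i) = \max\left(f(x)^i_{\pi_i} - \max_{t' \neq \pi_i} f(x)^i_{t'},\ 0\right)$.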
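The distortion constraint in this formulation bounds how loud the perturbation is relative to the original audio. This section does not spell out the measure, so the sketch below assumes a peak-based definition, dB(x) = max_i 20·log10(|x_i|) and dB_x(δ) = dB(δ) − dB(x). The function names, sample values, and threshold are hypothetical.

```python
import math


def db(samples):
    """Peak loudness in decibels: max over samples of 20 * log10(|x_i|).

    Assumes at least one nonzero sample; an all-zero signal has no
    finite decibel level and would raise ValueError here.
    """
    peak = max(abs(s) for s in samples)
    return 20.0 * math.log10(peak)


def db_relative(delta, x):
    """dB_x(delta): loudness of the perturbation relative to the source."""
    return db(delta) - db(x)


def within_budget(delta, x, tau):
    """True when the perturbation satisfies dB_x(delta) < tau."""
    return db_relative(delta, x) < tau


# Made-up waveform and perturbation (raw sample amplitudes).
x = [1000.0, -8000.0, 4000.0]
delta = [8.0, -3.0, 5.0]

rel = db_relative(delta, x)
print(round(rel, 6))                  # -60.0
print(within_budget(delta, x, -50))   # True
```

Under this definition the value is negative whenever the perturbation's peak is below the source's peak, and a more negative threshold means a quieter perturbation is required.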

Adversarial Machine Learning explained! With examples.

Adversarial Attacks in Machine Learning Demystified

Audio Adversarial Examples: Targeted Attacks on Speech-to-Text

The current state-of-the-art targeted attack on automatic speech recognition is Houdini [10], which can only construct audio adversarial examples targeting …

Audio Adversarial Examples: Targeted Attacks on Speech-to-Text

30 March 2018. Audio Adversarial Examples: Targeted Attacks on Speech-to-Text. Nicholas Carlini, David Wagner. University of California, Berkeley.

Generating Robust Audio Adversarial Examples with Temporal

… and machine learning, automatic speech recognition (ASR) systems have been integrated into … adversarial examples to launch targeted attacks towards ASR systems.

WaveGuard: Understanding and Mitigating Audio Adversarial

Prior works [12, 26] demonstrate successful attack algorithms targeting traditional speech recognition models based on HMMs and GMMs [27–32]. For example …

CHARACTERIZING AUDIO ADVERSARIAL EXAMPLES USING

… audio adversarial examples and is resistant to adaptive attacks considered in … et al. 2018) is a speech-to-text targeted attack which can attack audio …

TOWARDS MITIGATING AUDIO ADVERSARIAL - OpenReview

Audio adversarial examples targeting automatic speech recognition systems have recently … to-text attack, and we consider the targeted attack for both cases …

Adversarial Examples Against Automatic Speech Recognition

… deep learning based accurate automatic speech recognition to enable natural inter… Our algorithm performs targeted attacks with 87 success … Adversarial Attacks on Audio: Adversarial examples refer to inputs that are maliciously crafted …

Imperceptible, Robust, and Targeted Adversarial Examples for

Adversarial Examples for Automatic Speech Recognition. Yao Qin, Nicholas Carlini … …tively imperceptible audio adversarial examples (verified through a human … line of work succeeded at generating targeted attacks in practice, even when …

Targeted Speech Adversarial Example Generation - IEEE Xplore

… examples. Specifically, we integrate the target speech recognition network with … [8] N. Carlini and D. Wagner, “Audio adversarial examples: Targeted attacks …
