1/n logn loglogn

An O(log n / log log n)-approximation Algorithm for the

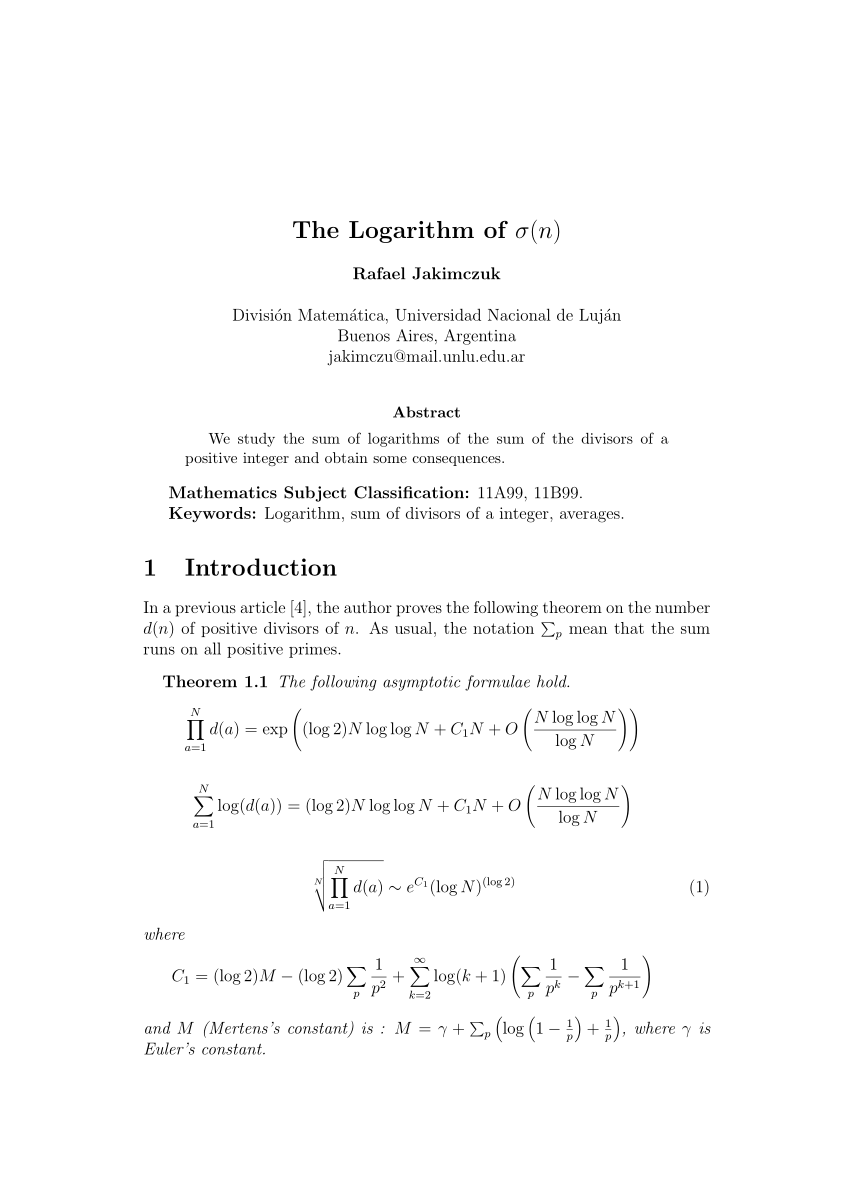

We present a randomized O(log n / log log n)-approximation algorithm for the asymmetric traveling salesman problem (ATSP). This provides the first asymptotic improvement over the long-standing Θ(log n) approximation bound stemming from the work of Frieze et al. [17].

1 Balls and Bins

Feb 2 2011 · In fact, Θ(log n / log log n) is indeed the right answer for the max-load with n balls and n bins. Theorem 2: The max-loaded bin has Ω(log n / log log n) balls with probability at least 1 − 1/n^(1/3). Here is one way to show this via the second moment method. To begin, let us lower bound the probability that bin i has at least k balls: $\binom{n}{k} \left(\frac{1}{n}\right)^{k} \left(1 - \frac{1}{n}\right)^{n-k} \ge \left(\frac{n}{k}\right)^{k} \cdots$
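A quick simulation can make the max-load claim concrete. This is only an illustrative sketch and not part of the lecture notes: the names `max_load`, `average_max_load`, and `growth_reference`, as well as the trial count and seed, are assumptions chosen for the example.

```python
import math
import random


def max_load(n, rng):
    """Throw n balls into n bins uniformly at random; return the fullest bin's count."""
    bins = [0] * n
    for _ in range(n):
        bins[rng.randrange(n)] += 1
    return max(bins)


def average_max_load(n, trials=20, seed=0):
    """Average the max load over several independent trials (seeded for repeatability)."""
    rng = random.Random(seed)
    return sum(max_load(n, rng) for _ in range(trials)) / trials


def growth_reference(n):
    """The reference growth rate ln n / ln ln n (constant factors ignored)."""
    return math.log(n) / math.log(math.log(n))
```

Calling `average_max_load(n) / growth_reference(n)` for increasing n (say 10**3, 10**4, 10**5) should give ratios that stay within a small constant range, which is what a Θ(log n / log log n) max load predicts.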

What is the inverse function of log*n?

The inverse function of log* n is a tower of powers of 2 (2^2^...^2), which increases extremely fast; hence log* n grows very slowly. For example, log*(2^65536) = 5. In comparison, log log n grows faster than log* n: with base-2 logarithms, log(log(2^65536)) = log(65536) = 16.
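The relationship between the tower function and log* can be checked directly. The sketch below assumes base-2 logarithms and the convention that log* counts applications of log until the value is at most 1; the names `tower` and `log_star` are illustrative.

```python
import math


def tower(height):
    """Return a power tower of 2s of the given height: tower(0) = 1, tower(k) = 2 ** tower(k - 1)."""
    value = 1
    for _ in range(height):
        value = 2 ** value
    return value


def log_star(n):
    """Count how many times log2 must be applied to n before the value is at most 1."""
    count = 0
    while n > 1:
        n = math.log2(n)  # math.log2 accepts arbitrarily large Python ints
        count += 1
    return count
```

Here `tower(5)` equals 2^65536, and `log_star` undoes `tower` for small heights, which is the sense in which the two functions are inverses.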

How do you prove log* n < log log n?

Proof: We claim that log* n < log log n for sufficiently large n. By definition, log* n = log*(log n) + 1. One can easily prove that log*(n) + 1 < log n for sufficiently large n. Replacing n by log n gives log*(log n) + 1 < log(log n), and hence log* n < log(log n).

What is O(nlogn) space?

Space complexities of O(nlogn) are extremely rare, to the point that you don’t need to keep an eye out for them. Any algorithm that uses O(nlogn) space will likely be awkward enough that it will be apparent. One example is a function that creates an array of binary trees made from the input array.

Is O(log* n) recursive?

O(log* n) is faster than O(log log n) after some threshold. log* n says how many times you need to apply log to n before the result drops to at most 1. So it is 1 plus log* of what is left after taking one logarithm: log* n = 1 + log*(log n) for n > 1, and log* n = 0 otherwise. The calculation of this value is therefore recursive.
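The recursive definition translates almost word for word into code. This is a minimal sketch assuming base-2 logarithms and a base case of n ≤ 1; the name `log_star_recursive` is illustrative.

```python
import math


def log_star_recursive(n):
    """Iterated logarithm via its recurrence: log*(n) = 1 + log*(log2 n) for n > 1, else 0."""
    if n <= 1:
        return 0
    return 1 + log_star_recursive(math.log2(n))
```

The recursion depth equals the result itself, so it stays tiny even for astronomically large inputs.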

Types of Big O Notations

There are seven common types of big O notations. These include:

1. O(1): Constant complexity.
2. O(logn): Logarithmic complexity.
3. O(n): Linear complexity.
4. O(nlogn): Loglinear complexity.
5. O(n^x): Polynomial complexity.
6. O(X^n): Exponential time.
7. O(n!): Factorial complexity.

Let’s examine each one.

O(1): Constant Complexity

O(1) is known as constant complexity. This implies that the amount of time or memory does not scale with n. For time complexity, this means that n is not iterated on or recursed. Generally, a value will be selected and returned, or a value will be operated on and returned. For space, no data structures can be created that are multiples of the size o

O(Logn): Logarithmic Complexity

O(logn) is known as logarithmic complexity. The logarithm in O(logn) is usually taken to have a base of two, although changing the base only changes the constant factor. The best way to wrap your head around this is to remember the concept of halving: every time n doubles, the time or space increases by only a constant amount. There are several common algorithms that are O(logn) a vast majority of the time, including: binary searc
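Binary search is the standard illustration of halving. The sketch below is an assumption-labeled example rather than code from the article: `binary_search_steps` is a hypothetical helper that also returns how many probes it made, so the logarithmic growth can be observed.

```python
def binary_search_steps(sorted_items, target):
    """Return (index, steps): the index of target (or -1) and the number of probes made."""
    lo, hi = 0, len(sorted_items) - 1
    steps = 0
    while lo <= hi:
        steps += 1
        mid = (lo + hi) // 2
        if sorted_items[mid] == target:
            return mid, steps
        if sorted_items[mid] < target:
            lo = mid + 1  # discard the lower half
        else:
            hi = mid - 1  # discard the upper half
    return -1, steps
```

Doubling the input from 1,024 to 2,048 elements adds at most one extra probe, because each probe discards half of the remaining range.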

O(N): Linear Complexity

O(n), or linear complexity, is perhaps the most straightforward complexity to understand. O(n) means that the time/space scales 1:1 with changes to the size of n. If a new operation or iteration is needed every time n increases by one, then the algorithm will run in O(n) time. When using data structures, if one more element is needed every time n i

O(Nlogn): Loglinear Complexity

O(nlogn) is known as loglinear complexity. O(nlogn) implies that logn operations will occur n times. O(nlogn) time is common in recursive sorting algorithms, binary tree sorting algorithms and most other types of sorts. Space complexities of O(nlogn) are extremely rare to the point that you don’t need to keep an eye out for it. Any algorithm that us
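Merge sort is a typical recursive sort with O(nlogn) running time. The following sketch is illustrative only; `merge_sort` is a hypothetical helper that returns the number of comparisons alongside the sorted list so the bound can be checked empirically.

```python
def merge_sort(items):
    """Return (sorted_list, comparisons) using top-down merge sort."""
    if len(items) <= 1:
        return list(items), 0
    mid = len(items) // 2
    left, left_comparisons = merge_sort(items[:mid])
    right, right_comparisons = merge_sort(items[mid:])
    merged = []
    comparisons = left_comparisons + right_comparisons
    i = j = 0
    # Merge the two sorted halves, counting one comparison per loop iteration.
    while i < len(left) and j < len(right):
        comparisons += 1
        if left[i] <= right[j]:
            merged.append(left[i])
            i += 1
        else:
            merged.append(right[j])
            j += 1
    merged.extend(left[i:])
    merged.extend(right[j:])
    return merged, comparisons
```

There are about log2(n) levels of recursion and each level performs fewer than n comparisons in total, which is where the n·logn product comes from.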

O(Nˣ): Polynomial Complexity

O(nˣ), or polynomial complexity, covers a larger range of complexities depending on the value of x. X represents the number of times all of n will be processed for every n. Polynomial complexity is where we enter the danger zone. It’s extremely inefficient, and while it is the only way to create some algorithms, polynomial complexity should be regar

O(Xⁿ): Exponential Time

O(Xⁿ), known as exponential time, means that time or space will be raised to the power of n. Exponential time is extremely inefficient and should be avoided unless absolutely necessary. Often O(Xⁿ) results from having a recursive algorithm that makes X recursive calls, each on an input of size n-1. Towers of Hanoi is a famous problem that has a recursive solution r
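Towers of Hanoi shows the pattern of two recursive calls on n-1. This is a minimal sketch; the function name `hanoi_moves` and the peg labels "A", "B", "C" are assumptions made for the example.

```python
def hanoi_moves(n, source="A", target="C", spare="B"):
    """Return the list of (from_peg, to_peg) moves that transfers n disks from source to target."""
    if n == 0:
        return []
    return (
        hanoi_moves(n - 1, source, spare, target)    # move n-1 disks out of the way
        + [(source, target)]                         # move the largest disk
        + hanoi_moves(n - 1, spare, target, source)  # move the n-1 disks back on top
    )
```

The move count satisfies M(n) = 2·M(n-1) + 1, which solves to 2ⁿ - 1, so each extra disk doubles the work.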

O(N!): Factorial Complexity

O(n!), or factorial complexity, is the “worst” standard complexity that exists. To illustrate how fast factorial solutions will blow up in size, a standard deck of cards has 52 cards, with 52! possible orderings of cards after shuffling. This number is larger than the number of atoms on Earth. If someone were to shuffle a deck of cards every second
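The size of 52! is easy to compute exactly with arbitrary-precision integers. The sketch below is illustrative; `count_orderings` and `deck_orderings` are hypothetical names, and the brute-force counter is only practical for very small inputs.

```python
import math
from itertools import permutations


def count_orderings(items):
    """Count orderings by brute-force enumeration; feasible only for a handful of items."""
    return sum(1 for _ in permutations(items))


# Exact number of orderings of a 52-card deck, computed without enumeration.
deck_orderings = math.factorial(52)
```

`count_orderings` agrees with `math.factorial` on small inputs, while `deck_orderings` is a 68-digit number, far beyond anything that could be enumerated.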

Why Big O Notations Are Important to Know

The first four complexities discussed here, and to some extent the fifth, O(1), O(logn), O(n), O(nlogn) and O(nˣ), can be used to describe the vast majority of algorithms you’ll encounter. The final two will be exceedingly rare but are important to understand to grasp the whole of Big O notation and what it’s used to represent. There are also other

Convergence of the series (1/(log n)^(log n ))

Series 1/log(n) or 1/ln(n) converges or diverges? (W/Text Explanation) Maths Mad Teacher

Test the convergence of series 1/n log n


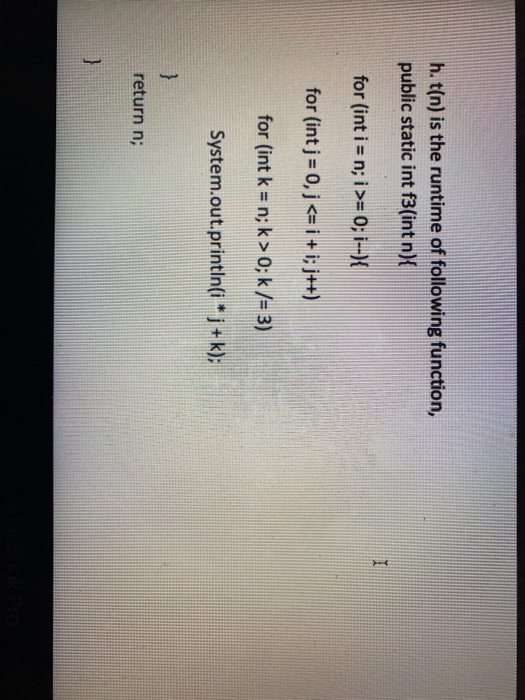

$T(n) = \frac{1}{n} \sum T(i) + cn$, with $T(0) = 0$. $T(n) = c n^k$ if $1 \le n < b$; $T(n) = a\,T(n - b) + c n^k$

complexity of the following functions: n log n, n^2 log n

Integer multiplication in time O(n log n) - Archive ouverte HAL

Nov 28, 2020 · We present an algorithm that computes the product of two n-bit integers in O(n log n) bit operations, thus confirming a conjecture of Schönhage.

Chapter 1: THE COMPLEXITY OF ALGORITHMS

T(n) = 2c1n + c2 + c3 log n. To compute the constants we need the initial conditions. Since we have only one and there are three unknowns, we use the

An O(log n/log log n)-approximation Algorithm for the Asymmetric

Traveling salesman problem is one of the most celebrated and intensively studied problems in combinatorial optimization [30, 2

An O(logn/log logn)-approximation Algorithm for the Asymmetric

O(log n / log log n) of the optimum with high probability. 1 Introduction. In the Asymmetric Traveling Salesman problem (ATSP) we are given a set V of n

CS1102S Data Structures and Algorithms - Assignment 01

1. Exercise 2.1 on page 50: Order the following functions by growth rate: N, √N

Solutions to Tutorial 5 (Week 6) Material covered Outcomes

$\sum_{n=3}^{\infty} \frac{1}{n \log n\,(\log\log n)^p}$. Solution: We again use the Cauchy condensation test and observe that it is sufficient to prove the convergence properties of

PROBLEMS IN REAL-VARIABLE ANALYSIS. SHEET 3. 1

$\sum_{n=1}^{\infty} \frac{1}{n \log n\,(\log\log n)^2}$. 15. Prove that there exists a bijective map $f : \mathbb{N} \to \mathbb{Z} \setminus \{0\}$ such that

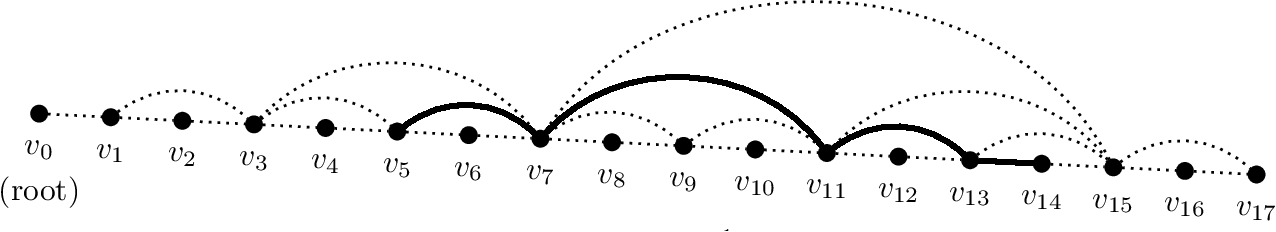

Optimizing Integer Sorting in O(n log log n) Expected Time in Linear

Andersson has shown that if we pass down integers in an exponential tree one by one, then the insertion takes O( log n) for each integer, i.e. the total complexity will

The Weighted Euclidean 1-Center Problem

We present an $O(n (\log n)^3 (\log\log n)^2)$ algorithm for the problem of finding a point (x, y) in the plane that minimizes the maximal weighted distance to a point in a

Week 6 - School of Mathematics and Statistics, University of Sydney

$\sum_{n=3}^{\infty} \frac{1}{n \log n\,(\log\log n)^p}$; Solution: We again use the Cauchy condensation test and observe that it is sufficient to prove the convergence properties of
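One way the condensation argument can be carried out is sketched below. This is a reconstruction under the usual statement of the Cauchy condensation test (for a positive decreasing sequence, $\sum a_n$ converges if and only if $\sum 2^k a_{2^k}$ converges), not the tutorial's own solution; the symbol $b_k$ is introduced here for illustration.

```latex
\begin{align*}
a_n &= \frac{1}{n \log n\,(\log\log n)^p}
  &&\Longrightarrow&
2^k a_{2^k} &= \frac{1}{k \log 2\,\bigl(\log(k \log 2)\bigr)^p}. \\
\intertext{Since $\log(k \log 2) = \log k + \log\log 2 \sim \log k$ as $k \to \infty$,
limit comparison reduces the question to $b_k = \dfrac{1}{k (\log k)^p}$. Condensing once more:}
b_k &= \frac{1}{k (\log k)^p}
  &&\Longrightarrow&
2^j b_{2^j} &= \frac{1}{(j \log 2)^p} = \frac{1}{(\log 2)^p} \cdot \frac{1}{j^p}.
\end{align*}
```

The last series is a constant multiple of the $p$-series, so the original series converges exactly when $p > 1$.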


HOMEWORK 6 - UCLA Math

so $\sum_{n \ge 4} \frac{1}{n \log n \log\log n}$ diverges by the integral test. Alternatively, by the Cauchy condensation test, $\sum_{n \ge 4} \frac{1}{n \log n \log\log n}$ converges if and only
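The integral-test computation behind the divergence claim can be written out as follows. This is a sketch of the standard substitution, not the homework's own text; the upper limit $X$ and the variable $u$ are introduced here for illustration.

```latex
\begin{align*}
\int_{4}^{X} \frac{dx}{x \log x \,\log\log x}
  &= \int_{\log\log 4}^{\log\log X} \frac{du}{u}
  && \Bigl(u = \log\log x,\ du = \frac{dx}{x \log x}\Bigr) \\
  &= \log\log\log X - \log\log\log 4
  \;\longrightarrow\; \infty \quad \text{as } X \to \infty.
\end{align*}
```

The integrand is positive and decreasing for $x \ge 4$, so the integral test applies and the series diverges, although the growth $\log\log\log X$ is extraordinarily slow.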


Convergence of series with positive terms

$\sum_{n=2}^{N} \frac{1}{n \log n} = \frac{1}{2 \log 2} + \frac{1}{3 \log 3} + \cdots + \frac{1}{N \log N}$. Use the result of the previous question to obtain a lower bound for this sum: $\int_{2}^{N}$

Analysis 1

says, for n ≥ 6: $p_n < n \log(n) + n \log(\log(n))$. Use this to prove the divergence of the prime harmonic series: $\sum_{n=1}^{\infty} \frac{1}{p_n}$. Note: Isn't this a little bit impressive?
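A possible route from the bound on $p_n$ to divergence is sketched below. It is one way to do the exercise under the stated inequality, not necessarily the intended solution, and it takes the divergence of $\sum 1/(n \log n)$ as known (for example from the integral test).

```latex
\begin{align*}
p_n &< n \log n + n \log\log n < 2\, n \log n
  && (n \ge 6,\ \text{since } \log\log n < \log n), \\
\frac{1}{p_n} &> \frac{1}{2\, n \log n}
  && (n \ge 6), \\
\sum_{n=6}^{N} \frac{1}{p_n} &> \frac{1}{2} \sum_{n=6}^{N} \frac{1}{n \log n}
  \;\longrightarrow\; \infty \quad \text{as } N \to \infty.
\end{align*}
```

By comparison, the prime harmonic series diverges.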


An O(logn/log logn)-approximation Algorithm for - MIT Mathematics

O(log n / log log n) of the optimum with high probability. 1 Introduction. In the Asymmetric Traveling Salesman problem (ATSP) we are given a set V of n points

An O(log n/log log n)-approximation Algorithm for the Asymmetric

Traveling salesman problem is one of the most celebrated and intensively studied problems in combinatorial optimization [30, 2, 13]. It has found applications in

Also called Cauchy's condensation test - mathchalmersse

May 3, 2018 · to integral test, but in many cases the condensation test requires less work than the integral n log n diverges (c) With $a_n = 1/n^2$, we have $\sum_{n=1}^{\infty} 2^n a_{2^n} = \sum_{n=1}^{\infty} 2^n$ 1 n=16 1 n log 2(log n log 2)(log log n log 2)p

MATH 370: Homework 5

where M > 0 exists because the sequence $\frac{\log n}{n}$ has a limit (= 0); hence, the original series converges. (e) $\sum_{n=4}^{\infty} \frac{1}{n (\log n)(\log\log n)}$ diverges b/c $\int^{\infty}$

![Fully Dynamic Connectivity in O(log n (log log n)^2) Amortized](https://d3i71xaburhd42.cloudfront.net/157e6626e2c89cd1a004b2dec6def86068833b40/2-Figure1-1.png)

![Optimizing Integer Sorting in O(n log log n) Expected Time](https://i1.rgstatic.net/publication/326412442_An_Olog15n_loglog_n_Approximation_Algorithm_for_Mean_Isoperimetry_and_Robust_k-means/links/5cc41ec2299bf1209782c105/largepreview.png)

![Optimizing Integer Sorting in O(n log log n) Expected Time](https://data01.123doks.com/thumb/qm/3o/kg8y/Pki4ZiPT5VIqTD1so/cover.webp)

![Fully Dynamic Connectivity in O(log n (log log n)^2) Amortized](https://0.academia-photos.com/attachment_thumbnails/57794826/mini_magick20190110-17889-rpu1ul.png?1547136721)

![Optimizing Integer Sorting in O(n log log n) Expected Time](https://i1.rgstatic.net/publication/269027863_An_observation_on_the_Cipolla's_expansion/links/5a2b530e0f7e9b63e538d2fe/largepreview.png)