cityscapes dataset pytorch

Which built-in PyTorch image datasets should I know about before building a custom dataset?

Before building a custom dataset, it is useful to know which image datasets PyTorch already ships. torchvision provides many built-in datasets, including LSUN, ImageNet, CIFAR, STL10, SVHN, PhotoTour, SBU, Flickr, VOC, Cityscapes, SBD, USPS, Kinetics-400, HMDB51, UCF101, and CelebA.

Who can use the Cityscapes dataset?

The Cityscapes dataset is made freely available to academic and non-academic entities for non-commercial purposes such as academic research, teaching, scientific publications, or personal experimentation. Permission to use the data is granted provided that you agree to the dataset's license terms.

How does PyTorch provide the IterableDataset class?

For data that is streamed rather than indexed, PyTorch provides the IterableDataset class as an alternative to the map-style Dataset class. A Dataset implements a `__getitem__` method that returns the sample at a specified index, whereas an IterableDataset implements an `__iter__` method that returns an iterator for looping over the dataset.
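As a small illustration of the iterator-based interface, the sketch below defines a toy stream and wraps it in a `DataLoader`. The class name `SquaresStream` and its contents are made up for this example; a real dataset would yield image and label tensors read from disk or a network source.

```python
import torch
from torch.utils.data import DataLoader, IterableDataset


class SquaresStream(IterableDataset):
    """Toy iterable-style dataset that yields the squares 0, 1, 4, ..."""

    def __init__(self, n):
        super().__init__()
        self.n = n

    def __iter__(self):
        # No __getitem__ or __len__: samples are produced one after another.
        for i in range(self.n):
            yield torch.tensor(i * i)


loader = DataLoader(SquaresStream(5), batch_size=2)
batches = [batch.tolist() for batch in loader]
print(batches)
```

Because there is no index to shuffle, `DataLoader` options such as `shuffle=True` are not available for iterable-style datasets; any shuffling has to happen inside `__iter__`.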

Overview

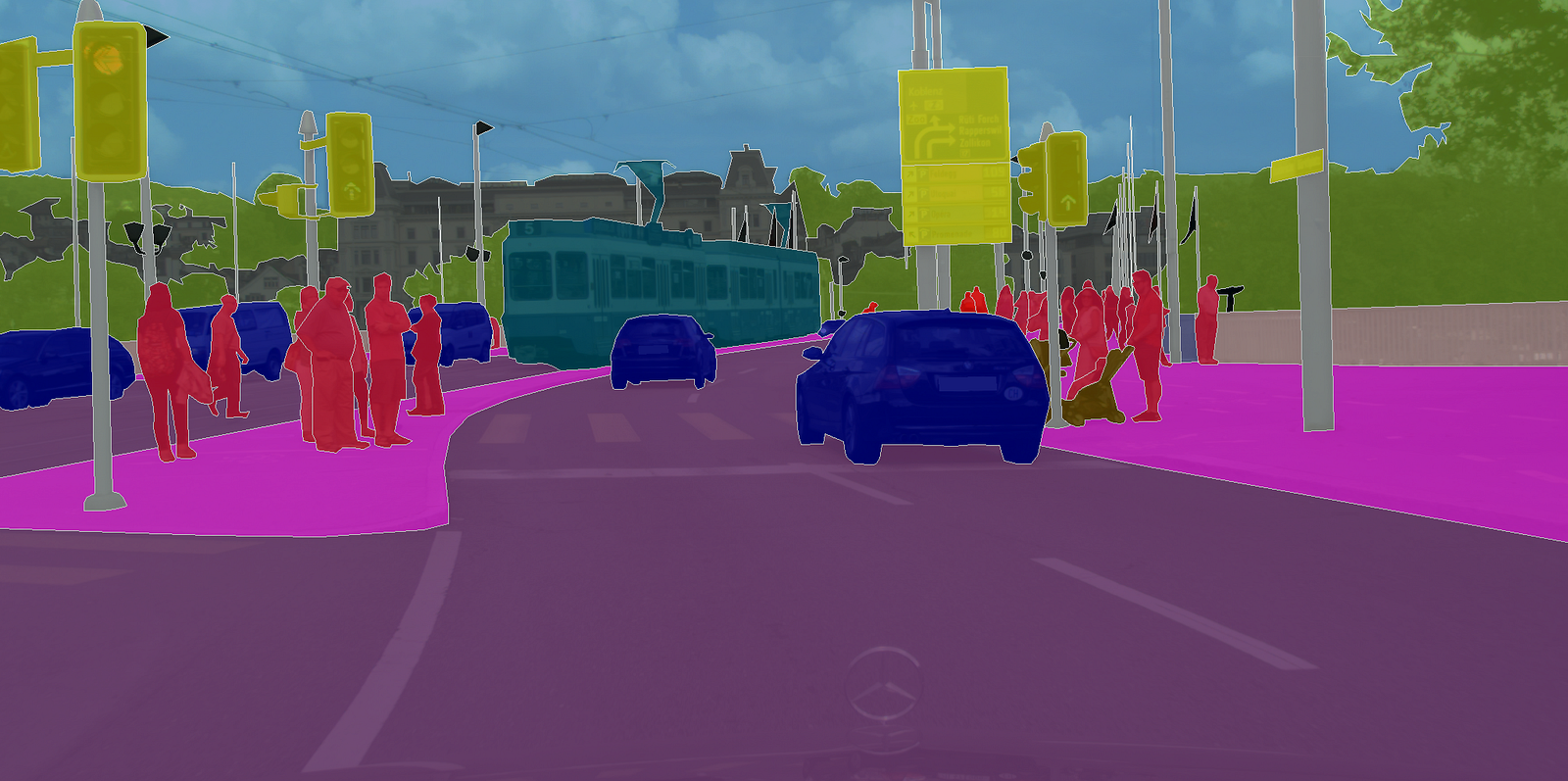

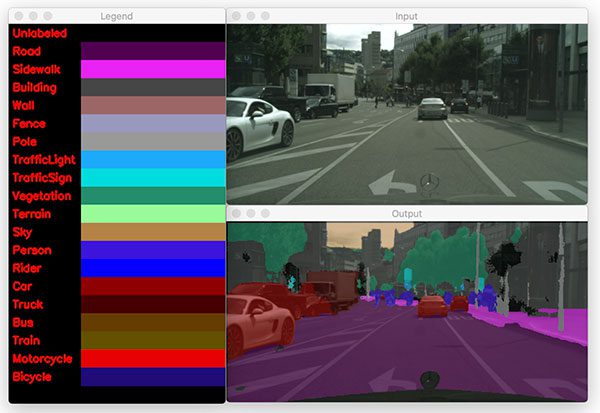

PyTorch implementation of DeepLabV3, trained on the Cityscapes dataset.

- YouTube video of results: see the project page on github.com.

Index

- Using a VM on Paperspace
- Pretrained model
- Training a model on Cityscapes
- Evaluation
- Visualization
- Documentation of remaining code

Paperspace:

To train models and to run pretrained models (with small batch sizes), you can use an Ubuntu 16.04 P4000 VM with 250 GB SSD on Paperspace. Below I have listed what I needed to do in order to get started, and some things I found useful.

- Install docker-ce:
- $ curl -fsSL https://download.docker.com/linux/ubuntu/gpg | sudo apt-key add -
- $ sudo add-apt-repository "deb [arch=amd64] https://download.docker.com/linux/ubuntu $(lsb_release -cs) stable"
- $ sudo apt-get update
- $ sudo apt-get install -y docker-ce

Pretrained model:

Train model on Cityscapes:

- SSH into the Paperspace server.
- $ sudo sh start_docker_image.sh
- $ cd --
- $ python deeplabv3/utils/preprocess_data.py (ONLY NEED TO DO THIS ONCE)
- $ python deeplabv3/train.py

Evaluation

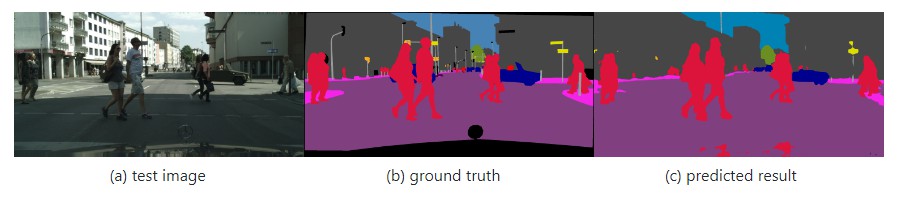

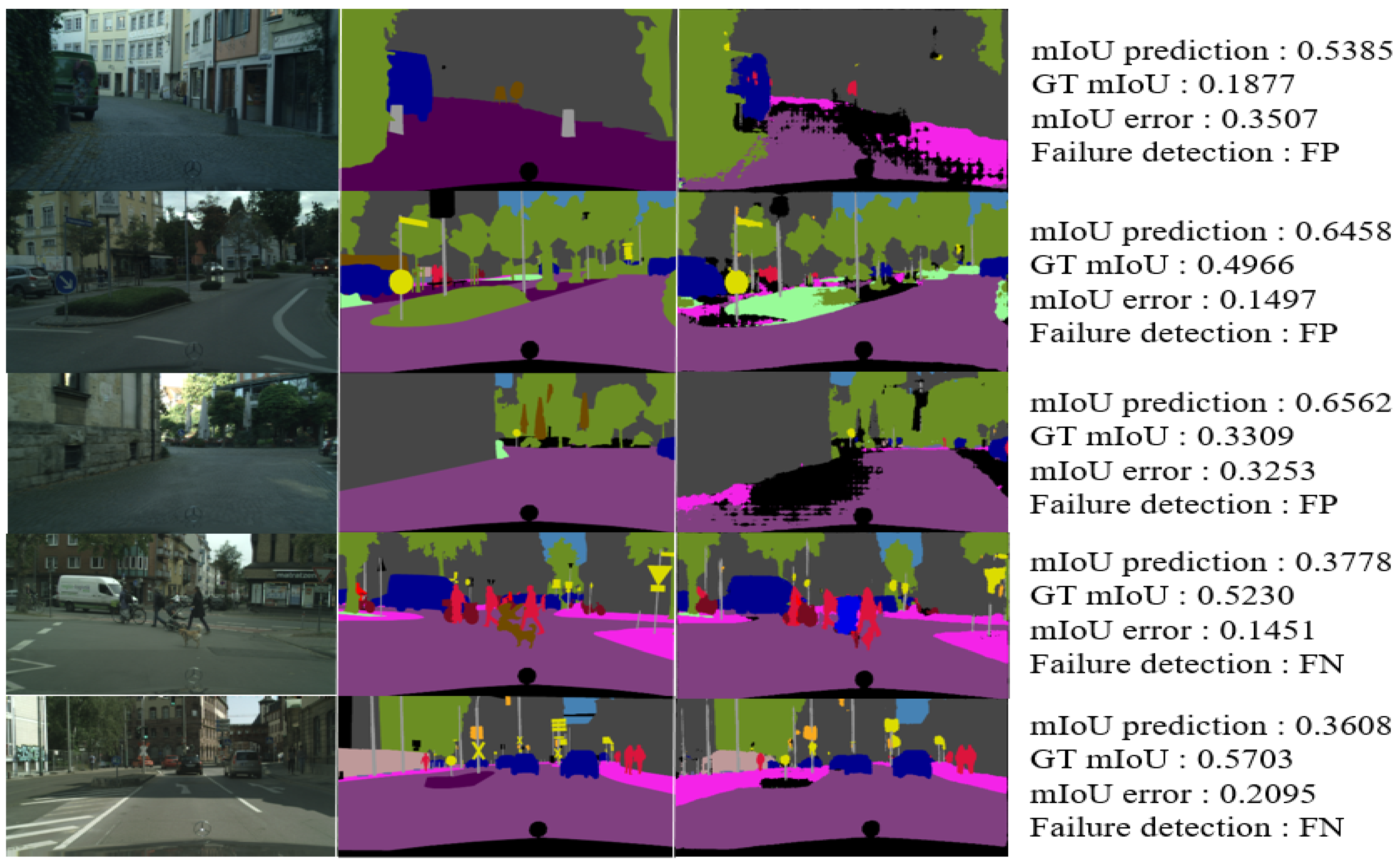

evaluation/eval_on_val.py:

- SSH into the Paperspace server.
- $ sudo sh start_docker_image.sh
- $ cd --
- $ python deeplabv3/utils/preprocess_data.py (ONLY NEED TO DO THIS ONCE)
- $ python deeplabv3/evaluation/eval_on_val.py
- This will run the pretrained model (set on line 31 in eval_on_val.py) on all images in Cityscapes val, compute and print the loss, and save the predicted segmentation images in deeplabv3/training_logs/model_eval_val.

evaluation/eval_on_val_for_metrics.py:

- SSH into the Paperspace server.
- $ sudo sh start_docker_image.sh
- $ cd --
- $ python deeplabv3/utils/preprocess_data.py (ONLY NEED TO DO THIS ONCE)
- $ python deeplabv3/evaluation/eval_on_val_for_metrics.py
- $ cd deeplabv3/cityscapesScripts
- $ pip install . (ONLY NEED TO DO THIS ONCE)
- $ python setup.py build_ext --inplace (ONLY NEED TO DO THIS ONCE) (this enables cython, which makes the cityscapes evaluation script run A LOT faster)
- $ export CITYSCAPES_RESULTS="/root/deeplabv3/training_logs/model_eval_val_for_metrics"
- $ export CITYSCAPES_DATASET="/root/deeplabv3/data/cityscapes"
- $ python cityscapesscripts/evaluation/evalPixelLevelSemanticLabeling.py
- This will run the pretrained model (set on line 55 in eval_on_val_for_metrics.py) on all images in Cityscapes val, upsample the predicted segmentation images to the original Cityscapes image size (1024, 2048), and compute and print performance metrics.
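To give a feel for what a pixel-level metric such as mean intersection-over-union (mIoU) measures, here is a simplified NumPy sketch. It is not the official cityscapesScripts evaluation, which accumulates statistics over the whole validation set and reports further metrics. The function names are hypothetical, and using 255 as the ignore label is an assumption that matches the usual Cityscapes train-ID convention.

```python
import numpy as np


def confusion_matrix(pred, target, num_classes, ignore_index=255):
    """Count pixels per (true class, predicted class) pair, skipping ignored pixels."""
    mask = target != ignore_index
    flat = num_classes * target[mask].astype(np.int64) + pred[mask].astype(np.int64)
    counts = np.bincount(flat, minlength=num_classes ** 2)
    return counts.reshape(num_classes, num_classes)


def mean_iou(conf):
    """Average IoU over the classes that occur in the prediction or the ground truth."""
    true_pos = np.diag(conf)
    union = conf.sum(axis=0) + conf.sum(axis=1) - true_pos
    valid = union > 0
    return float((true_pos[valid] / union[valid]).mean())


# Tiny 2x2 example with two classes and one ignored pixel.
target = np.array([[0, 0], [1, 255]])
pred = np.array([[0, 1], [1, 1]])
conf = confusion_matrix(pred, target, num_classes=2)
print(conf, mean_iou(conf))
```

In practice the confusion matrices of all validation images would be summed before calling `mean_iou`, so that the score reflects the whole split rather than a single image.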

Visualization

visualization/run_on_seq.py:

- SSH into the Paperspace server.
- $ sudo sh start_docker_image.sh
- $ cd --
- $ python deeplabv3/utils/preprocess_data.py (ONLY NEED TO DO THIS ONCE)
- $ python deeplabv3/visualization/run_on_seq.py
- This will run the pretrained model (set on line 33 in run_on_seq.py) on all images in the Cityscapes demo sequences (stuttgart_00, stuttgart_01 and stuttgart_02) and create a visualization video for each sequence, which is saved to deeplabv3/training_logs/model_eval_seq. See the YouTube video from the top of the page.

visualization/run_on_thn_seq.py:

- SSH into the Paperspace server.
- $ sudo sh start_docker_image.sh
- $ cd --
- $ python deeplabv3/utils/preprocess_data.py (ONLY NEED TO DO THIS ONCE)
- $ python deeplabv3/visualization/run_on_thn_seq.py
- This will run the pretrained model (set on line 31 in run_on_thn_seq.py) on all images in the Thn sequence (real-life sequence collected with a standard dash cam) and create a visualization video, which is saved to deeplabv3/training_logs/model_eval_seq_thn. See the YouTube video from the top of the page.
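The core of such a visualization is turning a map of predicted class IDs into colors and blending it with the input frame. The sketch below shows one way to do that with NumPy; it is not taken from the repository's scripts. The palette lists the 19 Cityscapes train-ID colors as I recall them from cityscapesScripts (worth verifying against its `labels.py`), and `colorize` and `overlay` are hypothetical helper names.

```python
import numpy as np

# Assumed Cityscapes train-ID palette (RGB), index = train ID 0..18.
PALETTE = np.array([
    (128, 64, 128), (244, 35, 232), (70, 70, 70), (102, 102, 156),
    (190, 153, 153), (153, 153, 153), (250, 170, 30), (220, 220, 0),
    (107, 142, 35), (152, 251, 152), (70, 130, 180), (220, 20, 60),
    (255, 0, 0), (0, 0, 142), (0, 0, 70), (0, 60, 100),
    (0, 80, 100), (0, 0, 230), (119, 11, 32),
], dtype=np.uint8)


def colorize(label_map):
    """Map an (H, W) array of train IDs to an (H, W, 3) RGB image."""
    return PALETTE[label_map]


def overlay(image, label_map, alpha=0.5):
    """Blend the colorized prediction onto an (H, W, 3) uint8 image."""
    color = colorize(label_map).astype(np.float32)
    blended = (1.0 - alpha) * image.astype(np.float32) + alpha * color
    return blended.astype(np.uint8)


labels = np.array([[0, 13]])                # one "road" pixel, one "car" pixel
frame = np.zeros((1, 2, 3), dtype=np.uint8)  # black dummy frame
print(colorize(labels), overlay(frame, labels).shape)
```

Writing the blended frames of a sequence to a video file (for example with OpenCV) would then give a result similar in spirit to the videos described above.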

Documentation of remaining code

- model/resnet.py: Definition of the custom ResNet model (output stride = 8 or 16), which is the backbone of DeepLabV3.
- model/aspp.py: Definition of the Atrous Spatial Pyramid Pooling (ASPP) module.
- model/deeplabv3.py: Definition of the complete DeepLabV3 model.
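For readers unfamiliar with ASPP, the following is a stripped-down sketch of the idea: several parallel convolutions with different dilation rates plus a global-pooling branch, concatenated and projected back to a fixed channel count. It is not the repository's model/aspp.py. The class name `ASPPSketch` is hypothetical, batch normalization and ReLU are omitted for brevity, and the rates (6, 12, 18) are the ones the DeepLabV3 paper uses at output stride 16.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F


class ASPPSketch(nn.Module):
    """Simplified Atrous Spatial Pyramid Pooling (no BatchNorm/ReLU)."""

    def __init__(self, in_ch, out_ch=256, rates=(6, 12, 18)):
        super().__init__()
        # One 1x1 branch plus one 3x3 atrous branch per dilation rate.
        # padding == dilation keeps the spatial size unchanged for 3x3 kernels.
        self.branches = nn.ModuleList(
            [nn.Conv2d(in_ch, out_ch, kernel_size=1)]
            + [nn.Conv2d(in_ch, out_ch, kernel_size=3, padding=r, dilation=r)
               for r in rates]
        )
        # Image-level branch: global average pooling followed by a 1x1 conv.
        self.image_pool = nn.Sequential(
            nn.AdaptiveAvgPool2d(1),
            nn.Conv2d(in_ch, out_ch, kernel_size=1),
        )
        self.project = nn.Conv2d(out_ch * (len(rates) + 2), out_ch, kernel_size=1)

    def forward(self, x):
        h, w = x.shape[-2:]
        feats = [branch(x) for branch in self.branches]
        pooled = F.interpolate(self.image_pool(x), size=(h, w),
                               mode="bilinear", align_corners=False)
        return self.project(torch.cat(feats + [pooled], dim=1))


out = ASPPSketch(in_ch=8, out_ch=4)(torch.randn(1, 8, 16, 16))
print(out.shape)
```

The different dilation rates let the module see context at several scales without reducing the resolution of the feature map.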

Cityscapes semantic segmentation with augmentation tutorial Pytorch (part1)

Cityscapes semantic segmentation with augmentation tutorial Pytorch (part2)

PyTorch Image Segmentation Tutorial with U-NET: everything from scratch baby

Street View Segmentation using FCN models - CS231n

The Cityscapes dataset [2] collected 20,000 images taken in many cities of Germany with a fixed camera ... Pytorch-FCN: https://github.com/wkentaro/pytorch-fcn

Active Boundary Loss for Semantic Segmentation

DABNet: Depth-wise Asymmetric Bottleneck for Real-time Semantic

October 1, 2019. Cityscapes and CamVid datasets demonstrate that the proposed DABNet achieves a bal- ... 9.0 and cuDNN V7 on the Pytorch platform.

MEAL: Manifold Embedding-based Active Learning

[7] and Cityscapes [10] datasets for autonomous driving. In summary our contributions are: ... geNet dataset [28] and implemented using PyTorch [27].

Generalizing to the Open World: Deep Visual Odometry with Online

March 29, 2021. including Cityscapes to KITTI and outdoor KITTI to indoor ... depth has a strong reliance on the training dataset. During ... pytorch 2017.

Zero-shot Adversarial Quantization

March 30, 2021. We evaluate our approach on the following six datasets: CIFAR10 CIFAR100

Benchmarking Robustness in Object Detection: Autonomous Driving

March 31, 2020. The three resulting benchmark datasets termed. Pascal-C

A PyTorch Semantic Segmentation Toolbox - Xinggang Wang

In this work, we provide an introduction of PyTorch implementations for ... The cityscapes dataset for semantic urban scene understanding. In CVPR, pages 3213–3223

Accelerating Neural Network Training with Distributed

However the training of large networks requires large datasets. As the sizes ... work using the Cityscapes dataset. Data Loader. PyTorch. Local Optimizer.

Unsupervised Domain Adaptation for Semantic Segmentation by

December 23, 2020. source domain and real-world dataset (Cityscapes) without ... We implement with PyTorch (Paszke et al. 2017) and all.

DEEP SEMANTIC SEGMENTATION FOR THE OFF-ROAD

We also demonstrate our off-road dataset and simulated dataset for semantic segmentation. Moreover, we achieve 75.6 mIoU on the Cityscapes validation set and 85.2 ... We transformed encoders and decoders, trained using PyTorch,

Temporally Distributed Networks for Fast Video - Federico Perazzi

Experiments on Cityscapes, CamVid, and NYUD-v2 ... three challenging datasets including Cityscapes, Camvid, ... Titan Xp in the Pytorch framework. We found

A Dataset for Semantic Rail Scene Understanding - CVF Open Access

The baseline for this experiment is an FRRNB model [18] pretrained on Cityscapes which is fine-tuned on RailSem19. The model is part of the pytorch-semseg

Efficient Fast Semantic Segmentation Using - IEEE Xplore

April 28, 2020. Our experiments on the CamVid and Cityscapes datasets show that the ... The proposed method is implemented using PyTorch 1.0.1 [33]

An open-source project for real-time image semantic segmentation

October 28, 2019. ... environment with at least PyTorch 0.4.1, CUDA 9.0, cuDNN 7.1, and Python 3.6. If one wants to train the model, the Cityscapes dataset [7] should