adam a method for stochastic optimization iclr 2015 bibtex

|

Using BIBTEX to Automatically Generate Labeled Data for Citation

Adam: A method for stochastic optimization. In International Conference for Learning Representations (ICLR), 2015. John Lafferty |

|

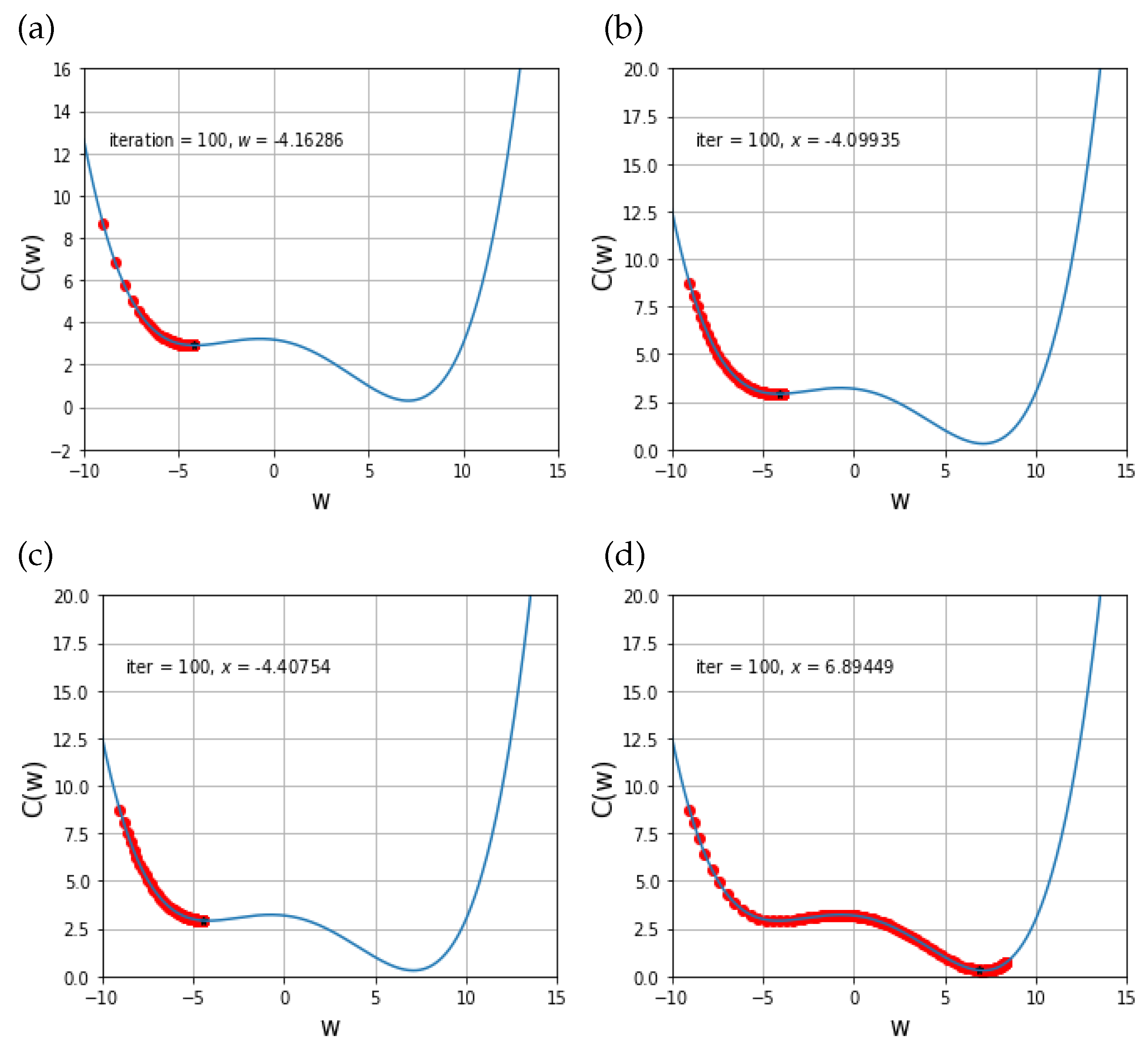

INCORPORATING NESTEROV MOMENTUM INTO ADAM

1504–1512. 2015. John Duchi |

|

SGDR: STOCHASTIC GRADIENT DESCENT WITH WARM

AdaDelta (Zeiler, 2012) and Adam (Kingma & Ba |

|

Using BibTeX to Automatically Generate Labeled Data for Citation

9 Jun 2020 · Adam: A method for stochastic optimization. In International Conference for Learning Representations (ICLR), 2015. |

|

TENT: FULLY TEST-TIME ADAPTATION BY ENTROPY MINIMIZATION

Adam: A method for stochastic optimization. In ICLR 2015. A. Krizhevsky |

|

Closing the Generalization Gap of Adaptive Gradient Methods in

work we show that adaptive gradient methods such the stochastic nonconvex optimization setting. Ex- ... Adam [Kingma and Ba |

|

Customized Nonlinear Bandits for Online Response Selection in

son sampling method that is applied to a polynomial feature ... Adam: A method for stochastic optimization. In ICLR. Kveton B.; Wen |

|

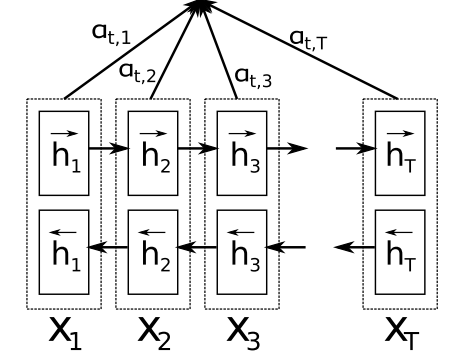

7181-attention-is-all-you-need.pdf

Adam: A method for stochastic optimization. In ICLR 2015. [18] Oleksii Kuchaiev and Boris Ginsburg. Factorization tricks for LSTM networks. arXiv preprint. |

|

Self-Attentive Sequential Recommendation

20 Aug 2018 · recommender systems” Computer |

|

INCORPORATING NESTEROV MOMENTUM INTO ADAM

Workshop track - ICLR 2016 ... ized optimization algorithm Adam (Kingma & Ba, 2014) ... Adam has two main ... has a provably better bound than gradient descent for convex, non-stochastic objectives–can be rewritten as a ... tional autoencoder (adapted from Jones (2015)) with three conv layers and two dense layers in each |

|

Meta-Learning with Implicit Gradients - NIPS Proceedings - NeurIPS

an approach for optimization-based meta-learning with deep neural networks that removes the need ... methods, Adam [28], or gradient descent with momentum can also be used without modification ... Adam: A method for stochastic optimization. International Conference on Learning Representations (ICLR), 2015 |

|

Attention is All you Need - NIPS Proceedings

Adam: A method for stochastic optimization. In ICLR, 2015. [18] Oleksii Kuchaiev and Boris Ginsburg. Factorization tricks for LSTM networks. arXiv preprint arXiv: |

|

Unsupervised Neural Hidden Markov Models - Association for

5 Nov 2016 · a generative neural approach to HMMs and demonstrate how this framework ... 2015. Adam: A method for stochastic optimization. The International Conference on Learning Representations (ICLR). Diederik P. Kingma and |

|

NewsQA: A Machine Comprehension Dataset - Association for

3 Aug 2017 · {adam trischler, tong wang, eric yuan, justin harris, alsordon, phbachma ... a similar approach with machine comprehension (MC) ... The CNN/Daily Mail corpus (Hermann et al., 2015) consists of news ... ICLR. Rudolf Kadlec, Martin Schmid, Ondrej Bajgar, and ... method for stochastic optimization. ICLR |

|

Chainer: a Next-Generation Open Source Framework for Deep

Adam: A method for stochastic optimization. CoRR, abs/1412.6980, 2014. [11] D. P. Kingma and M. Welling. Auto-encoding variational Bayes. ICLR, |

|

Just Jump: Dynamic Neighborhood Aggregation in Graph Neural

We propose a dynamic neighborhood aggregation (DNA) procedure guided ... Adam: A method for stochastic optimization. In ICLR, 2015. ... tation network datasets where nodes represent documents, and edges represent (undirected) citation |