Adam optimizer Keras

Classifying dog and cat images with Keras - Final project

11 June 2019. Optimizer: Adam. Learning rate: 0.001. Dropout: 0.25. (Slide 8/18, Cecilie Estrid)
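
The slide sets dropout to 0.25. To show roughly what that rate means at training time, here is a minimal pure-Python sketch of inverted dropout, the scheme the Keras `Dropout` layer documents (dropped units are set to 0 and kept units are scaled by `1 / (1 - rate)`). The helper name `inverted_dropout` and the seeded RNG are illustrative assumptions, not part of the project.

```python
import random


def inverted_dropout(values, rate=0.25, seed=0):
    """Training-time inverted dropout (hypothetical helper).

    Each value is zeroed with probability `rate`; survivors are scaled by
    1 / (1 - rate) so each output keeps the same expected value as its input.
    """
    if not 0.0 <= rate < 1.0:
        raise ValueError("rate must be in [0, 1)")
    rng = random.Random(seed)  # seeded so the example is reproducible
    keep_scale = 1.0 / (1.0 - rate)
    return [0.0 if rng.random() < rate else v * keep_scale for v in values]


sample = inverted_dropout([1.0] * 8, rate=0.25)
print(sample)  # every entry is either 0.0 or 4/3

# Over many units, the mean stays close to the undropped mean of 1.0.
many = inverted_dropout([1.0] * 100_000, rate=0.25)
print(sum(many) / len(many))
```

At inference time Keras passes inputs through unchanged, which is why the rescaling is applied during training instead.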

Supplementary Materials

Optimizer: Adam, with the default parameters of `tf.keras.optimizers.Adam`. Extra Batch Normalization layers: yes, inside the densely connected layer at the top.
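
Because the project keeps Adam's defaults, it may help to see what those defaults control. Below is a minimal scalar sketch of the textbook Adam update (Kingma and Ba), with `learning_rate=0.001`, `beta_1=0.9`, `beta_2=0.999` and `epsilon=1e-7` matching the `tf.keras.optimizers.Adam` defaults. The helper `adam_minimize` and the toy objective are hypothetical, and Keras places `epsilon` slightly differently internally, so results will not match it bit for bit.

```python
import math


def adam_minimize(grad_fn, x0, steps, learning_rate=0.001,
                  beta_1=0.9, beta_2=0.999, epsilon=1e-7):
    """Minimize a scalar function with the textbook Adam update (hypothetical helper).

    The default hyperparameters mirror those of tf.keras.optimizers.Adam.
    """
    x, m, v = x0, 0.0, 0.0
    for t in range(1, steps + 1):
        g = grad_fn(x)
        m = beta_1 * m + (1 - beta_1) * g       # running mean of gradients
        v = beta_2 * v + (1 - beta_2) * g * g   # running mean of squared gradients
        m_hat = m / (1 - beta_1 ** t)           # bias corrections for the
        v_hat = v / (1 - beta_2 ** t)           # zero-initialized averages
        x -= learning_rate * m_hat / (math.sqrt(v_hat) + epsilon)
    return x


def grad(x):
    return 2 * (x - 3)  # gradient of f(x) = (x - 3)^2, minimized at x = 3


first = adam_minimize(grad, 0.0, steps=1)
print(first)  # close to 0.001: the first step is about learning_rate in size
x_star = adam_minimize(grad, 0.0, steps=5000, learning_rate=0.01)
print(x_star)
```

The larger `learning_rate=0.01` in the second call is only there so the toy problem converges within a few thousand steps.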

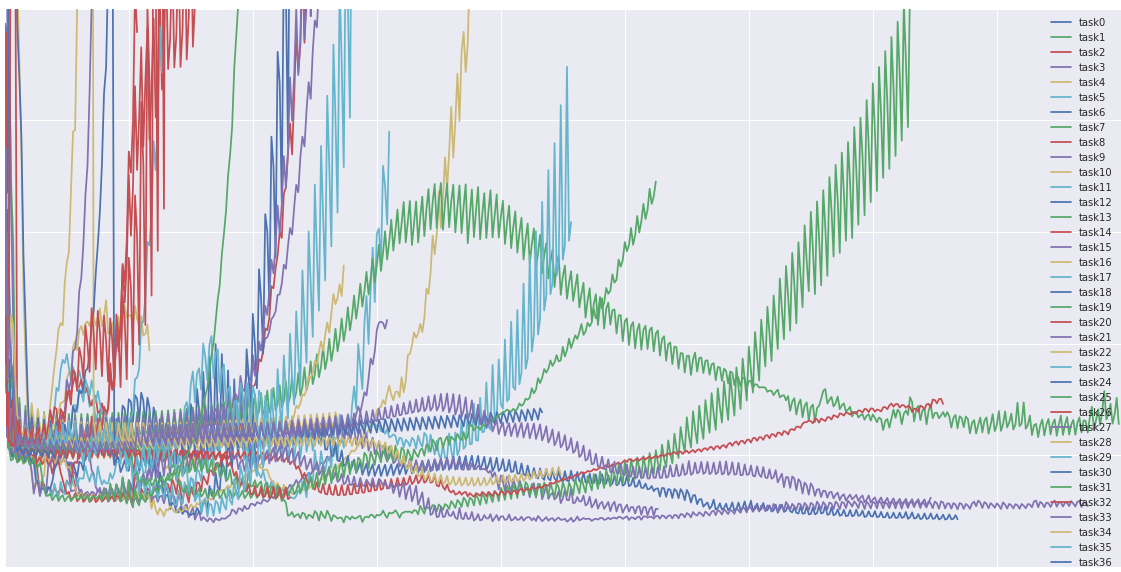

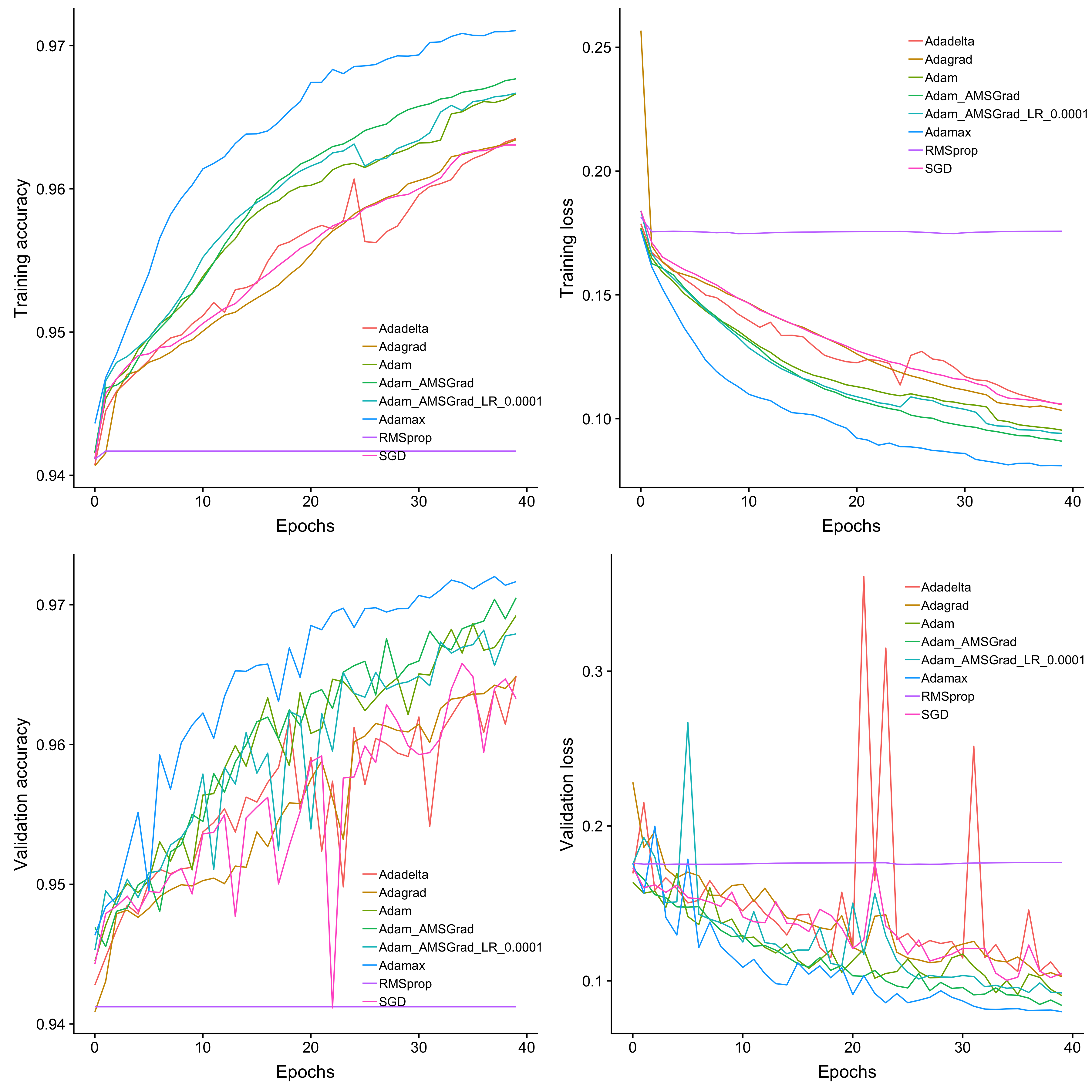

Comparison of Keras Optimizers for Earthquake Signal

... gradient descent optimization algorithm found in the Keras library. The optimizers used in this study were Adadelta, Adagrad, ...
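
Adagrad, one of the optimizers compared in the study, divides each parameter's step by the square root of that parameter's accumulated squared gradients. A minimal pure-Python sketch, assuming a hypothetical toy quadratic f(x, y) = x² + 100y² and starting the accumulator at 0.1 like the `initial_accumulator_value` default of `tf.keras.optimizers.Adagrad`; the learning rate of 0.1 is chosen for the demo, not taken from the study.

```python
import math


def adagrad_minimize(grad_fn, params, steps, learning_rate=0.1,
                     initial_accumulator_value=0.1, epsilon=1e-7):
    """Per-coordinate Adagrad (hypothetical helper)."""
    params = list(params)
    accum = [initial_accumulator_value] * len(params)  # sum of squared gradients
    for _ in range(steps):
        grads = grad_fn(params)
        for i, g in enumerate(grads):
            accum[i] += g * g
            # Coordinates with large past gradients get smaller steps.
            params[i] -= learning_rate * g / (math.sqrt(accum[i]) + epsilon)
    return params


def grad(p):
    x, y = p
    return [2 * x, 200 * y]  # gradient of x^2 + 100 * y^2


x_final, y_final = adagrad_minimize(grad, [1.0, 1.0], steps=2000)
print(x_final, y_final)
```

Although the y direction is 100 times steeper, both coordinates take a first step of about 0.1, because each gradient is normalized by its own history.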

Intro to Keras

Adam: `keras.optimizers.Adam()`. The Adam optimizer is an algorithm that extends SGD and has grown quite popular in deep learning applications in ...

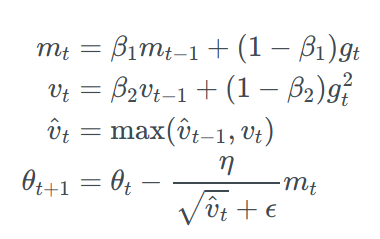

Adam vs. SGD: Closing the generalization gap on image classification

Abstract. Adam is an adaptive deep neural network training optimizer that has been widely used across a variety of applications.

Exploiting the Power of Levenberg-Marquardt Optimizer with

... using the LM optimizer performs much better than using the Adam optimizer. Because there is no built-in implementation of LM in TensorFlow and Keras ...
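
Since the snippet notes that TensorFlow and Keras ship no built-in Levenberg-Marquardt (LM) optimizer, here is a minimal pure-Python sketch of the classic LM loop for a two-parameter curve fit. It is not the paper's implementation: the model y = a·exp(b·x), the synthetic noise-free data, the Marquardt-style diagonal damping and the factor-of-10 schedule for the damping parameter `lam` are all assumptions chosen for illustration.

```python
import math


def lm_fit_exp(xs, ys, a, b, iters=100, lam=1e-3):
    """Fit y = a * exp(b * x) by Levenberg-Marquardt (hypothetical helper)."""

    def sse(a, b):
        try:
            return sum((y - a * math.exp(b * x)) ** 2 for x, y in zip(xs, ys))
        except OverflowError:  # a wild trial step: treat it as infinitely bad
            return math.inf

    cost = sse(a, b)
    for _ in range(iters):
        # Accumulate J^T J (2x2) and J^T r, where J is the model's Jacobian.
        jtj = [[0.0, 0.0], [0.0, 0.0]]
        jtr = [0.0, 0.0]
        for x, y in zip(xs, ys):
            e = math.exp(b * x)
            jac = (e, a * x * e)  # partial derivatives w.r.t. a and b
            r = y - a * e         # residual at the current parameters
            for p in range(2):
                jtr[p] += jac[p] * r
                for q in range(2):
                    jtj[p][q] += jac[p] * jac[q]
        # Damped normal equations (J^T J + lam * diag(J^T J)) delta = J^T r,
        # solved for the 2x2 case with Cramer's rule.
        m00 = jtj[0][0] * (1.0 + lam)
        m11 = jtj[1][1] * (1.0 + lam)
        m01 = jtj[0][1]
        det = m00 * m11 - m01 * m01
        da = (jtr[0] * m11 - m01 * jtr[1]) / det
        db = (m00 * jtr[1] - m01 * jtr[0]) / det
        new_cost = sse(a + da, b + db)
        if new_cost < cost:  # step helped: accept it, move toward Gauss-Newton
            a, b, cost = a + da, b + db, new_cost
            lam /= 10.0
        else:                # step hurt: reject it, move toward gradient descent
            lam *= 10.0
    return a, b


xs = [0.25 * i for i in range(9)]           # 0.0, 0.25, ..., 2.0
ys = [2.0 * math.exp(0.5 * x) for x in xs]  # synthetic data with a=2, b=0.5
a_fit, b_fit = lm_fit_exp(xs, ys, a=1.0, b=0.0)
print(a_fit, b_fit)
```

The damping parameter is the design choice that distinguishes LM from plain Gauss-Newton: small `lam` gives fast second-order-like steps near a solution, large `lam` gives short, cautious steps far from it.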

Dense layers

Define a dense layer: `dense = tf.keras.activations.sigmoid(product + bias)`. Adaptive moment (Adam) optimizer: `tf.keras.optimizers.Adam()`, with parameter `learning_rate`.
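
The snippet defines a dense layer as a sigmoid applied to a matrix product plus a bias. The same computation for a single input vector, written out in pure Python; the weight layout `weights[i][j]` (input i, unit j) mirrors the `(input_dim, units)` shape of a Keras `Dense` kernel, and the numbers are made up for the example.

```python
import math


def dense_sigmoid(inputs, weights, bias):
    """sigmoid(inputs @ weights + bias) for one input vector (hypothetical helper)."""
    outputs = []
    for j in range(len(bias)):
        # Dot product of the input with column j of the weight matrix
        product = sum(inputs[i] * weights[i][j] for i in range(len(inputs)))
        outputs.append(1.0 / (1.0 + math.exp(-(product + bias[j]))))
    return outputs


out = dense_sigmoid([1.0, 2.0], [[0.5, -1.0], [0.25, 0.5]], [0.0, 0.0])
print(out)  # products are 1.0 and 0.0, so roughly [0.731, 0.5]
```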

Stock price forecast with deep learning

21 March 2021. ... with 3 different optimizers: SGD, RMSprop, ...

The Old and the New: Can Physics-Informed Deep-Learning

5 July 2021. ... without using the Adam optimizer can rapidly converge to a local minimum (for the ... sciANN is a DSL based on and similar to Keras [10].

Deep Learning with TensorFlow / Keras

10 April 2018. The following steps are standard: #compilation - learning algorithm: `modelMc.compile(loss="binary_crossentropy", optimizer="adam"` ...
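
The compile call pairs `optimizer="adam"` with `loss="binary_crossentropy"`. A pure-Python sketch of that loss averaged over a batch; clipping predictions to `[eps, 1 - eps]` with `eps=1e-7` approximates what Keras does to avoid `log(0)`, but treat the exact clipping as an assumption.

```python
import math


def binary_crossentropy(y_true, y_pred, eps=1e-7):
    """Mean binary cross-entropy over a batch (hypothetical helper)."""
    total = 0.0
    for y, p in zip(y_true, y_pred):
        p = min(max(p, eps), 1 - eps)  # clip so log() never sees 0
        total += -(y * math.log(p) + (1 - y) * math.log(1 - p))
    return total / len(y_true)


loss = binary_crossentropy([1, 0], [0.9, 0.2])
print(loss)  # (-ln 0.9 - ln 0.8) / 2, roughly 0.164
```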

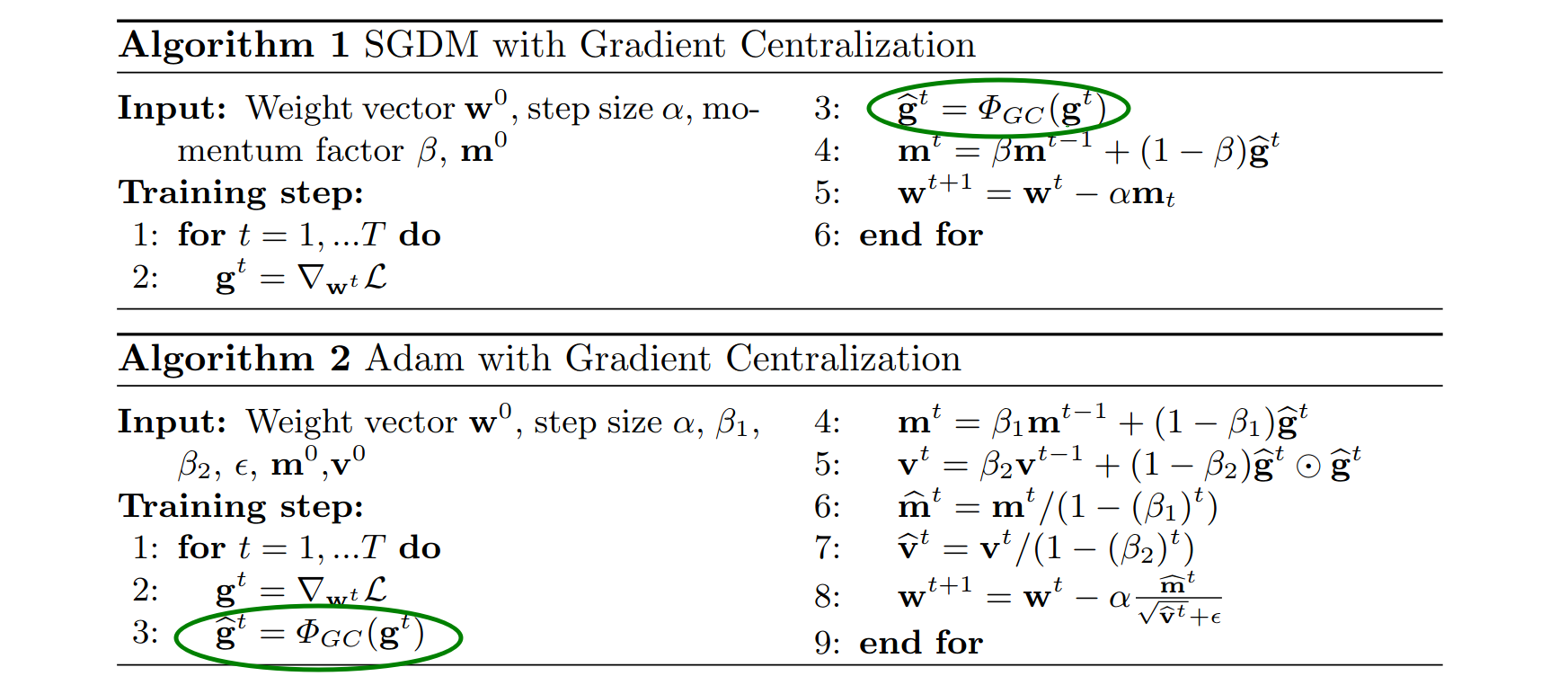

More on Optimization Techniques

Momentum is added to an SGD optimizer by adjusting the `momentum` parameter of the SGD method. Adaptive Moments (Adam): `tf.keras.optimizers.Adam()`
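
Keras documents its (non-Nesterov) SGD momentum update as `velocity = momentum * velocity - learning_rate * g` followed by `w = w + velocity`. A minimal scalar sketch of that rule next to plain SGD on the toy objective (x - 3)²; the helper name and the objective are hypothetical.

```python
def sgd_minimize(grad_fn, x0, steps, learning_rate=0.01, momentum=0.0):
    """SGD with optional momentum (hypothetical helper)."""
    x, velocity = x0, 0.0
    for _ in range(steps):
        # The velocity accumulates past gradients; momentum=0.0 gives plain SGD.
        velocity = momentum * velocity - learning_rate * grad_fn(x)
        x += velocity
    return x


def grad(x):
    return 2 * (x - 3)  # gradient of (x - 3)^2, minimized at x = 3


plain = sgd_minimize(grad, 0.0, steps=300)
with_momentum = sgd_minimize(grad, 0.0, steps=300, momentum=0.9)
print(abs(plain - 3), abs(with_momentum - 3))
```

On this problem the momentum run ends far closer to the minimum after the same number of steps, although its iterate overshoots and oscillates on the way in.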

Sheet 2: TensorFlow, Keras, first steps - LaBRI

Also install the keras library: `pip install keras`. 2. Example 1: The ... of the squares. Optimizer: `optimizer = tf.train.GradientDescentOptimizer(0.01)`
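
The worksheet minimizes a sum of squares with `GradientDescentOptimizer(0.01)`. The same idea without TensorFlow: plain gradient descent with a learning rate of 0.01, fitting a line to four hypothetical points by minimizing the sum of squared errors. The gradients are written out by hand here, which is the step TensorFlow automates.

```python
def fit_line_gd(xs, ys, learning_rate=0.01, steps=5000):
    """Fit y = w * x + b by gradient descent on the sum of squares (hypothetical helper)."""
    w, b = 0.0, 0.0
    for _ in range(steps):
        # Gradient of L = sum((w*x + b - y)^2) with respect to w and b
        grad_w = sum(2 * (w * x + b - y) * x for x, y in zip(xs, ys))
        grad_b = sum(2 * (w * x + b - y) for x, y in zip(xs, ys))
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b


xs = [0.0, 1.0, 2.0, 3.0]
ys = [1.0, 3.0, 5.0, 7.0]  # points on y = 2x + 1
w, b = fit_line_gd(xs, ys)
print(w, b)
```

Because the loss is a sum rather than a mean, its gradient grows with the number of points, so a learning rate that is stable for four points may diverge on a larger dataset.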

TensorFlow: Deep learning with Keras - PRACE Events

Choose a gradient-based optimizer: `optimizer = tf.keras.optimizers.Adam()`. Perform gradient descent: `for i in range(num_steps): with tf.GradientTape() as tape:` ...

Introduction to Keras TensorFlow - EUDAT

`hvd.init()`; `opt = tf.keras.optimizers.Adam(0.001 * hvd.size())`; `opt = hvd.DistributedOptimizer(opt)`; `model.compile(loss='categorical_crossentropy', optimizer=opt,` ...
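
The Horovod snippet multiplies Adam's base learning rate by `hvd.size()` (the number of workers) and wraps the optimizer in `hvd.DistributedOptimizer`, which averages gradients across workers before each update. A framework-free sketch of one such synchronous data-parallel step, using plain SGD instead of Adam for brevity; the linear model, the data and the two-worker split are hypothetical.

```python
def shard_gradient(w, shard):
    """Mean-squared-error gradient for the model y ~ w * x on one worker's shard."""
    return sum(2 * (w * x - y) * x for x, y in shard) / len(shard)


def data_parallel_step(w, shards, base_lr=0.001):
    """One synchronous step: per-worker gradients, averaged, then applied."""
    grads = [shard_gradient(w, shard) for shard in shards]  # one per worker
    avg_grad = sum(grads) / len(grads)  # the result of an allreduce-average
    lr = base_lr * len(shards)          # mirrors Adam(0.001 * hvd.size())
    return w - lr * avg_grad, avg_grad


data = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0), (4.0, 8.0)]
shards = [data[:2], data[2:]]  # two equally sized worker shards
new_w, avg_grad = data_parallel_step(0.0, shards)
full_batch_grad = shard_gradient(0.0, data)
print(new_w, avg_grad, full_batch_grad)
```

With equally sized shards the averaged gradient equals the full-batch gradient, so the main change from single-worker training is a larger effective batch, which is the usual motivation for scaling the learning rate with the worker count.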

Source code of a program that tests Keras using the MNIST DB

`keras.optimizers.Adadelta(lr=1.0, rho=0.95, epsilon=None, decay=0.0)` # (5) Adam optimizer # Using the default values for all parameters other than lr is recommended # reference: A Method for ...
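
The snippet configures Adadelta with `lr=1.0` and `rho=0.95`. A minimal scalar sketch of the Adadelta rule from Zeiler's paper, in which each step is scaled by the ratio of the running RMS of past updates to the running RMS of past gradients; multiplying by `lr` follows the Keras convention, and with `lr=1.0` it reduces to the paper's form. `epsilon=1e-6` is an assumption, because `epsilon=None` in the snippet defers to the backend default.

```python
import math


def adadelta_minimize(grad_fn, x0, steps, lr=1.0, rho=0.95, epsilon=1e-6):
    """Scalar Adadelta (hypothetical helper)."""
    x = x0
    avg_sq_grad = 0.0    # running average E[g^2]
    avg_sq_update = 0.0  # running average E[dx^2]
    for _ in range(steps):
        g = grad_fn(x)
        avg_sq_grad = rho * avg_sq_grad + (1 - rho) * g * g
        # RMS of past updates over RMS of past gradients sets the step size.
        update = -math.sqrt(avg_sq_update + epsilon) / math.sqrt(avg_sq_grad + epsilon) * g
        avg_sq_update = rho * avg_sq_update + (1 - rho) * update * update
        x += lr * update
    return x


def grad(x):
    return 2 * (x - 3)  # gradient of (x - 3)^2, minimized at x = 3


x1 = adadelta_minimize(grad, 0.0, steps=1)
x100 = adadelta_minimize(grad, 0.0, steps=100)
print(x1, x100)
```

The first step is tiny (on the order of `sqrt(epsilon)`) and the steps grow as E[dx²] builds up, which is why Adadelta needs no hand-tuned learning rate but can start slowly.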

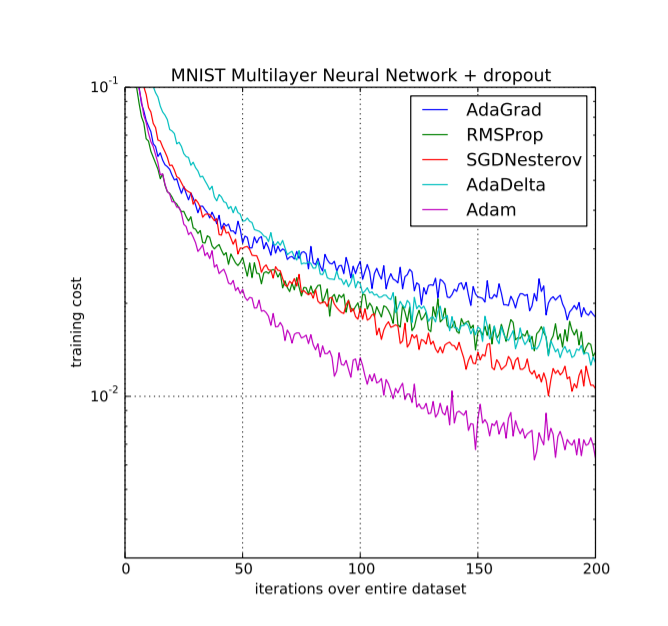

Adaptive Learning Rates - CEDAR

5. Algorithms with adaptive learning rates: 1. AdaGrad, 2. RMSProp, 3. Adam, 4. ... (Srihari). Keras adaptive optimizers: RMSprop, Adagrad, Adadelta, Adam.
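
Of the adaptive optimizers listed, RMSprop is the simplest to write down: it divides each gradient by a running RMS of recent gradients. A minimal two-parameter sketch using the `rho=0.9` and `epsilon=1e-7` defaults of `tf.keras.optimizers.RMSprop` (without momentum or centering), applied to a hypothetical ill-conditioned quadratic f(x, y) = x² + 100y²; the learning rate of 0.01 is chosen for the demo.

```python
import math


def rmsprop_minimize(grad_fn, params, steps, learning_rate=0.01, rho=0.9, epsilon=1e-7):
    """Per-coordinate RMSprop (hypothetical helper, no momentum or centering)."""
    params = list(params)
    avg_sq = [0.0] * len(params)  # running average of squared gradients
    for _ in range(steps):
        grads = grad_fn(params)
        for i, g in enumerate(grads):
            avg_sq[i] = rho * avg_sq[i] + (1 - rho) * g * g
            params[i] -= learning_rate * g / (math.sqrt(avg_sq[i]) + epsilon)
    return params


def grad(p):
    x, y = p
    return [2 * x, 200 * y]  # gradient of x^2 + 100 * y^2


first = rmsprop_minimize(grad, [1.0, 1.0], steps=1)
print(first)  # both coordinates move by about learning_rate / sqrt(1 - rho)
final = rmsprop_minimize(grad, [1.0, 1.0], steps=2000)
print(final)
```

Unlike Adagrad, the running average forgets old gradients, so the effective step size does not shrink forever; with a constant learning rate the iterate ends up hovering in a small band around the minimum.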

![Keras Deep Learning Cookbook by Rajdeep Dua, Manpreet Singh](https://blog.paperspace.com/content/images/2018/06/optimizers7.gif)