L2 regularization

L1 regularization adds the sum of the absolute values of the weights to the loss as a penalty, while L2 regularization adds the sum of their squares.
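The two penalties can be sketched in a few lines of Python. This is a minimal illustration only: the function names and the strength parameter `lam` are hypothetical, and some formulations scale the L2 term by 1/2.

```python
def l1_penalty(weights, lam):
    """L1 penalty: lam times the sum of absolute weight values."""
    return lam * sum(abs(w) for w in weights)


def l2_penalty(weights, lam):
    """L2 penalty: lam times the sum of squared weight values."""
    return lam * sum(w * w for w in weights)


if __name__ == "__main__":
    weights = [0.5, -2.0, 0.0, 1.5]  # made-up example weights
    lam = 0.1                        # assumed regularization strength
    print("L1 penalty:", l1_penalty(weights, lam))
    print("L2 penalty:", l2_penalty(weights, lam))
```

Either value is added to the data loss before computing gradients; the large weight (-2.0) dominates the L2 penalty far more than the L1 penalty.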

L2 regularization is also known as weight decay.

Dropout: This method randomly drops (sets to zero) a certain proportion of the neurons during training.
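A rough sketch of dropout on a list of activations follows. It assumes the common "inverted dropout" variant, in which surviving activations are rescaled by 1/(1 - p) during training; the text above does not specify this, and the function name and arguments are hypothetical.

```python
import random


def dropout(activations, drop_prob, rng):
    """Zero each activation with probability drop_prob (training time only).

    Assumption: inverted dropout, so kept values are divided by the keep
    probability and the expected activation stays unchanged.
    """
    if not 0.0 <= drop_prob < 1.0:
        raise ValueError("drop_prob must be in [0, 1)")
    keep_prob = 1.0 - drop_prob
    return [a / keep_prob if rng.random() < keep_prob else 0.0
            for a in activations]


if __name__ == "__main__":
    rng = random.Random(0)  # fixed seed so the run is repeatable
    print(dropout([1.0, 2.0, 3.0, 4.0], drop_prob=0.5, rng=rng))
```

At inference time no units are dropped, which corresponds to calling the function with `drop_prob=0.0`.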

What is L1 loss and L2 loss?

L1 and L2 loss

L1 and L2 are two common loss functions in machine learning and deep learning; training minimizes them to reduce prediction error.

The L1 loss function is also known as Least Absolute Deviations (LAD).

The L2 loss function is also known as Least Squares Error (LS).
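As a small illustration, both losses can be computed directly from targets and predictions. The function names are hypothetical, and the sketch sums over samples rather than averaging (the mean versions are MAE and MSE).

```python
def l1_loss(y_true, y_pred):
    """Least Absolute Deviations: sum of absolute errors."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred))


def l2_loss(y_true, y_pred):
    """Least Squares Error: sum of squared errors."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred))


if __name__ == "__main__":
    y_true = [3.0, -0.5, 2.0]  # made-up targets
    y_pred = [2.5, 0.0, 2.0]   # made-up predictions
    print("L1 loss:", l1_loss(y_true, y_pred))
    print("L2 loss:", l2_loss(y_true, y_pred))
```

Because errors are squared, a single large error (an outlier) raises the L2 loss much more than the L1 loss.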

What is L2 regularization?

L2 regularization, known as weight decay in the context of neural networks, is commonly applied to the weights of the neural network layers.

It helps prevent overfitting by shrinking the weights, making the network less sensitive to small changes in input data. (May 26, 2023)
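The "weight decay" name can be seen in a single gradient step. The sketch below assumes plain SGD and a penalty of (lam/2) times the sum of squared weights, whose gradient is lam * w; the function and parameter names are hypothetical. For adaptive optimizers such as Adam, an L2 penalty and decoupled weight decay are generally not the same thing.

```python
def sgd_step_l2(weights, grads, lr, lam):
    """One SGD step on loss + (lam / 2) * sum(w^2).

    The penalty contributes lam * w to each gradient, so every step
    also multiplies the weight by (1 - lr * lam): the "decay".
    """
    return [w - lr * (g + lam * w) for w, g in zip(weights, grads)]


if __name__ == "__main__":
    weights = [1.0, -2.0]
    zero_grads = [0.0, 0.0]  # no data gradient, to isolate the decay effect
    for step in range(3):
        weights = sgd_step_l2(weights, zero_grads, lr=0.1, lam=0.5)
        print(step, weights)
```

With a zero data gradient, each step scales the weights by 0.95, shrinking them toward zero without ever reaching it exactly.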

Why use L2 regularization over L1?

From a practical standpoint, L1 tends to shrink coefficients to zero whereas L2 tends to shrink coefficients evenly.

L1 is therefore useful for feature selection, as we can drop any variables associated with coefficients that go to zero.

L2, on the other hand, is useful when you have collinear/codependent features.
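The collinearity point can be illustrated with two identical features. In this sketch (hypothetical helper name, made-up data), ordinary least squares has no unique solution, while the ridge system (XᵀX + lam·I) w = Xᵀy does, and it splits the weight evenly between the duplicated features.

```python
def ridge_two_features(X, y, lam):
    """Solve (X^T X + lam * I) w = X^T y for exactly two features.

    Uses Cramer's rule on the 2x2 system; no intercept is fitted.
    """
    a = sum(r[0] * r[0] for r in X) + lam
    b = sum(r[0] * r[1] for r in X)
    d = sum(r[1] * r[1] for r in X) + lam
    p = sum(r[0] * t for r, t in zip(X, y))
    q = sum(r[1] * t for r, t in zip(X, y))
    det = a * d - b * b
    if det == 0:
        raise ValueError("singular system: collinear features need lam > 0")
    return [(p * d - b * q) / det, (a * q - b * p) / det]


if __name__ == "__main__":
    X = [[1.0, 1.0], [2.0, 2.0], [3.0, 3.0]]  # second column duplicates the first
    y = [2.0, 4.0, 6.0]
    print(ridge_two_features(X, y, lam=1.0))  # two equal weights
```

Calling it with `lam=0.0` on this data raises the `ValueError`, which is the unregularized, ill-posed case.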

Regularization in Deep Learning

In the context of deep learning models, most regularization strategies revolve around regularizing estimators. So what does regularizing an estimator mean? The bias-variance tradeoff sheds more light on this: regularization of an estimator works by trading increased bias for reduced variance.

Parameter Norm Penalties

Under this kind of regularization, the capacity of models such as neural networks, linear regression, or logistic regression is limited by adding a parameter norm penalty Ω(θ) to the objective function J. The regularized objective can be written as $\tilde{J}(\theta) = J(\theta) + \alpha\,\Omega(\theta)$, where α ∈ [0, ∞) is a hyperparameter that weights the contribution of the norm penalty term Ω relative to the standard objective J.
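For reference, the two most common choices of Ω and their (sub)gradients can be written as follows. This is a sketch in the notation of the paragraph above (Ω, θ, α); the weight vector w and the factor 1/2 in the L2 case are conventions assumed here, not taken from the source.

```latex
\Omega_{L^2}(\theta) = \tfrac{1}{2}\lVert w \rVert_2^2 = \tfrac{1}{2}\sum_i w_i^2,
\qquad
\nabla_w \bigl(\alpha\,\Omega_{L^2}\bigr) = \alpha\, w

\Omega_{L^1}(\theta) = \lVert w \rVert_1 = \sum_i \lvert w_i \rvert,
\qquad
\alpha\,\operatorname{sign}(w) \in \partial_w \bigl(\alpha\,\Omega_{L^1}\bigr)
```

The L2 gradient is proportional to each weight, so large weights are pushed harder than small ones; the L1 subgradient has constant magnitude α, which is why small weights can be driven all the way to zero.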

L1 Parameter Regularization

L1 regularization is one way to regularize a model. It changes the objective rather than the optimizer, so the penalized problem is still typically solved with gradient-based optimization. Lasso Regression (Least Absolute Shrinkage and Selection Operator) adds the “absolute value of magnitude” of the coefficients as a penalty term to the loss function.
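One standard way to write the Lasso objective is shown below. The symbols are assumptions made for illustration: n samples, p features, inputs x_ij, targets y_i, coefficients β_j, and penalty strength λ ≥ 0.

```latex
\min_{\beta}\;
\sum_{i=1}^{n} \Bigl( y_i - \sum_{j=1}^{p} x_{ij}\,\beta_j \Bigr)^{2}
\;+\; \lambda \sum_{j=1}^{p} \lvert \beta_j \rvert
```

The first term is the usual squared-error loss; the second is the L1 penalty. Setting λ = 0 recovers ordinary least squares.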

L2 Parameter Regularization

The regression model that uses L2 regularization is called Ridge Regression. Regularization increases the penalty as model complexity increases. The regularization parameter (lambda) penalizes all the parameters except the intercept, so that the model generalizes and does not overfit. Ridge regression adds the “squared magnitude” of the coefficients as a penalty term to the loss function.
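A one-feature sketch makes the "except the intercept" point concrete. It assumes the objective sum((y - b - w*x)^2) + lam * w^2, for which centering the data gives a closed form; the function name and the data are hypothetical.

```python
def ridge_1d(xs, ys, lam):
    """Ridge regression with one feature and an unpenalized intercept.

    Minimizes sum((y - b - w*x)^2) + lam * w^2. Only the slope w is
    shrunk; the intercept b is chosen freely after centering.
    """
    n = len(xs)
    x_mean = sum(xs) / n
    y_mean = sum(ys) / n
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    sxx = sum((x - x_mean) ** 2 for x in xs)
    slope = sxy / (sxx + lam)
    intercept = y_mean - slope * x_mean
    return slope, intercept


if __name__ == "__main__":
    xs = [0.0, 1.0, 2.0, 3.0]
    ys = [10.0, 12.0, 14.0, 16.0]  # exactly y = 2x + 10
    for lam in (0.0, 5.0, 50.0):
        print(lam, ridge_1d(xs, ys, lam))
```

As `lam` grows, the slope shrinks toward zero while the fitted line keeps passing through the mean point of the data, so the large offset of 10 is never penalized.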

Differences, Usage and Importance

It is important to understand the distinction between these two methods. Compared to L2 regularization, L1 regularization yields a sparser solution. The sparsity induced by L1 regularization has been used extensively as a feature selection mechanism in machine learning. Feature selection is a mechanism which inherently si
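The sparsity effect can be sketched with a tiny Lasso solver. The example below uses proximal gradient descent (ISTA) on the objective (1/2n)·||Xw - y||² + lam·||w||₁; this solver choice, the function names, and the toy data are assumptions for illustration, not a method taken from the source.

```python
def soft_threshold(value, threshold):
    """Shrink value toward zero by threshold; return 0.0 inside the band."""
    if value > threshold:
        return value - threshold
    if value < -threshold:
        return value + threshold
    return 0.0


def lasso_ista(X, y, lam, lr, steps):
    """Minimize (1 / 2n) * ||Xw - y||^2 + lam * ||w||_1 with ISTA."""
    n, d = len(X), len(X[0])
    w = [0.0] * d
    for _ in range(steps):
        residuals = [sum(xi * wi for xi, wi in zip(row, w)) - target
                     for row, target in zip(X, y)]
        grad = [sum(r * row[j] for r, row in zip(residuals, X)) / n
                for j in range(d)]
        # Gradient step on the squared error, then the L1 proximal step.
        w = [soft_threshold(wj - lr * gj, lr * lam) for wj, gj in zip(w, grad)]
    return w


if __name__ == "__main__":
    # Toy data: the target depends only on the first feature.
    X = [[1.0, 1.0], [1.0, -1.0], [-1.0, 1.0], [-1.0, -1.0]]
    y = [2.0, 2.0, -2.0, -2.0]
    w = lasso_ista(X, y, lam=0.5, lr=0.5, steps=200)
    selected = [j for j, wj in enumerate(w) if wj != 0.0]
    print("weights:", w)
    print("selected features:", selected)
```

The coefficient of the irrelevant second feature stays exactly zero, so reading off the nonzero coefficients acts as feature selection; an L2 penalty would shrink coefficients but generally leave them nonzero.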

Summary Table

The entire post can also be summarized into small bullet points which might be useful during an interview preparation or to skim through the content and just find the right part. Hope this helps: medium.com

Machine Learning Tutorial Python

L2 Regularization neural network in Python from Scratch Explanation with Implementation

Regularization Part 1: Ridge (L2) Regression

|

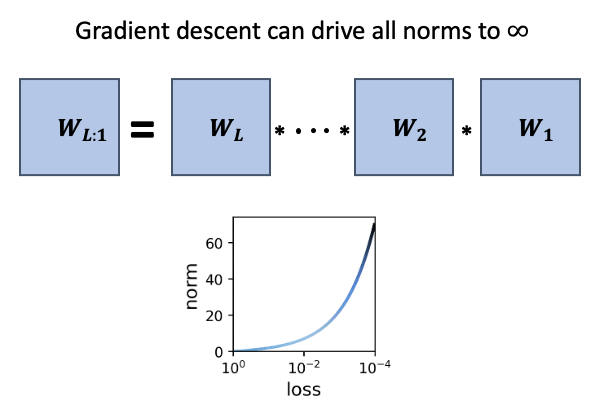

On the training dynamics of deep networks with L2 regularization

We study the role of L2 regularization in deep learning and uncover simple relations between the performance of the model

Adaptive L2 Regularization in Person Re-Identification

L2 regularization imposes constraints on the parameters of neural networks and adds penalties to the objective function during optimization. It is a commonly

L1 and l2 Regularization

Jul 26 2017 l2 “ridge” complexity: ... Ivanov and Tikhonov regularization are equivalent if: ... The Famous Picture for l2 Regularization.

On the Optimal Weighted l2 Regularization in Overparameterized

Weighted l2 Regularization. We consider generalized ridge regression instead of simple isotropic shrinkage. While the generalized formulation has also been

L2 Regularization for Learning Kernels

or L1 regularization. This paper studies the problem of learning kernels with the same family of kernels but with an L2 regularization instead and.

Effect of the regularization hyperparameter on deep learning-based

Demonstrations rely on training U-net on small LGE-MRI datasets using the arbitrarily selected L2 regularization values. The remaining hyperparameters are to

CPSC 340: Data Mining Machine Learning

L2-regularized least squares is also known as “ridge regression”. RBF basis with L2-regularization and cross-validation to choose and ?.

Regularization - Sebastian Raschka

• L1/L2 regularization (norm penalties) • Dropout Goal: reduce overfitting usually achieved by reducing model capacity and/or reduction of the variance of the

Lecture 2: Overfitting Regularization

Regularization • Generalizing regression • Overfitting • Cross-validation • L2 and L1 regularization for linear estimators • A Bayesian interpretation of

Feature selection, L1 vs L2 regularization, and rotational - ICML

We also give a lower bound showing that any rotationally invariant algorithm, including logistic regression with L2 regularization, SVMs, and neural networks

Tutorial 6 [2]Convexity and Regularization

L2 Regularization ▷ Add the L2 norm of w to the loss function to penalize model complexity ▷ The loss function becomes $f(w) = L(w, X, y) + \frac{\lambda}{2}\lVert w \rVert_2^2$

Regularization for Deep Learning

to the objective function. In other academic communities, L2 regularization is also known as ridge regression or Tikhonov regularization. We can gain some insight

L1 and l2 Regularization - GitHub Pages

Jul 26, 2017 · is the square of the l2-norm Ridge Regression (Ivanov Form) The ridge regression solution for complexity parameter r ⩾ 0 is ŵ = arg

Regularization - University of Colorado Boulder

Sep 20, 2018 · When the regularizer is the squared L2 norm $\lVert w \rVert^2$, this is called L2 regularization • This is the most common type of regularization • When used

OLS with l1 and l2 regularization - Duke People - Duke University

OLS with l1 and l2 regularization CEE 629 System Identification Duke University, Fall 2017 l1 regularization • The l1 norm of a vector $v \in \mathbb{R}^n$ is given by $\lVert v \rVert_1$

Notes on regularization

L2 Regularized Linear Regression The new objective combines the SSE loss with a quadratic regularizer
