Don't decrease the learning rate, increase the batch size

Does increasing the batch size reduce validation loss?

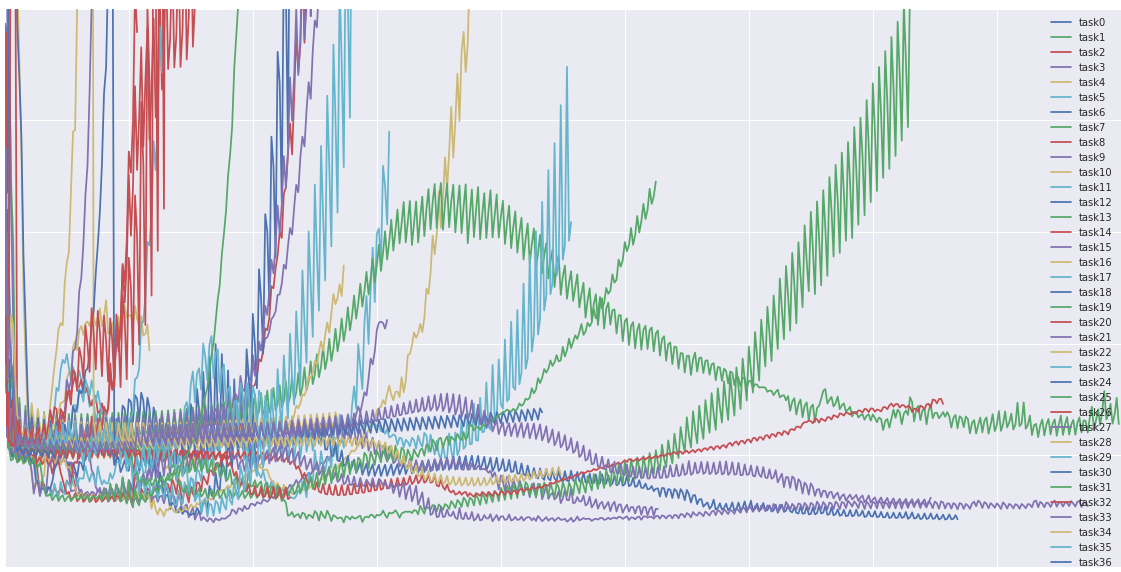

In fact, increasing the batch size does seem to reduce the validation loss. However, these results are close enough that some of the difference may be due to sampling noise, so it is not a good idea to read too much into them. The authors of "Don't Decay the Learning Rate, Increase the Batch Size" add to this.

Does the ratio of learning rate to batch size influence the generalization capacity of DNNs?

"Width of Minima Reached by Stochastic Gradient Descent is Influenced by Learning Rate to Batch Size Ratio": the authors give a mathematical and empirical foundation for the idea that the ratio of learning rate to batch size influences the generalization capacity of DNNs.
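The ratio argument can be illustrated with a small sketch. It assumes the noise-scale approximation g ≈ εN/B (ε the learning rate, N the training-set size, B the batch size, valid when B is much smaller than N) that this line of work uses for SGD. The function name and all numbers below are hypothetical and chosen only for illustration.

```python
import math


def sgd_noise_scale(learning_rate: float, batch_size: int, train_size: int) -> float:
    """Approximate SGD noise scale g ~ lr * N / B (assumes B << N)."""
    return learning_rate * train_size / batch_size


N = 50_000   # hypothetical training-set size
LR = 0.1     # hypothetical initial learning rate
B = 128      # hypothetical initial batch size
FACTOR = 5   # hypothetical schedule step

base = sgd_noise_scale(LR, B, N)
# Option 1: decay the learning rate by FACTOR, keep the batch size.
decayed_lr = sgd_noise_scale(LR / FACTOR, B, N)
# Option 2: keep the learning rate, grow the batch size by FACTOR.
bigger_batch = sgd_noise_scale(LR, B * FACTOR, N)

# Under this approximation both options reduce the noise scale equally.
same_noise = math.isclose(decayed_lr, bigger_batch)
print(base, decayed_lr, bigger_batch, same_noise)
```

Under this approximation only the ratio ε/B matters, which is why dividing the learning rate by a factor and multiplying the batch size by the same factor are treated as interchangeable. The second option takes fewer parameter updates per epoch.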

Don't Decay the Learning Rate, Increase the Batch Size

One can also increase the momentum coefficient and scale B ∝ 1/(1 − m), although this slightly reduces the test accuracy. We train Inception-ResNet-V2 on ...

Examining the effect of hyperparameters on the training of a residual

31 Jan. 2020 gradient clipping and a decreasing learning rate schedule. • Adapt learning rate ... rate and m as the momentum increasing the batch size.

Don't Decay the Learning Rate, Increase the Batch Size

24 Feb. 2018 One can also increase the momentum coefficient and scale B ∝ 1/(1 − m), although this slightly reduces the test accuracy. We train Inception- ...

On the Computational Inefficiency of Large Batch Sizes for

30 Nov. 2018 unless increasing the batch size leads to a commensurate decrease in the total ... number of training iterations and the learning rate.

Learning-Rate Annealing Methods for Deep Neural Networks

An Empirical Model of Large-Batch Training

14 Dec. 2018 The last few years have seen a rapid increase in the amount of computation ... learning: in reinforcement learning batch sizes of over a ...

Which Algorithmic Choices Matter at Which Batch Sizes? Insights

optimal learning rates and large batch training making it a useful tool to generate risk also decreases proportionally to increases in batch size.

The Limit of the Batch Size

15 June 2020 Don't decay the learning rate, increase the batch size. arXiv preprint arXiv:1711.00489

Which Algorithmic Choices Matter at Which Batch Sizes? Insights

Increasing the batch size is a popular way to speed up neural network training 1992] or decreasing the learning rate (which will harm the rate of ...

Control Batch Size and Learning Rate to Generalize Well

correlation with the ratio of batch size to learning rate. the stochastic gradient gS(θ) to iteratively update the parameter θ in order to minimize the

Control Batch Size and Learning Rate to Generalize Well - NeurIPS

training strategy that we should control the ratio of batch size to learning rate not too large to SGD uses the stochastic gradient gS(θ) to iteratively update the parameter θ in order to minimize the Don't decay the learning rate, increase

Which Algorithmic Choices Matter at Which Batch Sizes? - NIPS

In this work, we study how the critical batch size changes based on properties of 1992] or decreasing the learning rate (which will harm the rate of (The results don't seem to be sensitive to either the initial variance or the target risk;

Towards Explaining the Regularization Effect of Initial Large

before the activations which gets reduced by some constant factor at some particular epoch in training We show large batch size or small learning rate results in sharp local minima We also extend our theorem to other U such as a two descent learns one-hidden-layer CNN: don't be afraid of spurious local minima

Why Does Large Batch Training Result in Poor - HUSCAP

that gradually increases batch size during training We also They adjust the update amount and reduce the step size Don't decay the learning rate, increase

Training Tips for the Transformer Model

proved training regarding batch size, learning rate, warmup steps, maximum sentence length tion speed decreases with increasing batch size because not all operations in GPU are results than BIG, so we don't report it here 53

The Effect of Network Width on Stochastic Gradient Descent and

noise scale,” which is a function of the batch size, learning rate, and noise scale decreases as the width increases 1 momentum coefficient, and Ntrain is the size of the training set Smith, S. L., Kindermans, P., and Le, Q. V. Don't de-