large batch size learning rate

Don't Decay the Learning Rate, Increase the Batch Size

Crucially our techniques allow us to repurpose existing training schedules for large batch training with no hyper-parameter tuning. We train ResNet-50 on |

|

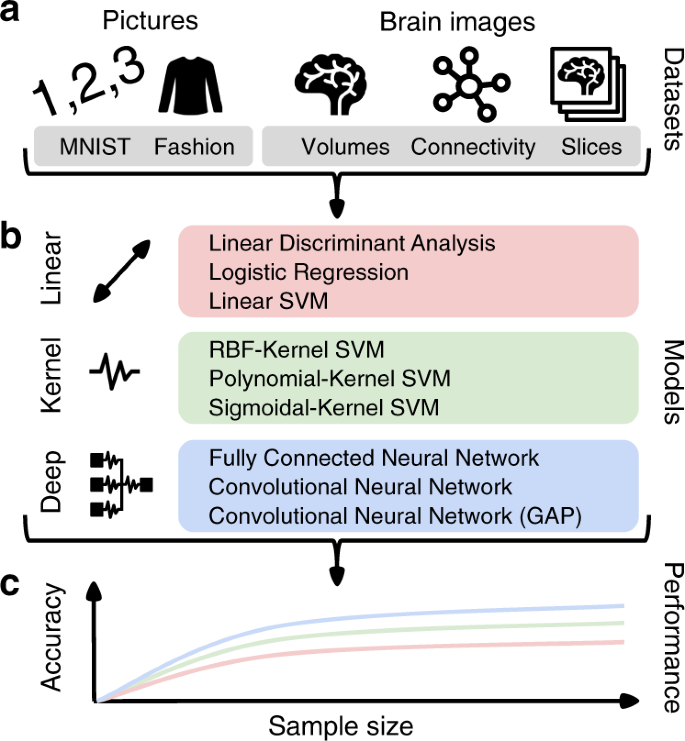

Control Batch Size and Learning Rate to Generalize Well

... a training strategy: keep the ratio of batch size to learning rate from growing too large in order to achieve good generalization.

Large Batch Training of Convolutional Networks

13 Sep 2017 · ... current recipe for large batch training (linear learning rate scaling with ... Using LARS, we scaled AlexNet up to a batch size of 8K ...
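
The snippet above does not spell out how LARS works, so the following is only a minimal NumPy sketch. It assumes the layer-wise local learning rate form given in the LARS paper: a trust coefficient times the weight norm, divided by the gradient norm plus weight decay times the weight norm. The function name `lars_local_lr`, the `eps` guard, and the default values are hypothetical choices made for illustration.

```python
import numpy as np

def lars_local_lr(weights, grads, trust_coef=0.001, weight_decay=0.0005, eps=1e-12):
    """Per-layer learning rate multiplier in the style of LARS (illustrative sketch).

    trust_coef, weight_decay and eps are assumed defaults, not values from the snippet.
    """
    w_norm = np.linalg.norm(weights)
    g_norm = np.linalg.norm(grads)
    # Layers whose gradients are large relative to their weights get a smaller rate.
    return trust_coef * w_norm / (g_norm + weight_decay * w_norm + eps)

# Usage: one multiplier per layer, applied on top of the global learning rate.
layer_w = np.ones(4)          # weight norm 2
layer_g = 2.0 * np.ones(4)    # gradient norm 4
local_lr = lars_local_lr(layer_w, layer_g, weight_decay=0.0)
```

With weight decay set to zero, doubling the gradient halves the multiplier, so the size of the resulting update stays roughly tied to the size of the layer's weights rather than to the raw gradient scale.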

AdaBatch: Adaptive Batch Sizes for Training Deep Neural Networks

14 Feb 2018 · ... requires careful choice of both learning rate and batch size. While smaller batch sizes generally converge in fewer training epochs, larger ...

Large Batch Optimization for Object Detection: Training COCO in 12

A rule of thumb for training neural networks is the Linear Scaling Rule (LSR) [10], which suggests that when the batch size becomes K times ...

Large Batch Optimization for Deep Learning Using New Complete

On the Computational Inefficiency of Large Batch Sizes for

30 Nov 2018 · We show that popular training strategies for large batch size optimization ... to select a learning rate for larger batch sizes [9, 29].

An Empirical Model of Large-Batch Training

14 Dec 2018 · ... be trained using relatively large batch sizes without sacrificing data ... period or an unusual learning rate schedule) so the fact that it ...

The Limit of the Batch Size

15 jun 2020 Since LARS with learning rate warmup and polynomial decay gave us best performance for large- batch MNIST training we use this scheme for huge- ... |

|

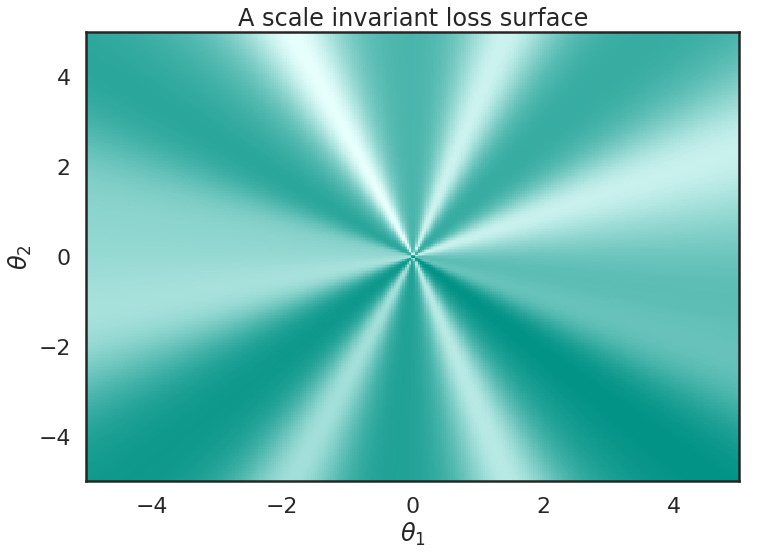

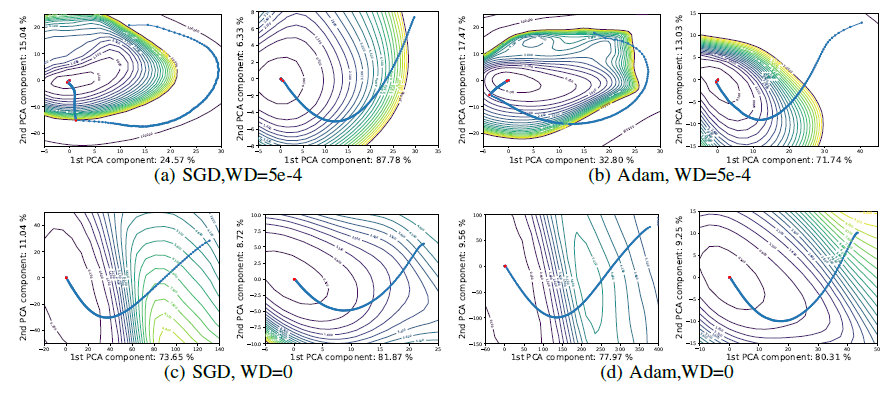

Three Factors Influencing Minima in SGD

13 sept 2018 In particular we investigate changing batch size |

|

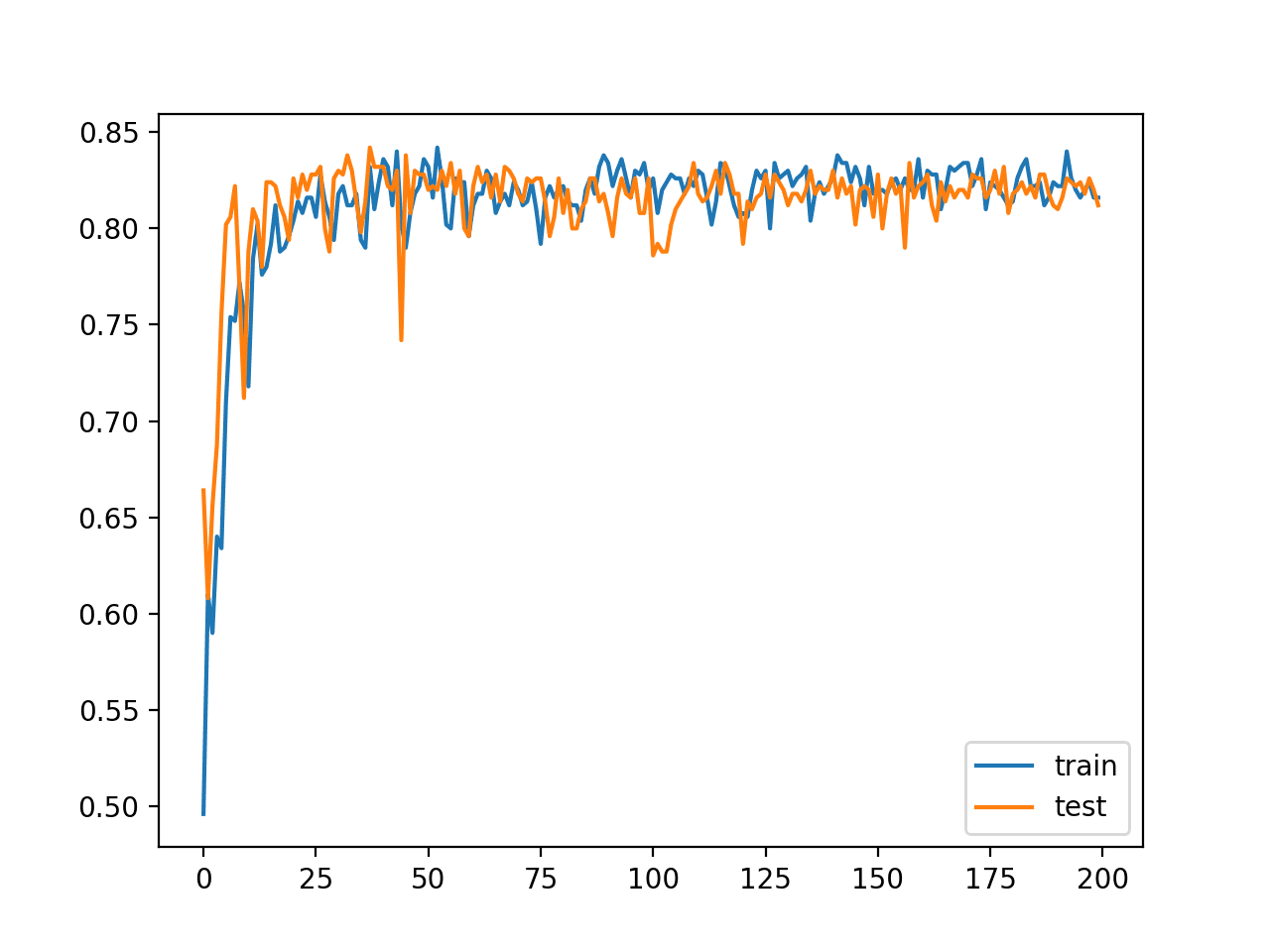

Don't Decay the Learning Rate, Increase the Batch Size

It is common practice to decay the learning rate. Here we show one can usually obtain the same learning curve on both training and test sets by instead ...
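
As a rough illustration of the idea in this entry's title, the sketch below turns a step-decay schedule into a batch-size-growth schedule. It assumes that what matters is the ratio of learning rate to batch size, so multiplying the batch size by a factor stands in for dividing the learning rate by the same factor. The function name `batch_size_schedule`, its parameters, and the fallback to ordinary decay once a hypothetical `max_batch` limit is reached are all assumptions made for this example, not details taken from the snippet.

```python
def batch_size_schedule(base_lr, base_batch, factor, milestones, num_epochs, max_batch=None):
    """Return a list of (learning_rate, batch_size) pairs, one per epoch.

    At each milestone epoch the batch size is multiplied by `factor` instead of
    dividing the learning rate by it. If that would exceed `max_batch`
    (an assumed hardware limit), fall back to decaying the learning rate.
    """
    schedule = []
    lr, batch = base_lr, base_batch
    for epoch in range(num_epochs):
        if epoch in milestones:
            grown = batch * factor
            if max_batch is None or grown <= max_batch:
                batch = grown          # keep lr fixed, grow the batch
            else:
                lr = lr / factor       # batch is capped, decay lr as usual
        schedule.append((lr, batch))
    return schedule

# Usage: a schedule that would divide the learning rate by 5 at epochs 2 and 4.
plan = batch_size_schedule(0.1, 128, 5, {2, 4}, num_epochs=6, max_batch=1000)
```

In this example the batch size grows at the first milestone and the learning rate decays at the second, because a further fivefold increase would exceed the assumed cap.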

Control Batch Size and Learning Rate to Generalize Well

This paper reports both theoretical and empirical evidence of a training strategy that we should control the ratio of batch size to learning rate not too large |

|

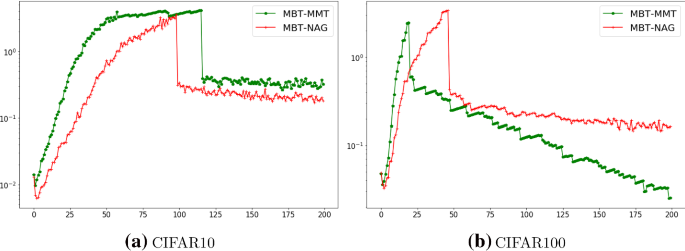

Large Batch Optimization for Deep Learning Using New Complete

In this paper we propose a novel Complete Layer-wise Adaptive Rate Scaling (CLARS) algorithm for large-batch training We prove the convergence of our |

|

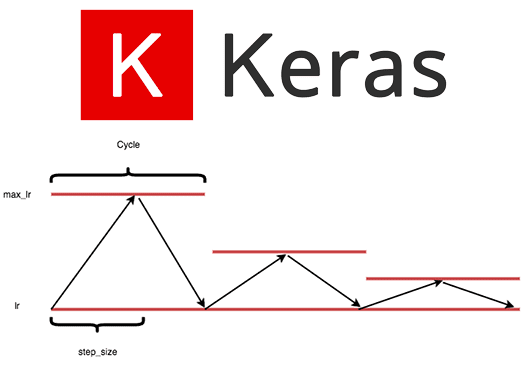

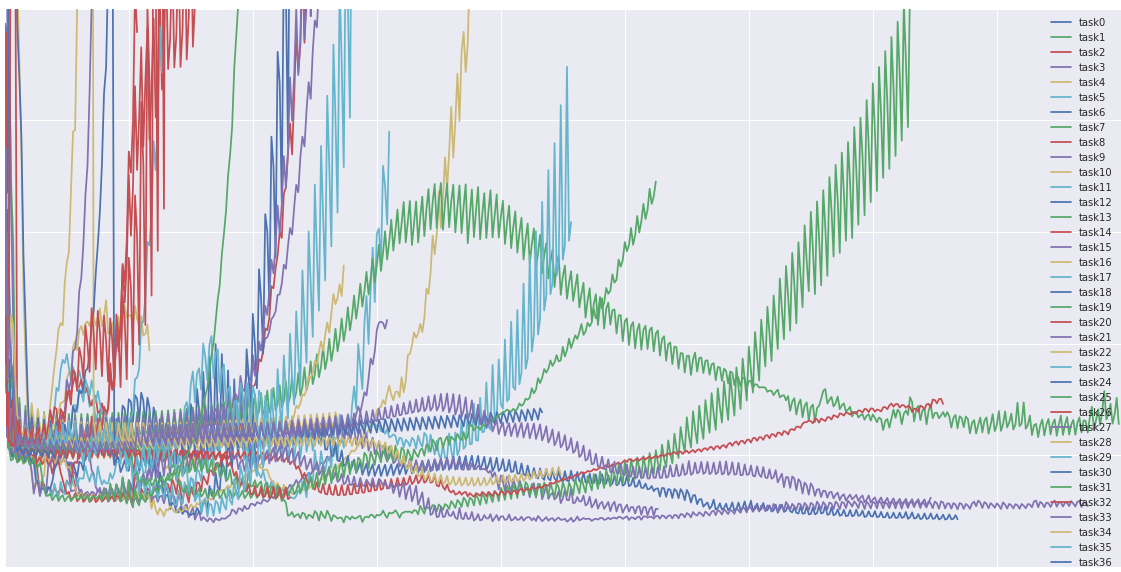

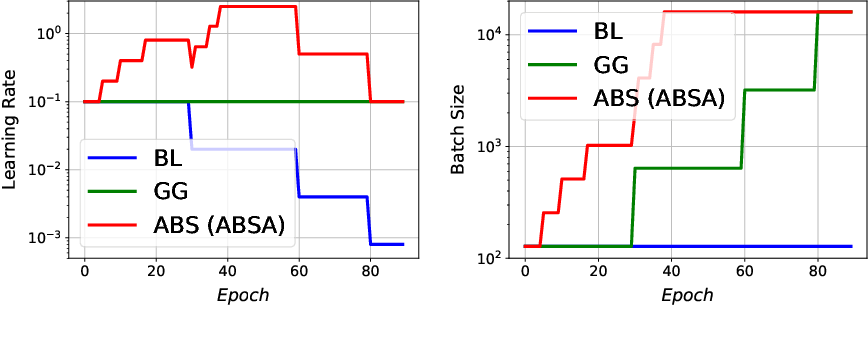

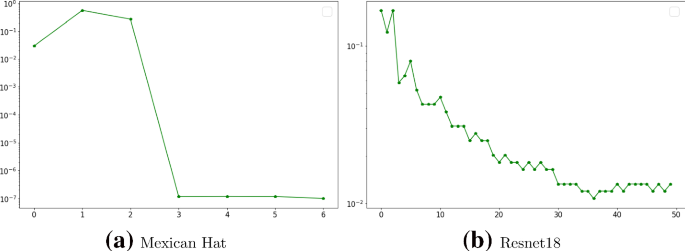

Automated Learning Rate Scheduler for Large-batch Training - arXiv

13 juil 2021 · In this work we propose an automated LR scheduling algorithm which is effective for neural network training with a large batch size under the |

|

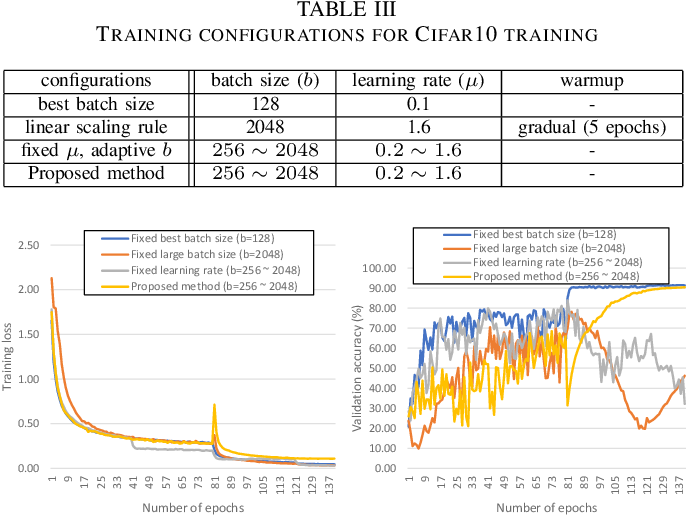

Study on the Large Batch Size Training of Neural Networks - arXiv

16 Dec 2020 · A curvature-based learning rate (CBLR) algorithm is proposed to better fit the curvature variation, a sensitive factor affecting large batch ...

Enhancing Large Batch Size Training of Deep Models for Remote

17 Jul 2021 · A wide variety of Remote Sensing (RS) missions are continuously ... deal with very large batch sizes ... use adaptive learning rates ...

Large Batch Optimization for Object Detection: Training COCO in 12

This algorithm endows each layer with a proper learning rate, thus making it possible to train a network with a larger batch size. For LAMB, each update of the ...

Control Batch Size and Learning Rate to Generalize Well

This work introduces Arbiter as a new hyperparameter optimization algorithm to perform batch size adaptations for learnable scheduling heuristics using |

|

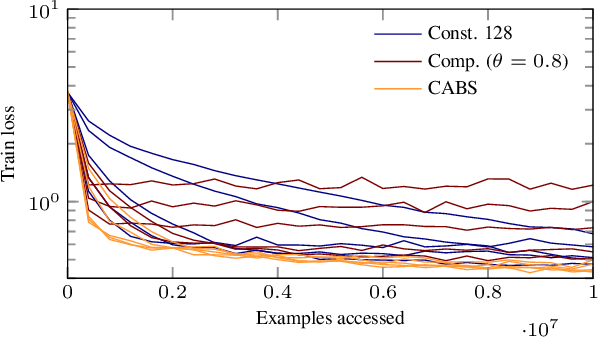

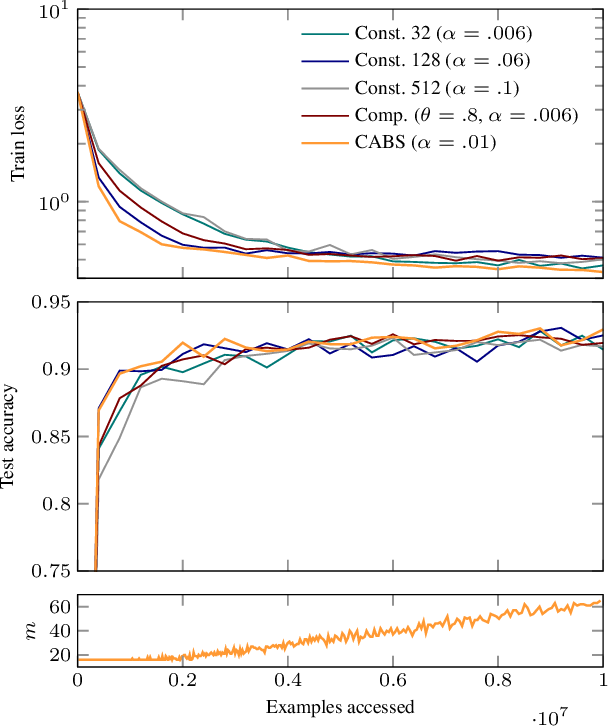

Coupling Adaptive Batch Sizes with Learning Rates

Small batch sizes require a small learning rate while larger batch sizes enable larger steps We will exploit this relationship later on by explicitly coupling |

|

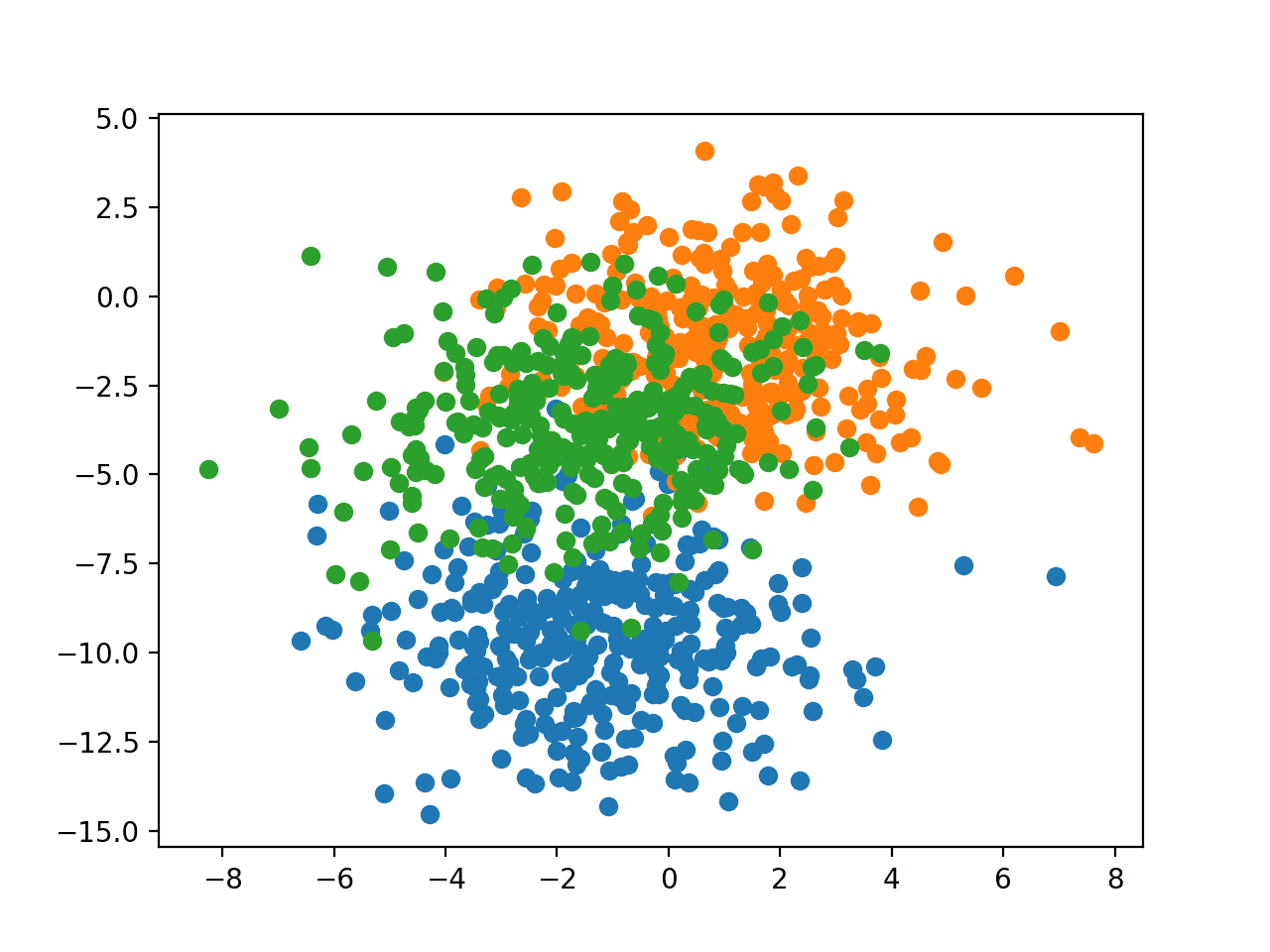

Extrapolation for Large-batch Training in Deep Learning

Adaptive Rate Scaling (LARS) for better optimization and scaling to larger mini-batch sizes; but the generalization gap does not vanish Lin et al |

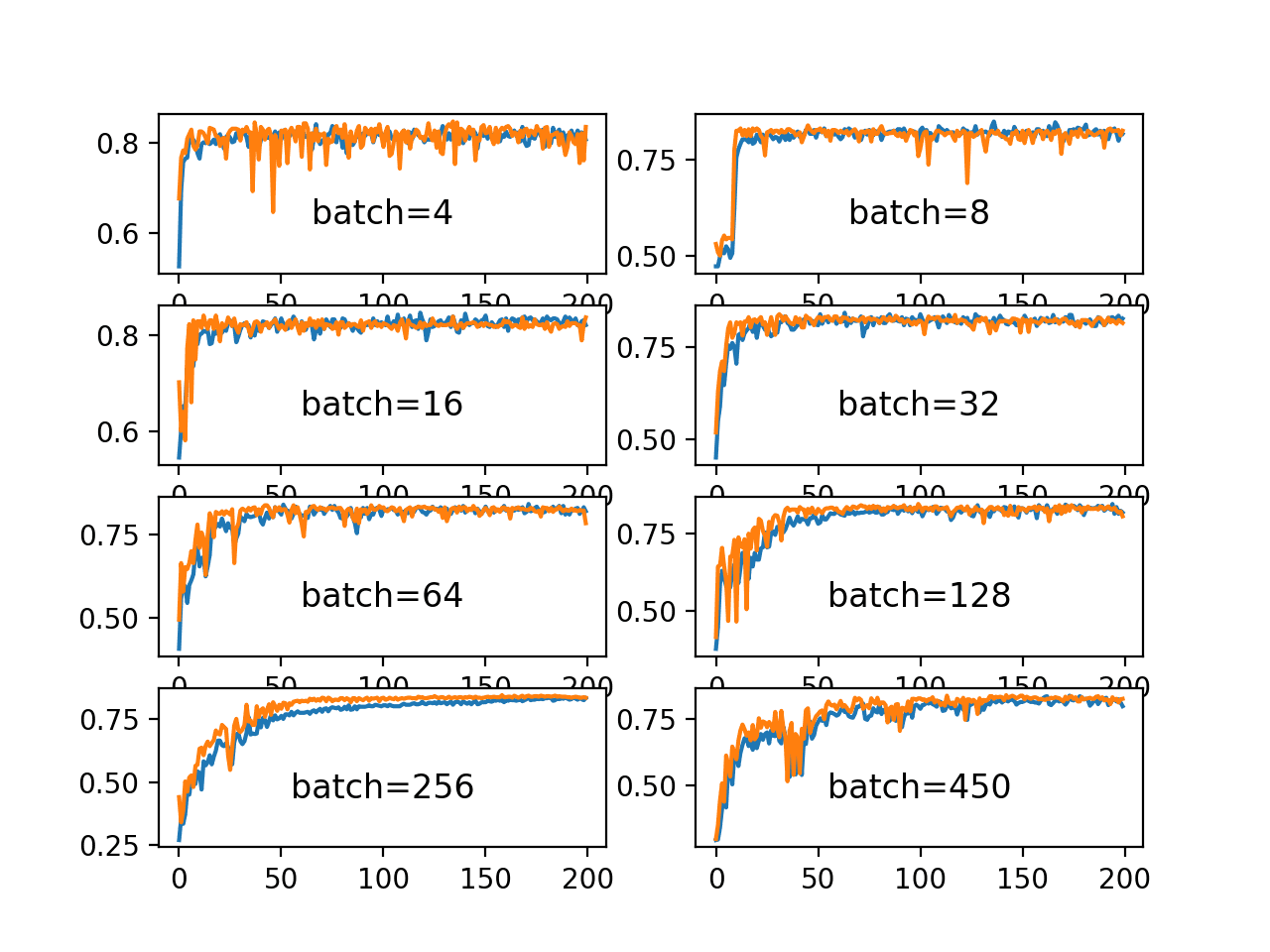

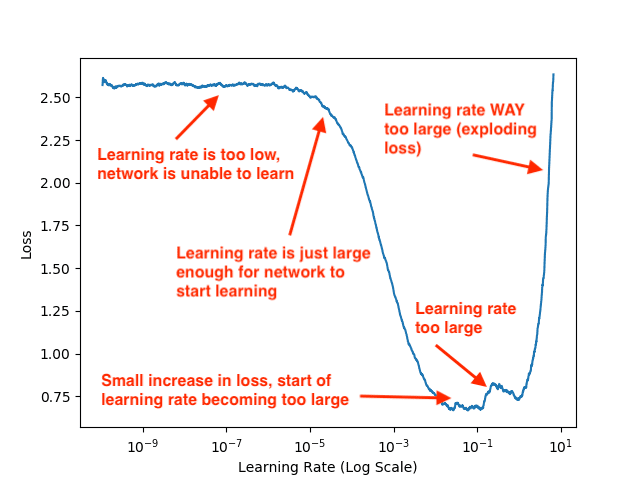

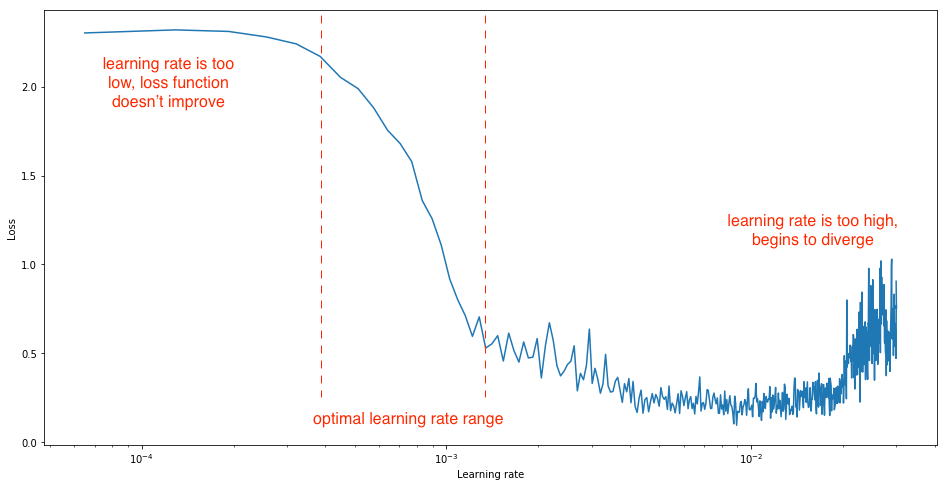

How does batch size affect learning rate?

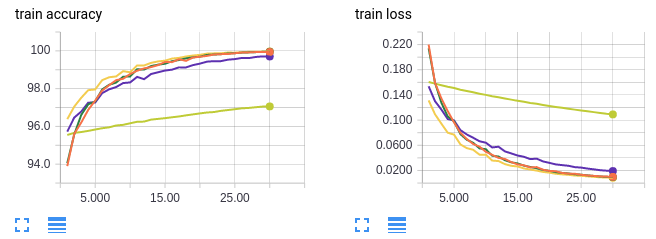

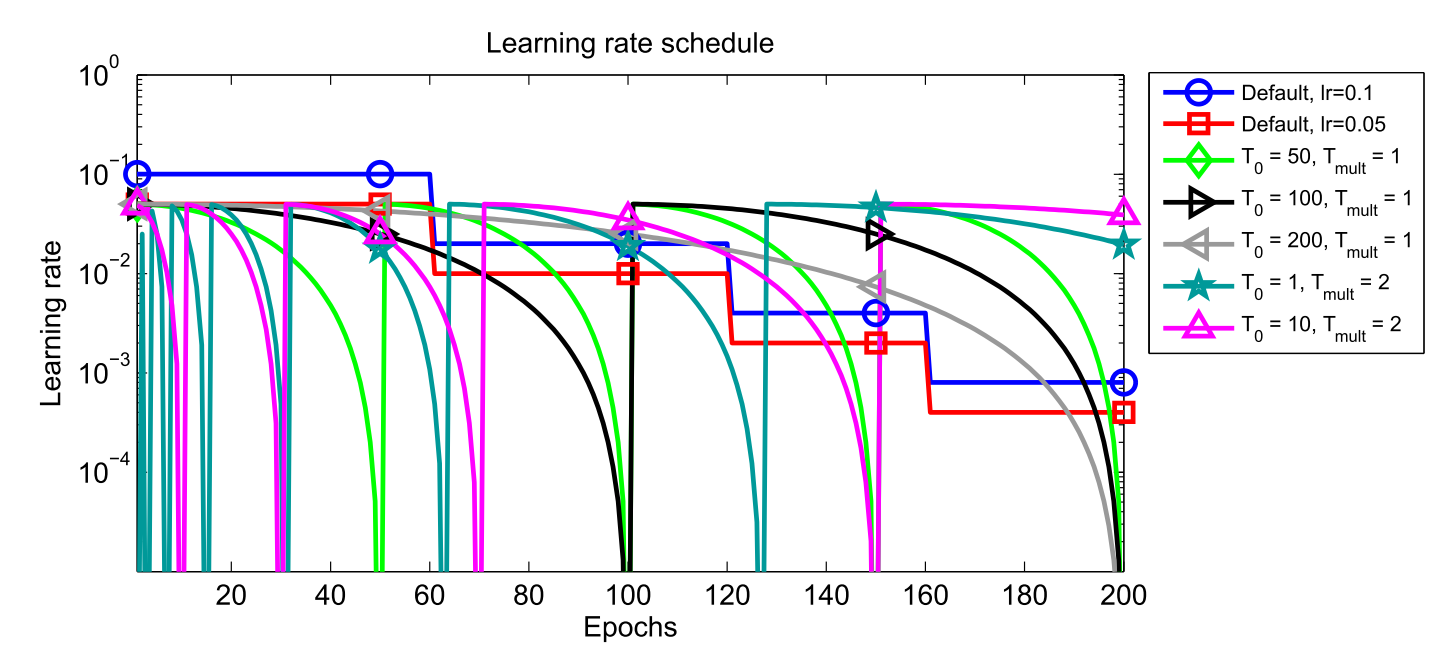

When learning gradient descent, we learn that learning rate and batch size matter. Specifically, increasing the learning rate speeds up the learning of your model, yet risks overshooting its minimum loss. Reducing batch size means your model uses fewer samples to calculate the loss in each iteration of learning.

What is the learning rate for 64 batch size?

Using a batch size of 64 (orange) achieves a test accuracy of 98% while using a batch size of 1024 only achieves about 96%.

What is the best learning rate for a batch size of 32?

Let's try this out, with batch sizes 32, 64, 128, and 256. We will use a base learning rate of 0.01 for batch size 32, and scale accordingly for the other batch sizes. Indeed, we find that adjusting the learning rate does eliminate most of the performance gap between small and large batch sizes.

- Our parallel coordinate plot also makes a key tradeoff very evident: larger batch sizes take less time to train but are less accurate.
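
The scaling step in the last answer can be sketched in a few lines. This assumes that "scale accordingly" means linear scaling, where the learning rate grows in proportion to the batch size; the answer does not state the exact rule, and the function name `scaled_lr` is hypothetical.

```python
def scaled_lr(batch_size, base_lr=0.01, base_batch_size=32):
    """Linearly scale the learning rate with the batch size (assumed rule)."""
    return base_lr * batch_size / base_batch_size

# Learning rates for the batch sizes mentioned above.
rates = {b: scaled_lr(b) for b in (32, 64, 128, 256)}
for batch_size, lr in rates.items():
    print(f"batch size {batch_size}: learning rate {lr:g}")
```

Under this assumption, each doubling of the batch size doubles the learning rate, starting from 0.01 at batch size 32.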

Control Batch Size and Learning Rate to Generalize Well - NeurIPS

When employing SGD to train deep neural networks, we should keep the batch size from being too large and the learning rate from being too small, in order to make the networks ...

Accurate, Large Minibatch SGD: Training ImageNet in 1 Hour

To achieve this result, we adopt a linear scaling rule for adjusting learning rates as a function of minibatch size and develop a new warmup scheme that over-...
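
The snippet is cut off before it describes the warmup scheme, so the following is only a sketch of one common variant: a linear ramp from the base learning rate to the linearly scaled target over a fixed number of steps. The function name `warmup_lr`, its parameters, and the choice of a linear ramp are assumptions made for illustration.

```python
def warmup_lr(step, warmup_steps, base_lr, k):
    """Learning rate at `step`, ramping linearly from base_lr to k * base_lr.

    `k` is the factor by which the minibatch size was increased (assumed
    linear scaling). After `warmup_steps`, the scaled rate is returned as is.
    """
    target = base_lr * k
    if step >= warmup_steps:
        return target
    return base_lr + (target - base_lr) * step / warmup_steps

# Usage: minibatch 8 times larger than the reference, 100 warmup steps.
start = warmup_lr(0, 100, base_lr=0.1, k=8)
middle = warmup_lr(50, 100, base_lr=0.1, k=8)
end = warmup_lr(100, 100, base_lr=0.1, k=8)
```

Starting from the unscaled rate avoids taking very large steps early in training, when the scaled learning rate may be too aggressive.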

Which Algorithmic Choices Matter at Which Batch Sizes? - NIPS

... much larger critical batch sizes than stochastic gradient descent with optimal learning rates and large batch training, making it a useful tool to generate ...

Towards Explaining the Regularization Effect of Initial Large

Stochastic gradient descent with a large initial learning rate is widely used for training ... large batch size or small learning rate results in sharp local minima ...

Why Does Large Batch Training Result in Poor - HUSCAP

In Section 4, we explain why the training with a large batch size in neural networks ... learning rate during training can be useful for accelerating the training. In ...

Large Batch Optimization for Object Detection: Training COCO in 12

A rule of thumb for training neural networks is the Linear Scaling Rule (LSR) [10], which suggests that when the batch size becomes K times, the learning rate ...