lstm google scholar

Modeling and Forecasting the Volatility of NIFTY 50 Using GARCH

Understanding LSTM Networks

Hashtag Recommendation with Topical Attention-Based LSTM

11 Dec. 2016 · adopt LSTM to learn the representation of a microblog post. ... incorporates topic modeling into the LSTM architecture through an attention ...

Zhiyong CUI

1 Oct. 2020 · Cui Z, Ke R

Bidirectional LSTM-CNNs-CRF Models for POS Tagging

Agent Inspired Trading Using Recurrent Reinforcement Learning

23 Jul. 2017 · Reinforcement Learning and LSTM Neural Networks. David W. Lu ... is implemented in Long Short Term Memory (LSTM) recurrent.

Deep-VFX: Deep Action Recognition Driven VFX for Short Video

22 Jul. 2020 · Motion Capture LSTM

A survey on long short-term memory networks for time series

The present paper delivers a comprehensive overview of existing LSTM cell derivatives and network architectures for time series prediction. A categorization in ...

Long Short Term Memory Recurrent Neural Network (LSTM-RNN

Friendship based storage allocation for online social networks cloud computing. In: 2015 International Conference on Cloud Technologies and Applications (...

A Review on the Long Short-Term Memory Model - ResearchGate

[PDF] Long Short-Term Memory (LSTM) has transformed both machine learning and neurocomputing fields. According to several online sources, this model has ...

LONG SHORT-TERM MEMORY 1 INTRODUCTION

This paper presents "Long Short-Term Memory" (LSTM), a novel recurrent network architecture in conjunction with an appropriate gradient-based learning ...

COMPARISON STUDY BETWEEN LSTM & CNN Capstone

As an Al Ghurair scholar, I feel so lucky and honored to have such an opportunity to study at one of the best universities in Morocco.

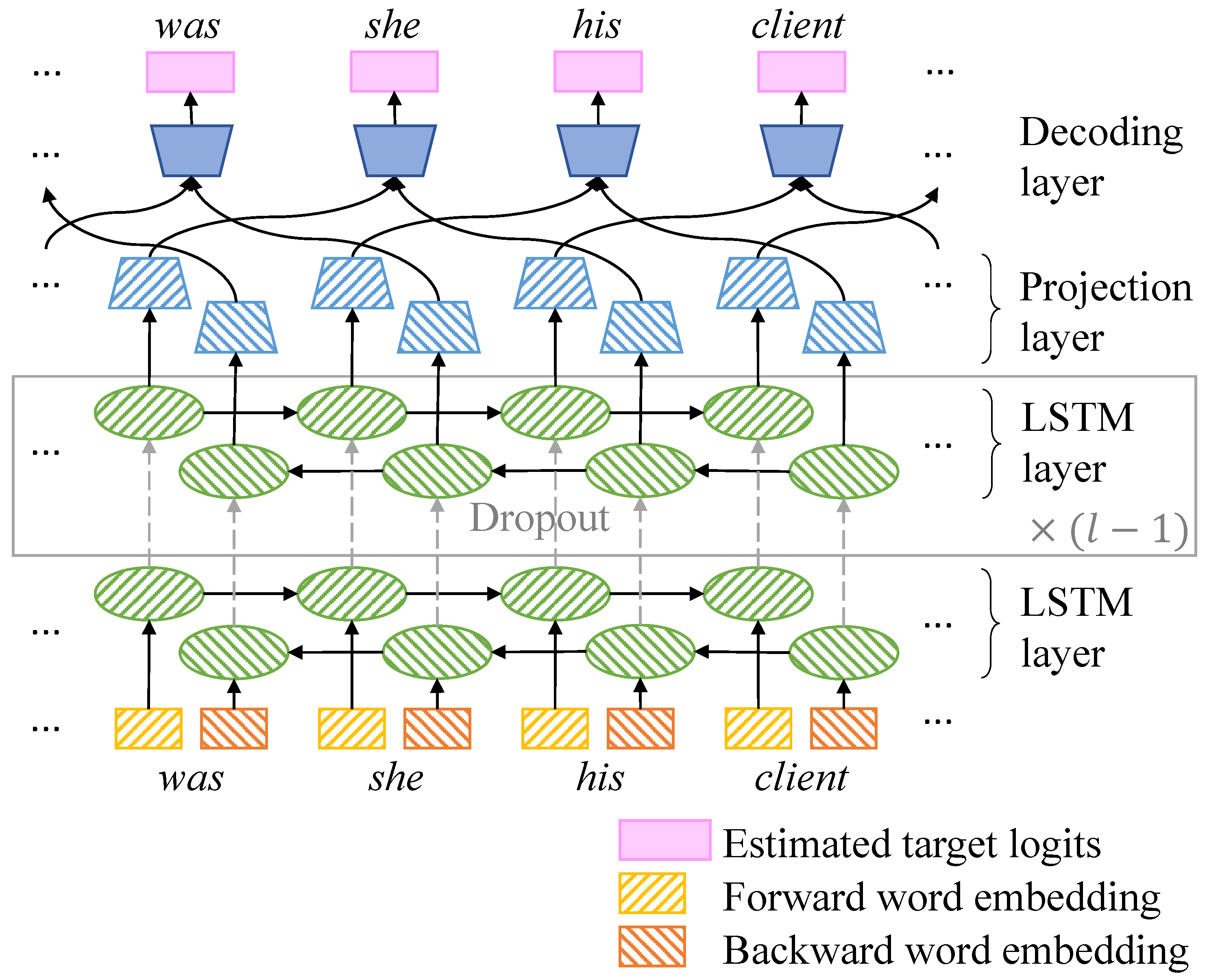

Application of LSTM Neural Networks in Language Modelling

Keywords: language modelling; recurrent neural networks; LSTM neural networks. ... Google Scholar: Mikolov T, Kombrink S ...

Applying LSTM to Time Series Predictable Through Time-Window

Long Short-Term Memory (LSTM) is able to solve many time series tasks unsolvable by feed-forward networks using fixed-size time windows.
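
The fixed-size time window mentioned in this snippet can be illustrated with a short sketch. It assumes the series is a plain Python list; the helper name `make_windows` and the window size of 3 are hypothetical choices for illustration and do not come from the paper.

```python
def make_windows(series, window):
    """Split a series into (input window, next value) training pairs."""
    pairs = []
    for start in range(len(series) - window):
        inputs = series[start:start + window]   # the last `window` observations
        target = series[start + window]         # the value to predict
        pairs.append((inputs, target))
    return pairs


series = [1, 2, 3, 4, 5, 6]
pairs = make_windows(series, window=3)
for inputs, target in pairs:
    print(inputs, "->", target)
```

A feed-forward network trained on such pairs sees only `window` past values per example, so any dependency older than the window is invisible to it. That is the limitation the snippet contrasts with LSTM.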

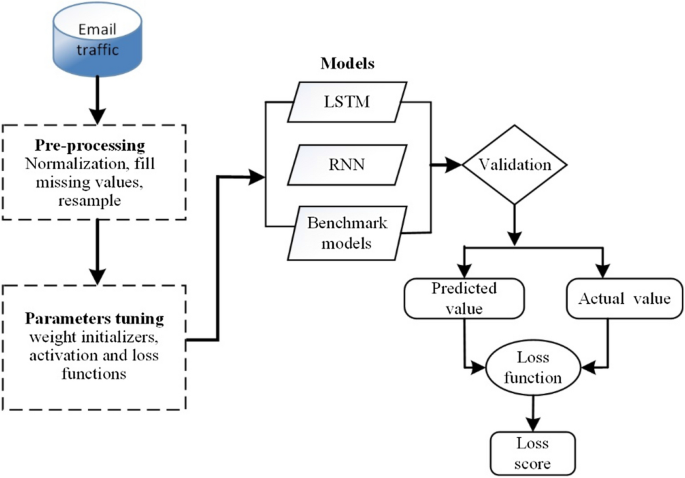

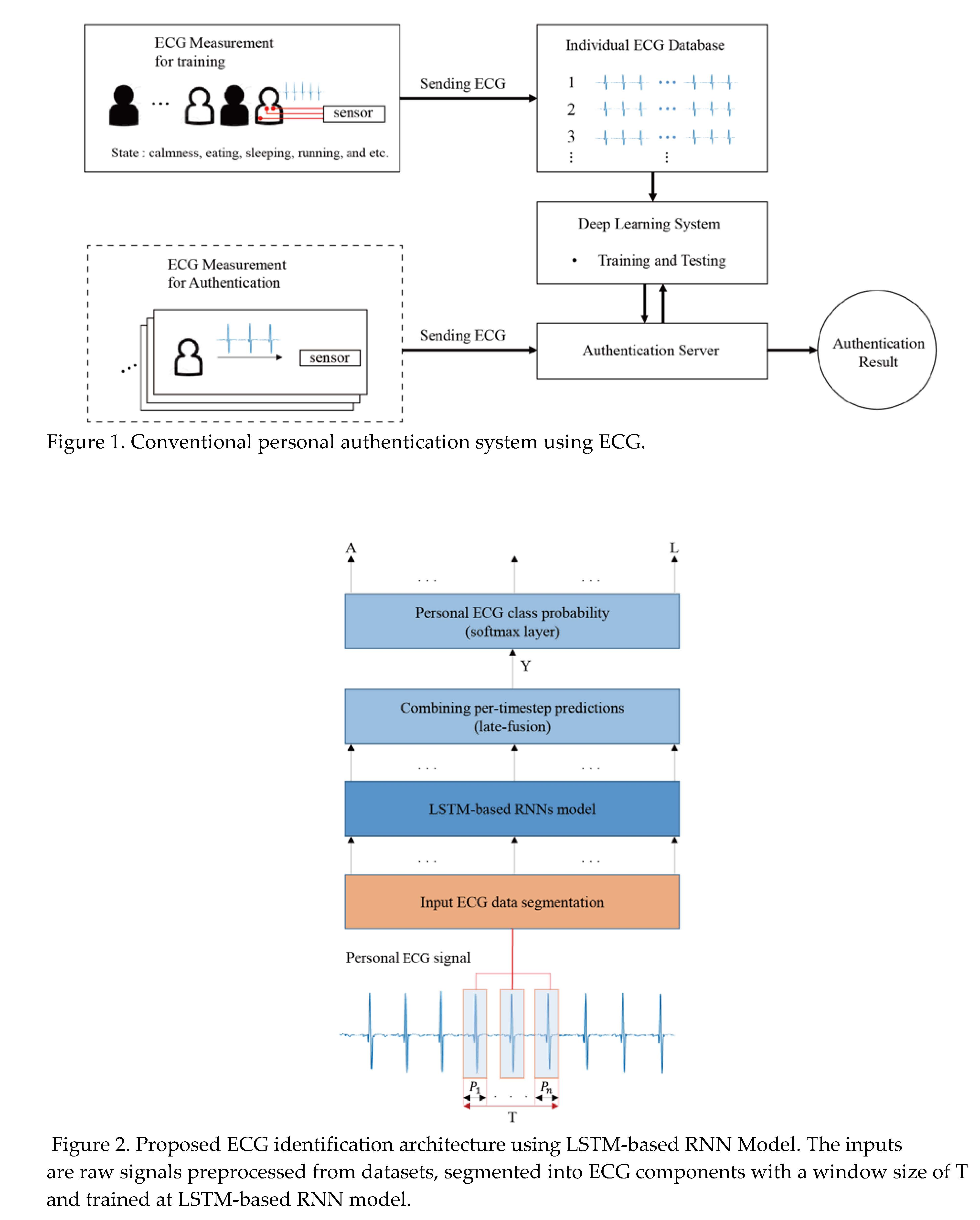

Long short term memory (LSTM) recurrent neural network (RNN) for

We use a Deep Learning algorithm which involves a Recurrent Long Short Term Memory (LSTM) Neural Network. Daily discharge data at two river gauge stations were ...

What is LSTM best explained?

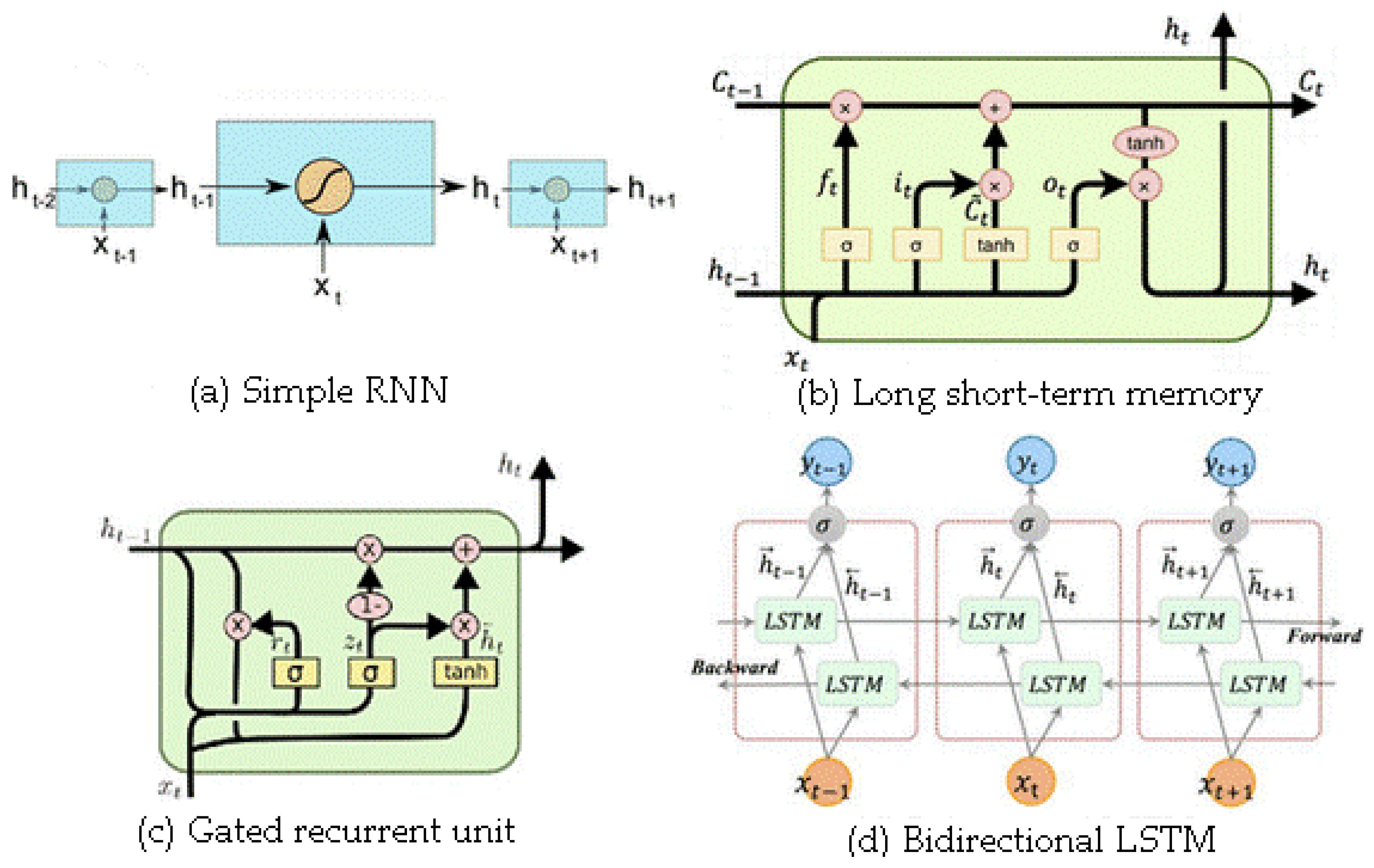

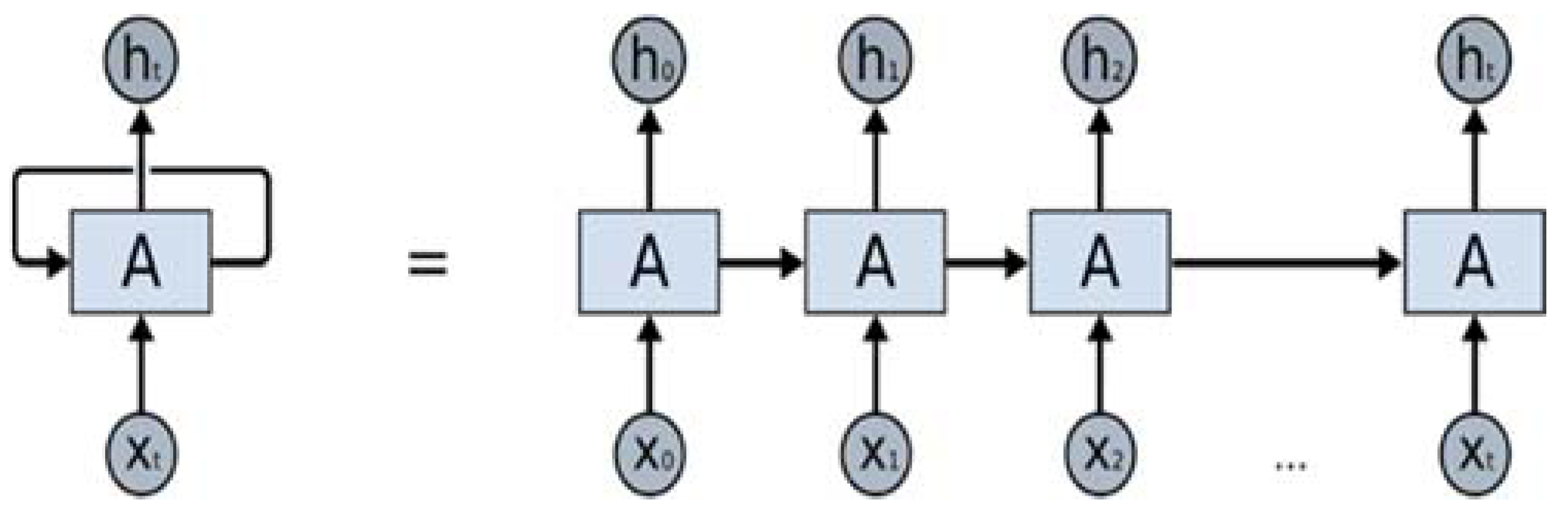

LSTM Explained

First, you must be wondering 'What does LSTM stand for?' LSTM stands for long short-term memory, a type of network used in the field of deep learning. It is a variety of recurrent neural network (RNN) capable of learning long-term dependencies, especially in sequence prediction problems.

Is LSTM better than CNN?

An LSTM is a special model that is usually used for time series predictions [12,13,14,15,16,17], while a CNN is mainly used for processing images. However, a CNN is still suitable for time series prediction [18,19,20,21].

Is LSTM faster than CNN?

LSTM required more parameters than CNN, but only about half as many as DNN. While LSTMs were the slowest to train, their advantage comes from being able to look at long sequences of inputs without increasing the network size.

LSTM networks have been used on a variety of tasks, including speech recognition, language modeling, and machine translation. In recent years, they have also been used for more general sequence learning tasks such as activity recognition and music transcription.
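
The remark about looking at long sequences without increasing the network size can be made concrete by counting parameters. This is a minimal sketch that assumes a standard LSTM layer with four weight blocks and one bias vector per block, with no peepholes or projection. Some libraries keep two bias vectors per block, which changes the total. The function name `lstm_param_count` is hypothetical.

```python
def lstm_param_count(input_size, hidden_size):
    """Parameter count of one standard LSTM layer (assumed: one bias per gate)."""
    # Each of the four blocks (forget, input, candidate, output) has a weight
    # matrix of shape hidden_size x (hidden_size + input_size) and a bias vector.
    per_block = hidden_size * (hidden_size + input_size) + hidden_size
    return 4 * per_block


# The sequence length does not appear anywhere in the formula: the same
# weights are reused at every time step.
print(lstm_param_count(input_size=10, hidden_size=32))  # 5504
```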


LONG SHORT-TERM MEMORY 1 INTRODUCTION

In comparisons with RTRL, BPTT, Recurrent Cascade-Correlation, Elman nets, and Neural Sequence Chunking, LSTM leads to many more successful runs, and ...

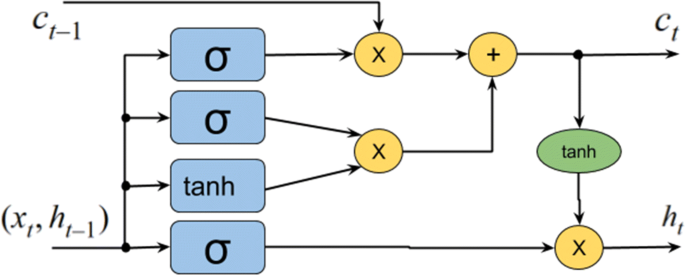

Understanding LSTM Networks

27 Aug. 2015 · The repeating module in an LSTM contains four interacting layers. ... (https://scholar.google.com/citations?user=DaFHynwAAAAJ&hl=en)
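
The four interacting layers in this snippet are commonly described as the forget gate, the input gate, the candidate layer, and the output gate. The following is a minimal pure-Python sketch of one time step under that standard formulation. The names `lstm_step`, `affine`, and `params` are hypothetical, and the all-zero weights are placeholders chosen only so the arithmetic is easy to follow.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def affine(W, b, v):
    """Compute W @ v + b, with W given as a list of rows."""
    return [sum(w * x for w, x in zip(row, v)) + bias for row, bias in zip(W, b)]


def lstm_step(x, h_prev, c_prev, params):
    """One LSTM time step; params maps 'f', 'i', 'g', 'o' to (W, b) pairs."""
    z = h_prev + x  # concatenate previous hidden state and current input
    f = [sigmoid(a) for a in affine(*params["f"], z)]    # forget gate
    i = [sigmoid(a) for a in affine(*params["i"], z)]    # input gate
    g = [math.tanh(a) for a in affine(*params["g"], z)]  # candidate values
    o = [sigmoid(a) for a in affine(*params["o"], z)]    # output gate
    # New cell state: keep part of the old state, add part of the candidate.
    c = [fk * ck + ik * gk for fk, ck, ik, gk in zip(f, c_prev, i, g)]
    # New hidden state: gated, squashed view of the cell state.
    h = [ok * math.tanh(ck) for ok, ck in zip(o, c)]
    return h, c


# Hidden size 2, input size 1, all-zero placeholder weights.
zero_block = ([[0.0, 0.0, 0.0], [0.0, 0.0, 0.0]], [0.0, 0.0])
params = {name: zero_block for name in ("f", "i", "g", "o")}
h, c = lstm_step(x=[0.3], h_prev=[0.0, 0.0], c_prev=[1.0, -2.0], params=params)
print(h, c)
```

With zero weights every gate evaluates to 0.5 and the candidate to 0, so the cell state is simply halved. Trained weights would make each gate depend on the input and the previous hidden state.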

Deep learning with long short-term memory networks for - econstor

10 May 2017 · Suggested Citation: Fischer, Thomas; Krauss, Christopher (2017): Deep learning ... Long short-term memory (LSTM) networks are a state-of-the-art ... LSTM networks are developed with keras (Chollet, 2016) on top of Google ...

A C-LSTM with Attention Mechanism for Question - Anna University

... convolutional long short-term memory (C-LSTM) with an attention mechanism for question ... Google Scholar (http://scholar.google.com/scholar_lookup?...

LSTM-based Analysis of Industrial IoT Equipment - IEEE Xplore

Citation information: DOI 10.1109/ACCESS.2018.2825538, IEEE Access ... LSTM neural network model to predict power station working conditions from data ... ing to Google Scholar is 15 ... Wuwu Guo is ...

LSTMs Compose—and Learn—Bottom-Up - Association for

16 Nov. 2020 · a Google Scholar search restricted to aclweb since 2019 finds 191 LSTM variants explicitly encode syntax (Bowman et al., 2016; Dyer et ...

[PDF] Multi-output bus travel time prediction with convolutional